Source code for this article: GitHub || GitEE (addresses listed at the end of the article).

1. Introduction to ElasticJob

1. Scheduled Tasks

The previous article covered Quartz, a widely used standard for scheduled tasks. However, Quartz focuses on executing scheduled tasks rather than on business models and scenarios, and it lacks highly customizable features. Quartz can achieve task high availability through a database, but it cannot schedule tasks in a distributed, parallel fashion.

2. ElasticJob Overview

- Basic Introduction

Elastic-Job is an open-source distributed scheduling middleware made up of two independent subprojects, Elastic-Job-Lite and Elastic-Job-Cloud. Elastic-Job-Lite is a lightweight, decentralized solution delivered as a jar that provides scheduling and governance for distributed tasks. Elastic-Job-Cloud is a Mesos framework that relies on Mesos for additional services such as resource governance, application distribution, and process isolation.

- Features

Its main features are: distributed scheduling coordination; elastic scaling; failover; re-triggering of missed executions; and job sharding consistency, which guarantees that the same shard has only one executing instance in a distributed environment.

Side note: these features are described on the official website; this article expands on them.

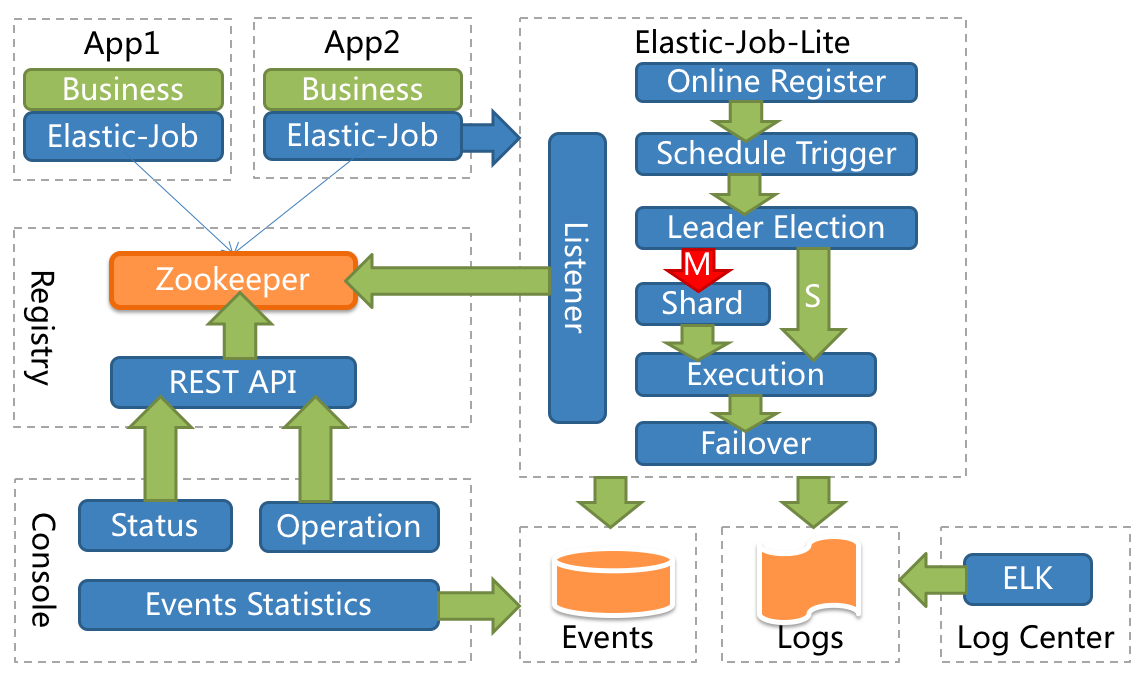

- Architecture

The architecture diagram comes from the ElasticJob website.

The diagram shows that ElasticJob, as a distributed scheduler, relies on ZooKeeper as its registry center and provides monitoring mechanisms and a management console.

The examples in this article therefore need a running ZooKeeper instance.

3. Sharding

Sharding is the most distinctive and practical concept in ElasticJob.

- Sharding concept

To execute a task in a distributed way, the task is split into independent sharding items, and each server in the cluster executes one or more of those items.

Scenario: suppose three servers with three shards (items 0, 1, 2) must process 100 data tables. Taking each table number modulo 3 gives a remainder of 0, 1, or 2, and each table goes to the server holding the matching shard. Keep the basic logic of database and table sharding in mind here; this is why many experienced developers say programming thinking matters.
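A minimal, framework-free sketch of the modulo rule from the scenario, assuming the 100 tables are numbered 0 to 99. `ModuloSharding` and `tablesFor` are hypothetical names used only for illustration, not ElasticJob APIs; inside a real job the sharding item would come from `ShardingContext.getShardingItem()`.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical illustration of modulo sharding: which tables does one shard own?
class ModuloSharding {

    // Returns the table numbers (0..tableCount-1) assigned to one sharding item.
    // A table belongs to shard `shardingItem` when tableNo % shardCount == shardingItem.
    static List<Integer> tablesFor(int shardingItem, int shardCount, int tableCount) {
        if (shardCount <= 0 || shardingItem < 0 || shardingItem >= shardCount) {
            throw new IllegalArgumentException("shardingItem must be in [0, shardCount)");
        }
        List<Integer> tables = new ArrayList<>();
        for (int tableNo = 0; tableNo < tableCount; tableNo++) {
            if (tableNo % shardCount == shardingItem) {
                tables.add(tableNo);
            }
        }
        return tables;
    }

    public static void main(String[] args) {
        // Three shards over 100 tables: shard 0 gets 34 tables, shards 1 and 2 get 33 each.
        for (int item = 0; item < 3; item++) {
            List<Integer> tables = tablesFor(item, 3, 100);
            System.out.println("shard " + item + " -> " + tables.size() + " tables, first: " + tables.get(0));
        }
    }
}
```

Every table lands in exactly one shard, so no two servers ever process the same table, which is the consistency property the feature list mentions.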

- Custom sharding parameters

The custom parameter shardingItemParameters maps each sharding item to a value, turning a bare shard number into more readable business code.

Scenario: shard numbers such as [0, 1, 2] are not very readable and are easy to mislabel. Instead, you can give each of the three shards its own name: 0=A,1=B,2=C.
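To make the `item=value` format concrete, here is a small hypothetical parser. `ShardingItemParams.parse` is not part of ElasticJob, which parses `shardingItemParameters` internally; the sketch only shows how a string like `0=A,1=B,2=C` maps shard numbers to readable names.

```java
import java.util.LinkedHashMap;
import java.util.Map;

// Hypothetical helper illustrating the "item=value" format of shardingItemParameters.
class ShardingItemParams {

    // Parses "0=A,1=B,2=C" into {0=A, 1=B, 2=C}, keeping the original order.
    static Map<Integer, String> parse(String shardingItemParameters) {
        Map<Integer, String> result = new LinkedHashMap<>();
        if (shardingItemParameters == null || shardingItemParameters.trim().isEmpty()) {
            return result;
        }
        for (String pair : shardingItemParameters.split(",")) {
            String[] kv = pair.split("=", 2);
            if (kv.length != 2) {
                throw new IllegalArgumentException("Expected item=value but got: " + pair);
            }
            result.put(Integer.parseInt(kv[0].trim()), kv[1].trim());
        }
        return result;
    }

    public static void main(String[] args) {
        Map<Integer, String> params = parse("0=A,1=B,2=C");
        // A job running sharding item 1 would see the parameter "B".
        System.out.println(params.get(1));
    }
}
```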

2. Loading Scheduled Tasks

1. Core Dependencies

Version 2.x (2.1.5) is used here.

<dependency>
    <groupId>com.dangdang</groupId>
    <artifactId>elastic-job-lite-core</artifactId>
    <version>2.1.5</version>
</dependency>
<dependency>
    <groupId>com.dangdang</groupId>
    <artifactId>elastic-job-lite-spring</artifactId>
    <version>2.1.5</version>
</dependency>

2. Core Configuration File

The main settings here are the ZooKeeper connection, the shard count, and the sharding parameters.

zookeeper:
  server: 127.0.0.1:2181
  namespace: es-job
job-config:
  cron: "0/10 * * * * ?"
  shardCount: 1
  # Only items 0..shardCount-1 are executed, so with shardCount: 1 only 0=A is used
  shardItem: 0=A,1=B,2=C,3=D

3. Custom Annotation

The official examples do not offer a suitable annotation, so I wrote my own, following the loading process and core API used in the official examples.

Core Configuration Class:

com.dangdang.ddframe.job.lite.config.LiteJobConfiguration

Design the annotation around how you want to use it. Here I only want to annotate the job name and the cron expression and configure all other parameters uniformly (perhaps I have been influenced too much by Quartz, or perhaps it is simply the least effort...).

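import java.lang.annotation.ElementType;
import java.lang.annotation.Inherited;
import java.lang.annotation.Retention;
import java.lang.annotation.RetentionPolicy;
import java.lang.annotation.Target;
import org.springframework.core.annotation.AliasFor;

/**
 * Marks a SimpleJob bean with its job name and cron expression.
 * Note: @AliasFor is only honored when the annotation is read through Spring
 * (e.g. AnnotationUtils.findAnnotation), not through plain Class.getAnnotation().
 */
@Inherited
@Target({ElementType.TYPE})
@Retention(RetentionPolicy.RUNTIME)
public @interface TaskJobSign {

    @AliasFor("cron")
    String value() default "";

    @AliasFor("value")
    String cron() default "";

    String jobName() default "";
}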

4. Job Example

This job prints some basic parameters; compare them with the configuration and the annotation to see how each setting shows up at runtime.

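import com.dangdang.ddframe.job.api.ShardingContext;
import com.dangdang.ddframe.job.api.simple.SimpleJob;
import org.slf4j.Logger;
import org.slf4j.LoggerFactory;
import org.springframework.stereotype.Component;

@Component
@TaskJobSign(cron = "0/5 * * * * ?", jobName = "Hello-Job")
public class HelloJob implements SimpleJob {

    private static final Logger LOG = LoggerFactory.getLogger(HelloJob.class);

    @Override
    public void execute(ShardingContext shardingContext) {
        LOG.info("Current Thread: " + Thread.currentThread().getId());
        // Total number of shards configured for this job
        LOG.info("Total shards: " + shardingContext.getShardingTotalCount());
        // Sharding item assigned to this execution
        LOG.info("Current shard: " + shardingContext.getShardingItem());
        // Value mapped to this item through shardingItemParameters, e.g. "A"
        LOG.info("Sharding parameter: " + shardingContext.getShardingParameter());
        // Job-level parameter (ElasticJobConfig sets it to the job name)
        LOG.info("Job parameter: " + shardingContext.getJobParameter());
    }
}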

5. Loading Scheduled Tasks

Since the annotation is custom, the loading process must be custom too: read the annotation from each job bean, build the job configuration, hand it to the scheduler, initialize it, and wait for the task to run.

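import com.dangdang.ddframe.job.api.simple.SimpleJob;
import com.dangdang.ddframe.job.config.JobCoreConfiguration;
import com.dangdang.ddframe.job.config.simple.SimpleJobConfiguration;
import com.dangdang.ddframe.job.lite.api.listener.ElasticJobListener;
import com.dangdang.ddframe.job.lite.config.LiteJobConfiguration;
import com.dangdang.ddframe.job.lite.spring.api.SpringJobScheduler;
import com.dangdang.ddframe.job.reg.zookeeper.ZookeeperRegistryCenter;
import java.util.Map;
import javax.annotation.PostConstruct;
import javax.annotation.Resource;
import org.springframework.beans.factory.annotation.Value;
import org.springframework.context.ApplicationContext;
import org.springframework.context.annotation.Bean;
import org.springframework.context.annotation.Configuration;
import org.springframework.core.annotation.AnnotationUtils;

@Configuration
public class ElasticJobConfig {

    @Resource
    private ApplicationContext applicationContext;
    @Resource
    private ZookeeperRegistryCenter zookeeperRegistryCenter;

    @Value("${job-config.cron}")
    private String cron;
    @Value("${job-config.shardCount}")
    private int shardCount;
    @Value("${job-config.shardItem}")
    private String shardItem;

    /** Configure the task listener */
    @Bean
    public ElasticJobListener elasticJobListener() {
        return new TaskJobListener();
    }

    /** Find every SimpleJob bean carrying @TaskJobSign and register it with ElasticJob */
    @PostConstruct
    public void initTaskJob() {
        Map<String, SimpleJob> jobMap = applicationContext.getBeansOfType(SimpleJob.class);
        for (SimpleJob simpleJob : jobMap.values()) {
            // AnnotationUtils synthesizes the annotation, so @AliasFor (value <-> cron) is honored
            TaskJobSign taskJobSign = AnnotationUtils.findAnnotation(simpleJob.getClass(), TaskJobSign.class);
            if (taskJobSign == null) {
                continue;
            }
            // Fall back to the uniformly configured cron when the annotation leaves it empty
            String jobCron = taskJobSign.cron().isEmpty() ? cron : taskJobSign.cron();
            String jobName = taskJobSign.jobName();
            // Build configuration: core settings -> simple job type -> lite job configuration
            SimpleJobConfiguration simpleJobConfiguration = new SimpleJobConfiguration(
                    JobCoreConfiguration.newBuilder(jobName, jobCron, shardCount)
                            .shardingItemParameters(shardItem).jobParameter(jobName).build(),
                    simpleJob.getClass().getCanonicalName());
            LiteJobConfiguration liteJobConfiguration = LiteJobConfiguration
                    .newBuilder(simpleJobConfiguration).overwrite(true).build();
            // Initialize the task; each job gets its own listener instance
            SpringJobScheduler jobScheduler = new SpringJobScheduler(
                    simpleJob, zookeeperRegistryCenter, liteJobConfiguration, new TaskJobListener());
            jobScheduler.init();
        }
    }
}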
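The loader's core steps (read the annotation, fill in defaults, build one configuration per job) can also be sketched without Spring or ElasticJob. `DemoJobSign`, `AnnotationJobScanner` and `JobSpec` are hypothetical stand-ins. Because plain `Class.getAnnotation()` does not apply Spring's `@AliasFor`, the sketch resolves `cron`/`value` by hand; falling back to the simple class name when `jobName` is empty is an assumption of this sketch, not something the article's loader does.

```java
import java.lang.annotation.ElementType;
import java.lang.annotation.Inherited;
import java.lang.annotation.Retention;
import java.lang.annotation.RetentionPolicy;
import java.lang.annotation.Target;
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

// Hypothetical stand-in for TaskJobSign without Spring's @AliasFor (JDK only).
@Inherited
@Target(ElementType.TYPE)
@Retention(RetentionPolicy.RUNTIME)
@interface DemoJobSign {
    String value() default "";
    String cron() default "";
    String jobName() default "";
}

// Sketch of the loading loop: read the annotation, resolve defaults, collect job specs.
class AnnotationJobScanner {

    // Minimal stand-in for the settings ElasticJob would receive.
    static final class JobSpec {
        final String jobName;
        final String cron;
        final int shardCount;

        JobSpec(String jobName, String cron, int shardCount) {
            this.jobName = jobName;
            this.cron = cron;
            this.shardCount = shardCount;
        }
    }

    static List<JobSpec> scan(List<Object> jobs, String defaultCron, int defaultShardCount) {
        List<JobSpec> specs = new ArrayList<>();
        for (Object job : jobs) {
            DemoJobSign sign = job.getClass().getAnnotation(DemoJobSign.class);
            if (sign == null) {
                continue; // not annotated: skip it, as the article's loader does
            }
            // Plain reflection ignores @AliasFor, so resolve the alias by hand:
            // explicit cron first, then value, then the uniformly configured cron.
            String cron = !sign.cron().isEmpty() ? sign.cron()
                    : !sign.value().isEmpty() ? sign.value() : defaultCron;
            String jobName = sign.jobName().isEmpty() ? job.getClass().getSimpleName() : sign.jobName();
            specs.add(new JobSpec(jobName, cron, defaultShardCount));
        }
        return specs;
    }

    @DemoJobSign(cron = "0/5 * * * * ?", jobName = "Hello-Job")
    static class HelloDemoJob { }

    @DemoJobSign("0/30 * * * * ?")
    static class ValueOnlyJob { }

    static class PlainJob { }

    public static void main(String[] args) {
        List<Object> jobs = Arrays.asList(new HelloDemoJob(), new ValueOnlyJob(), new PlainJob());
        for (JobSpec spec : scan(jobs, "0/10 * * * * ?", 1)) {
            System.out.println(spec.jobName + " -> " + spec.cron);
        }
    }
}
```

Running main prints `Hello-Job -> 0/5 * * * * ?` and `ValueOnlyJob -> 0/30 * * * * ?`; `PlainJob` is skipped because it carries no annotation.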

If you wonder how to find these APIs: look at how the official examples use the core APIs. The code above follows the same calls; only the loading process is adapted to the custom annotation. Of course, you still need to skim the official documentation.

To learn a component quickly, start with its official documentation or open-source wiki, or at least its README (if there is none, consider giving up and looking for an alternative), and get familiar with its basic functions. Then work through the basic examples to learn the API, and finally study the functional modules you actually need. In my experience, this path has the fewest detours and pitfalls.

6. Task Listener

Usage is simple: implement the ElasticJobListener interface.

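import com.dangdang.ddframe.job.executor.ShardingContexts;
import com.dangdang.ddframe.job.lite.api.listener.ElasticJobListener;
import org.slf4j.Logger;
import org.slf4j.LoggerFactory;
import org.springframework.stereotype.Component;

@Component
public class TaskJobListener implements ElasticJobListener {

    private static final Logger LOG = LoggerFactory.getLogger(TaskJobListener.class);

    // Start time of the current run. The loaders in this article create one listener
    // instance per job; a single instance shared by several jobs would mix up start times.
    private long beginTime = 0;

    @Override
    public void beforeJobExecuted(ShardingContexts shardingContexts) {
        beginTime = System.currentTimeMillis();
        LOG.info(shardingContexts.getJobName() + "===>start...");
    }

    @Override
    public void afterJobExecuted(ShardingContexts shardingContexts) {
        long endTime = System.currentTimeMillis();
        LOG.info(shardingContexts.getJobName() + "===>End...[Time consuming:" + (endTime - beginTime) + "ms]");
    }
}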

The listener runs before and after each execution with the target method in between, which is the standard AOP (around advice) idea. A solid grasp of the fundamentals determines how quickly you understand higher-level frameworks. Maybe it is time to dust off that copy of Thinking in Java?
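The before/target/after idea fits in a few lines of plain Java. This is a hypothetical sketch: `JobListener` and `ListenedJobRunner` are illustrative names, not ElasticJob types, and calling `afterJobExecuted` in a `finally` block is a choice made here, not a claim about how ElasticJob treats failed jobs.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.function.Supplier;

// Hypothetical listener contract mirroring the before/after callbacks.
interface JobListener {
    void beforeJobExecuted(String jobName);
    void afterJobExecuted(String jobName);
}

class ListenedJobRunner {

    // Around advice: before -> job body -> after (after runs even if the body throws).
    static <T> T run(String jobName, JobListener listener, Supplier<T> job) {
        listener.beforeJobExecuted(jobName);
        try {
            return job.get();
        } finally {
            listener.afterJobExecuted(jobName);
        }
    }

    // Timing listener in the spirit of TaskJobListener, recording events instead of logging.
    static final class TimingListener implements JobListener {
        final List<String> events = new ArrayList<>();
        private long beginTime;

        @Override
        public void beforeJobExecuted(String jobName) {
            beginTime = System.currentTimeMillis();
            events.add(jobName + "===>start...");
        }

        @Override
        public void afterJobExecuted(String jobName) {
            long elapsed = System.currentTimeMillis() - beginTime;
            events.add(jobName + "===>End...[Time consuming:" + elapsed + "ms]");
        }
    }

    public static void main(String[] args) {
        TimingListener listener = new TimingListener();
        String result = run("Hello-Job", listener, () -> {
            listener.events.add("executing job body");
            return "done";
        });
        System.out.println(result);
        listener.events.forEach(System.out::println);
    }
}
```

The job body never knows it is being timed; the cross-cutting concern lives entirely in the listener, which is what makes the pattern reusable across jobs.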

3. Adding Tasks Dynamically

1. Job Tasks

Some scenarios require adding and managing scheduled tasks dynamically. The loading process above can be reused, with some of its steps customized.

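import com.dangdang.ddframe.job.api.ShardingContext;
import com.dangdang.ddframe.job.api.simple.SimpleJob;
import java.time.LocalDateTime;
import java.time.format.DateTimeFormatter;
import org.slf4j.Logger;
import org.slf4j.LoggerFactory;
import org.springframework.stereotype.Component;

@Component
public class GetTimeJob implements SimpleJob {

    private static final Logger LOG = LoggerFactory.getLogger(GetTimeJob.class);
    // DateTimeFormatter is immutable and thread-safe, unlike a shared static SimpleDateFormat
    private static final DateTimeFormatter FORMAT = DateTimeFormatter.ofPattern("yyyy-MM-dd HH:mm:ss");

    @Override
    public void execute(ShardingContext shardingContext) {
        LOG.info("Job Name: " + shardingContext.getJobName());
        LOG.info("Local Time: " + LocalDateTime.now().format(FORMAT));
    }
}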

2. Add Task Service

This service adds the job above at runtime.

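import com.dangdang.ddframe.job.api.simple.SimpleJob;
import com.dangdang.ddframe.job.config.JobCoreConfiguration;
import com.dangdang.ddframe.job.config.JobTypeConfiguration;
import com.dangdang.ddframe.job.config.simple.SimpleJobConfiguration;
import com.dangdang.ddframe.job.lite.config.LiteJobConfiguration;
import com.dangdang.ddframe.job.lite.spring.api.SpringJobScheduler;
import com.dangdang.ddframe.job.reg.zookeeper.ZookeeperRegistryCenter;
import javax.annotation.Resource;
import org.springframework.stereotype.Service;

@Service
public class TaskJobService {

    @Resource
    private ZookeeperRegistryCenter zookeeperRegistryCenter;

    public void addTaskJob(final String jobName, final SimpleJob simpleJob,
                           final String cron, final int shardCount, final String shardItem) {
        // Configuration process: core settings -> job type -> lite configuration
        JobCoreConfiguration jobCoreConfiguration = JobCoreConfiguration
                .newBuilder(jobName, cron, shardCount)
                .shardingItemParameters(shardItem).build();
        JobTypeConfiguration jobTypeConfiguration = new SimpleJobConfiguration(
                jobCoreConfiguration, simpleJob.getClass().getCanonicalName());
        // overwrite(true) lets this local configuration replace the one stored in ZooKeeper
        LiteJobConfiguration liteJobConfiguration = LiteJobConfiguration
                .newBuilder(jobTypeConfiguration).overwrite(true).build();
        // Load and start the job with its own listener instance
        SpringJobScheduler jobScheduler = new SpringJobScheduler(
                simpleJob, zookeeperRegistryCenter, liteJobConfiguration, new TaskJobListener());
        jobScheduler.init();
    }
}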

Once added, the task runs on its schedule. Stopping it is a separate problem. One option is to keep a state record keyed by task name, such as a database row [1, job1, state]. When the job fires and its state is stopped, it returns immediately without doing any work.
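A minimal sketch of that approach, assuming an in-memory map in place of the database table. `JobStateStore` and `StoppableJob` are hypothetical names; in an ElasticJob SimpleJob the same check would sit at the top of `execute(ShardingContext)`, keyed by `shardingContext.getJobName()`.

```java
import java.util.Map;
import java.util.concurrent.ConcurrentHashMap;
import java.util.concurrent.atomic.AtomicInteger;

// Hypothetical stand-in for the [id, jobName, state] table; not an ElasticJob API.
class JobStateStore {
    enum State { RUNNING, STOPPED }

    private final Map<String, State> states = new ConcurrentHashMap<>();

    void set(String jobName, State state) {
        states.put(jobName, state);
    }

    // Jobs without a record are treated as RUNNING, so newly added jobs work immediately.
    State get(String jobName) {
        return states.getOrDefault(jobName, State.RUNNING);
    }
}

// Hypothetical job that checks its state on every trigger before doing any work.
class StoppableJob {
    private final JobStateStore store;
    private final AtomicInteger executions = new AtomicInteger();

    StoppableJob(JobStateStore store) {
        this.store = store;
    }

    // Returns false when the trigger was skipped because the job is STOPPED.
    boolean execute(String jobName) {
        if (store.get(jobName) == JobStateStore.State.STOPPED) {
            return false; // the schedule still fires, but no work is done
        }
        executions.incrementAndGet(); // business logic would run here
        return true;
    }

    int executions() {
        return executions.get();
    }

    public static void main(String[] args) {
        JobStateStore store = new JobStateStore();
        StoppableJob job = new StoppableJob(store);
        job.execute("job1");                            // runs
        store.set("job1", JobStateStore.State.STOPPED);
        job.execute("job1");                            // skipped
        System.out.println("executions = " + job.executions());
    }
}
```

This only suppresses the work; the trigger itself keeps firing on its cron schedule, so the approach suits pausing rather than permanently removing a job.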

3. Test Interface

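import javax.annotation.Resource;
import org.springframework.web.bind.annotation.RequestMapping;
import org.springframework.web.bind.annotation.RequestParam;
import org.springframework.web.bind.annotation.RestController;

@RestController
public class TaskJobController {

    @Resource
    private TaskJobService taskJobService;

    @RequestMapping("/addJob")
    public String addJob(@RequestParam("cron") String cron,
                         @RequestParam("jobName") String jobName,
                         @RequestParam("shardCount") Integer shardCount,
                         @RequestParam("shardItem") String shardItem) {
        // GetTimeJob is created with new here, so it is not a Spring-managed bean
        taskJobService.addTaskJob(jobName, new GetTimeJob(), cron, shardCount, shardItem);
        return "success";
    }
}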

4. Source Code Address

GitHub: https://github.com/cicadasmile/middle-ware-parent
GitEE: https://gitee.com/cicadasmile/middle-ware-parent