1, Kubernetes (k8s)

k8s is the common abbreviation of Kubernetes.

While Docker was developing rapidly as an advanced container engine, container technology had already been in use at Google for many years: its Borg system runs and manages thousands of containerized applications. The Kubernetes project grew out of Borg. It distills the essence of Borg's design and incorporates the experience and lessons learned from the Borg system.

• Kubernetes abstracts computing resources at a higher level and, by carefully combining containers, delivers the final application service to the user.

• Kubernetes benefits:

• Hides resource management and error handling, so users only need to focus on application development.

• Provides high availability and reliability for services.

• Workloads can run on clusters made up of thousands of machines.

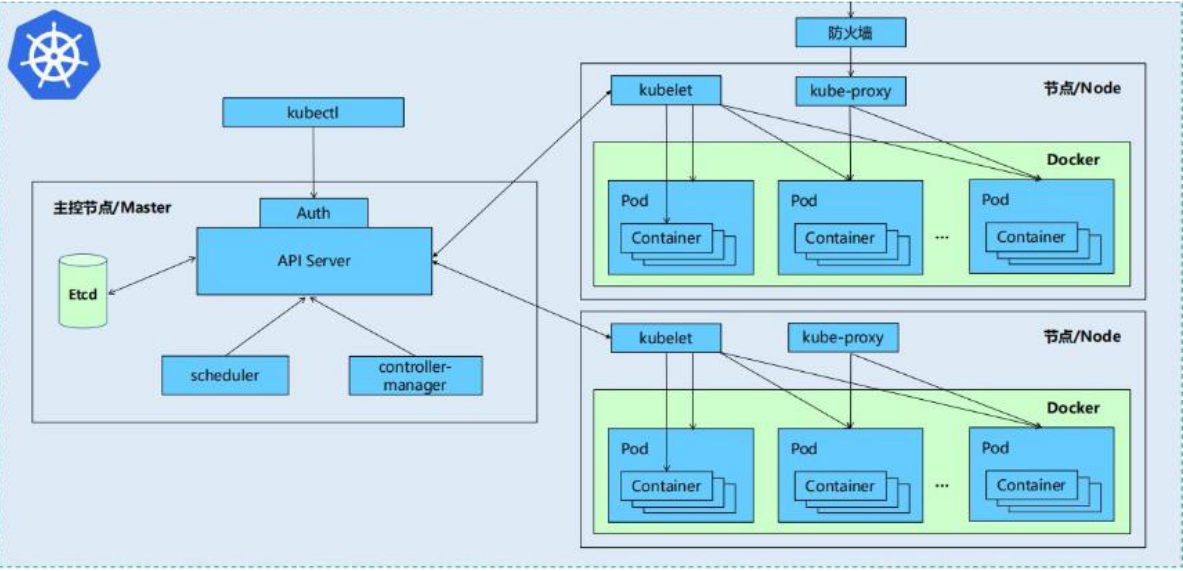

Design architecture of k8s

A Kubernetes cluster consists of the node agent kubelet and the Master components (APIs, scheduler, etc.), all built on top of a distributed storage system.

• Kubernetes is mainly composed of the following core components:

• etcd: stores the state of the entire cluster

• apiserver: the single entry point for resource operations; it provides authentication, authorization, access control, API registration and discovery, and other mechanisms

• controller manager: maintains the state of the cluster, for example fault detection, automatic scaling, and rolling updates

• scheduler: schedules resources, placing Pods on suitable machines according to the configured scheduling policies

• kubelet: maintains the container life cycle and manages volumes (CSI) and networking (CNI)

• Container runtime: manages images and actually runs Pods and containers (CRI)

• kube-proxy: provides in-cluster service discovery and load balancing for Services

• In addition to the core components, there are some recommended add-ons:

• kube-dns: provides DNS for the whole cluster

• Ingress Controller: provides an external entry point for Services

• Heapster: provides resource monitoring

• Dashboard: provides a GUI

• Federation: provides clusters that span availability zones

• Fluentd-elasticsearch: provides cluster log collection, storage, and querying

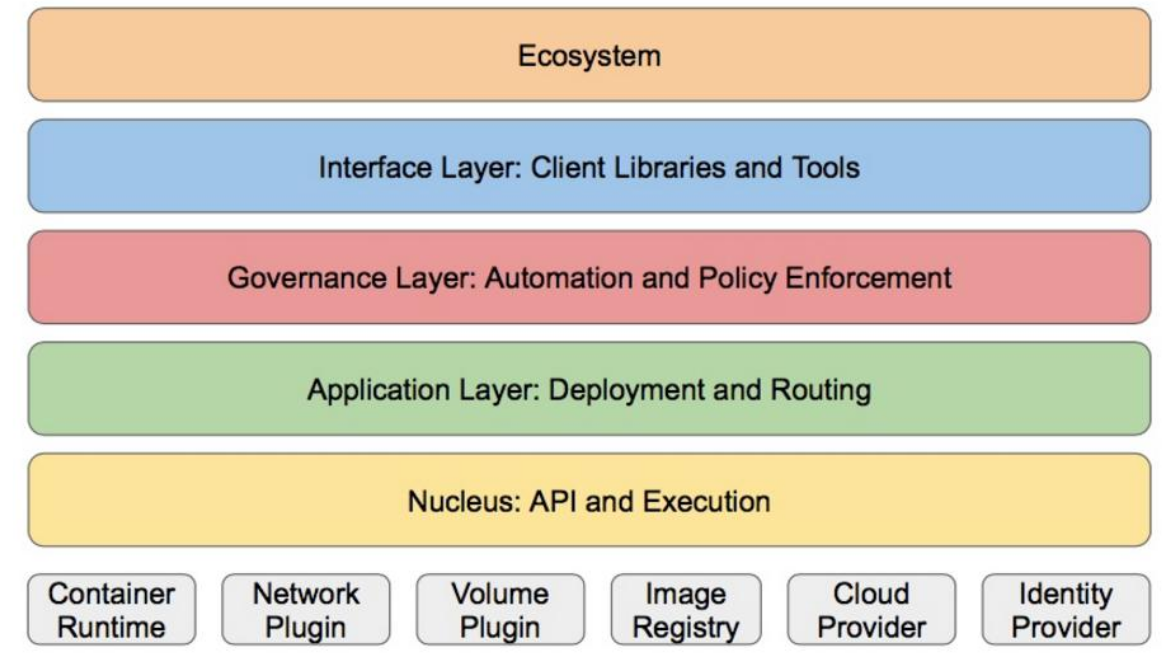

• Kubernetes' design philosophy and functionality follow a layered architecture similar to Linux:

• Core layer: the core functionality of Kubernetes; it exposes APIs externally for building higher-level applications and provides a pluggable application execution environment internally

• Application layer: deployment (stateless applications, stateful applications, batch jobs, clustered applications, etc.) and routing (service discovery, DNS resolution, etc.)

• Management layer: system metrics (e.g. infrastructure, container, and network metrics), automation (e.g. automatic scaling, dynamic provisioning), and policy management (RBAC, Quota, PSP, NetworkPolicy, etc.)

• Interface layer: the kubectl command-line tool, client SDKs, and cluster federation

• Ecosystem: the large container cluster management and scheduling ecosystem above the interface layer, which falls into two categories:

• Outside Kubernetes: logging, monitoring, configuration management, CI, CD, Workflow, FaaS, OTS applications, ChatOps, etc.

• Inside Kubernetes: CRI, CNI, CSI, image registry, Cloud Provider, and the configuration and management of the cluster itself

2, Deployment of k8s

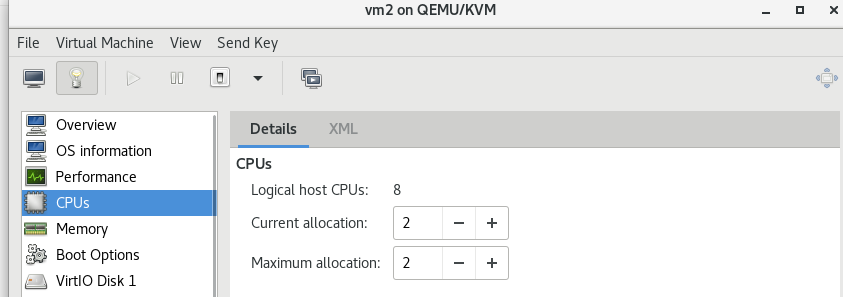

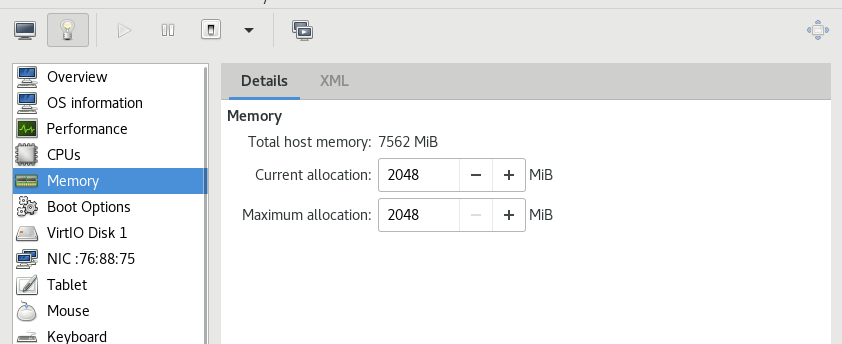

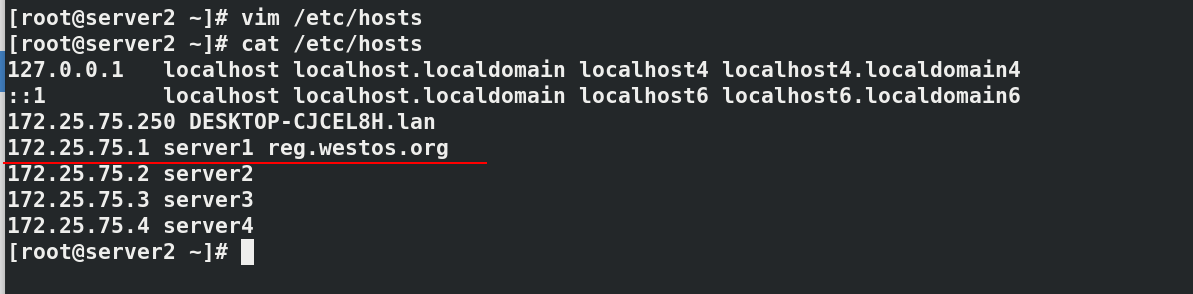

server1 already has Docker configured. Create three new virtual machines, server2, server3 and server4, each with 2 GB of memory and two CPUs, and make the domain name reg.westos.org resolve to server1 on each of them. server2 is used as the master.

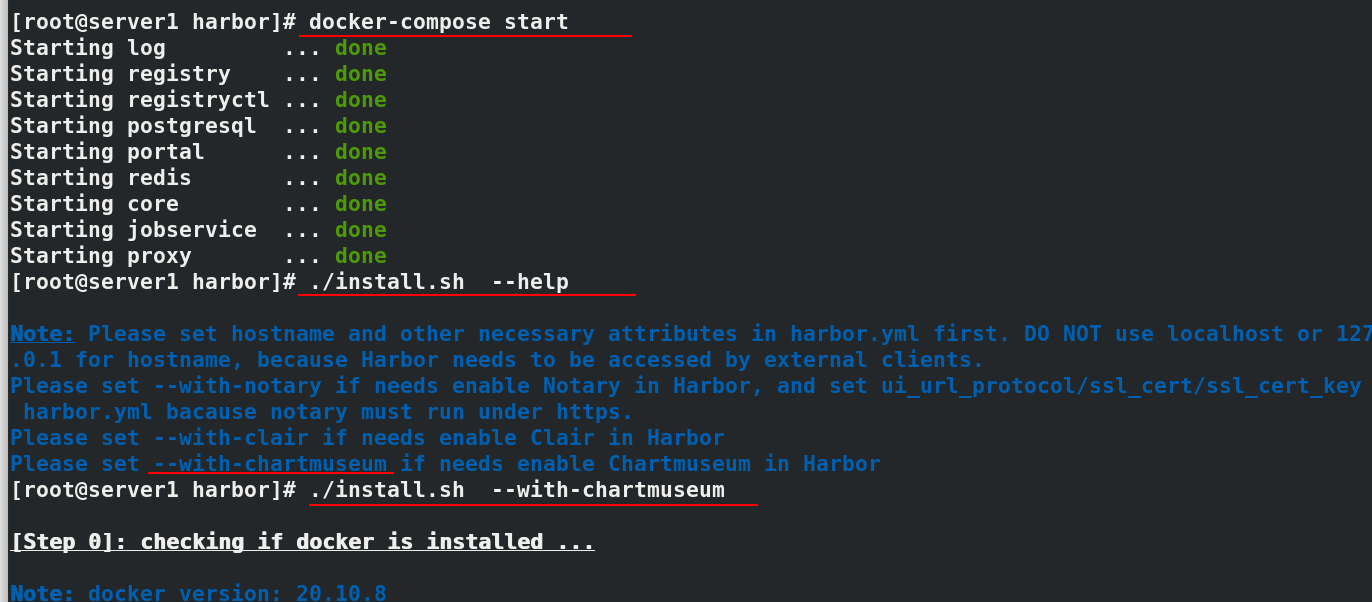

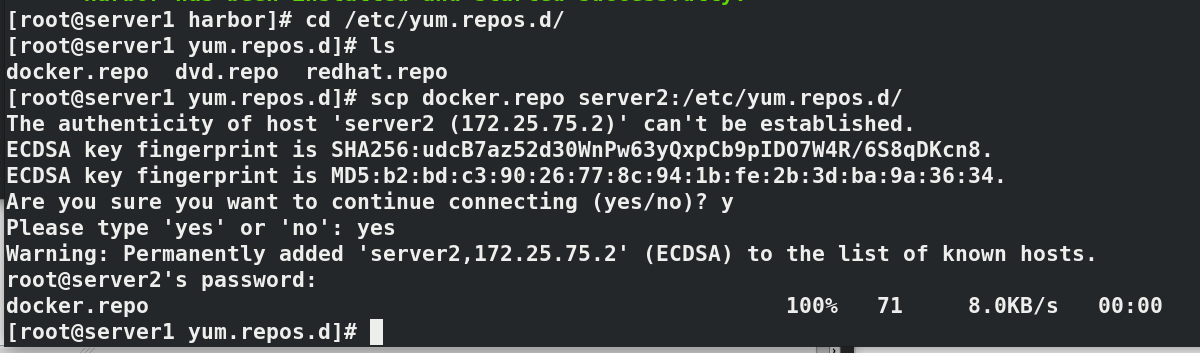

#In server1

docker-compose start

./install.sh --help

./install.sh --with-chartmuseum

cd /etc/yum.repos.d/

ls #Shows docker.repo

scp docker.repo server2:/etc/yum.repos.d/

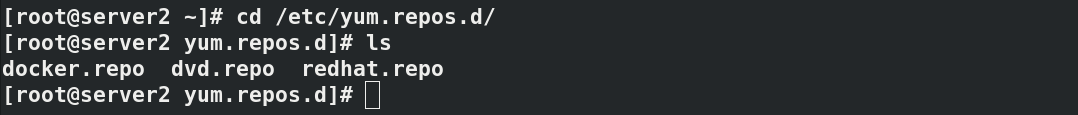

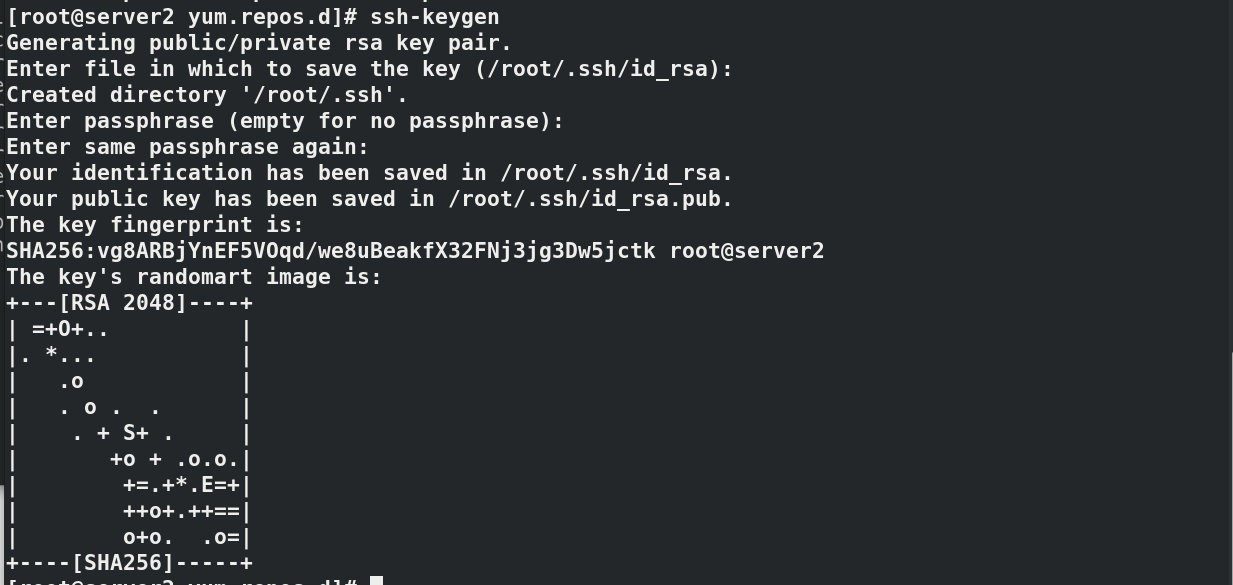

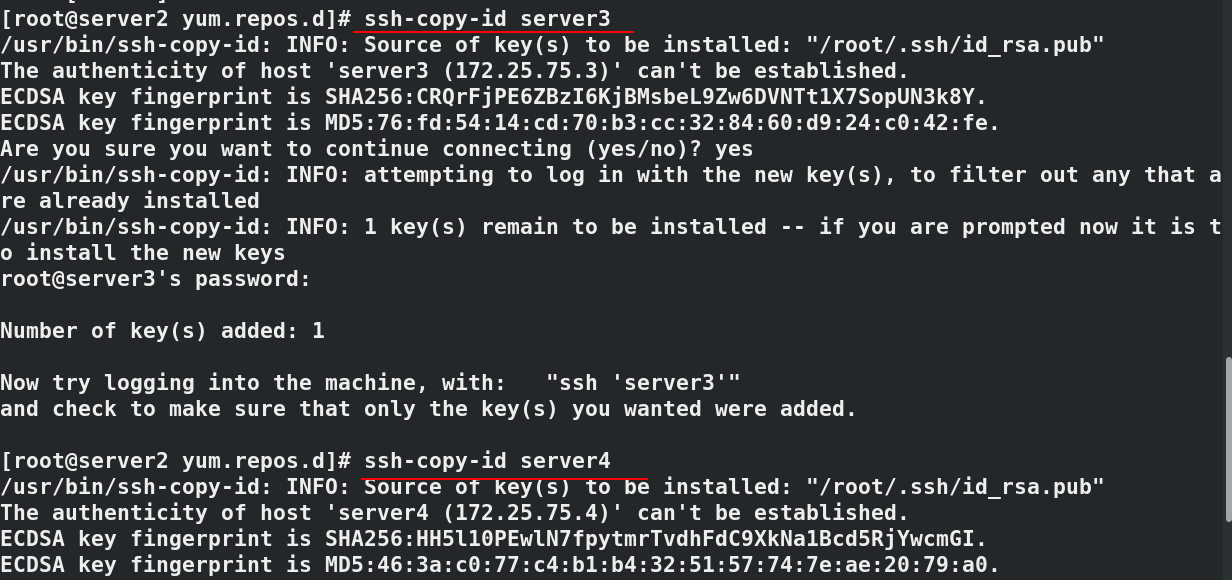

#In server2

cd /etc/yum.repos.d/

ls #Shows docker.repo

#Set up passwordless SSH authentication on server2 to simplify file transfer

ssh-keygen

ssh-copy-id server3 #Install the public key on server3

ssh-copy-id server4

#Copy the Docker repo file to server3 and server4

scp docker.repo server3:/etc/yum.repos.d/

scp docker.repo server4:/etc/yum.repos.d/

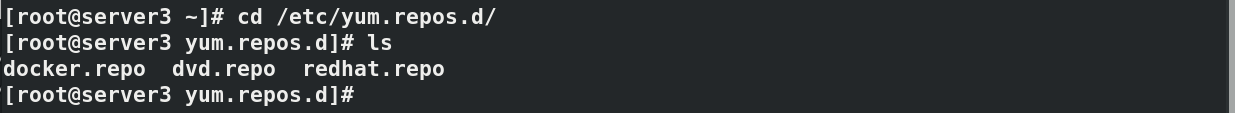

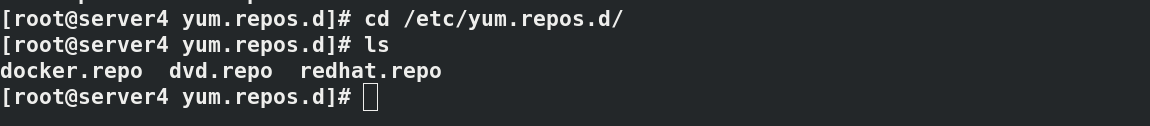

On server3 and server4, you can see that the Docker repo file has been transferred.

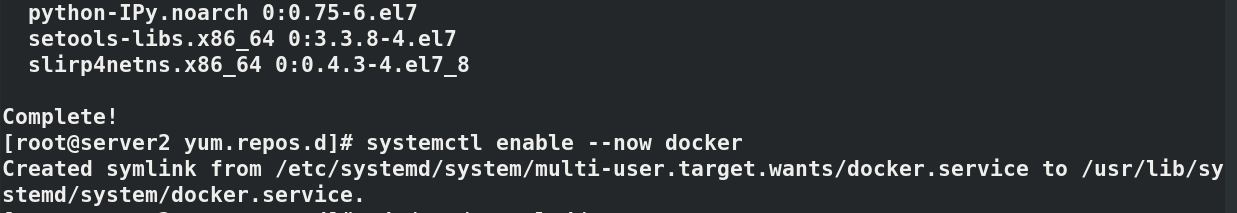

#Run on server2, server3 and server4 (from here on, all three virtual machines are operated in parallel unless noted otherwise)

yum install docker-ce -y

systemctl enable --now docker

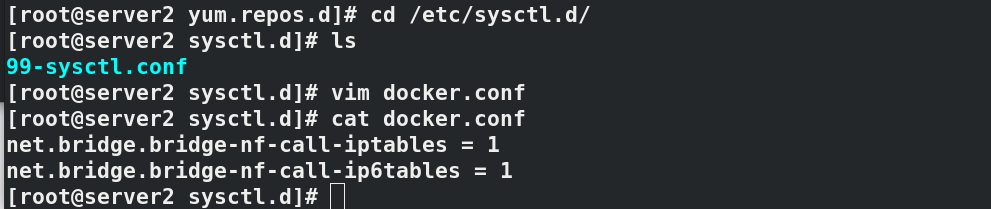

cd /etc/sysctl.d/

vim docker.conf

--------------------------------------

net.bridge.bridge-nf-call-iptables = 1

net.bridge.bridge-nf-call-ip6tables = 1

--------------------------------------

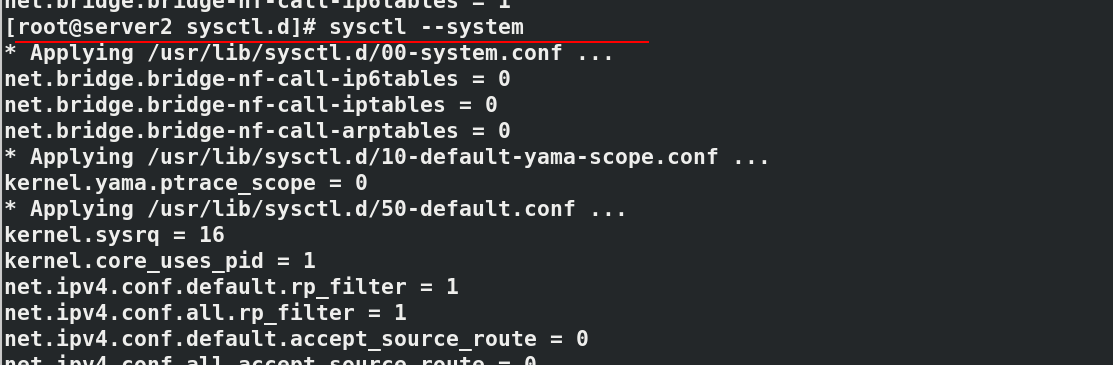

sysctl --system
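The two `net.bridge.*` keys above are only available once the `br_netfilter` kernel module is loaded, so `sysctl --system` may report them as missing on a fresh machine. The following is a minimal sketch of writing the same file without an editor. `write_bridge_sysctl` is a hypothetical helper name, and the dry run targets a scratch directory rather than `/etc/sysctl.d`.

```shell
#!/bin/sh
# Sketch: write the bridge sysctl settings without opening an editor.
# write_bridge_sysctl is a hypothetical helper; pass /etc/sysctl.d on a real node.
write_bridge_sysctl() {
    target_dir=$1
    cat > "$target_dir/docker.conf" <<'EOF'
net.bridge.bridge-nf-call-iptables = 1
net.bridge.bridge-nf-call-ip6tables = 1
EOF
}

# Dry run against a scratch directory so nothing under /etc is touched.
scratch=$(mktemp -d)
write_bridge_sysctl "$scratch"
cat "$scratch/docker.conf"

# On a real node (as root), the equivalent would be:
#   modprobe br_netfilter            # assumption: the module is not loaded yet
#   write_bridge_sysctl /etc/sysctl.d
#   sysctl --system
```

Keeping the target directory as a parameter makes it possible to check the generated file before it is applied to the three nodes.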

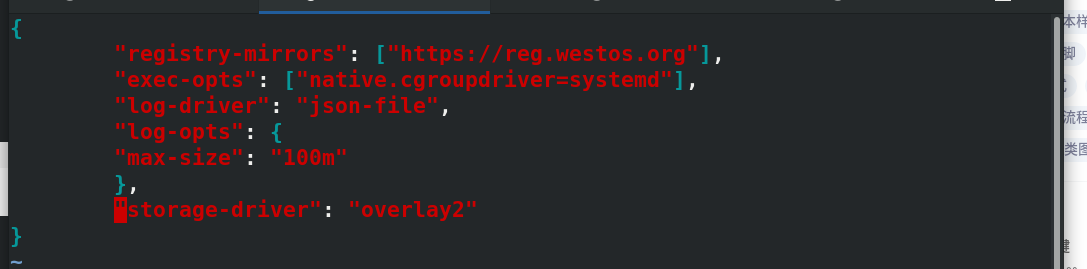

cd /etc/docker

vim daemon.json

--------------------------

{

"registry-mirrors": ["https://reg.westos.org"],

"exec-opts": ["native.cgroupdriver=systemd"],

"log-driver": "json-file",

"log-opts": {

"max-size": "100m"

},

"storage-driver": "overlay2"

}

---------------------------

systemctl restart docker

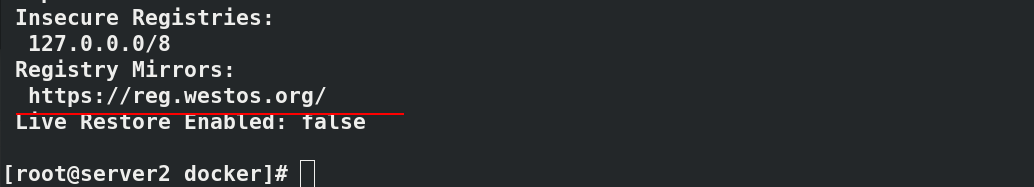

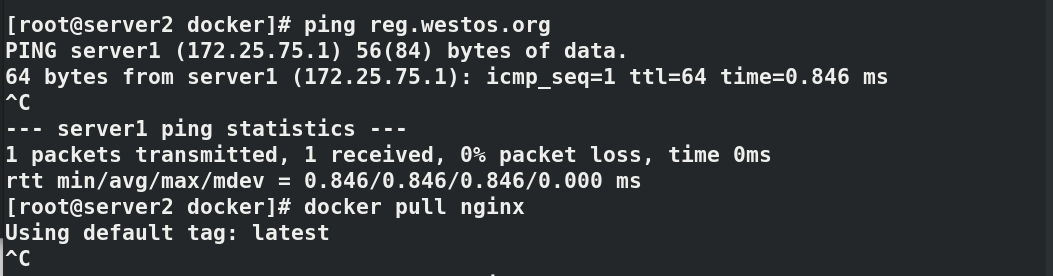

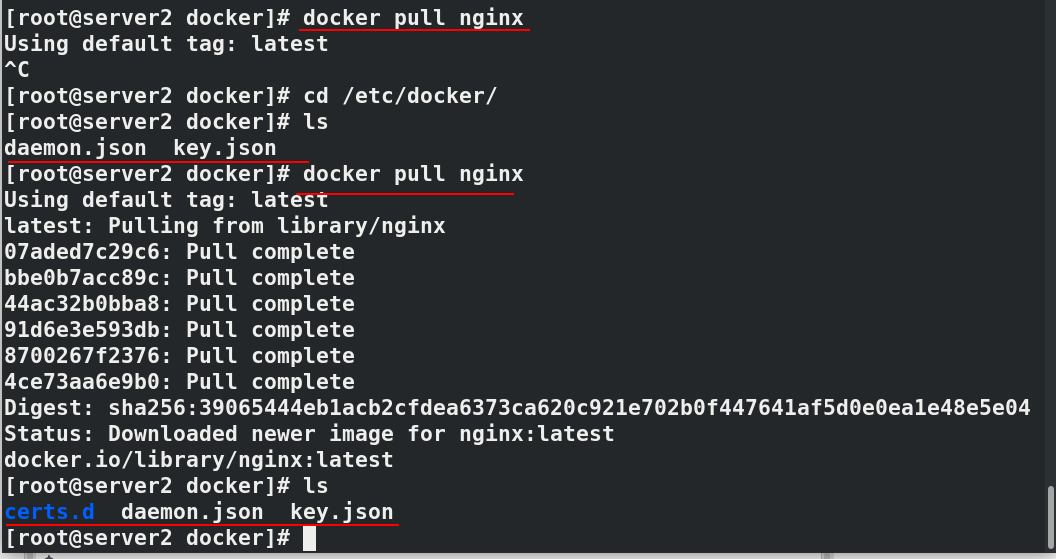

docker info #Check the Docker details; the registry mirror now points to reg.westos.org

ping reg.westos.org #The registry host is reachable

docker pull nginx #Fails because server2 does not yet have the registry's certificates

#In server1

cd /etc/docker/

ls #Shows certs.d

scp -r certs.d server2:/etc/docker/

#In server2

ls #Shows certs.d

docker pull nginx #Pull succeeds

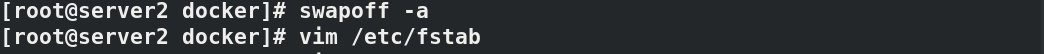

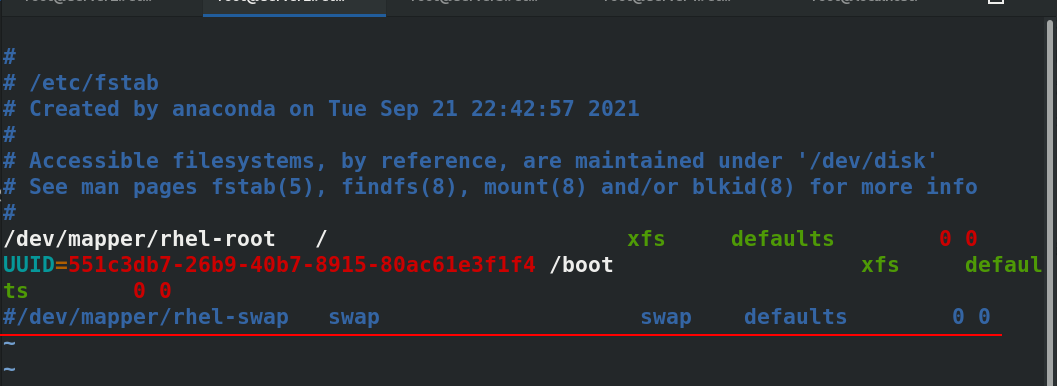

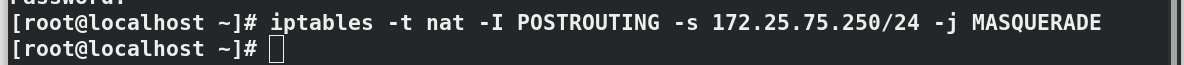

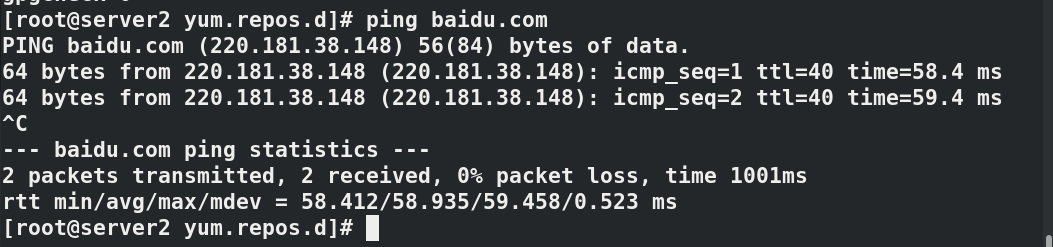

#Disable the swap partition

swapoff -a

vim /etc/fstab #Comment out the swap entry

----------------------------

/dev/mapper/rhel-root / xfs defaults 0 0

UUID=551c3db7-26b9-40b7-8915-80ac61e3f1f4 /boot xfs defaults 0 0

#/dev/mapper/rhel-swap swap swap defaults 0 0

----------------------------

cd /etc/yum.repos.d/

vim k8s.repo

-------------------------

[kubernetes]

name=Kubernetes

baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64/

enabled=1

gpgcheck=0

-------------------------

ping baidu.com #Succeeds once iptables masquerading (NAT) is enabled on the physical host
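Because the fstab edit has to be repeated on all three machines, it can also be done non-interactively. This is a minimal sketch, not the author's method: `comment_out_swap` is a hypothetical helper, and the demo runs on a temporary copy containing the lines shown above rather than on `/etc/fstab`.

```shell
#!/bin/sh
# Sketch: comment out active swap entries in an fstab-style file.
# comment_out_swap is a hypothetical helper name.
comment_out_swap() {
    fstab=$1
    # Prefix "#" to uncommented lines that contain a whitespace-delimited
    # "swap" field; a backup of the original is kept as <file>.bak.
    sed -i.bak -E '/^[^#].*[[:space:]]swap[[:space:]]/ s/^/#/' "$fstab"
}

# Demo on a scratch file instead of the real /etc/fstab.
sample=$(mktemp)
cat > "$sample" <<'EOF'
/dev/mapper/rhel-root / xfs defaults 0 0
/dev/mapper/rhel-swap swap swap defaults 0 0
EOF
comment_out_swap "$sample"
cat "$sample"
```

Lines that are already commented out are skipped, so running the helper a second time leaves the file unchanged.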

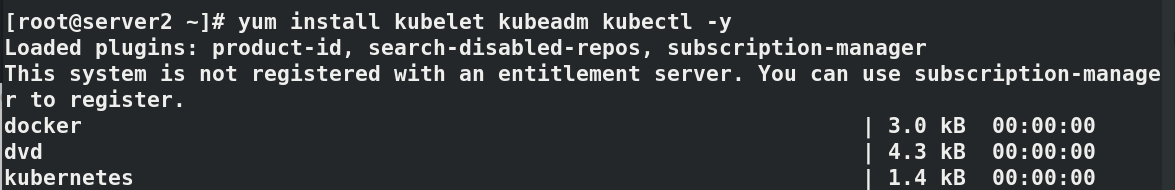

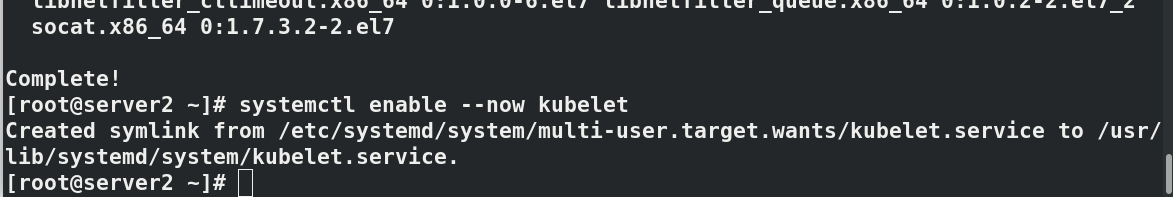

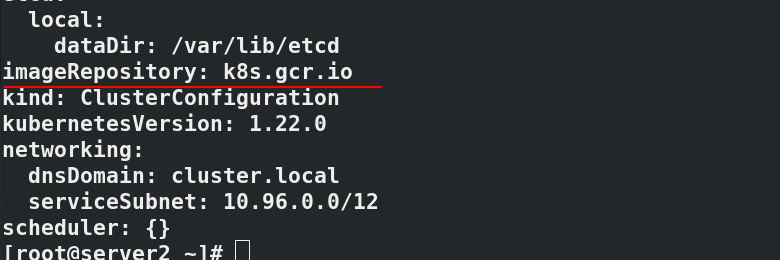

yum install kubelet kubeadm kubectl -y

systemctl enable --now kubelet

kubeadm config print init-defaults #View the default configuration

#From here on, run only on server2

#By default, the component images are downloaded from k8s.gcr.io, which may not be reachable directly from this network, so point kubeadm at a different image repository:

#List the required images

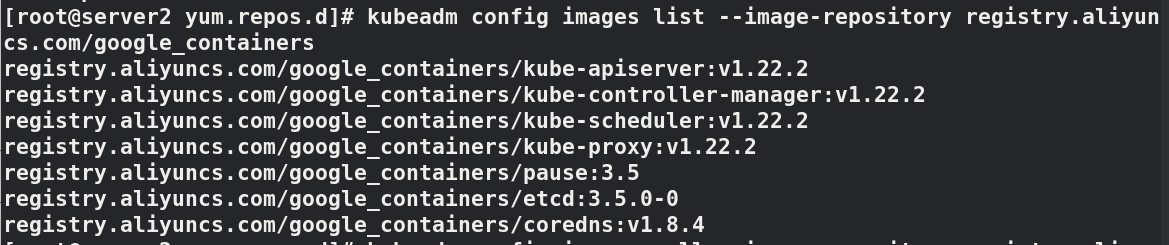

kubeadm config images list --image-repository registry.aliyuncs.com/google_containers

#Pull the images

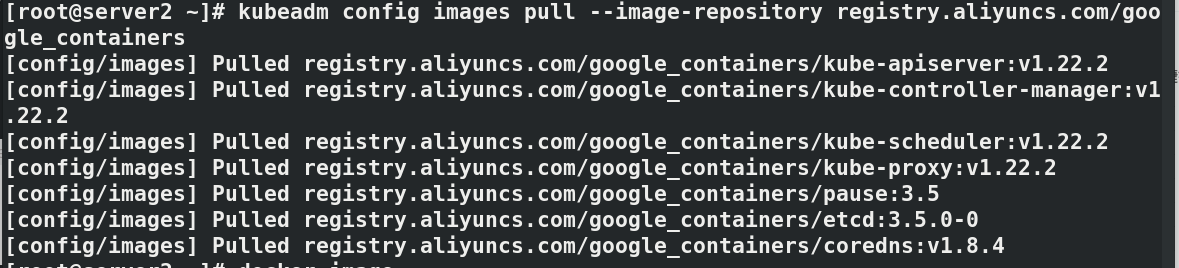

kubeadm config images pull --image-repository registry.aliyuncs.com/google_containers

docker images

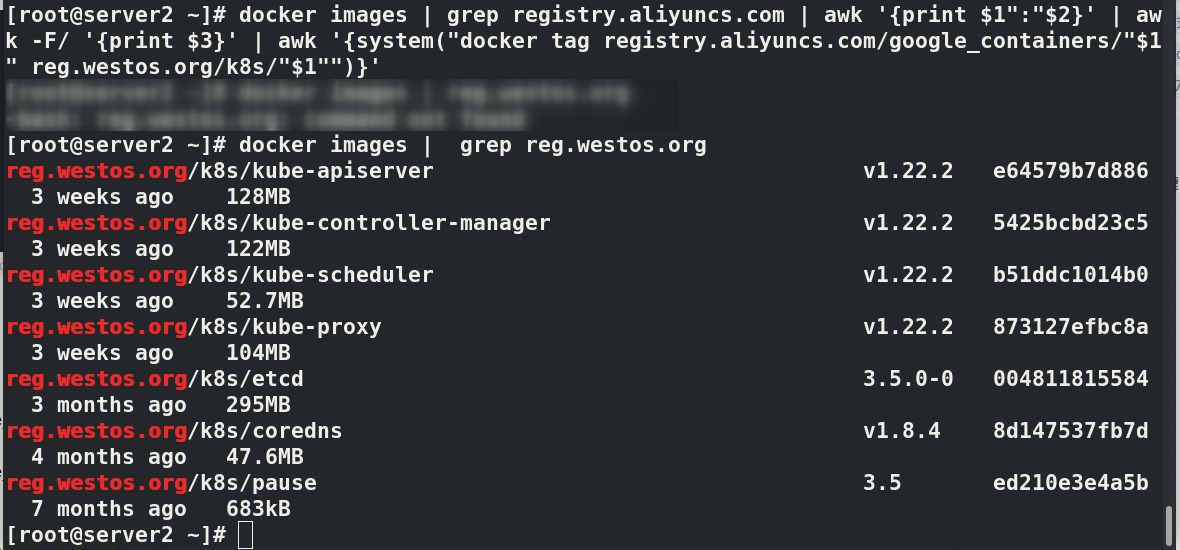

docker images | grep registry.aliyuncs.com | awk '{print $1":"$2}' | awk -F/ '{print $3}' | awk '{system("docker tag registry.aliyuncs.com/google_containers/"$1" reg.westos.org/k8s/"$1"")}'

docker images | grep reg.westos.org
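The awk pipeline above retags every image from the Aliyun mirror under `reg.westos.org/k8s/`. The name mapping it performs can be sketched as a small shell function, which may be easier to read and to check on its own. `target_name` is a hypothetical helper, and the tag `v0.0.0` is a placeholder, since the real tags depend on the installed kubeadm version.

```shell
#!/bin/sh
# Sketch: compute the private-registry name for an image pulled from the mirror.
# target_name is a hypothetical helper; the registry prefix comes from the steps above.
target_name() {
    # Strip everything up to the last "/" and prepend the private project path.
    echo "reg.westos.org/k8s/${1##*/}"
}

# Example with a placeholder tag (real tags depend on the kubeadm version).
target_name "registry.aliyuncs.com/google_containers/kube-proxy:v0.0.0"

# On a node with Docker, a loop equivalent to the awk pipeline would be:
#   docker images --format '{{.Repository}}:{{.Tag}}' \
#     | grep '^registry.aliyuncs.com/google_containers/' \
#     | while read -r src; do docker tag "$src" "$(target_name "$src")"; done
```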

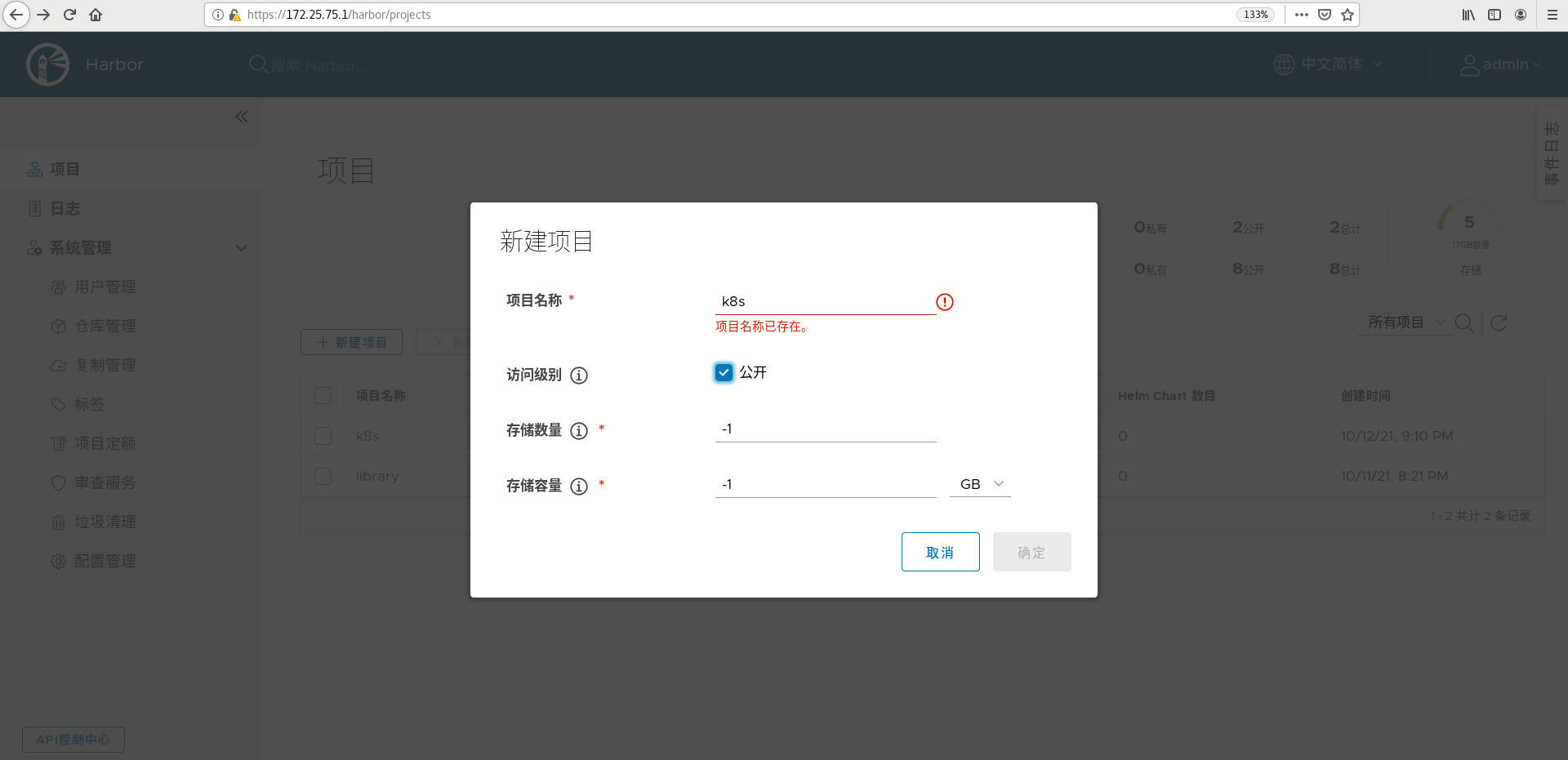

#Create a new public project named k8s in the Harbor web interface (the screenshot is only an example; the project had already been created)

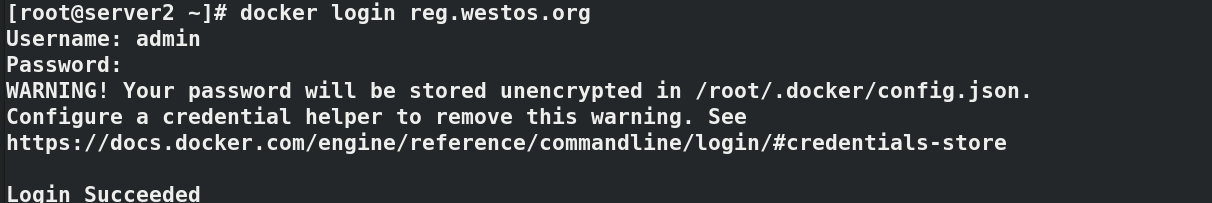

docker login reg.westos.org

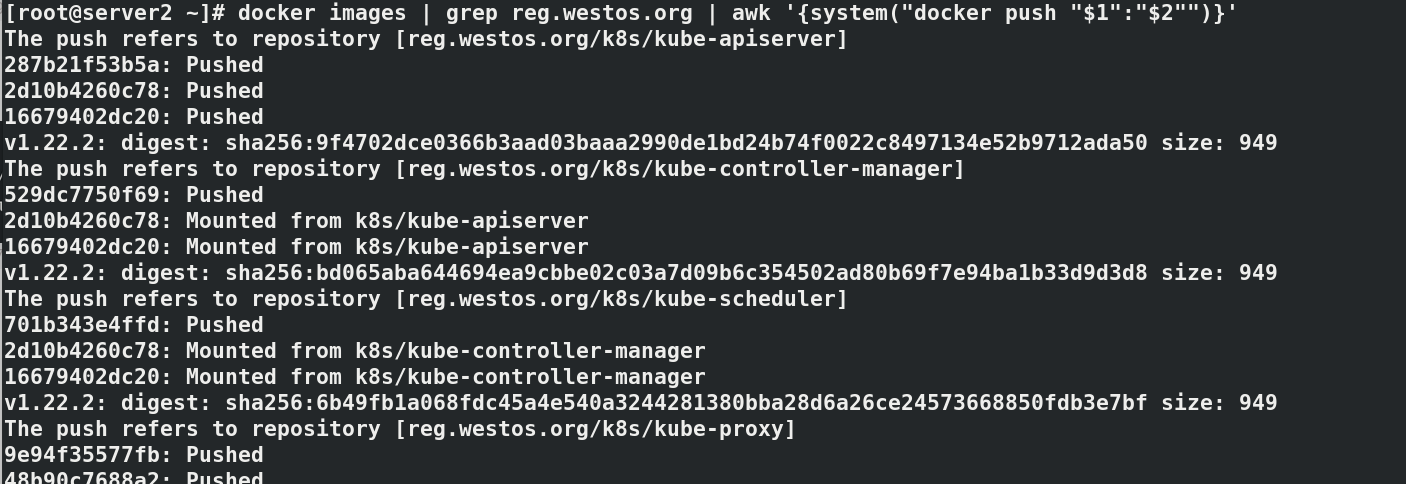

docker images | grep reg.westos.org | awk '{system("docker push "$1":"$2"")}'

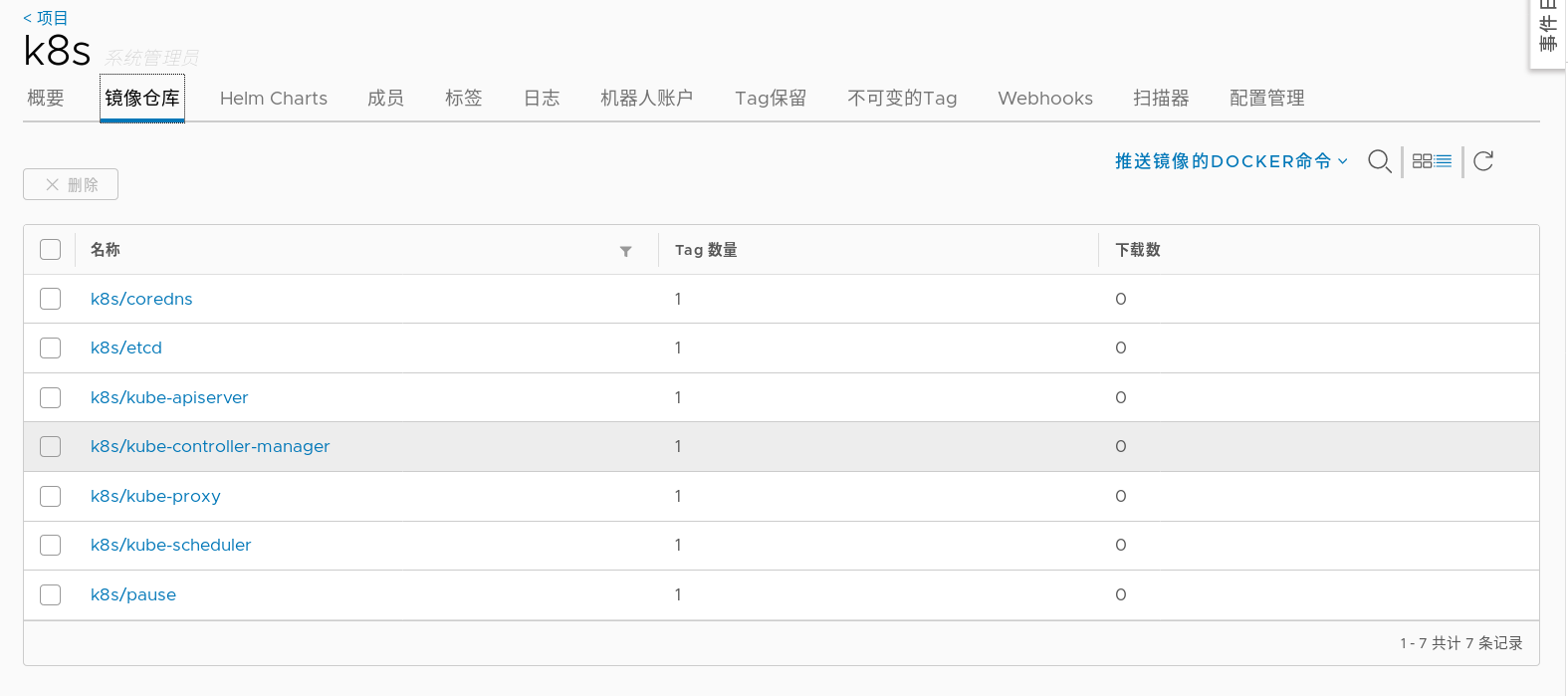

#After the images are pushed successfully, 7 images are visible in the web interface

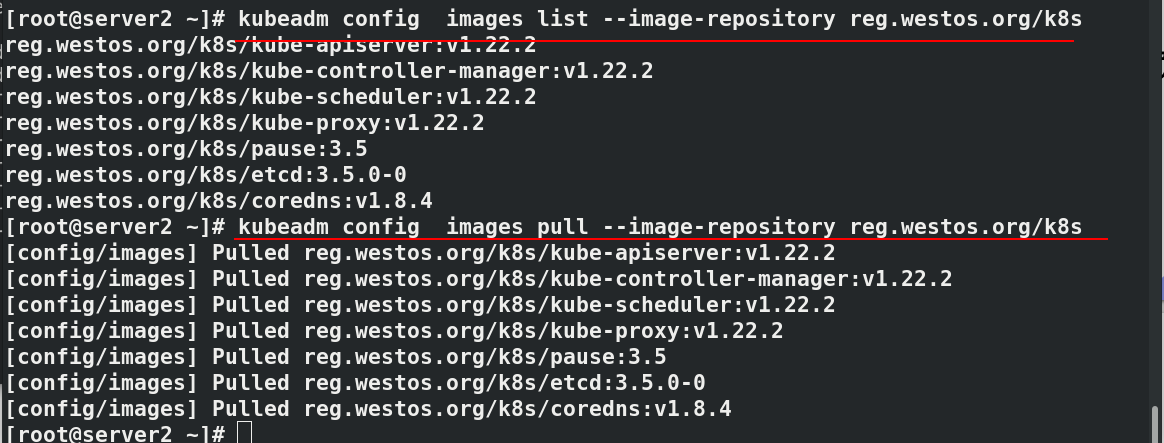

kubeadm config images list --image-repository reg.westos.org/k8s

kubeadm config images pull --image-repository reg.westos.org/k8s

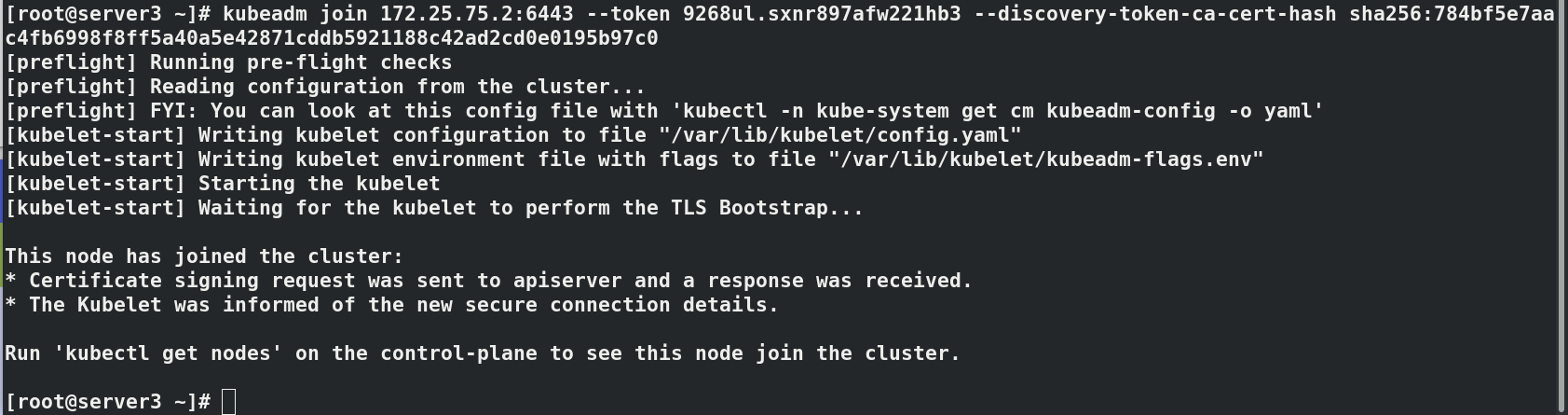

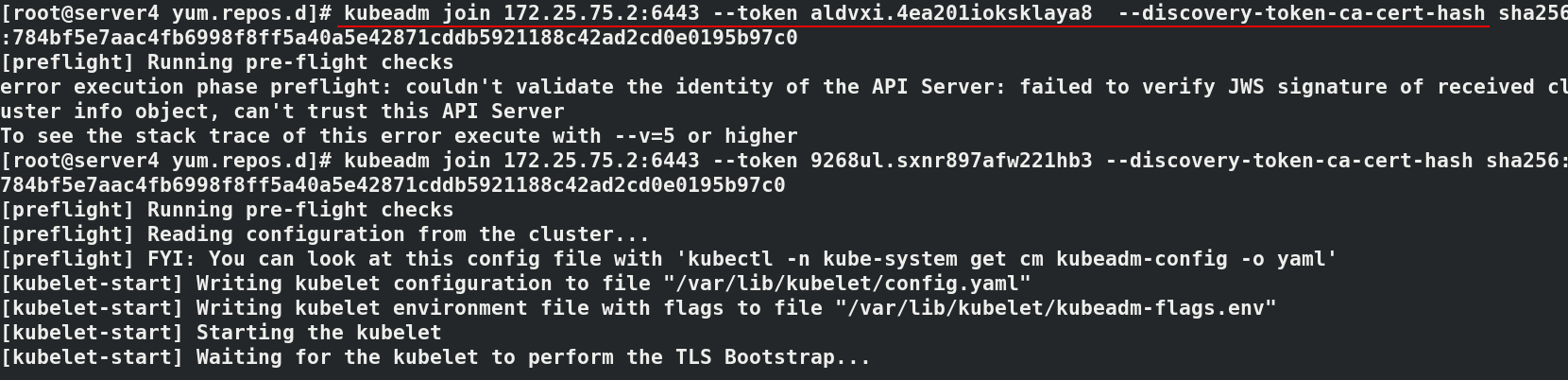

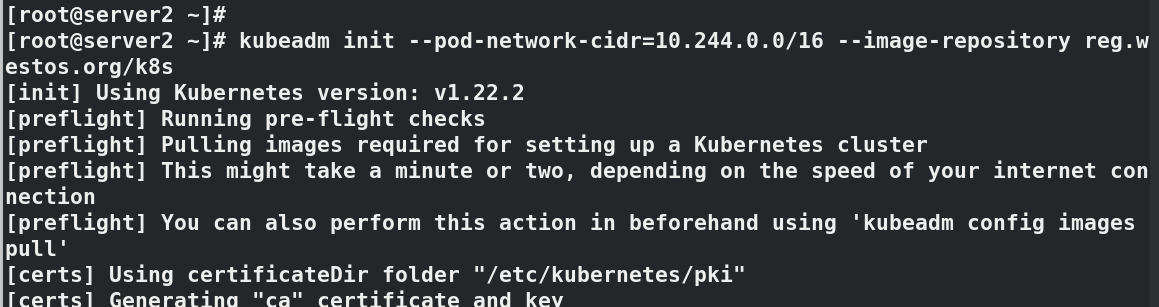

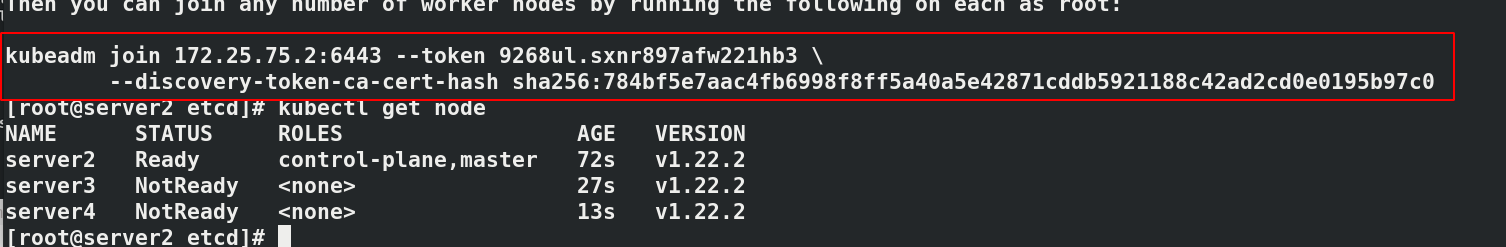

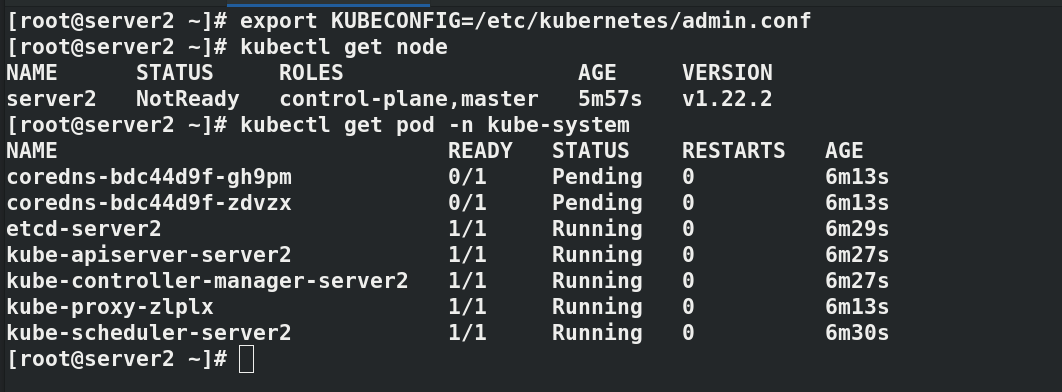

#Initialize the cluster

kubeadm init --pod-network-cidr=10.244.0.0/16 --image-repository reg.westos.org/k8s

#--pod-network-cidr=10.244.0.0/16 is required when using the flannel network add-on

#--kubernetes-version specifies the Kubernetes version to install

------------------------------------

#The join command printed by kubeadm init is needed later; the token and hash differ for every initialization

kubeadm join 172.25.75.2:6443 --token 9268ul.sxnr897afw221hb3 \
--discovery-token-ca-cert-hash sha256:784bf5e7aac4fb6998f8ff5a40a5e42871cddb5921188c42ad2cd0e0195b97c0

-------------------------------------

export KUBECONFIG=/etc/kubernetes/admin.conf

kubectl get node #NotReady indicates that the components are not yet working properly

kubectl get pod -n kube-system #Pending indicates that the component is not yet working properly
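Since the join command is needed again on the worker nodes, it helps to save the `kubeadm init` output and read the token and CA hash back from it. The sketch below assumes the output was saved to a file (for example with `tee`); `extract_token` and `extract_ca_hash` are hypothetical helpers, and the demo input is the join command shown above.

```shell
#!/bin/sh
# Sketch: pull the join token and CA cert hash out of saved `kubeadm init` output.
# Assumes the output was saved to a file, e.g. `kubeadm init ... | tee init.log`.
extract_token() {
    sed -n 's/.*--token \([^ ]*\).*/\1/p' "$1" | head -n 1
}
extract_ca_hash() {
    sed -n 's/.*--discovery-token-ca-cert-hash \(sha256:[0-9a-f]*\).*/\1/p' "$1" | head -n 1
}

# Demo on the join command from this walkthrough.
log=$(mktemp)
cat > "$log" <<'EOF'
kubeadm join 172.25.75.2:6443 --token 9268ul.sxnr897afw221hb3 \
    --discovery-token-ca-cert-hash sha256:784bf5e7aac4fb6998f8ff5a40a5e42871cddb5921188c42ad2cd0e0195b97c0
EOF
echo "token: $(extract_token "$log")"
echo "hash:  $(extract_ca_hash "$log")"
```

Bootstrap tokens expire after 24 hours by default. If the output was lost or the token has expired, running `kubeadm token create --print-join-command` on the master prints a fresh join command.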

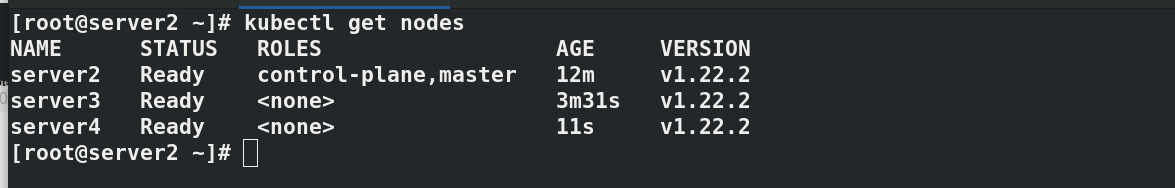

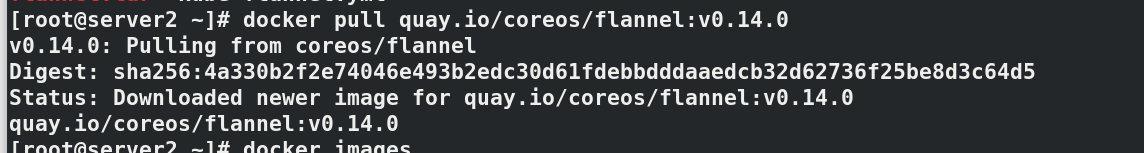

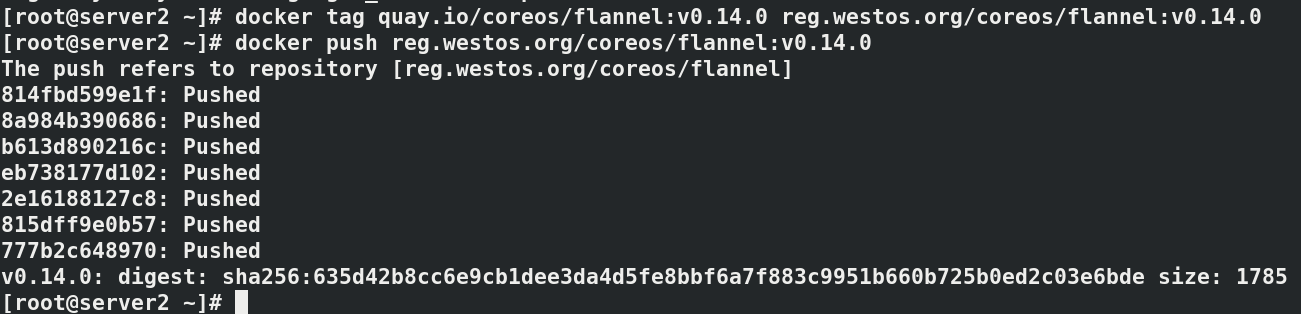

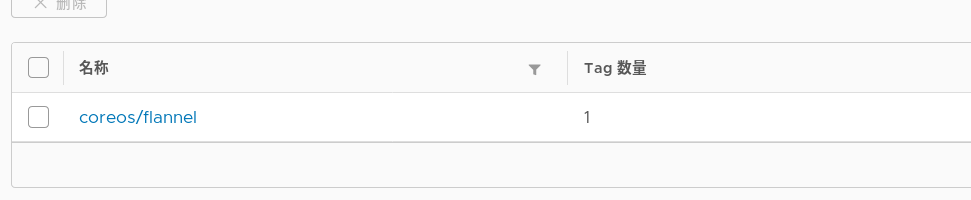

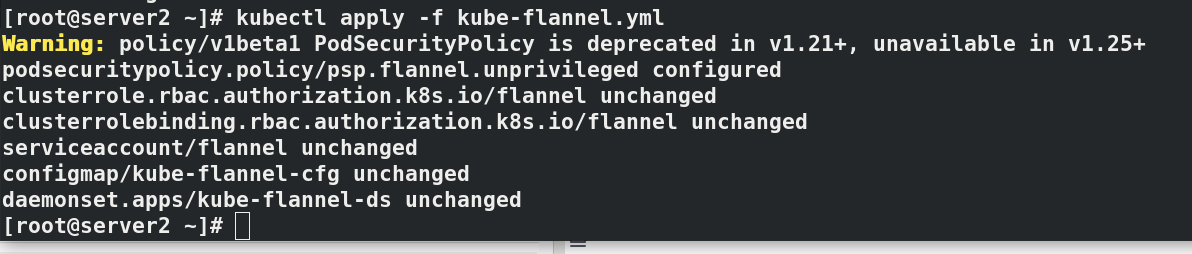

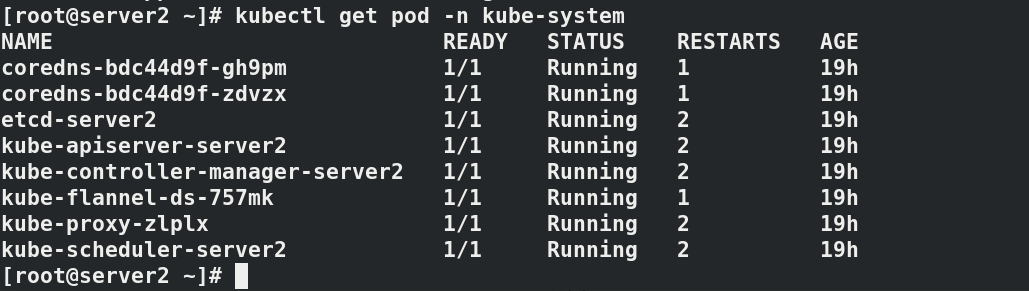

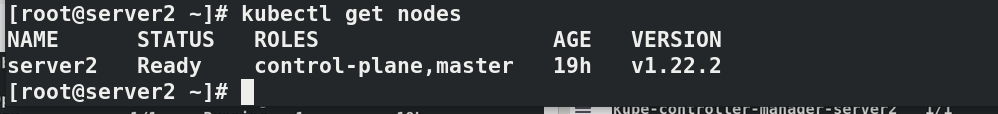

#Install the flannel network add-on

yum install wget -y

wget https://raw.githubusercontent.com/coreos/flannel/master/Documentation/kube-flannel.yml

kubectl apply -f kube-flannel.yml

#Configure kubectl command completion

echo "source <(kubectl completion bash)" >> ~/.bashrc

source ~/.bashrc

docker pull quay.io/coreos/flannel:v0.14.0

docker tag quay.io/coreos/flannel:v0.14.0 reg.westos.org/coreos/flannel:v0.14.0

docker push reg.westos.org/coreos/flannel:v0.14.0

kubectl get pod -n kube-system #All pods are running

kubectl get nodes #server2 is in the Ready state

![image](https://img-blog.csdnimg.cn/46210e628285408f989e4e48384a5b17.png)

#On server3 and server4, paste the join command printed when the master node (server2) was initialized

kubeadm join 172.25.75.2:6443 --token 9268ul.sxnr897afw221hb3 \
--discovery-token-ca-cert-hash sha256:784bf5e7aac4fb6998f8ff5a40a5e42871cddb5921188c42ad2cd0e0195b97c0

#In server2

kubectl get node
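After the workers join, the final `kubectl get node` should eventually show every node as Ready. As a rough sketch, the check can be reduced to a filter that prints only the nodes that are not Ready yet. `not_ready_nodes` is a hypothetical helper, and the sample input is illustrative only: the node names follow this walkthrough, while the roles, ages, and versions are made up.

```shell
#!/bin/sh
# Sketch: list nodes whose STATUS column is not exactly "Ready".
# Reads `kubectl get node --no-headers` style text on stdin.
not_ready_nodes() {
    awk '$2 != "Ready" { print $1 }'
}

# Illustrative input only; names follow this walkthrough, the rest is made up.
printf '%s\n' \
    'server2 Ready control-plane,master 10m v0.0.0' \
    'server3 NotReady <none> 1m v0.0.0' \
    | not_ready_nodes

# On the master: kubectl get node --no-headers | not_ready_nodes
```

An empty result means all nodes have reached the Ready state.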