Cluster Resource and Role Planning

| node1 | node2 | node3 | node4 | node5 |
|---|---|---|---|---|
| zookeeper | zookeeper | zookeeper | | |
| nn1 | nn2 | | | |
| datanode | datanode | datanode | datanode | datanode |
| journal | journal | journal | | |
| rm1 | rm2 | | | |
| nodemanager | nodemanager | nodemanager | nodemanager | nodemanager |
| HMaster | HMaster | | | |
| | | HRegionServer | HRegionServer | HRegionServer |

1. Edit the hbase-env.sh file

#!/usr/bin/env bash

#

#/**

# * Licensed to the Apache Software Foundation (ASF) under one

# * or more contributor license agreements. See the NOTICE file

# * distributed with this work for additional information

# * regarding copyright ownership. The ASF licenses this file

# * to you under the Apache License, Version 2.0 (the

# * "License"); you may not use this file except in compliance

# * with the License. You may obtain a copy of the License at

# *

# * http://www.apache.org/licenses/LICENSE-2.0

# *

# * Unless required by applicable law or agreed to in writing, software

# * distributed under the License is distributed on an "AS IS" BASIS,

# * WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

# * See the License for the specific language governing permissions and

# * limitations under the License.

# */

# Set environment variables here.

# This script sets variables multiple times over the course of starting an hbase process,

# so try to keep things idempotent unless you want to take an even deeper look

# into the startup scripts (bin/hbase, etc.)

# The java implementation to use. Java 1.8+ required.

export JAVA_HOME=/usr/local/java

# Extra Java CLASSPATH elements. Optional.

# export HBASE_CLASSPATH=

# The maximum amount of heap to use. Default is left to JVM default.

export HBASE_HEAPSIZE=2G

# Uncomment below if you intend to use off heap cache. For example, to allocate 8G of

# offheap, set the value to "8G".

# export HBASE_OFFHEAPSIZE=1G

# Extra Java runtime options.

# Below are what we set by default. May only work with SUN JVM.

# For more on why as well as other possible settings,

# see http://hbase.apache.org/book.html#performance

export HBASE_OPTS="$HBASE_OPTS -XX:+UseConcMarkSweepGC"

# Uncomment one of the below three options to enable java garbage collection logging for the server-side processes.

# This enables basic gc logging to the .out file.

# export SERVER_GC_OPTS="-verbose:gc -XX:+PrintGCDetails -XX:+PrintGCDateStamps"

# This enables basic gc logging to its own file.

# If FILE-PATH is not replaced, the log file(.gc) would still be generated in the HBASE_LOG_DIR .

# export SERVER_GC_OPTS="-verbose:gc -XX:+PrintGCDetails -XX:+PrintGCDateStamps -Xloggc:<FILE-PATH>"

# This enables basic GC logging to its own file with automatic log rolling. Only applies to jdk 1.6.0_34+ and 1.7.0_2+.

# If FILE-PATH is not replaced, the log file(.gc) would still be generated in the HBASE_LOG_DIR .

# export SERVER_GC_OPTS="-verbose:gc -XX:+PrintGCDetails -XX:+PrintGCDateStamps -Xloggc:<FILE-PATH> -XX:+UseGCLogFileRotation -XX:NumberOfGCLogFiles=1 -XX:GCLogFileSize=512M"

# Uncomment one of the below three options to enable java garbage collection logging for the client processes.

# This enables basic gc logging to the .out file.

# export CLIENT_GC_OPTS="-verbose:gc -XX:+PrintGCDetails -XX:+PrintGCDateStamps"

# This enables basic gc logging to its own file.

# If FILE-PATH is not replaced, the log file(.gc) would still be generated in the HBASE_LOG_DIR .

# export CLIENT_GC_OPTS="-verbose:gc -XX:+PrintGCDetails -XX:+PrintGCDateStamps -Xloggc:<FILE-PATH>"

# This enables basic GC logging to its own file with automatic log rolling. Only applies to jdk 1.6.0_34+ and 1.7.0_2+.

# If FILE-PATH is not replaced, the log file(.gc) would still be generated in the HBASE_LOG_DIR .

# export CLIENT_GC_OPTS="-verbose:gc -XX:+PrintGCDetails -XX:+PrintGCDateStamps -Xloggc:<FILE-PATH> -XX:+UseGCLogFileRotation -XX:NumberOfGCLogFiles=1 -XX:GCLogFileSize=512M"

# See the package documentation for org.apache.hadoop.hbase.io.hfile for other configurations

# needed setting up off-heap block caching.

# Uncomment and adjust to enable JMX exporting

# See jmxremote.password and jmxremote.access in $JRE_HOME/lib/management to configure remote password access.

# More details at: http://java.sun.com/javase/6/docs/technotes/guides/management/agent.html

# NOTE: HBase provides an alternative JMX implementation to fix the random ports issue, please see JMX

# section in HBase Reference Guide for instructions.

# export HBASE_JMX_BASE="-Dcom.sun.management.jmxremote.ssl=false -Dcom.sun.management.jmxremote.authenticate=false"

# export HBASE_MASTER_OPTS="$HBASE_MASTER_OPTS $HBASE_JMX_BASE -Dcom.sun.management.jmxremote.port=10101"

# export HBASE_REGIONSERVER_OPTS="$HBASE_REGIONSERVER_OPTS $HBASE_JMX_BASE -Dcom.sun.management.jmxremote.port=10102"

# export HBASE_THRIFT_OPTS="$HBASE_THRIFT_OPTS $HBASE_JMX_BASE -Dcom.sun.management.jmxremote.port=10103"

# export HBASE_ZOOKEEPER_OPTS="$HBASE_ZOOKEEPER_OPTS $HBASE_JMX_BASE -Dcom.sun.management.jmxremote.port=10104"

# export HBASE_REST_OPTS="$HBASE_REST_OPTS $HBASE_JMX_BASE -Dcom.sun.management.jmxremote.port=10105"

# File naming hosts on which HRegionServers will run. $HBASE_HOME/conf/regionservers by default.

# export HBASE_REGIONSERVERS=${HBASE_HOME}/conf/regionservers

# Uncomment and adjust to keep all the Region Server pages mapped to be memory resident

#HBASE_REGIONSERVER_MLOCK=true

#HBASE_REGIONSERVER_UID="hbase"

# File naming hosts on which backup HMaster will run. $HBASE_HOME/conf/backup-masters by default.

# export HBASE_BACKUP_MASTERS=${HBASE_HOME}/conf/backup-masters

# Extra ssh options. Empty by default.

# export HBASE_SSH_OPTS="-o ConnectTimeout=1 -o SendEnv=HBASE_CONF_DIR"

# Where log files are stored. $HBASE_HOME/logs by default.

export HBASE_LOG_DIR=/home/hadoop/hbase-data/logs

export HBASE_MASTER_OPTS="-Xmx512m"

export HBASE_REGIONSERVER_OPTS="-Xmx1024m"

# Enable remote JDWP debugging of major HBase processes. Meant for Core Developers

# export HBASE_MASTER_OPTS="$HBASE_MASTER_OPTS -Xdebug -Xrunjdwp:transport=dt_socket,server=y,suspend=n,address=8070"

# export HBASE_REGIONSERVER_OPTS="$HBASE_REGIONSERVER_OPTS -Xdebug -Xrunjdwp:transport=dt_socket,server=y,suspend=n,address=8071"

# export HBASE_THRIFT_OPTS="$HBASE_THRIFT_OPTS -Xdebug -Xrunjdwp:transport=dt_socket,server=y,suspend=n,address=8072"

# export HBASE_ZOOKEEPER_OPTS="$HBASE_ZOOKEEPER_OPTS -Xdebug -Xrunjdwp:transport=dt_socket,server=y,suspend=n,address=8073"

# export HBASE_REST_OPTS="$HBASE_REST_OPTS -Xdebug -Xrunjdwp:transport=dt_socket,server=y,suspend=n,address=8074"

# A string representing this instance of hbase. $USER by default.

# export HBASE_IDENT_STRING=$USER

# The scheduling priority for daemon processes. See 'man nice'.

# export HBASE_NICENESS=10

# The directory where pid files are stored. /tmp by default.

export HBASE_PID_DIR=/home/hadoop/hbase-data/hbase-pids

# Seconds to sleep between slave commands. Unset by default. This

# can be useful in large clusters, where, e.g., slave rsyncs can

# otherwise arrive faster than the master can service them.

# export HBASE_SLAVE_SLEEP=0.1

# Tell HBase whether it should manage it's own instance of ZooKeeper or not.

export HBASE_MANAGES_ZK=false

# The default log rolling policy is RFA, where the log file is rolled as per the size defined for the

# RFA appender. Please refer to the log4j.properties file to see more details on this appender.

# In case one needs to do log rolling on a date change, one should set the environment property

# HBASE_ROOT_LOGGER to "<DESIRED_LOG LEVEL>,DRFA".

# For example:

# HBASE_ROOT_LOGGER=INFO,DRFA

# The reason for changing default to RFA is to avoid the boundary case of filling out disk space as

# DRFA doesn't put any cap on the log size. Please refer to HBase-5655 for more context.
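Most of the file above is commented-out template text, so it helps to see only the settings that are actually in effect. The following is a minimal sketch; `active_exports` is a hypothetical helper name, not part of HBase, and it assumes every active setting is written as an `export NAME=value` line.

```shell
#!/usr/bin/env bash
# List the settings that are active in an hbase-env.sh-style file,
# i.e. every export line that is not commented out.
# active_exports is a hypothetical helper, not an HBase command.
active_exports() {
  local env_file="$1"
  # Keep only lines that begin with "export NAME=" (optionally indented).
  grep -E '^[[:space:]]*export[[:space:]]+[A-Za-z_][A-Za-z0-9_]*=' "$env_file"
}
```

Running `active_exports /home/hadoop/hbase-2.1.3/conf/hbase-env.sh` should list only the uncommented exports, such as JAVA_HOME, HBASE_HEAPSIZE, HBASE_LOG_DIR, HBASE_PID_DIR and HBASE_MANAGES_ZK.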

2. Edit hbase-site.xml

<configuration>

<property>

<name>hbase.rootdir</name>

<value>hdfs://leo/hbase2</value>

</property>

<property>

<name>hbase.cluster.distributed</name>

<value>true</value>

<description>Whether the cluster runs in fully distributed mode</description>

</property>

<!-- HMaster RPC port -->

<property>

<name>hbase.master.port</name>

<value>16000</value>

</property>

<!-- HMaster web UI (HTTP) port -->

<property>

<name>hbase.master.info.port</name>

<value>16010</value>

</property>

<!-- Directory for HBase temporary (cache) files -->

<property>

<name>hbase.tmp.dir</name>

<value>/home/hadoop/hbase-data/tmp/</value>

</property>

<property>

<name>hbase.zookeeper.quorum</name>

<value>node3,node4,node5</value>

</property>

<property>

<name>hbase.zookeeper.property.clientPort</name>

<value>2181</value>

</property>

<property>

<name>hbase.zookeeper.property.dataDir</name>

<value>/home/hadoop/zookeeper-data/data</value>

<description>Property from zoo.cfg: the directory where ZooKeeper snapshots are stored</description>

</property>

<!-- The following are optimization (tuning) settings -->

<!-- Turn off Distributed Log Splitting -->

<property>

<name>hbase.master.distributed.log.splitting</name>

<value>false</value>

</property>

<!-- Number of rows the HBase client fetches per scanner RPC -->

<property>

<name>hbase.client.scanner.caching</name>

<value>2000</value>

</property>

<!-- Maximum HRegion file size before the region is split (10 GB) -->

<property>

<name>hbase.hregion.max.filesize</name>

<value>10737418240</value>

</property>

<!-- Maximum number of regions; beyond this limit no further splits take place -->

<property>

<name>hbase.regionserver.regionSplitLimit</name>

<value>2000</value>

</property>

<!-- Compaction starts once a Store holds more than this number of StoreFiles -->

<property>

<name>hbase.hstore.compactionThreshold</name>

<value>6</value>

</property>

<!-- When a Store's StoreFile count reaches this value, writes are blocked until compaction catches up -->

<property>

<name>hbase.hstore.blockingStoreFiles</name>

<value>14</value>

</property>

<!-- When a region's MemStore grows to this multiple of the flush size, all writes to it are blocked (self-protection) -->

<property>

<name>hbase.hregion.memstore.block.multiplier</name>

<value>20</value>

</property>

<!-- Sleep interval of server worker threads between checks for work, in milliseconds -->

<property>

<name>hbase.server.thread.wakefrequency</name>

<value>500</value>

</property>

<!-- Maximum number of concurrent ZooKeeper connections allowed from a single client -->

<property>

<name>hbase.zookeeper.property.maxClientCnxns</name>

<value>2000</value>

</property>

<!-- Tune the following memory fractions to your workload -->

<property>

<name>hbase.regionserver.global.memstore.size.lower.limit</name>

<value>0.3</value>

</property>

<property>

<name>hbase.regionserver.global.memstore.size</name>

<value>0.39</value>

</property>

<property>

<name>hfile.block.cache.size</name>

<value>0.4</value>

</property>

<!-- Number of RPC handler threads on each RegionServer -->

<property>

<name>hbase.regionserver.handler.count</name>

<value>300</value>

</property>

<!-- Maximum number of client retries -->

<property>

<name>hbase.client.retries.number</name>

<value>5</value>

</property>

<!-- Client pause between retries, in milliseconds -->

<property>

<name>hbase.client.pause</name>

<value>100</value>

</property>

<property>

<name>hbase.unsafe.stream.capability.enforce</name>

<value>false</value>

<description>Must be set to false in this fully distributed deployment</description>

</property>

</configuration>
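The MemStore and block cache fractions share the RegionServer heap. To my knowledge, HBase expects the two to add up to no more than 0.8; with the values above that is 0.39 + 0.4 = 0.79. The sketch below checks this on a configuration file. `get_property` and `check_heap_fractions` are hypothetical helper names, not part of HBase. The sketch assumes the block cache fraction is stored under `hfile.block.cache.size`, that each `<name>` is followed by its `<value>`, and that a missing key falls back to 0.4.

```shell
#!/usr/bin/env bash
# Print the <value> that follows a given <name> in a Hadoop-style XML file.
# Hypothetical helper; assumes <value> comes right after its <name>.
get_property() {
  local name="$1" file="$2"
  awk -v want="$name" '
    /<name>/  { n = $0; gsub(/.*<name>|<\/name>.*/, "", n); hit = (n == want) }
    /<value>/ && hit { v = $0; gsub(/.*<value>|<\/value>.*/, "", v); print v; exit }
  ' "$file"
}

# Check that the MemStore and block cache fractions fit into the heap together.
# Returns non-zero when the sum exceeds 0.8 (small tolerance for float rounding).
check_heap_fractions() {
  local file="$1" memstore cache
  memstore=$(get_property hbase.regionserver.global.memstore.size "$file")
  cache=$(get_property hfile.block.cache.size "$file")
  # Fall back to 0.4 (assumed default) when a key is not set in the file.
  awk -v m="${memstore:-0.4}" -v c="${cache:-0.4}" 'BEGIN {
    sum = m + c
    printf "memstore=%s blockcache=%s sum=%.2f\n", m, c, sum
    exit ((sum > 0.8 + 1e-9) ? 1 : 0)
  }'
}
```

A typical call would be `check_heap_fractions /home/hadoop/hbase-2.1.3/conf/hbase-site.xml`; a non-zero exit status means the two fractions should be lowered before starting the cluster.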

3. Configure the regionservers file (one hostname per line)

node3

node4

node5

4. Create a new backup-masters file and configure it

node2
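HBase's start scripts read these two files as plain host lists, one hostname per line. A minimal sketch for writing both files in one go follows; `write_host_files` is a hypothetical helper name, and the host names are taken from the steps above.

```shell
#!/usr/bin/env bash
# Write the regionservers and backup-masters files, one hostname per line.
# write_host_files is a hypothetical helper; $1 is the HBase conf directory.
write_host_files() {
  local conf_dir="$1"
  # RegionServer hosts, as listed in the regionservers step.
  printf '%s\n' node3 node4 node5 > "$conf_dir/regionservers"
  # Standby HMaster host, as listed in the backup-masters step.
  printf '%s\n' node2 > "$conf_dir/backup-masters"
}
```

For this installation the call would be `write_host_files /home/hadoop/hbase-2.1.3/conf`.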

5. Create the temporary (cache) file directory for HBase

mkdir -p /home/hadoop/hbase-data/tmp/

6. Create the log file directory for HBase

mkdir -p /home/hadoop/hbase-data/logs/

7. Create the pid file directory for HBase

mkdir -p /home/hadoop/hbase-data/hbase-pids/

8. Distribute HBase to the other nodes (repeat the command for node3, node4, and node5)

scp -r hbase-2.1.3/ hadoop@node2:/home/hadoop/hbase-2.1.3/

9. On each of the remaining cluster nodes, set the environment variables and create the required directories

export HBASE_HOME=/home/hadoop/hbase-2.1.3

export PATH=$PATH:$HBASE_HOME/bin
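The copy and the directory creation have to be repeated for every other node, which is easy to script. The sketch below is one possible approach, not the author's method: `run` and `distribute_hbase` are hypothetical helper names, and it assumes passwordless SSH for the `hadoop` user and that the distribution is unpacked at /home/hadoop/hbase-2.1.3 on the current node. By default it only prints the commands (`DRY_RUN=1`) so they can be reviewed first.

```shell
#!/usr/bin/env bash
# Echo a command in dry-run mode (the default), otherwise execute it.
run() {
  if [ "${DRY_RUN:-1}" = "1" ]; then
    echo "$*"
  else
    "$@"
  fi
}

# Copy HBase to each node given as an argument and create its data directories.
distribute_hbase() {
  local node
  for node in "$@"; do
    # Copy the unpacked distribution into the remote home directory.
    run scp -r /home/hadoop/hbase-2.1.3 "hadoop@${node}:/home/hadoop/"
    # Create the tmp, log, and pid directories referenced in the configuration.
    run ssh "hadoop@${node}" "mkdir -p /home/hadoop/hbase-data/tmp /home/hadoop/hbase-data/logs /home/hadoop/hbase-data/hbase-pids"
  done
}
```

Calling `distribute_hbase node2 node3 node4 node5` prints the planned commands; prefixing the call with `DRY_RUN=0` executes them. The environment variables still have to be added to each node's shell profile separately.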

10. Disable HBase's slf4j-log4j12-1.7.25.jar (by renaming it) to resolve the SLF4J binding conflict between HBase and Hadoop

mv /home/hadoop/hbase-2.1.3/lib/client-facing-thirdparty/slf4j-log4j12-1.7.25.jar /home/hadoop/hbase-2.1.3/lib/client-facing-thirdparty/slf4j-log4j12-1.7.25.jar.bk
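To confirm that no SLF4J log4j binding is still active on HBase's side, the lib directory can be searched for remaining jars. This is a minimal sketch; `find_slf4j_bindings` is a hypothetical helper name. Files renamed to `.jar.bk` are not reported because they no longer end in `.jar`.

```shell
#!/usr/bin/env bash
# List SLF4J log4j binding jars that are still active under a directory.
# find_slf4j_bindings is a hypothetical helper; $1 is the directory to search.
find_slf4j_bindings() {
  # Matches slf4j-log4j12-<version>.jar but not renamed *.jar.bk backups.
  find "$1" -type f -name 'slf4j-log4j12-*.jar'
}
```

After the rename, `find_slf4j_bindings /home/hadoop/hbase-2.1.3/lib` should print nothing.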

11. Integrate HDFS with HBase

HBase stores its data on HDFS, so copy Hadoop's hdfs-site.xml and core-site.xml into HBase's conf directory.

cp /home/hadoop/hadoop-2.7.4/etc/hadoop/hdfs-site.xml /home/hadoop/hbase-2.1.3/conf/

cp /home/hadoop/hadoop-2.7.4/etc/hadoop/core-site.xml /home/hadoop/hbase-2.1.3/conf/

12. Copy the htrace jar into HBase's lib directory

cp /home/hadoop/hbase-2.1.3/lib/client-facing-thirdparty/htrace-core-3.1.0-incubating.jar /home/hadoop/hbase-2.1.3/lib/

For the reason behind this step, see the article "HBase HMaster process java.lang.NoClassDefFoundError: org/apache/htrace/SamplerBuilder solution".

13. Start HBase

The HBase cluster spans five nodes. The node on which start-hbase.sh is run becomes the active HMaster.

Run the following command on node1:

start-hbase.sh

Use jps to view the related processes:

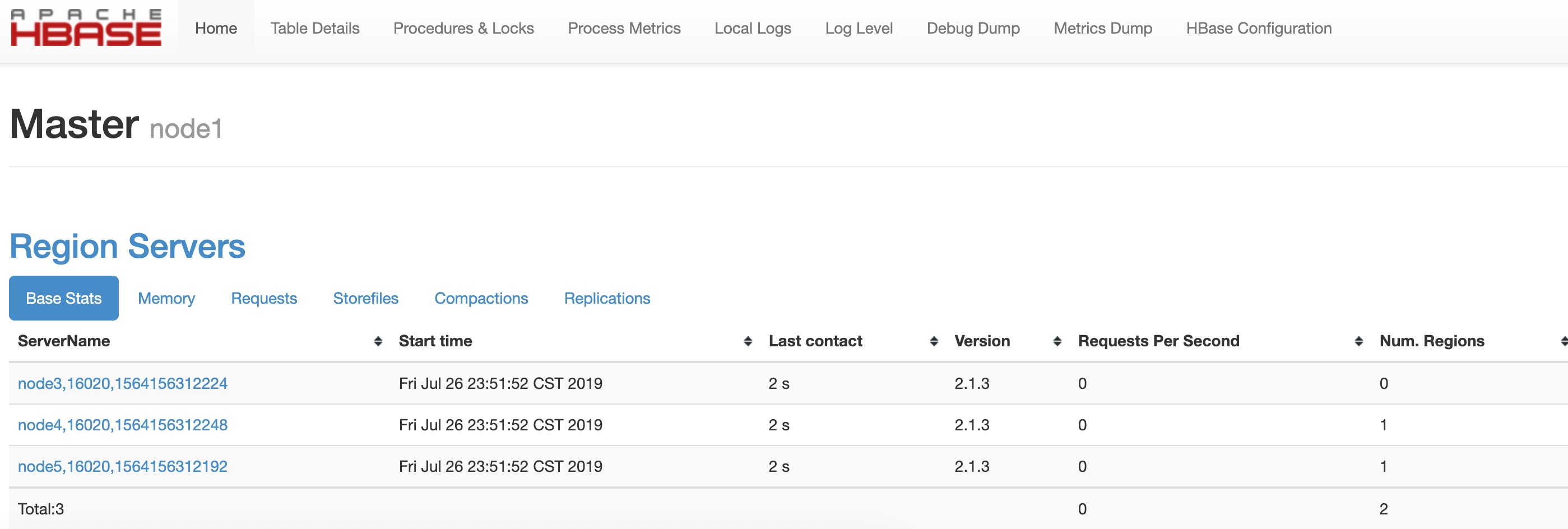
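The jps check can also be scripted so that a missing daemon is reported explicitly. The following is a minimal sketch; `check_daemons` is a hypothetical helper name that reads jps output on standard input and takes the expected process names as arguments.

```shell
#!/usr/bin/env bash
# Read jps output on stdin and report which expected daemons are missing.
# Returns non-zero if any of the names given as arguments is absent.
check_daemons() {
  local listing missing=0 name
  listing=$(cat)
  for name in "$@"; do
    # jps prints "<pid> <MainClass>"; compare the second field exactly.
    if ! printf '%s\n' "$listing" | awk -v n="$name" '$2 == n { found = 1 } END { exit !found }'; then
      echo "missing: $name"
      missing=1
    fi
  done
  return "$missing"
}
```

For example, `jps | check_daemons HMaster` could be run on node1 and node2, and `jps | check_daemons HRegionServer` on the RegionServer nodes.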

View the HBase cluster information in the web UI (the HMaster info port is 16010, as configured above):

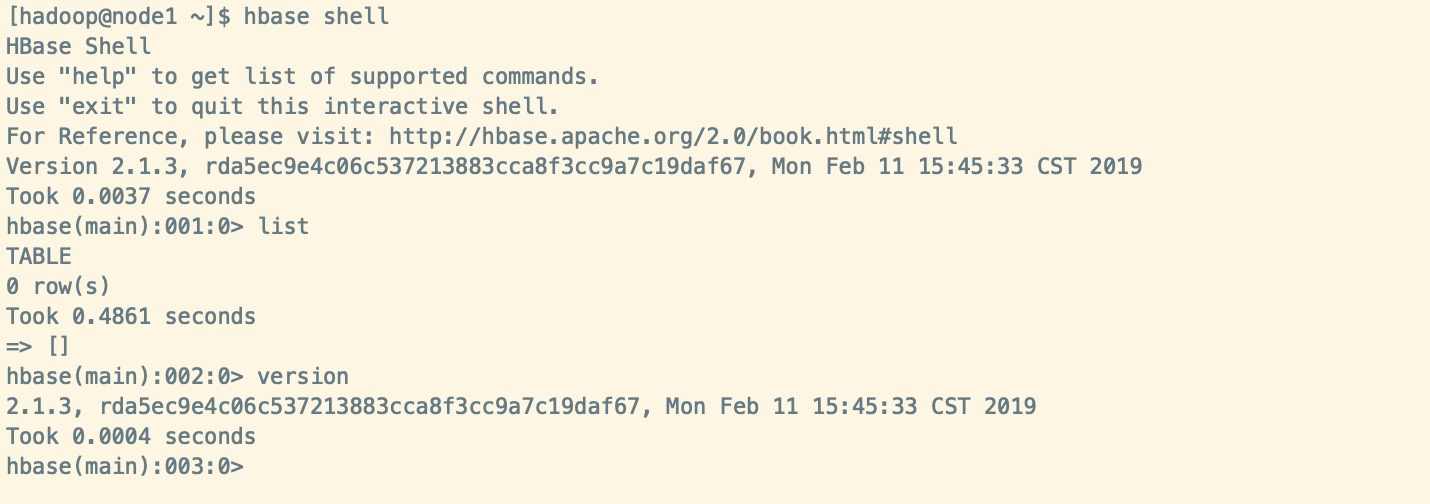

Open the HBase shell to verify the cluster:

hbase shell

Summary

This completes the build and configuration of a high-availability HBase cluster. For day-to-day usage and other operations, please refer to other documents.