Container network configuration of Docker

Creating namespaces in the Linux kernel

ip netns command

The ip netns command performs the various operations on a Network Namespace. It is provided by the iproute package, which most systems install by default; if it is missing, install it yourself.

Note: the ip netns command needs root privileges (for example via sudo) when it modifies the network configuration.

To see the available subcommands, run ip netns help:

[root@docker ~]# yum -y install iproute

[root@docker ~]# ip netns help

Usage: ip netns list

ip netns add NAME

ip netns attach NAME PID

ip netns set NAME NETNSID

ip [-all] netns delete [NAME]

ip netns identify [PID]

ip netns pids NAME

ip [-all] netns exec [NAME] cmd ...

ip netns monitor

ip netns list-id [target-nsid POSITIVE-INT] [nsid POSITIVE-INT]

NETNSID := auto | POSITIVE-INT

By default, a Linux system has no named Network Namespace, so the ip netns list command prints nothing.

Create a Network Namespace

Create a namespace named xym through the command:

[root@docker ~]# ip netns list

[root@docker ~]# ip netns add xym

[root@docker ~]# ip netns list

xym

The newly created Network Namespace appears in the /var/run/netns/ directory. If a namespace with the same name already exists, the command fails with the error Cannot create namespace file "/var/run/netns/xym": File exists.

[root@docker ~]# ls /var/run/netns/

xym

[root@docker ~]# ip netns add xym

Cannot create namespace file "/var/run/netns/xym": File exists
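Because ip netns add fails when the name is already taken, a script may want to check first. The following is a minimal sketch, assuming namespaces are registered as files under /var/run/netns as described above. The function names netns_exists and ensure_netns and the NETNS_DIR variable are hypothetical helpers introduced for illustration, not part of iproute.

```shell
#!/bin/sh
# Directory where ip netns registers named namespaces (overridable for testing).
NETNS_DIR="${NETNS_DIR:-/var/run/netns}"

# Succeeds if a namespace file with the given name exists.
netns_exists() {
    [ -e "$NETNS_DIR/$1" ]
}

# Creates the namespace only if it is not there yet (creation needs root).
ensure_netns() {
    if netns_exists "$1"; then
        echo "namespace $1 already exists"
    else
        ip netns add "$1"
    fi
}

# Harmless check that works without root:
if netns_exists xym; then
    echo "xym exists"
else
    echo "xym does not exist"
fi
```

Running ensure_netns xym as root would then create the namespace on the first call and only report it on later calls.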

Each Network Namespace has its own independent network interfaces, routing table, ARP table, iptables rules, and other network-related resources.

Operating on a Network Namespace

The ip command provides the ip netns exec subcommand, which runs a command inside the specified Network Namespace.

View the network card information of the newly created Network Namespace

[root@docker ~]# ip netns exec xym ip addr

1: lo: <LOOPBACK> mtu 65536 qdisc noop state DOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

You can see that a newly created Network Namespace contains a lo loopback interface by default, and that this interface is down. If you try to ping the loopback address at this point, you get the error Network is unreachable:

[root@docker ~]# ip netns exec xym ping 127.0.0.1

connect: Network is unreachable

Bring the lo loopback interface up with the following command:

[root@docker ~]# ip netns exec xym ip link set lo up

[root@docker ~]# ip netns exec xym ping 127.0.0.1

PING 127.0.0.1 (127.0.0.1) 56(84) bytes of data.

64 bytes from 127.0.0.1: icmp_seq=1 ttl=64 time=0.046 ms

64 bytes from 127.0.0.1: icmp_seq=2 ttl=64 time=0.025 ms

64 bytes from 127.0.0.1: icmp_seq=3 ttl=64 time=0.022 ms

64 bytes from 127.0.0.1: icmp_seq=4 ttl=64 time=0.027 ms

Transferring devices

We can transfer devices (such as veth) between different network namespaces. Since a device can belong to only one Network Namespace at a time, it is no longer visible in its original Network Namespace after the transfer.

Among them, veth devices are transferable devices, while many other devices (such as lo, vxlan, ppp, bridge, etc.) are not transferable.

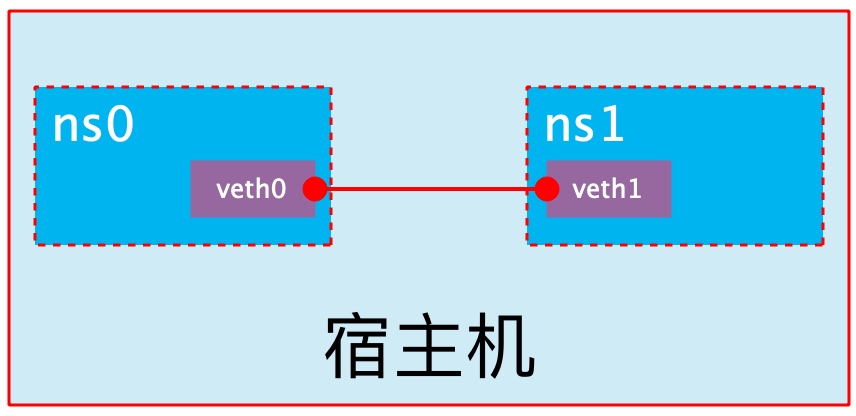

veth pair

veth pair stands for Virtual Ethernet Pair. It is a pair of connected ports: every packet that enters one end comes out of the other end, and vice versa.

The veth pair was introduced so that different network namespaces can communicate directly; it can be used to connect two network namespaces to each other.

[root@docker ~]# ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: ens33: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc fq_codel state UP group default qlen 1000

link/ether 00:0c:29:d1:aa:86 brd ff:ff:ff:ff:ff:ff

inet 192.168.200.150/24 brd 192.168.200.255 scope global dynamic noprefixroute ens33

valid_lft 1258sec preferred_lft 1258sec

inet6 fe80::3f7:eb08:5d5b:98f5/64 scope link noprefixroute

valid_lft forever preferred_lft forever

3: docker0: <NO-CARRIER,BROADCAST,MULTICAST,UP> mtu 1500 qdisc noqueue state DOWN group default

link/ether 02:42:63:35:ef:ca brd ff:ff:ff:ff:ff:ff

inet 172.17.0.1/16 brd 172.17.255.255 scope global docker0

valid_lft forever preferred_lft forever

[root@docker ~]# ip link add type veth

[root@docker ~]# ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: ens33: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc fq_codel state UP group default qlen 1000

link/ether 00:0c:29:d1:aa:86 brd ff:ff:ff:ff:ff:ff

inet 192.168.200.150/24 brd 192.168.200.255 scope global dynamic noprefixroute ens33

valid_lft 1237sec preferred_lft 1237sec

inet6 fe80::3f7:eb08:5d5b:98f5/64 scope link noprefixroute

valid_lft forever preferred_lft forever

3: docker0: <NO-CARRIER,BROADCAST,MULTICAST,UP> mtu 1500 qdisc noqueue state DOWN group default

link/ether 02:42:63:35:ef:ca brd ff:ff:ff:ff:ff:ff

inet 172.17.0.1/16 brd 172.17.255.255 scope global docker0

valid_lft forever preferred_lft forever

4: veth0@veth1: <BROADCAST,MULTICAST,M-DOWN> mtu 1500 qdisc noop state DOWN group default qlen 1000

link/ether 9a:3c:a3:f8:7c:b5 brd ff:ff:ff:ff:ff:ff

5: veth1@veth0: <BROADCAST,MULTICAST,M-DOWN> mtu 1500 qdisc noop state DOWN group default qlen 1000

link/ether 1a:58:1d:76:26:1c brd ff:ff:ff:ff:ff:ff

You can see that a veth pair has been added to the system, connecting the two virtual interfaces veth0 and veth1. At this point both ends of the pair are still in the "not enabled" (down) state.

Enable communication between network namespaces

Next, we use the veth pair to let two different network namespaces communicate. We have already created a Network Namespace named xym; now we create another one named linlu (with ip netns add linlu):

[root@docker ~]# ip netns list

linlu

xym

[root@docker ~]# ip netns exec xym ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

[root@docker ~]# ip netns exec linlu ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

Then we add veth0 to xym and veth1 to linlu

[root@docker ~]# ip link set veth0 netns xym

[root@docker ~]# ip netns exec xym ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

4: veth0@if5: <BROADCAST,MULTICAST> mtu 1500 qdisc noop state DOWN group default qlen 1000

link/ether 9a:3c:a3:f8:7c:b5 brd ff:ff:ff:ff:ff:ff link-netnsid 0

[root@docker ~]# ip link set veth1 netns linlu

[root@docker ~]# ip netns exec linlu ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

5: veth1@if4: <BROADCAST,MULTICAST> mtu 1500 qdisc noop state DOWN group default qlen 1000

link/ether 1a:58:1d:76:26:1c brd ff:ff:ff:ff:ff:ff link-netns xym

Then we bring both ends of the veth pair up and assign an IP address to each:

[root@docker ~]# ip netns exec xym ip link set veth0 up

[root@docker ~]# ip netns exec xym ip addr add 192.168.200.180/24 dev veth0

[root@docker ~]# ip netns exec xym ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

4: veth0@if5: <NO-CARRIER,BROADCAST,MULTICAST,UP> mtu 1500 qdisc noqueue state LOWERLAYERDOWN group default qlen 1000

link/ether 9a:3c:a3:f8:7c:b5 brd ff:ff:ff:ff:ff:ff link-netns linlu

inet 192.168.200.180/24 scope global veth0

valid_lft forever preferred_lft forever

[root@docker ~]# ip netns exec linlu ip link set veth1 up

[root@docker ~]# ip netns exec linlu ip addr add 192.168.200.190/24 dev veth1

[root@docker ~]# ip netns exec linlu ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

5: veth1@if4: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue state UP group default qlen 1000

link/ether 1a:58:1d:76:26:1c brd ff:ff:ff:ff:ff:ff link-netns xym

inet 192.168.200.190/24 scope global veth1

valid_lft forever preferred_lft forever

inet6 fe80::1858:1dff:fe76:261c/64 scope link

valid_lft forever preferred_lft forever

As shown above, the veth pair is now enabled and each veth device has its IP address. Now we try to reach the address in linlu from xym:

[root@docker ~]# ip netns exec xym ping 192.168.200.190

PING 192.168.200.190 (192.168.200.190) 56(84) bytes of data.

64 bytes from 192.168.200.190: icmp_seq=1 ttl=64 time=0.038 ms

64 bytes from 192.168.200.190: icmp_seq=2 ttl=64 time=0.025 ms

It can be seen that veth pair successfully realizes the network interaction between two different network namespaces.
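The steps of this walkthrough can be collected into one script. The sketch below is an illustration under assumptions, not a definitive setup: the run helper, the DRY_RUN variable, and the connect_namespaces function are hypothetical names, and the pair is created with explicit names (ip link add veth0 type veth peer name veth1) instead of relying on automatic naming. By default it only prints the commands; running it with DRY_RUN=0 as root would execute them.

```shell
#!/bin/sh
# DRY_RUN=1 (default) prints each command instead of executing it.
DRY_RUN="${DRY_RUN:-1}"

run() {
    if [ "$DRY_RUN" = "1" ]; then
        echo "$*"
    else
        "$@"
    fi
}

# Connect two namespaces with a veth pair and give each end an address.
# Arguments: namespace A, address A (CIDR), namespace B, address B (CIDR).
connect_namespaces() {
    ns_a="$1"; addr_a="$2"; ns_b="$3"; addr_b="$4"
    run ip netns add "$ns_a"
    run ip netns add "$ns_b"
    # Create both ends of the pair in the current namespace.
    run ip link add veth0 type veth peer name veth1
    # Move one end into each namespace.
    run ip link set veth0 netns "$ns_a"
    run ip link set veth1 netns "$ns_b"
    # Assign addresses, then bring both ends up.
    run ip netns exec "$ns_a" ip addr add "$addr_a" dev veth0
    run ip netns exec "$ns_b" ip addr add "$addr_b" dev veth1
    run ip netns exec "$ns_a" ip link set veth0 up
    run ip netns exec "$ns_b" ip link set veth1 up
}

connect_namespaces xym 192.168.200.180/24 linlu 192.168.200.190/24
```

After a real run, ip netns exec xym ping 192.168.200.190 should behave as in the transcript above.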

veth device rename

[root@docker ~]# ip netns exec xym ip link set veth0 down

[root@docker ~]# ip netns exec xym ip link set dev veth0 name eth0

[root@docker ~]# ip netns exec xym ip link set eth0 up

[root@docker ~]# ip netns exec xym ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

4: eth0@if5: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue state UP group default qlen 1000

link/ether 9a:3c:a3:f8:7c:b5 brd ff:ff:ff:ff:ff:ff link-netns linlu

inet 192.168.200.180/24 scope global eth0

valid_lft forever preferred_lft forever

inet6 fe80::983c:a3ff:fef8:7cb5/64 scope link

valid_lft forever preferred_lft forever

Four network mode configurations

bridge mode configuration

[root@docker ~]# docker run -it --name nginx --rm linlusama/centos-nginx:v1.20.1 /bin/sh

sh-4.4# ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

6: eth0@if7: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue state UP group default

link/ether 02:42:ac:11:00:02 brd ff:ff:ff:ff:ff:ff link-netnsid 0

inet 172.17.0.2/16 brd 172.17.255.255 scope global eth0

valid_lft forever preferred_lft forever

sh-4.4# exit

exit

[root@docker ~]# docker container ls -a

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

# Adding --network bridge when creating a container has the same effect as omitting the --network option

[root@docker ~]# docker run -it --name nginx --rm --network bridge linlusama/centos-nginx:v1.20.1 /bin/sh

sh-4.4# ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

8: eth0@if9: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue state UP group default

link/ether 02:42:ac:11:00:02 brd ff:ff:ff:ff:ff:ff link-netnsid 0

inet 172.17.0.2/16 brd 172.17.255.255 scope global eth0

valid_lft forever preferred_lft forever

none mode configuration

[root@docker ~]# docker run -it --name nginx --rm --network none linlusama/centos-nginx:v1.20.1 /bin/sh

sh-4.4# ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

container mode configuration

Start the first container

[root@docker ~]# docker run -it --name nginx1 --rm linlusama/centos-nginx:v1.20.1 /bin/sh

sh-4.4# ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

10: eth0@if11: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue state UP group default

link/ether 02:42:ac:11:00:02 brd ff:ff:ff:ff:ff:ff link-netnsid 0

inet 172.17.0.2/16 brd 172.17.255.255 scope global eth0

valid_lft forever preferred_lft forever

Start the second container

[root@docker ~]# docker run -it --name nginx2 --rm --network container:nginx1 linlusama/centos-nginx:v1.20.1 /bin/sh

sh-4.4# ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

10: eth0@if11: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue state UP group default

link/ether 02:42:ac:11:00:02 brd ff:ff:ff:ff:ff:ff link-netnsid 0

inet 172.17.0.2/16 brd 172.17.255.255 scope global eth0

valid_lft forever preferred_lft forever

At this point, create a directory in the nginx1 container:

sh-4.4# mkdir /tmp/xym

sh-4.4# ls /tmp/

ks-script-4luisyla ks-script-o23i7rc2 ks-script-x6ei4wuu xym

Check the /tmp directory in the nginx2 container and you will find that the directory is not there, because the two containers share only the network; their file systems remain isolated:

sh-4.4# ls /tmp/

ks-script-4luisyla ks-script-o23i7rc2 ks-script-x6ei4wuu

Deploy a site in the nginx2 container:

sh-4.4# mkdir -p /var/www/html

sh-4.4# echo "I am Xu Meng's father" > /var/www/html/index.html

sh-4.4# /bin/httpd -f -h /var/www/html

Use the local address in the nginx1 container to access this site:

sh-4.4# wget -qO - 127.0.0.1

I am Xu Meng's father

This shows that, as far as networking is concerned, containers in container mode relate to each other like two different processes on the same host.

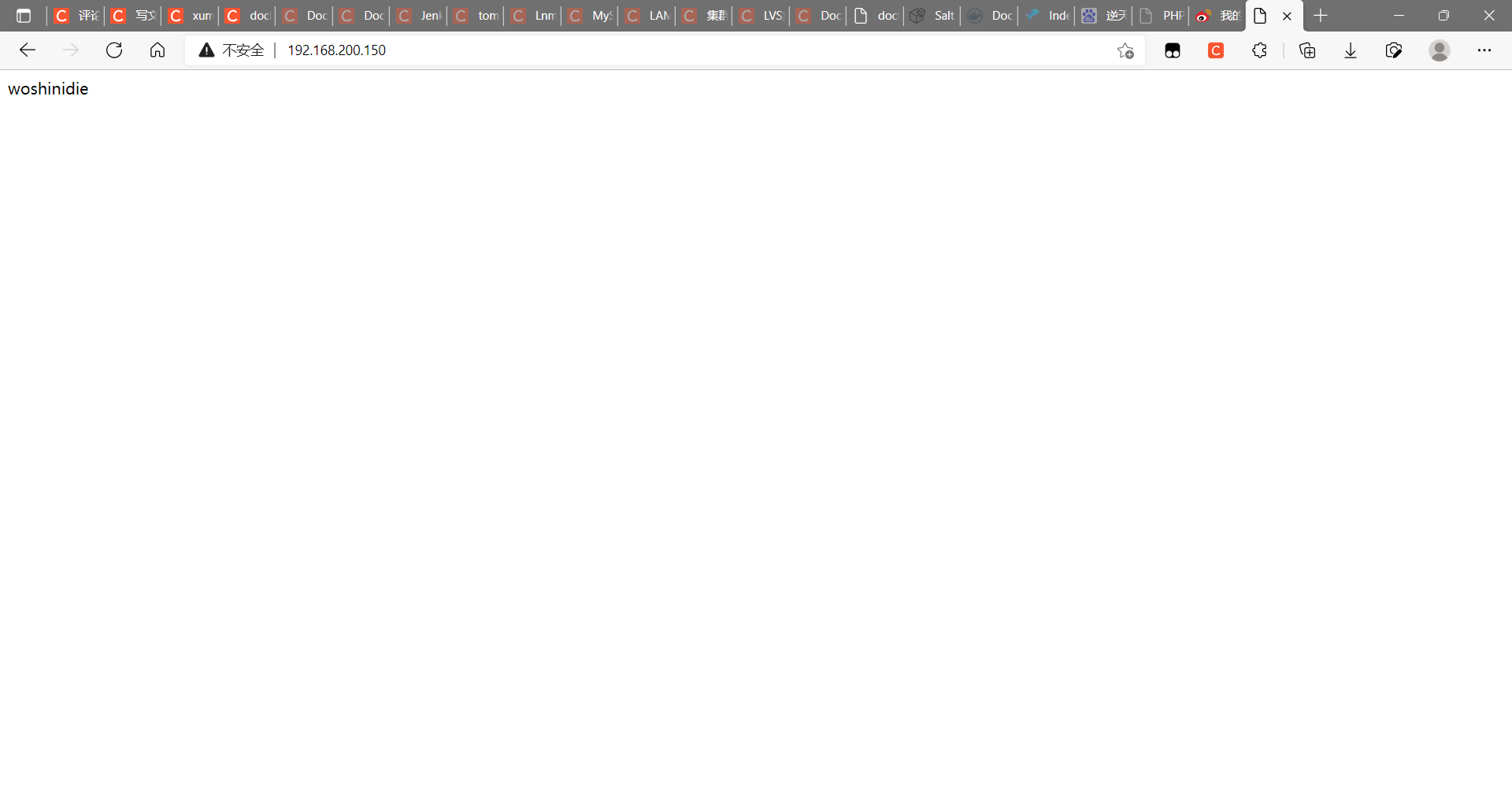

host mode configuration

Directly indicate that the mode is host when starting the container

[root@docker ~]# docker run -it --name nginx --rm --network host linlusama/centos-nginx:v1.20.1 /bin/sh

sh-4.4# ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: ens33: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc fq_codel state UP group default qlen 1000

link/ether 00:0c:29:d1:aa:86 brd ff:ff:ff:ff:ff:ff

inet 192.168.200.150/24 brd 192.168.200.255 scope global dynamic noprefixroute ens33

valid_lft 1158sec preferred_lft 1158sec

inet6 fe80::3f7:eb08:5d5b:98f5/64 scope link noprefixroute

valid_lft forever preferred_lft forever

3: docker0: <NO-CARRIER,BROADCAST,MULTICAST,UP> mtu 1500 qdisc noqueue state DOWN group default

link/ether 02:42:63:35:ef:ca brd ff:ff:ff:ff:ff:ff

inet 172.17.0.1/16 brd 172.17.255.255 scope global docker0

valid_lft forever preferred_lft forever

inet6 fe80::42:63ff:fe35:efca/64 scope link

valid_lft forever preferred_lft forever

At this point, if we start an http site in this container, we can directly access the site in this container in the browser with the IP of the host.

sh-4.4# echo "woshinidie" > /usr/local/nginx/html/index.html

sh-4.4# /usr/local/nginx/sbin/nginx

sh-4.4# ss -antl

State Recv-Q Send-Q Local Address:Port Peer Address:Port Process

LISTEN 0 25 0.0.0.0:514 0.0.0.0:*

LISTEN 0 128 0.0.0.0:80 0.0.0.0:*

LISTEN 0 128 0.0.0.0:22 0.0.0.0:*

LISTEN 0 25 [::]:514 [::]:*

LISTEN 0 128 [::]:22 [::]:*

Common operations of containers

View the host name of the container

[root@docker ~]# docker run -it --name nginx -p 80:80 linlusama/centos-nginx:v1.20.2 /bin/bash

[root@b16c5957d542 /]# hostname

b16c5957d542

Set the hostname when the container starts

[root@docker ~]# docker run -it --rm --hostname node1 centos /bin/bash

[root@node1 /]# cat /etc/hosts

127.0.0.1 localhost

::1 localhost ip6-localhost ip6-loopback

fe00::0 ip6-localnet

ff00::0 ip6-mcastprefix

ff02::1 ip6-allnodes

ff02::2 ip6-allrouters

172.17.0.3 node1

# The DNS configuration is taken from the host machine

[root@node1 /]# cat /etc/resolv.conf

# Generated by NetworkManager

search localdomain

nameserver 192.168.200.2

[root@node1 /]# ping www.baidu.com

PING www.a.shifen.com (36.152.44.95) 56(84) bytes of data.

64 bytes from localhost (36.152.44.95): icmp_seq=1 ttl=127 time=44.4 ms

64 bytes from localhost (36.152.44.95): icmp_seq=2 ttl=127 time=43.5 ms

Manually specify the DNS to be used by the container

[root@docker ~]# docker run -it --rm --hostname node1 --dns 8.8.8.8 centos /bin/bash

[root@node1 /]# cat /etc/resolv.conf

search localdomain

nameserver 8.8.8.8

Manually inject a hostname-to-IP-address mapping into the /etc/hosts file

[root@docker ~]# docker run -it --rm --hostname node1 --add-host node2:192.168.200.145 centos /bin/bash

[root@node1 /]# cat /etc/hosts

127.0.0.1 localhost

::1 localhost ip6-localhost ip6-loopback

fe00::0 ip6-localnet

ff00::0 ip6-mcastprefix

ff02::1 ip6-allnodes

ff02::2 ip6-allrouters

192.168.200.145 node2

172.17.0.3 node1

Open container port

When running docker run, the -p option maps an application port in the container to the host, so that external hosts can reach the application in the container through a port on the host.

The -p option can be given multiple times; each port it exposes must be a port the container is actually listening on.

Forms of the -p option:

- -p <containerPort>

  - Maps the specified container port to a dynamic port on all addresses of the host

- -p <hostPort>:<containerPort>

  - Maps the container port to the specified host port

- -p <ip>::<containerPort>

  - Maps the specified container port to a dynamic port on the specified host IP

- -p <ip>:<hostPort>:<containerPort>

  - Maps the specified container port to the specified port on the specified host IP

Dynamic ports refer to random ports. The specific mapping results can be viewed using the docker port command.
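To make the forms of the -p option easier to tell apart, the sketch below splits a publish spec into its host IP, host port, and container port. It is an illustration only: parse_publish is a hypothetical helper, not a Docker command, and it deliberately ignores IPv6 addresses, port ranges, and protocol suffixes such as /tcp.

```shell
#!/bin/sh
# Split a -p argument into its parts and print them.
# An empty IP means "all host addresses"; an empty host port means "dynamic".
parse_publish() {
    spec="$1"
    case "$spec" in
        *:*:*)  # ip:hostPort:containerPort or ip::containerPort
            ip="${spec%%:*}"
            rest="${spec#*:}"
            host_port="${rest%%:*}"
            container_port="${rest#*:}"
            ;;
        *:*)    # hostPort:containerPort
            ip=""
            host_port="${spec%%:*}"
            container_port="${spec#*:}"
            ;;
        *)      # containerPort only
            ip=""
            host_port=""
            container_port="$spec"
            ;;
    esac
    echo "ip=${ip:-all} host_port=${host_port:-dynamic} container_port=$container_port"
}

# The three specs used in this article:
parse_publish 80
parse_publish 192.168.200.150::80
parse_publish 8080:80
```

For a running container, docker port remains the authoritative way to see which host port was actually chosen.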

[root@docker ~]# docker run -it --rm --name nginx -p 80 linlusama/centos-nginx:v1.20.2 /bin/bash

After the above command is executed, it stays in the foreground. Open a new terminal connection to see which host port the container's port 80 is mapped to:

[root@docker ~]# docker container ls

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

286002a8316b linlusama/centos-nginx:v1.20.2 "/bin/bash" About a minute ago Up About a minute 0.0.0.0:49153->80/tcp, :::49153->80/tcp nginx

[root@docker ~]# ss -antl

State Recv-Q Send-Q Local Address:Port Peer Address:Port Process

LISTEN 0 128 0.0.0.0:49153 0.0.0.0:*

LISTEN 0 25 0.0.0.0:514 0.0.0.0:*

LISTEN 0 128 0.0.0.0:22 0.0.0.0:*

LISTEN 0 128 [::]:49153 [::]:*

LISTEN 0 25 [::]:514 [::]:*

LISTEN 0 128 [::]:22 [::]:*

It can be seen that port 80 of the container is exposed to port 49153 of the host. At this time, we can access this port on the host to see if we can access the sites in the container

[root@docker ~]# curl http://127.0.0.1:49153

<!DOCTYPE html>

<html>

<head>

<title>Welcome to nginx!</title>

<style>

body {

width: 35em;

margin: 0 auto;

font-family: Tahoma, Verdana, Arial, sans-serif;

}

</style>

</head>

<body>

<h1>Welcome to nginx!</h1>

<p>If you see this page, the nginx web server is successfully installed and

working. Further configuration is required.</p>

<p>For online documentation and support please refer to

<a href="http://nginx.org/">nginx.org</a>.<br/>

Commercial support is available at

<a href="http://nginx.com/">nginx.com</a>.</p>

<p><em>Thank you for using nginx.</em></p>

</body>

</html>

iptables firewall rules will be generated automatically with the creation of the container and deleted automatically with the deletion of the container.

Maps the container port to a random port of the specified IP

[root@docker ~]# docker run -itd --rm --name nginx -p 192.168.200.150::80 linlusama/centos-nginx:v1.20.1

e1d06b64f07ddf8625c3b473992a217bae3fb6f0e59ca5eceeb1a5e4f7537f6b

View the port mapping on another terminal

[root@docker ~]# docker container ls

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

e1d06b64f07d linlusama/centos-nginx:v1.20.1 "/usr/local/nginx/sb..." 3 seconds ago Up 2 seconds 192.168.200.150:49153->80/tcp nginx

[root@docker ~]# curl 192.168.200.150:49153

I'm Xu Meng, his father

Map the container port to the specified port of the host

[root@docker ~]# docker run -itd --rm --name nginx -p 8080:80 linlusama/centos-nginx:v1.20.1

87ba6e9db605786c198ce8cfc2772ec715465b8d5e455eed7d0cc6aad4597e2e

View the port mapping on another terminal

[root@docker ~]# docker container ls

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

87ba6e9db605 linlusama/centos-nginx:v1.20.1 "/usr/local/nginx/sb..." 3 seconds ago Up 2 seconds 0.0.0.0:8080->80/tcp, :::8080->80/tcp nginx

[root@docker ~]# ss -antl

State Recv-Q Send-Q Local Address:Port Peer Address:Port Process

LISTEN 0 25 0.0.0.0:514 0.0.0.0:*

LISTEN 0 128 0.0.0.0:8080 0.0.0.0:*

LISTEN 0 128 0.0.0.0:22 0.0.0.0:*

LISTEN 0 25 [::]:514 [::]:*

LISTEN 0 128 [::]:8080 [::]:*

LISTEN 0 128 [::]:22 [::]:*

[root@docker ~]# curl 192.168.200.150:8080

I'm Xu Meng, his father

Customizing the network attributes of the docker0 bridge

Official document related configuration

To customize the network attributes of the docker0 bridge, modify the /etc/docker/daemon.json configuration file:

[root@docker ~]# cat /etc/docker/daemon.json

{

"registry-mirrors": ["https://6yrl18rf.mirror.aliyuncs.com"],

"bip": "192.168.66.88/24"

}

[root@docker ~]# systemctl restart docker

[root@docker ~]# ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

inet6 ::1/128 scope host

valid_lft forever preferred_lft forever

2: ens33: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc fq_codel state UP group default qlen 1000

link/ether 00:0c:29:d1:aa:86 brd ff:ff:ff:ff:ff:ff

inet 192.168.200.150/24 brd 192.168.200.255 scope global dynamic noprefixroute ens33

valid_lft 1464sec preferred_lft 1464sec

inet6 fe80::3f7:eb08:5d5b:98f5/64 scope link noprefixroute

valid_lft forever preferred_lft forever

3: docker0: <NO-CARRIER,BROADCAST,MULTICAST,UP> mtu 1500 qdisc noqueue state DOWN group default

link/ether 02:42:63:35:ef:ca brd ff:ff:ff:ff:ff:ff

inet 192.168.66.88/24 brd 192.168.66.255 scope global docker0

valid_lft forever preferred_lft forever

inet6 fe80::42:63ff:fe35:efca/64 scope link

valid_lft forever preferred_lft forever

The core option is bip (bridge IP), which sets the IP address of the docker0 bridge itself. The other settings can be derived from this address.
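As a rough illustration of how another value follows from bip, the sketch below derives the network address from a bip value with plain shell arithmetic. The function name bip_network is hypothetical and not part of Docker; the sketch assumes that the bridge subnet is simply the network that contains the bip address, and it handles IPv4 only.

```shell
#!/bin/sh
# Print the network (in CIDR form) that contains the given bip address.
bip_network() {
    cidr="$1"
    addr="${cidr%/*}"      # e.g. 192.168.66.88
    prefix="${cidr#*/}"    # e.g. 24

    # Split the dotted address into four positional parameters.
    old_ifs="$IFS"
    IFS=.
    set -- $addr
    IFS="$old_ifs"

    ip_int=$(( ($1 << 24) | ($2 << 16) | ($3 << 8) | $4 ))
    mask=$(( (0xFFFFFFFF << (32 - prefix)) & 0xFFFFFFFF ))
    net=$(( ip_int & mask ))

    echo "$(( (net >> 24) & 255 )).$(( (net >> 16) & 255 )).$(( (net >> 8) & 255 )).$(( net & 255 ))/$prefix"
}

# The bip value configured above:
bip_network 192.168.66.88/24
```

With the bip from the configuration above, the containers on docker0 would be expected to receive addresses inside the printed network.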

docker remote connection

By default, the dockerd daemon listens only on a Unix socket (/var/run/docker.sock). To also listen on a TCP socket, modify the /etc/docker/daemon.json configuration file, add the following entry, and then restart the docker service:

"hosts": ["tcp://0.0.0.0:2375", "unix:///var/run/docker.sock"]

On the client, pass the -H (or --host) option to the docker command to specify which host's Docker daemon to control:

docker -H 192.168.10.145:2375 ps
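The same endpoint can also be supplied through the DOCKER_HOST environment variable instead of repeating -H on every command. The sketch below only builds the endpoint string; docker_endpoint is a hypothetical helper, and the address and port are the ones used in the example above.

```shell
#!/bin/sh
# Build the tcp:// endpoint string accepted by docker -H and DOCKER_HOST.
docker_endpoint() {
    host="$1"
    port="${2:-2375}"   # 2375 is the port configured in daemon.json above
    echo "tcp://${host}:${port}"
}

endpoint="$(docker_endpoint 192.168.10.145)"
echo "$endpoint"

# With a reachable daemon, either form would list its containers:
#   docker -H "$endpoint" ps
#   DOCKER_HOST="$endpoint" docker ps
```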

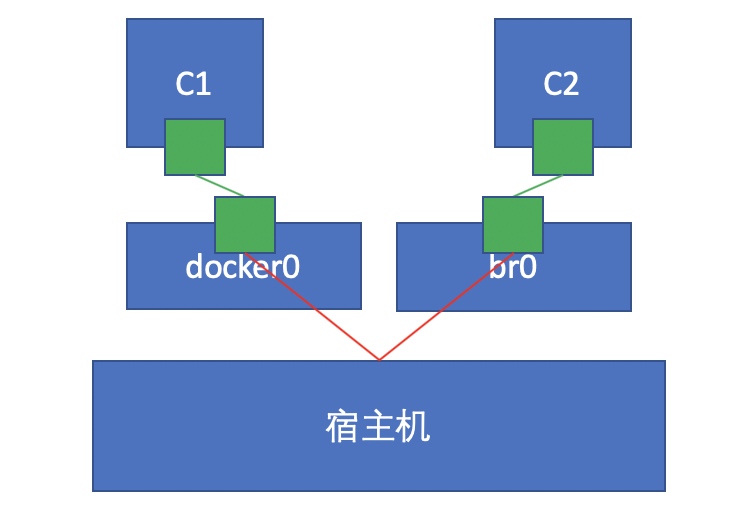

docker create custom bridge

Create an additional custom bridge, which is different from docker0

[root@docker ~]# docker network ls

NETWORK ID NAME DRIVER SCOPE

46a740510c53 bridge bridge local

e60478a9ce12 host host local

b1eca0cb44c6 none null local

[root@docker ~]# docker network create -d bridge --subnet "192.168.88.0/24" --gateway "192.168.88.1" br0

d972ef8d61498e25b29c9bb0b0978c9c9505e35d84158d308aa7790cc08942e5

[root@docker ~]# docker network ls

NETWORK ID NAME DRIVER SCOPE

d972ef8d6149 br0 bridge local

46a740510c53 bridge bridge local

e60478a9ce12 host host local

b1eca0cb44c6 none null local

Create a container using the newly created custom bridge:

[root@docker ~]# docker run -it --rm --name nginx --network br0 centos

[root@62554f569846 /]# ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

31: eth0@if32: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue state UP group default

link/ether 02:42:c0:a8:58:02 brd ff:ff:ff:ff:ff:ff link-netnsid 0

inet 192.168.88.2/24 brd 192.168.88.255 scope global eth0

valid_lft forever preferred_lft forever

Create another container and use the default bridge:

[root@docker ~]# docker run -it --rm --name nginx1 --network bridge centos

[root@b65b63b12508 /]# ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

33: eth0@if34: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue state UP group default

link/ether 02:42:c0:a8:42:01 brd ff:ff:ff:ff:ff:ff link-netnsid 0

inet 192.168.66.1/24 brd 192.168.66.255 scope global eth0

valid_lft forever preferred_lft forever

Can the nginx and nginx1 containers communicate with each other at this point? If not, how can we make them communicate?

They are attached to different bridges, so we connect the nginx1 container, which is on the default bridge, to the br0 bridge created above:

[root@docker ~]# docker container ls

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

0d42fbacaf65 centos "/bin/bash" 24 seconds ago Up 23 seconds nginx1

4e9457637bbd centos "/bin/bash" About a minute ago Up About a minute nginx

[root@docker ~]# docker network connect br0 0d42fbacaf65

From the nginx1 container, ping the nginx container:

[root@0d42fbacaf65 /]# ip a

1: lo: <LOOPBACK,UP,LOWER_UP> mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

37: eth0@if38: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue state UP group default

link/ether 02:42:c0:a8:42:01 brd ff:ff:ff:ff:ff:ff link-netnsid 0

inet 192.168.66.1/24 brd 192.168.66.255 scope global eth0

valid_lft forever preferred_lft forever

39: eth1@if40: <BROADCAST,MULTICAST,UP,LOWER_UP> mtu 1500 qdisc noqueue state UP group default

link/ether 02:42:c0:a8:58:03 brd ff:ff:ff:ff:ff:ff link-netnsid 0

inet 192.168.88.3/24 brd 192.168.88.255 scope global eth1

valid_lft forever preferred_lft forever

[root@0d42fbacaf65 /]# ping 192.168.88.2

PING 192.168.88.2 (192.168.88.2) 56(84) bytes of data.

64 bytes from 192.168.88.2: icmp_seq=1 ttl=64 time=0.103 ms

64 bytes from 192.168.88.2: icmp_seq=2 ttl=64 time=0.050 ms

64 bytes from 192.168.88.2: icmp_seq=3 ttl=64 time=0.073 ms

64 bytes from 192.168.88.2: icmp_seq=4 ttl=64 time=0.049 ms

64 bytes from 192.168.88.2: icmp_seq=5 ttl=64 time=0.051 ms

^C

--- 192.168.88.2 ping statistics ---

5 packets transmitted, 5 received, 0% packet loss, time 4134ms

rtt min/avg/max/mdev = 0.049/0.065/0.103/0.021 ms
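The custom bridge steps can be summarized in a short script. This is a sketch under assumptions rather than the exact session above: the run helper, DRY_RUN, and setup_bridged_pair are hypothetical names, the containers are started detached with sleep infinity so they stay alive, and the final ping uses the container name, relying on the name resolution Docker provides on user-defined bridge networks. By default the script only prints the commands; DRY_RUN=0 would execute them.

```shell
#!/bin/sh
# DRY_RUN=1 (default) prints each command instead of executing it.
DRY_RUN="${DRY_RUN:-1}"

run() {
    if [ "$DRY_RUN" = "1" ]; then
        echo "$*"
    else
        "$@"
    fi
}

setup_bridged_pair() {
    # Custom bridge with the subnet and gateway used in this article.
    run docker network create -d bridge --subnet 192.168.88.0/24 --gateway 192.168.88.1 br0
    # One container on br0, one on the default bridge.
    run docker run -d --name nginx --network br0 centos sleep infinity
    run docker run -d --name nginx1 --network bridge centos sleep infinity
    # Attach nginx1 to br0 as well, giving it a second interface.
    run docker network connect br0 nginx1
    # Both containers now share br0, so nginx1 can reach nginx.
    run docker exec nginx1 ping -c 3 nginx
}

setup_bridged_pair
```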