Building a Big Data Hadoop Cluster

1, Environment

Server configuration:

CPU model: Intel(R) Xeon(R) CPU E5-2620 v4 @ 2.10GHz

Logical CPUs: 16 (8 cores, 2 threads per core)

Memory: 64GB

Operating system:

Version: CentOS Linux release 7.5.1804 (Core)

Host list:

| IP | host name |

|---|---|

| 192.168.1.101 | node1 |

| 192.168.1.102 | node2 |

| 192.168.1.103 | node3 |

| 192.168.1.104 | node4 |

| 192.168.1.105 | node5 |

Installation package path: /data/tools/

JAVA_HOME path: /opt/java (java is a symlink pointing to the specific JDK version)

Hadoop cluster path: /data/bigdata/

Software version and deployment distribution:

| Component name | Installation package | Description | node1 | node2 | node3 | node4 | node5 |

|---|---|---|---|---|---|---|---|

| JDK | jdk-8u162-linux-x64.tar.gz | Basic environment | ✔ | ✔ | ✔ | ✔ | ✔ |

| zookeeper | zookeeper-3.4.12.tar.gz | | ✔ | ✔ | ✔ | | |

| Hadoop | hadoop-2.7.6.tar.gz | | ✔ | ✔ | ✔ | ✔ | ✔ |

| spark | spark-2.1.2-bin-hadoop2.7.tgz | | ✔ | ✔ | ✔ | ✔ | ✔ |

| scala | scala-2.11.12.tgz | | ✔ | ✔ | ✔ | ✔ | ✔ |

| hbase | hbase-1.2.6-bin.tar.gz | ✔ | ✔ | ✔ | |||

| hive | apache-hive-2.3.3-bin.tar.gz | ✔ | |||||

| kylin | apache-kylin-2.3.1-hbase1x-bin.tar.gz | ✔ | |||||

| kafka | kafka_2.11-1.1.0.tgz | ✔ | ✔ | ||||

| hue | hue-3.12.0.tgz | ✔ | |||||

| flume | apache-flume-1.8.0-bin.tar.gz | | ✔ | ✔ | ✔ | ✔ | ✔ |

Note: symlinks are not preserved when copied between servers; the copy follows the link and transfers the files or the entire directory it points to. Create each symlink separately on every server.

2, Common commands

1. View the basic system configuration

    [root@localhost ~]# uname -a
    Linux node1 3.10.0-123.9.3.el7.x86_64 #1 SMP Thu Nov 6 15:06:03 UTC 2014 x86_64 x86_64 x86_64 GNU/Linux
    [root@localhost ~]# cat /etc/redhat-release
    CentOS Linux release 7.5.1804 (Core)
    [root@localhost ~]# free -m
                 total       used       free     shared    buffers     cached
    Mem:         64267       2111      62156         16        212       1190
    -/+ buffers/cache:        708      63559
    Swap:        32000          0      32000
    [root@localhost ~]# lscpu
    Architecture:          x86_64
    CPU op-mode(s):        32-bit, 64-bit
    Byte Order:            Little Endian
    CPU(s):                16
    On-line CPU(s) list:   0-15
    Thread(s) per core:    2
    Core(s) per socket:    8
    Socket(s):             1
    NUMA node(s):          1
    Vendor ID:             GenuineIntel
    CPU family:            6
    Model:                 79
    Model name:            Intel(R) Xeon(R) CPU E5-2620 v4 @ 2.10GHz
    Stepping:              1
    CPU MHz:               2095.148
    BogoMIPS:              4190.29
    Hypervisor vendor:     KVM
    Virtualization type:   full
    L1d cache:             32K
    L1i cache:             32K
    L2 cache:              256K
    L3 cache:              20480K
    NUMA node0 CPU(s):     0-15
    [root@localhost ~]# df -h
    Filesystem      Size  Used Avail Use% Mounted on
    /dev/sda2       100G  3.1G   97G   4% /
    devtmpfs        7.7G     0  7.7G   0% /dev
    tmpfs           7.8G     0  7.8G   0% /dev/shm
    tmpfs           7.8G  233M  7.5G   3% /run
    tmpfs           7.8G     0  7.8G   0% /sys/fs/cgroup
    /dev/sda1       500M  9.8M  490M   2% /boot/efi
    /dev/sda4       1.8T  9.3G  1.8T   1% /data
    tmpfs           1.6G     0  1.6G   0% /run/user/1000

2. Start the cluster

    start-dfs.sh
    start-yarn.sh

3. Shut down the cluster

    stop-yarn.sh
    stop-dfs.sh

4. Monitor the cluster

    hdfs dfsadmin -report

5. Start or stop a single daemon

    hadoop-daemon.sh start|stop namenode|datanode|journalnode
    yarn-daemon.sh start|stop resourcemanager|nodemanager

3, Environment preparation (all servers)

1. Set the hostname (node1 shown; the other servers are similar)

    [root@localhost ~]# hostnamectl set-hostname node1

2. Stop firewalld, disable it at boot, and disable SELinux

    [root@node1 ~]# systemctl disable firewalld.service
    [root@node1 ~]# systemctl stop firewalld.service
    [root@node1 ~]# systemctl status firewalld.service
    ● firewalld.service - firewalld - dynamic firewall daemon
       Loaded: loaded (/usr/lib/systemd/system/firewalld.service; disabled; vendor preset: enabled)
       Active: inactive (dead)
         Docs: man:firewalld(1)
    [root@node1 ~]# setenforce 0
    [root@node1 ~]# sed -i 's#SELINUX=enforcing#SELINUX=disabled#g' /etc/selinux/config
    [root@node1 ~]# grep SELINUX=disabled /etc/selinux/config
    SELINUX=disabled
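The `grep` above only confirms that some line contains `SELINUX=disabled`. As a slightly stricter check, the sketch below prints the mode from the last uncommented `SELINUX=` line. The function name `selinux_mode` is hypothetical and not part of the original procedure.

```shell
#!/bin/sh
# Print the mode set by the last uncommented SELINUX= line of a config file.
# Usage: selinux_mode [path]   (path defaults to /etc/selinux/config)
selinux_mode() {
    config_file="${1:-/etc/selinux/config}"
    # "^SELINUX=" does not match commented lines or the SELINUXTYPE= line.
    sed -n 's/^SELINUX=\([a-z]*\).*$/\1/p' "$config_file" | tail -n 1
}
```

Running `selinux_mode` on each server after the `sed` edit should print `disabled` if the edit took effect.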

3. Modify the hosts file

    [root@node1 ~]# vim /etc/hosts
    #127.0.0.1   localhost localhost.localdomain localhost4 localhost4.localdomain4
    #::1         localhost localhost.localdomain localhost6 localhost6.localdomain6
    192.168.1.101 node1
    192.168.1.102 node2
    192.168.1.103 node3
    192.168.1.104 node4
    192.168.1.105 node5
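Because the host list follows a regular pattern (consecutive addresses, hostnames node1 to node5), the entries can also be generated instead of typed by hand. This is a minimal sketch under that assumption; `render_hosts` is a hypothetical helper name.

```shell
#!/bin/sh
# Print "IP hostname" pairs for node1..nodeN with consecutive addresses.
# Usage: render_hosts <network-prefix> <first-host-octet> <count>
render_hosts() {
    prefix="$1"; first="$2"; count="$3"
    i=0
    while [ "$i" -lt "$count" ]; do
        printf '%s.%d %s\n' "$prefix" "$((first + i))" "node$((i + 1))"
        i=$((i + 1))
    done
}
```

For this cluster, `render_hosts 192.168.1 101 5 >> /etc/hosts` would append the five entries shown above.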

4. Set up passwordless SSH login (this step can also be automated with Ansible)

    [root@node1 ~]# ssh-keygen -t dsa -P '' -f ~/.ssh/id_dsa
    [root@node1 ~]# cat ~/.ssh/id_dsa.pub >> ~/.ssh/authorized_keys
    [root@node1 ~]# ll -d .ssh/
    drwx------ 2 root root 4096 Jun  5 08:50 .ssh/
    [root@node1 ~]# ll .ssh/
    total 12
    -rw-r--r-- 1 root root 599 Jun  5 08:50 authorized_keys
    -rw------- 1 root root 672 Jun  5 08:50 id_dsa
    -rw-r--r-- 1 root root 599 Jun  5 08:50 id_dsa.pub
    # Append the contents of ~/.ssh/id_dsa.pub from the other servers to ~/.ssh/authorized_keys on node1, then distribute the file
    [root@node1 ~]# scp -rp ~/.ssh/authorized_keys node2:~/.ssh/
    [root@node1 ~]# scp -rp ~/.ssh/authorized_keys node3:~/.ssh/
    [root@node1 ~]# scp -rp ~/.ssh/authorized_keys node4:~/.ssh/
    [root@node1 ~]# scp -rp ~/.ssh/authorized_keys node5:~/.ssh/

Alternatively, generate a single key pair on node1 and distribute the whole ~/.ssh directory to the other servers, so that all nodes share one key.
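The four `scp` commands differ only in the target host, so they can be wrapped in a loop. The sketch below prints the commands by default and only runs them when `DRY_RUN=0` is set; both the `push_authorized_keys` name and the `DRY_RUN` switch are assumptions made for illustration.

```shell
#!/bin/sh
# Print (default) or run the scp that copies the local authorized_keys
# file to every host given as an argument.
# Usage: push_authorized_keys <host>...     (set DRY_RUN=0 to really copy)
push_authorized_keys() {
    for host in "$@"; do
        if [ "${DRY_RUN:-1}" = "1" ]; then
            echo "scp -p ~/.ssh/authorized_keys ${host}:~/.ssh/"
        else
            scp -p ~/.ssh/authorized_keys "${host}:~/.ssh/"
        fi
    done
}
```

`push_authorized_keys node2 node3 node4 node5` lists what would be copied; `DRY_RUN=0 push_authorized_keys node2 node3 node4 node5` performs the copy.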

5. Raise the file handle and process limits

    [root@node1 ~]# vim /etc/security/limits.conf
    #---------custom-----------------------
    #
    * soft nofile 240000
    * hard nofile 655350
    * soft nproc 240000
    * hard nproc 655350
    #-----------end-----------------------
    # limits.conf is not a shell script and cannot be sourced; it is applied at login, so log in again and then verify
    [root@node1 ~]# ulimit -n
    240000
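To compare what is configured with what `ulimit` reports, the configured values can be read back from the file. This is a minimal sketch that only looks at entries for the `*` domain; `limit_value` is a hypothetical helper name.

```shell
#!/bin/sh
# Print the value configured for <type> <item> under domain "*".
# Usage: limit_value <soft|hard> <nofile|nproc> [path]
limit_value() {
    awk -v type="$1" -v item="$2" '
        $1 == "*" && $2 == type && $3 == item { value = $4 }
        END { if (value != "") print value }
    ' "${3:-/etc/security/limits.conf}"
}
```

After logging in again, `ulimit -n` should print the same number as `limit_value soft nofile`.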

6. Time synchronization

NTP server settings (node1)

    # One NTP server is set up on the LAN and the other hosts synchronize with it. Cloud servers (ECS) are usually synchronized by default.
    [root@node1 ~]# yum install ntp -y    # Install the ntp service
    [root@node1 ~]# cp -a /etc/ntp.conf{,.bak}
    [root@node1 ~]# vim /etc/ntp.conf
    restrict default kod nomodify notrap nopeer noquery    # "restrict default" defines the default access rules; nomodify prevents remote hosts from modifying the local server
    restrict 127.0.0.1    # Allows the server to query its own state
    restrict -6 ::1
    #server 0.centos.pool.ntp.org iburst    # Comment out the default pool servers
    #server 1.centos.pool.ntp.org iburst
    #server 2.centos.pool.ntp.org iburst
    #server 3.centos.pool.ntp.org iburst
    server ntp1.aliyun.com    # Upstream time server
    server 127.127.1.0    # Local clock: when the public time server is unreachable, the LAN server keeps providing time to its clients
    fudge 127.127.1.0 stratum 10

    # If a cron job already synchronizes the time, comment it out first; the two methods conflict.
    [root@node1 ~]# crontab -e
    #*/30 * * * * /usr/sbin/ntpdate ntp1.aliyun.com > /dev/null 2>&1;/sbin/hwclock -w

    # Start the service and enable it at boot
    [root@node1 /]# systemctl start ntpd.service     # Start the service
    [root@node1 /]# systemctl enable ntpd.service    # Enable at boot
    [root@node1 ~]# systemctl status ntpd.service
    ● ntpd.service - Network Time Service
       Loaded: loaded (/usr/lib/systemd/system/ntpd.service; disabled; vendor preset: disabled)
       Active: active (running) since Mon 2018-05-21 13:47:33 CST; 1 weeks 2 days ago
     Main PID: 17915 (ntpd)
       CGroup: /system.slice/ntpd.service
               └─17915 /usr/sbin/ntpd -u ntp:ntp -g
    Nov 05 23:41:40 node1 ntpd[17915]: Listen normally on 14 enp0s25 192.168.1.101 UDP 123
    Nov 05 23:41:40 node1 ntpd[17915]: new interface(s) found: waking up resolver
    Nov 05 23:41:42 node1 ntpd[17915]: Listen normally on 18 enp0s25 fe80::6a85:bbb1:ad57:f6ae UDP 123
    [root@node1 ~]# ntpq -p    # Check that the time server is synchronizing correctly
         remote           refid      st t when poll reach   delay   offset  jitter
    ==============================================================================
    *time5.aliyun.co 10.137.38.86     2 u  468 1024  377   14.374   -4.292    6.377

If the jitter value of every remote server (not the local clock) is 4000 and reach and delay are 0, time synchronization is failing. There are two likely causes:

1) The firewall on the server blocks UDP port 123. Solution: iptables -t filter -A INPUT -p udp --destination-port 123 -j ACCEPT

2) After each restart of the NTP server, clients need about 3-5 minutes before they can establish a connection. Until then, a client that tries to synchronize reports "no server suitable for synchronization found". This message can be ignored; synchronization works once the connection is established.
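In `ntpq -p` output, the peer that ntpd is currently synchronized to is marked with a leading `*`. The sketch below extracts that peer so the check can be scripted; `synced_peer` is a hypothetical helper name.

```shell
#!/bin/sh
# Read `ntpq -p` output on stdin and print the peer marked with "*".
# The exit status is 1 when no peer is marked, i.e. ntpd is not yet synchronized.
synced_peer() {
    awk '
        /^\*/ { sub(/^\*/, "", $1); print $1; found = 1 }
        END   { exit(found ? 0 : 1) }
    '
}
```

A possible use is `ntpq -p | synced_peer || echo "not synchronized yet"`, which is handy during the first few minutes after a restart.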

Settings on the other hosts (node2 as an example)

    [root@node2 /]# systemctl stop ntpd.service       # Stop the ntp service
    [root@node2 /]# systemctl disable ntpd.service    # Do not start at boot
    [root@node2 ~]# yum install ntpdate -y
    [root@node2 ~]# /usr/sbin/ntpdate 192.168.1.101
    30 May 17:54:09 ntpdate[20937]: adjust time server 192.168.1.101 offset 0.000758 sec
    [root@node2 ~]# crontab -e
    */30 * * * * /usr/sbin/ntpdate 192.168.1.101 > /dev/null 2>&1;/sbin/hwclock -w
    [root@node2 ~]# systemctl restart crond.service
    [root@node2 ~]# systemctl status crond.service
    ● crond.service - Command Scheduler
       Loaded: loaded (/usr/lib/systemd/system/crond.service; enabled; vendor preset: enabled)
       Active: active (running) since Thu 2018-05-31 09:05:39 CST; 11s ago
     Main PID: 12162 (crond)
       CGroup: /system.slice/crond.service
               └─12162 /usr/sbin/crond -n
    May 31 09:05:39 node2 systemd[1]: Started Command Scheduler.
    May 31 09:05:39 node2 systemd[1]: Starting Command Scheduler...

7. Upload the installation packages to the node1 server

    [root@node1 ~]# mkdir -pv /data/tools
    [root@node1 ~]# cd /data/tools
    [root@node1 tools]# ll
    total 1221212
    -rw-r--r-- 1 root root  58688757 May 24 10:23 apache-flume-1.8.0-bin.tar.gz
    -rw-r--r-- 1 root root 232229830 May 24 10:25 apache-hive-2.3.3-bin.tar.gz
    -rw-r--r-- 1 root root 286104833 May 24 10:26 apache-kylin-2.3.1-hbase1x-bin.tar.gz
    -rw-r--r-- 1 root root 216745683 May 24 10:28 hadoop-2.7.6.tar.gz
    -rw-r--r-- 1 root root 104659474 May 24 10:27 hbase-1.2.6-bin.tar.gz
    -rw-r--r-- 1 root root  47121634 May 24 10:29 hue-3.12.0.tgz
    -rw-r--r-- 1 root root  56969154 May 24 10:49 kafka_2.11-1.1.0.tgz
    -rw-r--r-- 1 root root 193596110 May 24 10:29 spark-2.1.2-bin-hadoop2.7.tgz
    -rw-r--r-- 1 root root  36667596 May 24 10:28 zookeeper-3.4.12.tar.gz
    [root@node1 tools]#

8. Install JDK

    [root@node1 ~]# tar xf /data/tools/jdk-8u162-linux-x64.tar.gz -C /opt/
    [root@node1 ~]# [ -L "/opt/java" ] && rm -f /opt/java
    [root@node1 ~]# cd /opt/ && ln -s /opt/jdk1.8.0_162 /opt/java
    [root@node1 ~]# chown -R root:root /opt/jdk1.8.0_162
    [root@node1 ~]# echo -e "# java\nexport JAVA_HOME=/opt/java\nexport PATH=\${PATH}:\${JAVA_HOME}/bin:\${JAVA_HOME}/jre/bin\nexport CLASSPATH=.:\${JAVA_HOME}/lib:\${JAVA_HOME}/jre/lib" > /etc/profile.d/java_version.sh
    [root@node1 ~]# source /etc/profile.d/java_version.sh
    [root@node1 ~]# java -version
    java version "1.8.0_162"
    Java(TM) SE Runtime Environment (build 1.8.0_162-b12)
    Java HotSpot(TM) 64-Bit Server VM (build 25.162-b12, mixed mode)

4, Install zookeeper

1. Unpack zookeeper

    [root@node1 ~]# mkdir -pv /data/bigdata/src
    [root@node1 ~]# tar -zxvf /data/tools/zookeeper-3.4.12.tar.gz -C /data/bigdata/src
    [root@node1 ~]# ln -s /data/bigdata/src/zookeeper-3.4.12 /data/bigdata/zookeeper
    # Add the environment variables
    [root@node1 ~]# echo -e "# zookeeper\nexport ZOOKEEPER_HOME=/data/bigdata/zookeeper\nexport PATH=\$ZOOKEEPER_HOME/bin:\$PATH" > /etc/profile.d/bigdata_path.sh
    [root@node1 ~]# cat /etc/profile.d/bigdata_path.sh
    # zookeeper
    export ZOOKEEPER_HOME=/data/bigdata/zookeeper
    export PATH=$ZOOKEEPER_HOME/bin:$PATH
    [root@node1 ~]#

2. Configure the zoo.cfg file

    [root@node1 ~]# cd /data/bigdata/zookeeper/conf/    # Enter the conf directory
    [root@node1 conf]# cp zoo_sample.cfg zoo.cfg        # Copy the template
    [root@node1 conf]# vim zoo.cfg
    # The number of milliseconds of each tick
    tickTime=2000
    # The number of ticks that the initial
    # synchronization phase can take
    initLimit=10
    # The number of ticks that can pass between
    # sending a request and getting an acknowledgement
    syncLimit=5
    # the directory where the snapshot is stored.
    # do not use /tmp for storage, /tmp here is just
    # example sakes.
    dataDir=/data/bigdata/zookeeper/data          # added
    dataLogDir=/data/bigdata/zookeeper/dataLog    # added
    # the port at which the clients will connect
    clientPort=2181
    # the maximum number of client connections.
    # increase this if you need to handle more clients
    #maxClientCnxns=60
    #
    # Be sure to read the maintenance section of the
    # administrator guide before turning on autopurge.
    #
    # http://zookeeper.apache.org/doc/current/zookeeperAdmin.html#sc_maintenance
    #
    # The number of snapshots to retain in dataDir
    #autopurge.snapRetainCount=3
    # Purge task interval in hours
    # Set to "0" to disable auto purge feature
    #autopurge.purgeInterval=1
    server.1=node1:2888:3888    # added
    server.2=node2:2888:3888
    server.3=node3:2888:3888

3. Add myid (install zookeeper on an odd number of nodes)

    # Create the directories and add a myid file in the dataDir directory. myid holds the id of the local zookeeper server;
    # node1 is declared as server.1=node1:2888:3888 in zoo.cfg, so its id is 1
    [root@node1 conf]# mkdir -pv /data/bigdata/zookeeper/{data,dataLog}
    [root@node1 conf]# echo 1 > /data/bigdata/zookeeper/data/myid

4. Distribution:

    [root@node2 ~]# mkdir -pv /data/bigdata/
    [root@node3 ~]# mkdir -pv /data/bigdata/
    [root@node1 conf]# scp -rp /data/bigdata/src node2:/data/bigdata/
    [root@node1 conf]# scp -rp /data/bigdata/src node3:/data/bigdata/
    [root@node2 ~]# ln -s /data/bigdata/src/zookeeper-3.4.12 /data/bigdata/zookeeper
    [root@node3 ~]# ln -s /data/bigdata/src/zookeeper-3.4.12 /data/bigdata/zookeeper
    # On the other machines, myid is 2 on node2 and 3 on node3, matching the server.N entries in zoo.cfg
    [root@node2 ~]# echo 2 > /data/bigdata/zookeeper/data/myid
    [root@node3 ~]# echo 3 > /data/bigdata/zookeeper/data/myid
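Since each myid must equal the N of that host's `server.N=` line, the id can be derived from zoo.cfg rather than typed per host. This is a minimal sketch assuming plain hostnames as used in this cluster; `myid_for_host` is a hypothetical helper name.

```shell
#!/bin/sh
# Print the id N from the "server.N=<host>:..." line for <host> in zoo.cfg.
# Prints nothing when the host is not part of the ensemble.
# Usage: myid_for_host <zoo.cfg> <hostname>
myid_for_host() {
    sed -n "s/^server\.\([0-9][0-9]*\)=$2:.*/\1/p" "$1"
}
```

On node2, for example, `myid_for_host /data/bigdata/zookeeper/conf/zoo.cfg node2 > /data/bigdata/zookeeper/data/myid` would write `2`.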

5, Install Hadoop

- In a production environment, the two master nodes should run only the NameNode, not a DataNode.

1. Unpack hadoop

    [root@node1 ~]# tar -zxvf /data/tools/hadoop-2.7.6.tar.gz -C /data/bigdata/src
    [root@node1 ~]# ln -s /data/bigdata/src/hadoop-2.7.6 /data/bigdata/hadoop
    # Add the environment variables
    [root@node1 ~]# echo -e "\n# hadoop\nexport HADOOP_HOME=/data/bigdata/hadoop\nexport PATH=\$HADOOP_HOME/bin:\$HADOOP_HOME/sbin:\$PATH" >> /etc/profile.d/bigdata_path.sh
    [root@node1 ~]# cat /etc/profile.d/bigdata_path.sh
    # zookeeper
    export ZOOKEEPER_HOME=/data/bigdata/zookeeper
    export PATH=$ZOOKEEPER_HOME/bin:$PATH

    # hadoop
    export HADOOP_HOME=/data/bigdata/hadoop
    export PATH=$HADOOP_HOME/bin:$HADOOP_HOME/sbin:$PATH
    [root@node1 ~]#

2. Configure hadoop-env.sh

    [root@node1 ~]# cd /data/bigdata/hadoop/etc/hadoop/
    [root@node1 hadoop]# vim hadoop-env.sh
    export JAVA_HOME=/opt/java          # added
    export HADOOP_SSH_OPTS="-p 22"

3. Configure core-site.xml

    [root@node1 hadoop]# vim core-site.xml
    <?xml version="1.0" encoding="UTF-8"?>
    <?xml-stylesheet type="text/xsl" href="configuration.xsl"?>
    <configuration>
      <!-- YARN uses fs.defaultFS to locate the NameNode URI -->
      <property>
        <name>fs.defaultFS</name>
        <value>hdfs://mycluster</value>
      </property>
      <property>
        <name>hadoop.tmp.dir</name>
        <value>/data/bigdata/tmp</value>
      </property>
      <property>
        <name>ha.zookeeper.quorum</name>
        <value>node1:2181,node2:2181,node3:2181</value>
        <description>ZooKeeper client connection addresses</description>
      </property>
      <property>
        <name>ha.zookeeper.session-timeout.ms</name>
        <value>10000</value>
      </property>
      <property>
        <name>fs.trash.interval</name>
        <value>1440</value>
        <description>Trash retention time in minutes. Data that stays in the trash longer than this is deleted. 0 disables the trash.</description>
      </property>
      <property>
        <name>fs.trash.checkpoint.interval</name>
        <value>1440</value>
        <description>Interval between trash checkpoints, in minutes.</description>
      </property>
      <property>
        <name>hadoop.security.authentication</name>
        <value>simple</value>
        <description>Possible values are simple (no authentication) or kerberos (a security authentication system)</description>
      </property>
      <!-- Configuration required for Hue integration -->
      <property>
        <name>hadoop.proxyuser.hue.hosts</name>
        <value>*</value>
      </property>
      <property>
        <name>hadoop.proxyuser.hue.groups</name>
        <value>*</value>
      </property>
      <property>
        <name>hadoop.proxyuser.root.hosts</name>
        <value>*</value>
      </property>
      <property>
        <name>hadoop.proxyuser.root.groups</name>
        <value>*</value>
      </property>
    </configuration>

Create the directory referenced by hadoop.tmp.dir

    [root@node1 hadoop]# mkdir -p /data/bigdata/tmp

4. Configure yarn-site.xml

    [root@node1 hadoop]# vim yarn-site.xml
    <?xml version="1.0"?>
    <configuration>
      <property>
        <name>yarn.app.mapreduce.am.scheduler.connection.wait.interval-ms</name>
        <value>5000</value>
        <description>Time to wait when the connection to the scheduler is lost</description>
      </property>
      <property>
        <name>yarn.nodemanager.aux-services</name>
        <value>mapreduce_shuffle</value>
        <description>Auxiliary service running on the NodeManager. Must be set to mapreduce_shuffle to run MapReduce programs</description>
      </property>
      <property>
        <name>yarn.resourcemanager.connect.retry-interval.ms</name>
        <value>5000</value>
        <description>How often to try connecting to the ResourceManager.</description>
      </property>
      <property>
        <name>yarn.resourcemanager.ha.enabled</name>
        <value>true</value>
        <description>Whether to enable RM HA. Default is false (not enabled)</description>
      </property>
      <property>
        <name>yarn.resourcemanager.ha.automatic-failover.enabled</name>
        <value>true</value>
        <description>Whether automatic failover is enabled. Enabled by default when HA is on.</description>
      </property>
      <property>
        <name>yarn.resourcemanager.ha.automatic-failover.embedded</name>
        <value>true</value>
        <description>Use the embedded automatic failover. Enabled by default when HA is on.</description>
      </property>
      <property>
        <name>yarn.resourcemanager.cluster-id</name>
        <value>cluster1</value>
        <description>Cluster id. The elector uses this value to ensure the RM does not become active for another cluster.</description>
      </property>
      <property>
        <name>yarn.resourcemanager.ha.rm-ids</name>
        <value>rm1,rm2</value>
        <description>Comma-separated list of logical RM ids. Usually two, one active and one standby, for example rm1,rm2</description>
      </property>
      <property>
        <name>yarn.resourcemanager.hostname.rm1</name>
        <value>node3</value>
        <description>Hostname of the RM</description>
      </property>
      <property>
        <name>yarn.resourcemanager.scheduler.address.rm1</name>
        <value>${yarn.resourcemanager.hostname.rm1}:8030</value>
        <description>Address the RM exposes to ApplicationMasters; AMs use it to request and release resources</description>
      </property>
      <property>
        <name>yarn.resourcemanager.resource-tracker.address.rm1</name>
        <value>${yarn.resourcemanager.hostname.rm1}:8031</value>
        <description>Address the RM exposes to NodeManagers; NMs use it to send heartbeats and receive tasks</description>
      </property>
      <property>
        <name>yarn.resourcemanager.address.rm1</name>
        <value>${yarn.resourcemanager.hostname.rm1}:8032</value>
        <description>Address the RM exposes to clients; clients use it to submit applications</description>
      </property>
      <property>
        <name>yarn.resourcemanager.admin.address.rm1</name>
        <value>${yarn.resourcemanager.hostname.rm1}:8033</value>
        <description>Address the RM exposes to administrators; used to send management commands to the RM</description>
      </property>
      <property>
        <name>yarn.resourcemanager.webapp.address.rm1</name>
        <value>${yarn.resourcemanager.hostname.rm1}:8088</value>
        <description>HTTP address of the RM web UI, where users can view cluster information in a browser</description>
      </property>
      <property>
        <description>The https address of the RM web application.</description>
        <name>yarn.resourcemanager.webapp.https.address.rm1</name>
        <value>${yarn.resourcemanager.hostname.rm1}:8090</value>
      </property>
      <property>
        <name>yarn.resourcemanager.hostname.rm2</name>
        <value>node4</value>
      </property>
      <property>
        <name>yarn.resourcemanager.scheduler.address.rm2</name>
        <value>${yarn.resourcemanager.hostname.rm2}:8030</value>
      </property>
      <property>
        <name>yarn.resourcemanager.resource-tracker.address.rm2</name>
        <value>${yarn.resourcemanager.hostname.rm2}:8031</value>
      </property>
      <property>
        <name>yarn.resourcemanager.address.rm2</name>
        <value>${yarn.resourcemanager.hostname.rm2}:8032</value>
      </property>
      <property>
        <name>yarn.resourcemanager.admin.address.rm2</name>
        <value>${yarn.resourcemanager.hostname.rm2}:8033</value>
      </property>
      <property>
        <name>yarn.resourcemanager.webapp.address.rm2</name>
        <value>${yarn.resourcemanager.hostname.rm2}:8088</value>
      </property>
      <property>
        <description>The https address of the RM web application.</description>
        <name>yarn.resourcemanager.webapp.https.address.rm2</name>
        <value>${yarn.resourcemanager.hostname.rm2}:8090</value>
      </property>
      <property>
        <name>yarn.resourcemanager.recovery.enabled</name>
        <value>true</value>
        <description>Default is false, meaning that jobs running when the ResourceManager fails cannot be resumed after the RM recovers</description>
      </property>
      <property>
        <name>yarn.resourcemanager.store.class</name>
        <value>org.apache.hadoop.yarn.server.resourcemanager.recovery.ZKRMStateStore</value>
        <description>Class used for state storage</description>
      </property>
      <property>
        <name>ha.zookeeper.quorum</name>
        <value>node1:2181,node2:2181,node3:2181</value>
      </property>
      <property>
        <name>yarn.resourcemanager.zk-address</name>
        <value>${ha.zookeeper.quorum}</value>
        <description>ZooKeeper server addresses (host:port), used for both state storage and embedded leader election.</description>
      </property>
      <property>
        <name>yarn.nodemanager.address</name>
        <value>${yarn.nodemanager.hostname}:8041</value>
        <description>The address of the container manager in the NM.</description>
      </property>
      <property>
        <name>yarn.nodemanager.resource.memory-mb</name>
        <value>58000</value>
        <description>Total physical memory available to the NodeManager on this node</description>
      </property>
      <property>
        <name>yarn.nodemanager.resource.cpu-vcores</name>
        <value>16</value>
        <description>Number of virtual CPUs available to the NodeManager on this node</description>
      </property>
      <property>
        <name>yarn.nodemanager.vmem-pmem-ratio</name>
        <value>2</value>
        <description>Maximum amount of virtual memory a task may use for each 1 MB of physical memory. Default is 2.1.</description>
      </property>
      <property>
        <name>yarn.scheduler.minimum-allocation-mb</name>
        <value>1024</value>
        <description>Minimum amount of physical memory a single task can request</description>
      </property>
      <property>
        <name>yarn.scheduler.maximum-allocation-mb</name>
        <value>58000</value>
        <description>Maximum amount of physical memory a single task can request</description>
      </property>
      <property>
        <name>yarn.scheduler.minimum-allocation-vcores</name>
        <value>1</value>
        <description>Minimum number of virtual CPUs a single task can request</description>
      </property>
      <property>
        <name>yarn.scheduler.maximum-allocation-vcores</name>
        <value>16</value>
        <description>Maximum number of virtual CPUs a single task can request</description>
      </property>
    </configuration>
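The memory settings above are related: a container request larger than `yarn.nodemanager.resource.memory-mb` cannot fit on any node, so `yarn.scheduler.maximum-allocation-mb` should not exceed it. The sketch below is one way to sanity-check that. It is not a real XML parser and assumes every `<name>` and `<value>` element sits on a single line; `xml_prop` and `check_yarn_memory` are hypothetical helper names.

```shell
#!/bin/sh
# Print the <value> of the property called <name> in a Hadoop *-site.xml file.
# Assumption: each <name> and <value> element is written on a single line.
# Usage: xml_prop <file> <name>
xml_prop() {
    awk -v want="$2" '
        index($0, "<name>" want "</name>") { hit = 1 }
        hit && match($0, /<value>[^<]*<\/value>/) {
            # Strip the 7-character <value> and the 8-character </value>.
            print substr($0, RSTART + 7, RLENGTH - 15)
            exit
        }
    ' "$1"
}

# Succeed only when the largest container request fits into a NodeManager.
# Usage: check_yarn_memory <yarn-site.xml>
check_yarn_memory() {
    node_mb=$(xml_prop "$1" yarn.nodemanager.resource.memory-mb)
    max_mb=$(xml_prop "$1" yarn.scheduler.maximum-allocation-mb)
    [ -n "$node_mb" ] && [ -n "$max_mb" ] && [ "$max_mb" -le "$node_mb" ]
}
```

For example, `check_yarn_memory /data/bigdata/hadoop/etc/hadoop/yarn-site.xml || echo "maximum allocation exceeds NodeManager memory"`.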

5. Configure mapred-site.xml

    [root@node1 hadoop]# cp mapred-site.xml{.template,}
    [root@node1 hadoop]# vim mapred-site.xml
    <?xml version="1.0"?>
    <?xml-stylesheet type="text/xsl" href="configuration.xsl"?>
    <configuration>
      <property>
        <name>mapreduce.framework.name</name>
        <value>yarn</value>
      </property>
      <property>
        <name>mapreduce.jobhistory.address</name>
        <value>sjfx:10020</value>
      </property>
    </configuration>

6. Configure hdfs-site.xml

    [root@node1 hadoop]# vim hdfs-site.xml
    <?xml version="1.0" encoding="UTF-8"?>
    <?xml-stylesheet type="text/xsl" href="configuration.xsl"?>
    <configuration>
      <property>
        <name>dfs.permissions</name>
        <value>true</value>
        <description>
          If "true", enable permission checking in HDFS. If "false", permission checking is turned off,
          but all other behavior is unchanged. Switching from one parameter value to the other does not
          change the mode, owner or group of files or directories.
        </description>
      </property>
      <property>
        <name>dfs.replication</name>
        <value>2</value>
        <description>Number of replicas to keep</description>
      </property>
      <property>
        <description>Local path where the namespace and transaction logs are persisted</description>
        <name>dfs.namenode.name.dir</name>
        <value>/data/bigdata/hdfs/name</value>
      </property>
      <property>
        <description>Path where the DataNode stores data; separate multiple directories on one node with commas</description>
        <name>dfs.datanode.data.dir</name>
        <value>/data/bigdata/hdfs/data</value>
      </property>
      <property>
        <description>Maximum number of threads used to transfer block data between DataNodes</description>
        <name>dfs.datanode.max.transfer.threads</name>
        <value>16384</value>
      </property>
      <property>
        <name>dfs.datanode.balance.bandwidthPerSec</name>
        <value>52428800</value>
        <description>
          Specifies the maximum amount of bandwidth that each datanode can utilize for the balancing
          purpose in term of the number of bytes per second.
        </description>
      </property>
      <property>
        <name>dfs.datanode.balance.max.concurrent.moves</name>
        <value>50</value>
        <description>Raises the upper limit on the number of Xceivers used to move blocks on a DataNode.</description>
      </property>
      <property>
        <name>dfs.nameservices</name>
        <value>mycluster</value>
      </property>
      <property>
        <name>dfs.ha.namenodes.mycluster</name>
        <value>nn1,nn2</value>
      </property>
      <property>
        <name>dfs.namenode.rpc-address.mycluster.nn1</name>
        <value>node1:8020</value>
      </property>
      <property>
        <name>dfs.namenode.rpc-address.mycluster.nn2</name>
        <value>node2:8020</value>
      </property>
      <property>
        <name>dfs.namenode.http-address.mycluster.nn1</name>
        <value>node1:50070</value>
      </property>
      <property>
        <name>dfs.namenode.http-address.mycluster.nn2</name>
        <value>node2:50070</value>
      </property>
      <property>
        <name>dfs.namenode.journalnode</name>
        <value>node1:8485;node2:8485;node3:8485</value>
        <description>JournalNodes synchronize metadata between the NameNodes to remove the NameNode single point of failure. Use an odd number, usually 3 or 5</description>
      </property>
      <property>
        <name>dfs.namenode.shared.edits.dir</name>
        <value>qjournal://${dfs.namenode.journalnode}/mycluster</value>
      </property>
      <property>
        <name>dfs.client.failover.proxy.provider.mycluster</name>
        <value>org.apache.hadoop.hdfs.server.namenode.ha.ConfiguredFailoverProxyProvider</value>
      </property>
      <property>
        <name>dfs.ha.fencing.methods</name>
        <value>sshfence</value>
      </property>
      <property>
        <name>dfs.ha.fencing.ssh.private-key-files</name>
        <value>/root/.ssh/id_dsa</value>
      </property>
      <property>
        <name>dfs.journalnode.edits.dir</name>
        <value>${hadoop.tmp.dir}/dfs/journal</value>
      </property>
      <property>
        <name>dfs.permissions.superusergroup</name>
        <value>root</value>
        <description>Superuser group name</description>
      </property>
      <property>
        <name>dfs.ha.automatic-failover.enabled</name>
        <value>true</value>
        <description>Turn on automatic failover</description>
      </property>
    </configuration>

Create the JournalNode edits directory

    [root@node1 hadoop]# mkdir -pv /data/bigdata/tmp/dfs/journal
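The value of `dfs.namenode.shared.edits.dir` expands to a `qjournal://` URI: the JournalNode `host:port` pairs joined with semicolons, followed by the nameservice id. The sketch below shows how that string is assembled; `qjournal_uri` is a hypothetical helper name used only for illustration.

```shell
#!/bin/sh
# Build a qjournal:// URI from a nameservice id, a port and JournalNode hosts.
# Usage: qjournal_uri <nameservice> <port> <host>...
qjournal_uri() {
    nameservice="$1"; port="$2"; shift 2
    quorum=""
    for host in "$@"; do
        # Append ";" only between entries, never before the first one.
        quorum="${quorum}${quorum:+;}${host}:${port}"
    done
    echo "qjournal://${quorum}/${nameservice}"
}
```

With the values from this cluster, `qjournal_uri mycluster 8485 node1 node2 node3` prints `qjournal://node1:8485;node2:8485;node3:8485/mycluster`.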

7. Configure capacity-scheduler.xml

    [root@node1 hadoop]# vim capacity-scheduler.xml
    <configuration>
      <property>
        <name>yarn.scheduler.capacity.maximum-applications</name>
        <value>10000</value>
        <description>
          Maximum number of applications that can be pending and running.
        </description>
      </property>
      <property>
        <name>yarn.scheduler.capacity.maximum-am-resource-percent</name>
        <value>0.1</value>
        <description>
          Maximum percent of resources in the cluster which can be used to run application masters
          i.e. controls number of concurrent running applications.
        </description>
      </property>
      <property>
        <name>yarn.scheduler.capacity.resource-calculator</name>
        <value>org.apache.hadoop.yarn.util.resource.DominantResourceCalculator</value>
        <description>
          The ResourceCalculator implementation to be used to compare Resources in the scheduler.
          The default i.e. DefaultResourceCalculator only uses Memory while DominantResourceCalculator
          uses dominant-resource to compare multi-dimensional resources such as Memory, CPU etc.
        </description>
      </property>
      <property>
        <name>yarn.scheduler.capacity.root.queues</name>
        <value>default</value>
        <description>
          The queues at the this level (root is the root queue).
        </description>
      </property>
      <property>
        <name>yarn.scheduler.capacity.root.default.capacity</name>
        <value>100</value>
        <description>Default queue target capacity.</description>
      </property>
      <property>
        <name>yarn.scheduler.capacity.root.default.user-limit-factor</name>
        <value>1</value>
        <description>
          Default queue user limit a percentage from 0.0 to 1.0.
        </description>
      </property>
      <property>
        <name>yarn.scheduler.capacity.root.default.maximum-capacity</name>
        <value>100</value>
        <description>
          The maximum capacity of the default queue.
        </description>
      </property>
      <property>
        <name>yarn.scheduler.capacity.root.default.state</name>
        <value>RUNNING</value>
        <description>
          The state of the default queue. State can be one of RUNNING or STOPPED.
        </description>
      </property>
      <property>
        <name>yarn.scheduler.capacity.root.default.acl_submit_applications</name>
        <value>*</value>
        <description>
          The ACL of who can submit jobs to the default queue.
        </description>
      </property>
      <property>
        <name>yarn.scheduler.capacity.root.default.acl_administer_queue</name>
        <value>*</value>
        <description>
          The ACL of who can administer jobs on the default queue.
        </description>
      </property>
      <property>
        <name>yarn.scheduler.capacity.node-locality-delay</name>
        <value>40</value>
        <description>
          Number of missed scheduling opportunities after which the CapacityScheduler attempts to
          schedule rack-local containers. Typically this should be set to number of nodes in the cluster,
          By default is setting approximately number of nodes in one rack which is 40.
        </description>
      </property>
      <property>
        <name>yarn.scheduler.capacity.queue-mappings</name>
        <value></value>
        <description>
          A list of mappings that will be used to assign jobs to queues.
          The syntax for this list is [u|g]:[name]:[queue_name][,next mapping]*
          Typically this list will be used to map users to queues, for example,
          u:%user:%user maps all users to queues with the same name as the user.
        </description>
      </property>
      <property>
        <name>yarn.scheduler.capacity.queue-mappings-override.enable</name>
        <value>false</value>
        <description>
          If a queue mapping is present, will it override the value specified by the user?
          This can be used by administrators to place jobs in queues that are different than
          the one specified by the user. The default is false.
        </description>
      </property>
    </configuration>

8. Configure slaves

    [root@node1 hadoop]# vim slaves
    node1
    node2
    node3
    node4
    node5

9. Modify $HADOOP_HOME/sbin/hadoop-daemon.sh

    [root@node1 hadoop]# cd /data/bigdata/hadoop/sbin/
    [root@node1 sbin]# vim hadoop-daemon.sh
    # Add:
    HADOOP_PID_DIR=/data/bigdata/hdfs/pids
    YARN_PID_DIR=/data/bigdata/hdfs/pids
    # Create the directories
    [root@node1 sbin]# mkdir -pv /data/bigdata/hdfs/{name,data,pids}

10. Modify $HADOOP_HOME/sbin/yarn-daemon.sh

    [root@node1 sbin]# vim yarn-daemon.sh
    # Add:
    HADOOP_PID_DIR=/data/bigdata/hdfs/pids
    YARN_PID_DIR=/data/bigdata/hdfs/pids

11. Distribution

    [root@node1 sbin]# scp -rp /data/bigdata/src/hadoop-2.7.6 node2:/data/bigdata/src/
    [root@node1 sbin]# scp -rp /data/bigdata/src/hadoop-2.7.6 node3:/data/bigdata/src/
    [root@node1 sbin]# scp -rp /data/bigdata/src/hadoop-2.7.6 node4:/data/bigdata/src/
    [root@node1 sbin]# scp -rp /data/bigdata/src/hadoop-2.7.6 node5:/data/bigdata/src/
    [root@node2 ~]# ln -s /data/bigdata/src/hadoop-2.7.6 /data/bigdata/hadoop
    [root@node3 ~]# ln -s /data/bigdata/src/hadoop-2.7.6 /data/bigdata/hadoop
    [root@node4 ~]# ln -s /data/bigdata/src/hadoop-2.7.6 /data/bigdata/hadoop
    [root@node5 ~]# ln -s /data/bigdata/src/hadoop-2.7.6 /data/bigdata/hadoop
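The distribution step repeats the same two actions per host: copy the unpacked release, then create the version-independent symlink on the target. The sketch below only prints those commands so they can be reviewed first. The `plan_distribution` name is hypothetical, and running the `ln -s` through `ssh` instead of logging in to each node is an assumption.

```shell
#!/bin/sh
# Print the commands that copy one unpacked release to each host and then
# create the symlink there (the link itself is never copied).
# Usage: plan_distribution <release-dir-name> <link-name> <host>...
plan_distribution() {
    release="$1"; link="$2"; shift 2
    for host in "$@"; do
        echo "scp -rp /data/bigdata/src/${release} ${host}:/data/bigdata/src/"
        echo "ssh ${host} ln -s /data/bigdata/src/${release} /data/bigdata/${link}"
    done
}
```

`plan_distribution hadoop-2.7.6 hadoop node2 node3 node4 node5` prints eight commands; piping that output to `sh` would execute them.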

6, Start up process

1. Start the zookeeper service: choose one of the following two methods

(1) Turn on all zookeeper nodes at the same time

# node1
[root@node1 ~]# cd /data/bigdata/zookeeper/bin
[root@node1 bin]# zkServer.sh start
# node2
[root@node2 ~]# cd /data/bigdata/zookeeper/bin
[root@node2 bin]# zkServer.sh start
# node3
[root@node3 ~]# cd /data/bigdata/zookeeper/bin
[root@node3 bin]# zkServer.sh start
# Corresponding process (similar on the other nodes)
[root@node1 ~]# jps
23993 QuorumPeerMain
24063 Jps
[root@node1 ~]#

(2) Cluster start

ZooKeeper does not provide a script that starts all nodes in the cluster at once, and starting the nodes one by one is tedious in production. A custom script can run the same action on every node in the cluster, as follows:

[root@node1 bigdata]# cat zookeeper_all_op.sh
#!/bin/bash
# start/stop/status zookeeper on all nodes
zookeeperHome=/data/bigdata/src/zookeeper-3.4.12
zookeeperArr=( "node1" "node2" "node3" )
for znode in ${zookeeperArr[@]}; do
  ssh -p 22 -q root@$znode \
  "
  export PATH=/usr/local/sbin:/usr/local/bin:/sbin:/bin:/usr/sbin:/usr/bin:/root/bin
  source /etc/profile
  $zookeeperHome/bin/zkServer.sh $1
  "
  echo "$znode zookeeper $1 done"
done

# Start
[root@node1 bigdata]# ./zookeeper_all_op.sh start
# Check the status: one node is the leader, and the others are followers
[root@node1 bigdata]# ./zookeeper_all_op.sh status
ZooKeeper JMX enabled by default
Using config: /data/bigdata/src/zookeeper-3.4.12/bin/../conf/zoo.cfg
Mode: follower
node1 zookeeper status done
ZooKeeper JMX enabled by default
Using config: /data/bigdata/src/zookeeper-3.4.12/bin/../conf/zoo.cfg
Mode: leader
node2 zookeeper status done
ZooKeeper JMX enabled by default
Using config: /data/bigdata/src/zookeeper-3.4.12/bin/../conf/zoo.cfg
Mode: follower
node3 zookeeper status done
[root@node1 bigdata]#
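The status output can also be checked automatically instead of by eye. The following is a minimal sketch, assuming the status text contains lines beginning with "Mode: leader" and "Mode: follower" as in the output shown above. The function name zk_quorum_ok is hypothetical.

```shell
#!/bin/bash
# Read the combined output of "zkServer.sh status" from stdin and succeed
# only if there is exactly one leader and at least one follower.
# The function name is an illustrative assumption.
zk_quorum_ok() {
  local leaders=0 followers=0 line
  while IFS= read -r line; do
    case "$line" in
      "Mode: leader"*)   leaders=$((leaders + 1)) ;;
      "Mode: follower"*) followers=$((followers + 1)) ;;
    esac
  done
  echo "leaders=${leaders} followers=${followers}"
  [ "$leaders" -eq 1 ] && [ "$followers" -ge 1 ]
}

# Example on the cluster:
# ./zookeeper_all_op.sh status 2>&1 | zk_quorum_ok && echo "quorum OK"
```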

(3) Start client script

[root@node1 bigdata]# ./zookeeper/bin/zkCli.sh -server node2:2181 Connecting to node2:2181 2018-06-13 17:28:21,115 [myid:] - INFO [main:Environment@100] - Client environment:zookeeper.version=3.4.12-e5259e437540f349646870ea94dc2658c4e44b3b, built on 03/27/2018 03:55 GMT 2018-06-13 17:28:21,119 [myid:] - INFO [main:Environment@100] - Client environment:host.name=node1 ...... //ellipsis ...... 2018-06-13 17:28:21,220 [myid:] - INFO [main-SendThread(node2:2181):ClientCnxn$SendThread@878] - Socket connection established to node2/192.168.1.102:2181, initiating session 2018-06-13 17:28:21,229 [myid:] - INFO [main-SendThread(node2:2181):ClientCnxn$SendThread@1302] - Session establishment complete on server node2/192.168.1.102:2181, sessionid = 0x20034776a7c000e, negotiated timeout = 30000 WATCHER:: WatchedEvent state:SyncConnected type:None path:null [zk: node2:2181(CONNECTED) 0] help ZooKeeper -server host:port cmd args stat path [watch] set path data [version] ls path [watch] delquota [-n|-b] path ls2 path [watch] setAcl path acl setquota -n|-b val path history redo cmdno printwatches on|off delete path [version] sync path listquota path rmr path get path [watch] create [-s] [-e] path data acl addauth scheme auth quit getAcl path close connect host:port [zk: node2:2181(CONNECTED) 1] quit Quitting... 2018-06-13 17:28:37,984 [myid:] - INFO [main:ZooKeeper@687] - Session: 0x20034776a7c000e closed 2018-06-13 17:28:37,986 [myid:] - INFO [main-EventThread:ClientCnxn$EventThread@521] - EventThread shut down for session: 0x20034776a7c000e [root@node1 bigdata]#

2. Start all journalnode nodes

# node1 node [root@node1 ~]# cd /data/bigdata/hadoop/ [root@node1 hadoop]# ./sbin/hadoop-daemon.sh start journalnode starting journalnode, logging to /data/bigdata/src/hadoop-2.7.6/logs/hadoop-root-journalnode-node1.out # Corresponding process (other similar) [root@node1 ~]# jps 23993 QuorumPeerMain 24474 JournalNode # Newly started process 24910 Jps [root@node1 ~]# # I have three journal nodes # node2 node [root@node2 ~]# cd /data/bigdata/hadoop/ [root@node2 hadoop]# ./sbin/hadoop-daemon.sh start journalnode # node3 node [root@node3 ~]# cd /data/bigdata/hadoop/ [root@node3 hadoop]# ./sbin/hadoop-daemon.sh start journalnode

3. Format the namenode directory (primary node node1)

[root@node1 hadoop]# cd /data/bigdata/hadoop [root@node1 hadoop]# ./bin/hdfs namenode -format 18/06/04 14:24:03 INFO namenode.NameNode: STARTUP_MSG: /************************************************************ STARTUP_MSG: Starting NameNode STARTUP_MSG: host = node1/192.168.1.101 STARTUP_MSG: args = [-format] STARTUP_MSG: version = 2.7.6 .......................................... Omit some ........................................... 18/06/04 14:24:05 INFO namenode.NNStorageRetentionManager: Going to retain 1 images with txid >= 0 18/06/04 14:24:05 INFO util.ExitUtil: Exiting with status 0 18/06/04 14:24:05 INFO namenode.NameNode: SHUTDOWN_MSG: /************************************************************ SHUTDOWN_MSG: Shutting down NameNode at node1/192.168.1.101 ************************************************************/ [root@node1 hadoop]#

4. Start the currently formatted namenode process (primary node node1)

[root@node1 hadoop]# ./sbin/hadoop-daemon.sh start namenode starting namenode, logging to /data/bigdata/src/hadoop-2.7.6/logs/hadoop-root-namenode-node1.out [root@node1 ~]# jps # # Corresponding process 25155 Jps 23993 QuorumPeerMain 25050 NameNode # name node 24474 JournalNode [root@node1 ~]#

5. Run the synchronization command on the unformatted NameNode (standby node node2)

[root@node2 hadoop]# ./bin/hdfs namenode -bootstrapStandby 18/06/04 14:26:55 INFO namenode.NameNode: STARTUP_MSG: /************************************************************ STARTUP_MSG: Starting NameNode STARTUP_MSG: host = node2/192.168.1.102 STARTUP_MSG: args = [-bootstrapStandby] STARTUP_MSG: version = 2.7.6 .......................................... Omit some ........................................... ************************************************************/ 18/06/04 14:26:55 INFO namenode.NameNode: registered UNIX signal handlers for [TERM, HUP, INT] 18/06/04 14:26:55 INFO namenode.NameNode: createNameNode [-bootstrapStandby] 18/06/04 14:26:55 WARN common.Util: Path /data/bigdata/hdfs/name should be specified as a URI in configuration files. Please update hdfs configuration. 18/06/04 14:26:55 WARN common.Util: Path /data/bigdata/hdfs/name should be specified as a URI in configuration files. Please update hdfs configuration. ===================================================== About to bootstrap Standby ID nn2 from: Nameservice ID: mycluster Other Namenode ID: nn1 Other NN's HTTP address: http://node1:50070 Other NN's IPC address: node1/192.168.1.101:8020 Namespace ID: 736429223 Block pool ID: BP-1022667957-192.168.1.101-1528093445721 Cluster ID: CID-9d4854cd-7201-4e0d-9536-36e73195dc5a Layout version: -63 isUpgradeFinalized: true ===================================================== 18/06/04 14:26:56 INFO common.Storage: Storage directory /data/bigdata/hdfs/name has been successfully formatted. 18/06/04 14:26:56 WARN common.Util: Path /data/bigdata/hdfs/name should be specified as a URI in configuration files. Please update hdfs configuration. 18/06/04 14:26:56 WARN common.Util: Path /data/bigdata/hdfs/name should be specified as a URI in configuration files. Please update hdfs configuration. 
18/06/04 14:26:57 INFO namenode.TransferFsImage: Opening connection to http://node1:50070/imagetransfer?getimage=1&txid=0&storageInfo=-63:736429223:0:CID-9d4854cd-7201-4e0d-9536-36e73195dc5a
18/06/04 14:26:57 INFO namenode.TransferFsImage: Image Transfer timeout configured to 60000 milliseconds
18/06/04 14:26:57 INFO namenode.TransferFsImage: Transfer took 0.00s at 0.00 KB/s
18/06/04 14:26:57 INFO namenode.TransferFsImage: Downloaded file fsimage.ckpt_0000000000000000000 size 306 bytes.
18/06/04 14:26:57 INFO util.ExitUtil: Exiting with status 0
18/06/04 14:26:57 INFO namenode.NameNode: SHUTDOWN_MSG:
/************************************************************
SHUTDOWN_MSG: Shutting down NameNode at node2/192.168.1.102
************************************************************/
# If the synchronization fails, copy the /data/bigdata/hdfs/name directory from node1 directly instead
# Then start the standby NameNode
[root@node2 hadoop]# ./sbin/hadoop-daemon.sh start namenode
starting namenode, logging to /data/bigdata/src/hadoop-2.7.6/logs/hadoop-root-namenode-node2.out

6. Format ZKFC

Format ZKFC so that the HA znode is created in ZooKeeper. Run the following command on the master (node1) to complete the formatting:

[root@node1 hadoop]# ./bin/hdfs zkfc -formatZK
18/06/04 16:53:15 INFO tools.DFSZKFailoverController: Failover controller configured for NameNode NameNode at node1/192.168.1.101:8020
18/06/04 16:53:16 INFO zookeeper.ZooKeeper: Client environment:zookeeper.version=3.4.6-1569965, built on 02/20/2014 09:09 GMT
18/06/04 16:53:16 INFO zookeeper.ZooKeeper: Client environment:host.name=node1
18/06/04 16:53:16 INFO zookeeper.ZooKeeper: Client environment:java.version=1.8.0_162
18/06/04 16:53:16 INFO zookeeper.ZooKeeper: Client environment:java.vendor=Oracle Corporation
18/06/04 16:53:16 INFO zookeeper.ZooKeeper: Client environment:java.home=/opt/jdk1.8.0_162/jre
.......................................... //Omit some ...........................................
18/06/04 16:53:16 INFO ha.ActiveStandbyElector: Session connected.
18/06/04 16:53:16 INFO ha.ActiveStandbyElector: Successfully created /hadoop-ha/mycluster in ZK.
18/06/04 16:53:16 INFO zookeeper.ZooKeeper: Session: 0x20034776a7c0000 closed
18/06/04 16:53:16 INFO zookeeper.ClientCnxn: EventThread shut down
[root@node1 hadoop]# ./sbin/hadoop-daemon.sh start zkfc
starting zkfc, logging to /data/bigdata/src/hadoop-2.7.6/logs/hadoop-root-zkfc-node1.out
[root@node1 hadoop]# jps
5443 DFSZKFailoverController # New process
4664 JournalNode
23993 QuorumPeerMain
5545 Jps
4988 NameNode
[root@node1 hadoop]#
# Start zkfc on the other node as well: every node that runs a NameNode must also run zkfc
[root@node2 hadoop]# ./sbin/hadoop-daemon.sh start zkfc

7. Start hdfs (datanode)

[root@node1 hadoop]# ./sbin/start-dfs.sh Starting namenodes on [node1 node2] node1: namenode running as process 25050. Stop it first. node2: namenode running as process 30976. Stop it first. node1: starting datanode, logging to /data/bigdata/src/hadoop-2.7.6/logs/hadoop-root-datanode-node1.out node2: starting datanode, logging to /data/bigdata/src/hadoop-2.7.6/logs/hadoop-root-datanode-node2.out node5: starting datanode, logging to /data/bigdata/src/hadoop-2.7.6/logs/hadoop-root-datanode-node5.out node3: starting datanode, logging to /data/bigdata/src/hadoop-2.7.6/logs/hadoop-root-datanode-node3.out node4: starting datanode, logging to /data/bigdata/src/hadoop-2.7.6/logs/hadoop-root-datanode-node4.out Starting journal nodes [node1 node2 node3] node1: journalnode running as process 24474. Stop it first. node3: journalnode running as process 19893. Stop it first. node2: journalnode running as process 29871. Stop it first. Starting ZK Failover Controllers on NN hosts [node1 node2] node1: starting zkfc, logging to /data/bigdata/src/hadoop-2.7.6/logs/hadoop-root-zkfc-node1.out node2: starting zkfc, logging to /data/bigdata/src/hadoop-2.7.6/logs/hadoop-root-zkfc-node2.out [root@node1 hadoop]# jps 25968 DataNode # All nodes have 23993 QuorumPeerMain 5443 DFSZKFailoverController 25050 NameNode 24474 JournalNode 26525 Jps [root@node1 hadoop]#

8. Start yarn:

[root@node1 hadoop]# ./sbin/start-yarn.sh starting yarn daemons starting resourcemanager, logging to /data/bigdata/src/hadoop-2.7.6/logs/yarn-root-resourcemanager-node1.out node1: starting nodemanager, logging to /data/bigdata/src/hadoop-2.7.6/logs/yarn-root-nodemanager-node1.out node2: starting nodemanager, logging to /data/bigdata/src/hadoop-2.7.6/logs/yarn-root-nodemanager-node2.out node4: starting nodemanager, logging to /data/bigdata/src/hadoop-2.7.6/logs/yarn-root-nodemanager-node4.out node3: starting nodemanager, logging to /data/bigdata/src/hadoop-2.7.6/logs/yarn-root-nodemanager-node3.out node5: starting nodemanager, logging to /data/bigdata/src/hadoop-2.7.6/logs/yarn-root-nodemanager-node5.out [root@node1 hadoop]# jps 25968 DataNode 23993 QuorumPeerMain 5443 DFSZKFailoverController 25050 NameNode 24474 JournalNode 27068 Jps 26894 NodeManager # All nodes have [root@node1 hadoop]#

9. Start ResourceManager on the two ResourceManager nodes

(1) Single start

[root@node3 hadoop]# ./sbin/yarn-daemon.sh start resourcemanager starting resourcemanager, logging to /data/bigdata/src/hadoop-2.7.6/logs/yarn-root-resourcemanager-node3.out [root@node4 hadoop]# ./sbin/yarn-daemon.sh start resourcemanager # Corresponding process (other similar) [root@node3 ~]# jps 21088 NodeManager 21297 ResourceManager # This process 19459 QuorumPeerMain 19893 JournalNode 20714 DataNode 21535 Jps [root@node3 ~]#

(2) Cluster start

A YARN cluster in production has two ResourceManager nodes. Starting them one by one is tedious, so a custom script can start all ResourceManager nodes in the cluster, as follows:

[root@node1 bigdata]# pwd
/data/bigdata
[root@node1 bigdata]# cat yarn_all_resourcemanager.sh
#!/bin/bash
# resourcemanager management
hadoop_yarn_daemon_home=/data/bigdata/hadoop/sbin/
yarn_resourcemanager_node=( "node3" "node4" )
for renode in ${yarn_resourcemanager_node[@]}; do
  # Connect to the loop variable $renode (the original script used the undefined $znode here)
  ssh -p 22 -q root@$renode "
  export PATH=/usr/local/sbin:/usr/local/bin:/sbin:/bin:/usr/sbin:/usr/bin:/root/bin
  source /etc/profile
  cd $hadoop_yarn_daemon_home/ && ./yarn-daemon.sh $1 resourcemanager
  "
  echo "$renode resourcemanager $1 done"
done
[root@node1 bigdata]#

The web consoles of HDFS and YARN listen on ports 50070 and 8088 by default. You can check the running status in a browser, for example:
http://192.168.1.101:50070/
http://192.168.1.103:8088/

# Stop commands (do not run them now):
$HADOOP_HOME/sbin/stop-dfs.sh
$HADOOP_HOME/sbin/stop-yarn.sh

# If everything is normal, jps shows the running Hadoop services. The result on this machine is:
[root@node1 hadoop]# jps
7312 Jps
1793 NameNode
2163 JournalNode
357 NodeManager
2696 QuorumPeerMain
14428 DFSZKFailoverController
1917 DataNode

Processes started so far:

| Process | node1 | node2 | node3 | node4 | node5 |
|---|---|---|---|---|---|
| JDK | ✔ | ✔ | ✔ | ✔ | ✔ |
| QuorumPeerMain | ✔ | ✔ | ✔ | | |
| JournalNode | ✔ | ✔ | ✔ | | |
| NameNode | ✔ | ✔ | | | |
| DFSZKFailoverController | ✔ | ✔ | | | |
| DataNode | ✔ | ✔ | ✔ | ✔ | ✔ |
| NodeManager | ✔ | ✔ | ✔ | ✔ | ✔ |
| ResourceManager | | | ✔ | ✔ | |
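Comparing jps output against the table above by hand is error-prone on five nodes. The following is a minimal sketch of an automated check, assuming jps prints one "<pid> <name>" pair per line as in the transcripts in this article. The function name missing_daemons is hypothetical.

```shell
#!/bin/bash
# Read "jps" output (lines of "<pid> <name>") from stdin and print every
# expected daemon name that is not present. Prints nothing if all are running.
# The function name is an illustrative assumption.
missing_daemons() {
  local running name
  # Keep only the second column, which is the process name
  running="$(awk '{print $2}')"
  for name in "$@"; do
    # -x matches the whole line, -F treats the name as a fixed string
    if ! printf '%s\n' "$running" | grep -qxF "$name"; then
      echo "$name"
    fi
  done
}

# Example for node1, using the daemons that the table lists for it:
# jps | missing_daemons QuorumPeerMain JournalNode NameNode \
#   DFSZKFailoverController DataNode NodeManager
```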

10. Verify the HA function of HDFS

Find the NameNode process ID with the jps command on either NameNode machine, kill the process with kill -9, and check whether the other NameNode changes from standby to active.

[root@node1 bigdata]# jps 16704 JournalNode 16288 NameNode 16433 DataNode 23993 QuorumPeerMain 17241 NodeManager 18621 Jps 16942 DFSZKFailoverController [root@node1 bigdata]# kill -9 16288

Then check the zkfc log on the NameNode machine that was in standby state. If the following entry appears as the last line, the failover succeeded:

2018-05-31 16:14:41,114 INFO org.apache.hadoop.ha.ZKFailoverController: Successfully transitioned NameNode at hd0/192.168.1.102:53310 to active state

Now restart the NameNode process that was killed:

[root@node1 hadoop]# ./sbin/hadoop-daemon.sh start namenode

The last line of the zkfc log on that machine is then:

2018-05-31 16:14:55,683 INFO org.apache.hadoop.ha.ZKFailoverController: Successfully transitioned NameNode at hd2/192.168.1.101:53310 to standby state

You can kill the namenode process back and forth between two namenode machines to check the HA configuration of HDFS!
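Besides reading the zkfc logs, the state of each NameNode can be queried with hdfs haadmin -getServiceState. The following is a minimal sketch of a check built on that command, assuming the NameNode IDs are nn1 and nn2 as printed in the bootstrapStandby output earlier. The function name ha_states_ok is hypothetical.

```shell
#!/bin/bash
# Succeed only if exactly one of the given NameNode states is "active"
# and every other one is "standby".
# The function name is an illustrative assumption.
ha_states_ok() {
  local active=0 state
  for state in "$@"; do
    case "$state" in
      active)  active=$((active + 1)) ;;
      standby) ;;
      *) return 1 ;;   # unknown or empty state, for example a NameNode that is down
    esac
  done
  [ "$active" -eq 1 ]
}

# Example on the cluster (nn1 and nn2 are assumed to be the configured NameNode IDs):
# ha_states_ok "$(hdfs haadmin -getServiceState nn1)" \
#              "$(hdfs haadmin -getServiceState nn2)" && echo "HA OK"
```

Run the check after the killed NameNode has been restarted: while one NameNode is down its state is empty, so the check fails by design.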

7, scala

1. Preparation before configuration

Scala runs on the JVM, so a JDK must be configured first.

2. Decompress

[root@node1 sbin]# tar -zxvf /data/tools/scala-2.11.12.tgz -C /data/bigdata/src [root@node1 sbin]# ln -s /data/bigdata/src/scala-2.11.12 /data/bigdata/scala # Add environment variable [root@node1 ~]# echo -e "\n# scala\nexport scala_HOME=/data/bigdata/scala\nexport PATH=\$scala_HOME/bin:\$PATH" >> /etc/profile.d/bigdata_path.sh [root@node1 ~]# cat /etc/profile.d/bigdata_path.sh # zookeeper export ZOOKEEPER_HOME=/data/bigdata/zookeeper export PATH=$ZOOKEEPER_HOME/bin:$PATH # hadoop export HADOOP_HOME=/data/bigdata/hadoop export PATH=$HADOOP_HOME/bin:$HADOOP_HOME/sbin:$PATH # scala export scala_HOME=/data/bigdata/scala export PATH=$scala_HOME/bin:$PATH [root@node1 ~]# source /etc/profile

3. View scala version

[root@node1 ~]# scala -version Scala code runner version 2.11.12 -- Copyright 2002-2016, LAMP/EPFL

4. Running scala commands

[root@node1 ~]# scala Welcome to Scala 2.11.12 (Java HotSpot(TM) 64-Bit Server VM, Java 1.8.0_162). Type in expressions for evaluation. Or try :help. scala> 1+1 res0: Int = 2 scala>

If the above two steps are OK, it means that scala has been installed and configured successfully.

8, Install spark

1. Extract spark

[root@node1 conf]# tar -zxvf /data/tools/spark-2.1.2-bin-hadoop2.7.tgz -C /data/bigdata/src/
[root@node1 conf]# ln -s /data/bigdata/src/spark-2.1.2-bin-hadoop2.7 /data/bigdata/spark
# Add environment variables
[root@node1 ~]# echo -e "\n# spark\nexport SPARK_HOME=/data/bigdata/spark\nexport PATH=\$SPARK_HOME/bin:\$PATH" >> /etc/profile.d/bigdata_path.sh
[root@node1 ~]# cat /etc/profile.d/bigdata_path.sh

# zookeeper
export ZOOKEEPER_HOME=/data/bigdata/zookeeper
export PATH=$ZOOKEEPER_HOME/bin:$PATH

# hadoop
export HADOOP_HOME=/data/bigdata/hadoop
export PATH=$HADOOP_HOME/bin:$HADOOP_HOME/sbin:$PATH

# scala
export scala_HOME=/data/bigdata/scala
export PATH=$scala_HOME/bin:$PATH

# spark
export SPARK_HOME=/data/bigdata/spark
export PATH=$SPARK_HOME/bin:$PATH
[root@node1 ~]# source /etc/profile
[root@node1 ~]#

2. Configure spark-env.sh

[root@node1 conf]# cd /data/bigdata/spark/conf/
[root@node1 conf]# cp spark-env.sh{.template,}
[root@node1 conf]# vim spark-env.sh
# Add:
export SPARK_LOCAL_IP="192.168.1.101" # On each worker node, change this to the node's own IP (or 127.0.0.1), or comment it out
export SPARK_MASTER_IP="192.168.1.101"
export JAVA_HOME=/opt/java
export SPARK_PID_DIR=/data/bigdata/hdfs/pids
export SPARK_LOCAL_DIRS=/data/bigdata/sparktmp
export PYSPARK_PYTHON=/usr/local/bin/python3 # Absolute path of python3, needed when developing with Python 3
# Memory that the worker on this node can use
export SPARK_WORKER_MEMORY=58g
export SPARK_MASTER_PORT=7077
export HADOOP_CONF_DIR=$HADOOP_HOME/etc/hadoop
export LD_LIBRARY_PATH=$HADOOP_HOME/lib/native
export SPARK_HISTORY_OPTS="-Dspark.history.ui.port=18080 -Dspark.history.retainedApplications=3 -Dspark.history.fs.logDirectory=hdfs://mycluster/directory"
# Default number of cores given to an application that does not set spark.cores.max
export SPARK_MASTER_OPTS="-Dspark.deploy.defaultCores=16"
export SPARK_SSH_OPTS="-p 22 -o StrictHostKeyChecking=no $SPARK_SSH_OPTS"

3. Configure spark-defaults.conf

[root@node1 conf]# cp spark-defaults.conf{.template,}
[root@node1 conf]# vim spark-defaults.conf
# Add:
spark.serializer org.apache.spark.serializer.KryoSerializer
spark.eventLog.enabled true
spark.eventLog.dir hdfs://mycluster/directory
# Needed when developing with Python 3+
spark.executorEnv.PYTHONHASHSEED=0

# Create the corresponding directories
[root@node1 conf]# mkdir -pv /data/bigdata/sparktmp
[root@node1 conf]# hdfs dfs -mkdir /directory

Explanation of the spark.executorEnv.PYTHONHASHSEED=0 setting:

If you use Python 3+ and call functions such as distinct(), reduceByKey(), and join() on a Spark cluster, the following exception is raised:

Exception: Randomness of hash of string should be disabled via PYTHONHASHSEED

Python 3 randomizes string hashes per interpreter process, so each node computes a different hash value for the same key during a calculation, which leads to inconsistent key placement across the cluster. Setting PYTHONHASHSEED fixes the hash seed so that every node hashes keys identically. See:

Spark cluster configuration (4): other pit filling miscellaneous

4. Configure slaves

[root@node1 conf]# cp slaves{.template,} [root@node1 conf]# vim slaves node1 node2 node3 node4 node5

5. Distribution

[root@node1 conf]# scp -rp /data/bigdata/src/spark-2.1.2-bin-hadoop2.7 node2:/data/bigdata/src/
[root@node1 conf]# scp -rp /data/bigdata/src/spark-2.1.2-bin-hadoop2.7 node3:/data/bigdata/src/
[root@node1 conf]# scp -rp /data/bigdata/src/spark-2.1.2-bin-hadoop2.7 node4:/data/bigdata/src/
[root@node1 conf]# scp -rp /data/bigdata/src/spark-2.1.2-bin-hadoop2.7 node5:/data/bigdata/src/
# Distribute the created directories
[root@node1 bigdata]# scp -rp /data/bigdata/{hdfs,sparktmp,tmp} node2:/data/bigdata/
[root@node1 bigdata]# scp -rp /data/bigdata/{hdfs,sparktmp,tmp} node3:/data/bigdata/
[root@node1 bigdata]# scp -rp /data/bigdata/{hdfs,sparktmp,tmp} node4:/data/bigdata/
[root@node1 bigdata]# scp -rp /data/bigdata/{hdfs,sparktmp,tmp} node5:/data/bigdata/
# Create the soft links
[root@node2 ~]# ln -s /data/bigdata/src/spark-2.1.2-bin-hadoop2.7 /data/bigdata/spark
[root@node3 ~]# ln -s /data/bigdata/src/spark-2.1.2-bin-hadoop2.7 /data/bigdata/spark
[root@node4 ~]# ln -s /data/bigdata/src/spark-2.1.2-bin-hadoop2.7 /data/bigdata/spark
[root@node5 ~]# ln -s /data/bigdata/src/spark-2.1.2-bin-hadoop2.7 /data/bigdata/spark
# Modify the SPARK_LOCAL_IP parameter in $SPARK_HOME/conf/spark-env.sh on each node
[root@node2 ~]# vim $SPARK_HOME/conf/spark-env.sh
export SPARK_LOCAL_IP="192.168.1.102"
[root@node3 ~]# vim $SPARK_HOME/conf/spark-env.sh
export SPARK_LOCAL_IP="192.168.1.103"
[root@node4 ~]# vim $SPARK_HOME/conf/spark-env.sh
export SPARK_LOCAL_IP="192.168.1.104"
[root@node5 ~]# vim $SPARK_HOME/conf/spark-env.sh
export SPARK_LOCAL_IP="192.168.1.105"
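Editing spark-env.sh by hand on four nodes can be replaced with a small sed wrapper. The following is a minimal sketch, assuming the file already contains a line that starts with export SPARK_LOCAL_IP= as configured on node1. The function name set_spark_local_ip is hypothetical.

```shell
#!/bin/bash
# Rewrite the SPARK_LOCAL_IP line of a spark-env.sh file in place.
# Usage: set_spark_local_ip <file> <ip>
# The function name is an illustrative assumption.
set_spark_local_ip() {
  local file="$1" ip="$2" tmp
  tmp="$(mktemp)" || return 1
  # Replace the whole line (including any trailing comment) with the new value,
  # then write the result back so the file keeps its permissions
  sed "s|^export SPARK_LOCAL_IP=.*|export SPARK_LOCAL_IP=\"${ip}\"|" "$file" > "$tmp" \
    && cat "$tmp" > "$file"
  local rc=$?
  rm -f "$tmp"
  return "$rc"
}

# Example, run on node2 after the distribution step:
# set_spark_local_ip "$SPARK_HOME/conf/spark-env.sh" 192.168.1.102
```

Writing through a temporary file instead of using sed -i is a deliberate choice here, because the in-place flag behaves differently between sed implementations.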

6. Start spark

[root@node1 bin]# cd /data/bigdata/spark/sbin [root@node1 sbin]# ./start-all.sh starting org.apache.spark.deploy.master.Master, logging to /data/bigdata/spark/logs/spark-root-org.apache.spark.deploy.master.Master-1-node1.out node1: starting org.apache.spark.deploy.worker.Worker, logging to /data/bigdata/spark/logs/spark-root-org.apache.spark.deploy.worker.Worker-1-node1.out node5: starting org.apache.spark.deploy.worker.Worker, logging to /data/bigdata/spark/logs/spark-root-org.apache.spark.deploy.worker.Worker-1-node5.out node3: starting org.apache.spark.deploy.worker.Worker, logging to /data/bigdata/spark/logs/spark-root-org.apache.spark.deploy.worker.Worker-1-node3.out node4: starting org.apache.spark.deploy.worker.Worker, logging to /data/bigdata/spark/logs/spark-root-org.apache.spark.deploy.worker.Worker-1-node4.out node2: starting org.apache.spark.deploy.worker.Worker, logging to /data/bigdata/spark/logs/spark-root-org.apache.spark.deploy.worker.Worker-1-node2.out [root@node1 sbin]# jps 5443 DFSZKFailoverController 5684 Master # master of spark 1092 HRegionServer 5846 Worker # spark process 904 HMaster 4664 JournalNode 23993 QuorumPeerMain 6266 Jps 7227 NodeManager 4988 NameNode 6495 DataNode

web interface: http://192.168.1.101:8080/

[root@node1 sbin]# ./start-history-server.sh starting org.apache.spark.deploy.history.HistoryServer, logging to /data/bigdata/spark/logs/spark-root-org.apache.spark.deploy.history.HistoryServer-1-node1.out [root@node1 sbin]# jps 5443 DFSZKFailoverController 5684 Master # master of spark 1092 HRegionServer 5846 Worker # spark process 904 HMaster 4664 JournalNode 29366 HistoryServer # spark keeps the historical log 23993 QuorumPeerMain 6266 Jps 7227 NodeManager 4988 NameNode 6495 DataNode

Visit WEBUI: http://192.168.1.101:18080/

Processes started so far:

| Process | node1 | node2 | node3 | node4 | node5 |
|---|---|---|---|---|---|
| JDK | ✔ | ✔ | ✔ | ✔ | ✔ |
| QuorumPeerMain | ✔ | ✔ | ✔ | | |
| JournalNode | ✔ | ✔ | ✔ | | |
| NameNode | ✔ | ✔ | | | |
| DFSZKFailoverController | ✔ | ✔ | | | |
| DataNode | ✔ | ✔ | ✔ | ✔ | ✔ |
| NodeManager | ✔ | ✔ | ✔ | ✔ | ✔ |
| ResourceManager | | | ✔ | ✔ | |
| Master | ✔ | | | | |
| Worker | ✔ | ✔ | ✔ | ✔ | ✔ |
| HistoryServer | ✔ | | | | |

9, Installing hbase

The HBase Master runs on the same host as Hadoop's NameNode and communicates with the DataNodes to read and write HDFS data. The RegionServers run on the same hosts as Hadoop's DataNodes.

References:

Official documents

Brief introduction of hbase database installation and use of common commands

1. Decompress hbase

[root@node1 sbin]# tar -zxvf /data/tools/hbase-1.2.6-bin.tar.gz -C /data/bigdata/src [root@node1 sbin]# ln -s /data/bigdata/src/hbase-1.2.6 /data/bigdata/hbase # Add environment variable [root@node1 ~]# echo -e "\n# hbase\nexport HBASE_HOME=/data/bigdata/hbase\nexport PATH=\$HBASE_HOME/bin:\$PATH" >> /etc/profile.d/bigdata_path.sh [root@node1 ~]# cat /etc/profile.d/bigdata_path.sh # zookeeper export ZOOKEEPER_HOME=/data/bigdata/zookeeper export PATH=$ZOOKEEPER_HOME/bin:$PATH # hadoop export HADOOP_HOME=/data/bigdata/hadoop export PATH=$HADOOP_HOME/bin:$HADOOP_HOME/sbin:$PATH # scala export scala_HOME=/data/bigdata/scala export PATH=$scala_HOME/bin:$PATH # spark export SPARK_HOME=/data/bigdata/spark export PATH=$SPARK_HOME/bin:$PATH # hbase export HBASE_HOME=/data/bigdata/hbase export PATH=$HBASE_HOME/bin:$PATH [root@node1 ~]#

2. Modify $HBASE_HOME/conf/hbase-env.sh and add:

[root@node1 sbin]# cd /data/bigdata/hbase/conf
[root@node1 conf]# vim hbase-env.sh
export JAVA_HOME=/opt/java
export HBASE_HOME=/data/bigdata/hbase
export LD_LIBRARY_PATH=$LD_LIBRARY_PATH:$HADOOP_HOME/lib/native/
export HBASE_LIBRARY_PATH=$HBASE_LIBRARY_PATH:$HBASE_HOME/lib/native/
# Point to Hadoop's etc/hadoop directory so that HBase can find the Hadoop configuration. This must be set, otherwise HMaster cannot start.
export HBASE_CLASSPATH=$HADOOP_HOME/etc/hadoop
export HBASE_MANAGES_ZK=false # Do not use the ZooKeeper bundled with HBase
export HBASE_PID_DIR=/data/bigdata/hdfs/pids
export HBASE_SSH_OPTS="-o ConnectTimeout=1 -p 22" # SSH port
# Comment out the following two lines for JDK 1.8 and above
# Configure PermSize. Only needed in JDK7. You can safely remove it for JDK8+
#export HBASE_MASTER_OPTS="$HBASE_MASTER_OPTS -XX:PermSize=128m -XX:MaxPermSize=128m"
#export HBASE_REGIONSERVER_OPTS="$HBASE_REGIONSERVER_OPTS -XX:PermSize=128m -XX:MaxPermSize=128m"

3. Modify the regionservers file

[root@node1 conf]# vim regionservers node1 node2 node3

4. Modify hbase-site.xml file

[root@node1 conf]# vim hbase-site.xml
<configuration>
  <property>
    <name>hbase.rootdir</name>
    <value>hdfs://mycluster/hbase</value>
  </property>
  <property>
    <name>hbase.zookeeper.quorum</name>
    <value>node1,node2,node3</value>
    <description>Host names of the ZooKeeper cluster</description>
  </property>
  <property>
    <name>hbase.zookeeper.property.clientPort</name>
    <value>2181</value>
  </property>
  <property>
    <name>hbase.master.info.port</name>
    <value>60010</value>
  </property>
  <property>
    <name>hbase.cluster.distributed</name>
    <value>true</value>
    <description>Enable this parameter when running HBase on multiple nodes</description>
  </property>
</configuration>

5. Distribution

[root@node1 conf]# scp -rp /data/bigdata/src/hbase-1.2.6 node2:/data/bigdata/src/ [root@node1 conf]# scp -rp /data/bigdata/src/hbase-1.2.6 node3:/data/bigdata/src/ # Create a soft connection [root@node2 ~]# ln -s /data/bigdata/src/hbase-1.2.6 /data/bigdata/hbase [root@node3 ~]# ln -s /data/bigdata/src/hbase-1.2.6 /data/bigdata/hbase

6. Start hbase

[root@node1 hadoop]# cd /data/bigdata/hbase/bin [root@node1 bin]# ./start-hbase.sh [root@node1 bin]# jps 5443 DFSZKFailoverController 1092 HRegionServer # hbase process 904 HMaster # hbase master node 4664 JournalNode 23993 QuorumPeerMain 14730 Master 7227 NodeManager 4988 NameNode 14877 Worker 1917 Jps 6495 DataNode [root@node1 bin]#

Processes started so far:

| Process | node1 | node2 | node3 | node4 | node5 |
|---|---|---|---|---|---|
| JDK | ✔ | ✔ | ✔ | ✔ | ✔ |
| QuorumPeerMain | ✔ | ✔ | ✔ | | |
| JournalNode | ✔ | ✔ | ✔ | | |
| NameNode | ✔ | ✔ | | | |
| DFSZKFailoverController | ✔ | ✔ | | | |
| DataNode | ✔ | ✔ | ✔ | ✔ | ✔ |
| NodeManager | ✔ | ✔ | ✔ | ✔ | ✔ |
| ResourceManager | | | ✔ | ✔ | |
| Master | ✔ | | | | |
| Worker | ✔ | ✔ | ✔ | ✔ | ✔ |
| HistoryServer | ✔ | | | | |
| HMaster | ✔ | | | | |
| HRegionServer | ✔ | ✔ | ✔ | | |

HBase RegionServer web UI: http://192.168.1.101:16030

HBase Master web UI: http://192.168.1.101:60010

# test [root@node1 bin]# ./hbase shell SLF4J: Class path contains multiple SLF4J bindings. SLF4J: Found binding in [jar:file:/data/bigdata/src/hbase-1.2.6/lib/slf4j-log4j12-1.7.5.jar!/org/slf4j/impl/StaticLoggerBinder.class] SLF4J: Found binding in [jar:file:/data/bigdata/src/hadoop-2.7.6/share/hadoop/common/lib/slf4j-log4j12-1.7.10.jar!/org/slf4j/impl/StaticLoggerBinder.class] SLF4J: See http://www.slf4j.org/codes.html#multiple_bindings for an explanation. SLF4J: Actual binding is of type [org.slf4j.impl.Log4jLoggerFactory] HBase Shell; enter 'help<RETURN>' for list of supported commands. Type "exit<RETURN>" to leave the HBase Shell Version 1.2.6, rUnknown, Mon May 29 02:25:32 CDT 2017 hbase(main):001:0> list # Enter command list TABLE 0 row(s) in 0.2670 seconds => [] hbase(main):002:0> quit [root@node1 bin]#

10, kafka

Official documents

Best experience of kafka in actual combat

1. Decompress and create environment variables

[root@node4 conf]# tar -zxvf /data/tools/kafka_2.11-1.1.0.tgz -C /data/bigdata/src/ [root@node4 conf]# ln -s /data/bigdata/src/kafka_2.11-1.1.0 /data/bigdata/kafka # Add environment variable [root@node4 ~]# echo -e "\n# kafka\nexport KAFKA_HOME=/data/bigdata/kafka\nexport PATH=\$KAFKA_HOME/bin:\$PATH" >> /etc/profile.d/bigdata_path.sh [root@node4 ~]# cat /etc/profile.d/bigdata_path.sh # zookeeper export ZOOKEEPER_HOME=/data/bigdata/zookeeper export PATH=$ZOOKEEPER_HOME/bin:$PATH # hadoop export HADOOP_HOME=/data/bigdata/hadoop export PATH=$HADOOP_HOME/bin:$HADOOP_HOME/sbin:$PATH # hbase export HBASE_HOME=/data/bigdata/hbase export PATH=$HBASE_HOME/bin:$PATH # scala export scala_HOME=/data/bigdata/scala export PATH=$scala_HOME/bin:$PATH # spark export SPARK_HOME=/data/bigdata/spark export PATH=$SPARK_HOME/bin:$PATH # kafka export KAFKA_HOME=/data/bigdata/kafka export PATH=$KAFKA_HOME/bin:$PATH # take effect [root@node4 conf]# source /etc/profile

2. Modify the server.properties configuration file:

[root@node4 ~]# cd /data/bigdata/kafka/config/ # Enter the config directory
[root@node4 config]# cp server.properties{,.bak} # Back up the configuration file
[root@node4 config]# vim server.properties
broker.id=1
listeners=PLAINTEXT://node4:9092
advertised.listeners=PLAINTEXT://node4:9092
log.dirs=/data/bigdata/kafka/logs
zookeeper.connect=node1:2181,node2:2181,node3:2181
[root@node4 config]# mkdir -p /data/bigdata/kafka/logs

3. Distribution

[root@node4 conf]# scp -rp /data/bigdata/src/kafka_2.11-1.1.0 node5:/data/bigdata/src/
# On node5
[root@node5 ~]# ln -s /data/bigdata/src/kafka_2.11-1.1.0 /data/bigdata/kafka
[root@node5 ~]# mkdir -p /data/bigdata/kafka/logs
# Modify broker.id and the listener addresses in each node's server.properties
[root@node5 ~]# cd /data/bigdata/kafka/
[root@node5 kafka]# vim ./config/server.properties
broker.id=2
listeners=PLAINTEXT://node5:9092
advertised.listeners=PLAINTEXT://node5:9092
# Check
[root@node1 conf]# ansible kafka -m shell -a '$(which egrep) --color=auto "^broker.id|^listeners|^advertised.listeners" ${KAFKA_HOME}/config/server.properties'
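Only three settings differ between the brokers, so they can be generated from the broker ID and host name instead of being typed on each node. The following is a minimal sketch, assuming port 9092 and the PLAINTEXT listener used above. The function name broker_overrides is hypothetical.

```shell
#!/bin/bash
# Print the per-broker settings that differ between Kafka nodes.
# Usage: broker_overrides <broker-id> <hostname>
# The function name is an illustrative assumption.
broker_overrides() {
  local id="$1" host="$2"
  printf 'broker.id=%s\n' "$id"
  printf 'listeners=PLAINTEXT://%s:9092\n' "$host"
  printf 'advertised.listeners=PLAINTEXT://%s:9092\n' "$host"
}

# Example: show the values expected on node5 and compare them with the file.
# broker_overrides 2 node5
# grep -E '^(broker.id|listeners|advertised.listeners)=' /data/bigdata/kafka/config/server.properties
```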

4. Start

[root@node4 kafka]# ./bin/kafka-server-start.sh config/server.properties # Run a single node in the foreground
[2018-06-05 13:32:35,323] INFO Registered kafka:type=kafka.Log4jController MBean (kafka.utils.Log4jControllerRegistration$)
[2018-06-05 13:32:35,672] INFO starting (kafka.server.KafkaServer)
[2018-06-05 13:32:35,673] INFO Connecting to zookeeper on node1:2181,node2:2181,node3:2181 (kafka.server.KafkaServer)
[2018-06-05 13:32:35,692] INFO [ZooKeeperClient] Initializing a new session to node1:2181,node2:2181,node3:2181. (kafka.zookeeper.ZooKeeperClient)
[2018-06-05 13:32:35,698] INFO Client environment:zookeeper.version=3.4.10-39d3a4f269333c922ed3db283be479f9deacaa0f, built on 03/23/2017 10:13 GMT
.......................................... //Omit some ...........................................
[2018-06-05 13:33:38,920] INFO Terminating process due to signal SIGINT (kafka.Kafka$)
[2018-06-05 13:33:39,168] INFO [ThrottledRequestReaper-Produce]: Stopped (kafka.server.ClientQuotaManager$ThrottledRequestReaper)
[2018-06-05 13:33:39,168] INFO [ThrottledRequestReaper-Produce]: Shutdown completed (kafka.server.ClientQuotaManager$ThrottledRequestReaper)
[2018-06-05 13:33:39,168] INFO [ThrottledRequestReaper-Request]: Shutting down (kafka.server.ClientQuotaManager$ThrottledRequestReaper)
[2018-06-05 13:33:39,168] INFO [ThrottledRequestReaper-Request]: Stopped (kafka.server.ClientQuotaManager$ThrottledRequestReaper)
[2018-06-05 13:33:39,168] INFO [ThrottledRequestReaper-Request]: Shutdown completed (kafka.server.ClientQuotaManager$ThrottledRequestReaper)
[2018-06-05 13:33:39,169] INFO [SocketServer brokerId=1] Shutting down socket server (kafka.network.SocketServer)
[2018-06-05 13:33:39,193] INFO [SocketServer brokerId=1] Shutdown completed (kafka.network.SocketServer)
[2018-06-05 13:33:39,199] INFO [KafkaServer id=1] shut down completed (kafka.server.KafkaServer)
[root@node4 kafka]# ./bin/kafka-server-start.sh -daemon config/server.properties # Start a single node in the background

Start Kafka on the other node in the same way. The process name shown by jps is Kafka.

Processes started so far:

| Process | node1 | node2 | node3 | node4 | node5 |
|---|---|---|---|---|---|
| JDK | ✔ | ✔ | ✔ | ✔ | ✔ |
| QuorumPeerMain | ✔ | ✔ | ✔ | | |
| JournalNode | ✔ | ✔ | ✔ | | |
| NameNode | ✔ | ✔ | | | |
| DFSZKFailoverController | ✔ | ✔ | | | |
| DataNode | ✔ | ✔ | ✔ | ✔ | ✔ |
| NodeManager | ✔ | ✔ | ✔ | ✔ | ✔ |
| ResourceManager | ✔ | ✔ | | | |
| HMaster | ✔ | | | | |
| HRegionServer | ✔ | ✔ | ✔ | | |
| HistoryServer | ✔ | | | | |
| Master | ✔ | | | | |
| Worker | ✔ | ✔ | ✔ | ✔ | ✔ |
| Kafka | | | | ✔ | ✔ |

Kafka does not ship a script that starts every node in the cluster at once. A production Kafka cluster usually has several nodes, and starting them one by one is tedious, so I use a custom script to start all nodes in the cluster:

    [root@node1 bigdata]# cat kafka_cluster_start.sh
    #!/bin/bash
    brokers="node4 node5"
    KAFKA_HOME="/data/bigdata/kafka"
    for broker in $brokers
    do
      ssh $broker -C "source /etc/profile; cd ${KAFKA_HOME}/bin && ./kafka-server-start.sh -daemon ../config/server.properties"
      if [ $? -eq 0 ]; then
        echo "INFO:[${broker}] Start successfully "
      fi
    done
    [root@node1 bigdata]#
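The loop above prints a message only when the ssh command succeeds. A minimal sketch of a variant that also reports failures and returns a non-zero status is shown here. The function name start_kafka_cluster and the SSH override variable are my own illustrative assumptions, not part of Kafka; the broker list and KAFKA_HOME come from the script above. Because kafka-server-start.sh -daemon returns as soon as the JVM is launched in the background, a zero status only means the start command was sent, not that the broker stayed up.

```shell
#!/bin/bash
# Sketch only: start_kafka_cluster and the SSH override are hypothetical helpers.
BROKERS="${BROKERS:-node4 node5}"
KAFKA_HOME="${KAFKA_HOME:-/data/bigdata/kafka}"
SSH="${SSH:-ssh}"   # set SSH=echo to print the remote commands instead of running them

start_kafka_cluster() {
    failed=0
    for broker in $BROKERS; do
        # Same remote command as in kafka_cluster_start.sh above.
        if $SSH "$broker" "source /etc/profile; cd ${KAFKA_HOME}/bin && ./kafka-server-start.sh -daemon ../config/server.properties"; then
            echo "INFO:[${broker}] start command sent"
        else
            echo "ERROR:[${broker}] start command failed" >&2
            failed=1
        fi
    done
    return $failed
}

# Usage:
#   start_kafka_cluster || echo "at least one broker could not be started"
```

Running it once with SSH=echo is a cheap way to check the remote command line before touching the brokers.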

5. Testing

Create a topic, specifying the ZooKeeper ensemble to connect to. In this example the topic is named TestTopic:

    [root@node4 kafka]# ./bin/kafka-topics.sh --create --zookeeper node1:2181,node2:2181,node3:2181 --replication-factor 1 --partitions 1 --topic TestTopic
    Created topic "TestTopic".    # Indicates successful creation

List the topics:

    [root@node4 kafka]# ./bin/kafka-topics.sh --list --zookeeper node1:2181,node2:2181,node3:2181
    TestTopic    # All created topics are listed
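The test topic above uses a replication factor of 1, so its single partition lives on one broker only. With two brokers available, a replicated topic can be created and inspected as sketched here. The topic name TestTopicReplicated, the helper names and the KAFKA_TOPICS override are assumptions for illustration; the --create and --describe options are the same kafka-topics.sh options used in this Kafka version.

```shell
#!/bin/bash
# Sketch only: the helper names and the example topic name are hypothetical.
ZK="${ZK:-node1:2181,node2:2181,node3:2181}"
KAFKA_TOPICS="${KAFKA_TOPICS:-./bin/kafka-topics.sh}"   # run from the Kafka directory

# Create a topic whose single partition is replicated on two brokers.
create_replicated_topic() {
    $KAFKA_TOPICS --create --zookeeper "$ZK" \
        --replication-factor 2 --partitions 1 --topic "$1"
}

# Show the leader, replicas and in-sync replicas (ISR) of a topic.
describe_topic() {
    $KAFKA_TOPICS --describe --zookeeper "$ZK" --topic "$1"
}

# Usage:
#   create_replicated_topic TestTopicReplicated
#   describe_topic TestTopicReplicated
```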

On any one node, start a console producer (a client of the Kafka cluster):

    [root@node4 kafka]# ./bin/kafka-console-producer.sh --broker-list node4:9092,node5:9092 --topic TestTopic
    >hi
    >hello 1
    >hello 2
    >

On another node, start a console consumer:

    [root@node5 kafka]# ./bin/kafka-console-consumer.sh --bootstrap-server node4:9092,node5:9092 --from-beginning --topic TestTopic
    hi
    hello 1
    hello 2

Type some messages into the producer and check that the consumer displays them.
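The manual check above can also be run without typing into two terminals. The following is a minimal sketch, assuming it is run from the Kafka directory on one of the brokers; kafka_smoke_test, BROKER_LIST and KAFKA_BIN are names I made up for the example. It pipes one unique message into the console producer, then reads the topic from the beginning and looks for that message. The consumer option --timeout-ms makes the consumer exit when no message arrives within the given interval.

```shell
#!/bin/bash
# Sketch only: kafka_smoke_test is a hypothetical helper; run it from the Kafka directory.
BROKER_LIST="${BROKER_LIST:-node4:9092,node5:9092}"
KAFKA_BIN="${KAFKA_BIN:-./bin}"

kafka_smoke_test() {
    topic="$1"
    msg="smoke-$(date +%s)"      # unique payload so that older messages cannot match
    # Produce one message read from stdin.
    echo "$msg" | "$KAFKA_BIN/kafka-console-producer.sh" \
        --broker-list "$BROKER_LIST" --topic "$topic" >/dev/null || return 1
    # Read the topic from the beginning; give up after 10 s without new messages.
    "$KAFKA_BIN/kafka-console-consumer.sh" --bootstrap-server "$BROKER_LIST" \
        --from-beginning --topic "$topic" --timeout-ms 10000 2>/dev/null \
        | grep -q -x "$msg"
}

# Usage:
#   kafka_smoke_test TestTopic && echo "round trip OK"
```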

6. Close

    [root@node4 kafka]# ./bin/kafka-server-stop.sh    # Stop this broker; stop the other Kafka brokers the same way

Sometimes this does not work and "No kafka server to stop" is displayed.

The reason is that the following command in the kafka-server-stop.sh script does not return the PID of the Kafka process on the operating system I use:

    ps ax | grep -i 'kafka\.Kafka' | grep java | grep -v grep | awk '{print $1}'

So the command that finds the PID in the kafka-server-stop.sh script is modified as follows:

    # Original command, commented out:
    #PIDS=$(ps ax | grep -i 'kafka\.Kafka' | grep java | grep -v grep | awk '{print $1}')
    # Replacement:
    PIDS=$(jps | grep -i 'Kafka' | awk '{print $1}')
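One caveat with the replacement: grep -i 'Kafka' matches every line of jps output that contains "kafka" in any letter case, so another JVM whose main class name happens to contain that string would also be selected. A stricter match on the class name column can be sketched as follows; kafka_pids is a hypothetical helper name, and the sketch assumes jps prints one "pid class-name" pair per line.

```shell
#!/bin/bash
# Sketch only: kafka_pids is a hypothetical helper, not part of Kafka.
# Reads jps output on stdin and prints the PID of entries whose class name is exactly "Kafka".
kafka_pids() {
    awk '$2 == "Kafka" { print $1 }'
}

# Usage inside kafka-server-stop.sh (assumes jps from the JDK is on PATH):
#   PIDS=$(jps | kafka_pids)
```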

Kafka does not ship a script to stop the whole cluster either. Here is a script that shuts down the Kafka cluster (it can be placed on any node):

    [root@node1 bigdata]# cat kafka_cluster_stop.sh
    #!/bin/bash
    brokers="node4 node5"
    KAFKA_HOME="/data/bigdata/kafka"
    for broker in $brokers
    do
      ssh $broker -C "cd ${KAFKA_HOME}/bin && ./kafka-server-stop.sh"
      if [ $? -eq 0 ]; then
        echo "INFO:[${broker}] shut down completed "
      fi
    done
    [root@node1 bigdata]# chmod +x kafka_cluster_stop.sh
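Unlike the start script, the stop script above does not source /etc/profile before running the remote command. If the modified kafka-server-stop.sh relies on jps and the JDK is only added to PATH through /etc/profile (an assumption about this environment), a non-interactive ssh session may not find jps. A hedged sketch that sources the profile first and reports failures is shown here; stop_kafka_cluster and the SSH override are hypothetical names.

```shell
#!/bin/bash
# Sketch only: stop_kafka_cluster and the SSH override are hypothetical helpers.
BROKERS="${BROKERS:-node4 node5}"
KAFKA_HOME="${KAFKA_HOME:-/data/bigdata/kafka}"
SSH="${SSH:-ssh}"   # set SSH=echo to print the remote commands instead of running them

stop_kafka_cluster() {
    rc=0
    for broker in $BROKERS; do
        # Source /etc/profile first so that jps is on PATH in the remote shell (assumption).
        if $SSH "$broker" "source /etc/profile; cd ${KAFKA_HOME}/bin && ./kafka-server-stop.sh"; then
            echo "INFO:[${broker}] stop signal sent"
        else
            echo "ERROR:[${broker}] stop failed" >&2
            rc=1
        fi
    done
    return $rc
}
```

The message says "stop signal sent" because the stop script only signals the broker process; the broker may need a few more seconds to finish shutting down.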

11, hive

Official documents

1. Install the MySQL database

Reference: https://blog.51cto.com/moerjinrong/2092614

    # Create a hive user and the metastore database
    root@node2 14:37: [(none)]> grant all privileges on *.* to 'hive'@'192.168.1.%' identified by '123456';
    Query OK, 0 rows affected, 1 warning (0.00 sec)
    root@node2 15:14: [(none)]> create database metastore;    # tentative
    Query OK, 1 row affected (0.01 sec)
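The grant above gives the hive user all privileges on every database. If that is broader than wanted, the privileges can be limited to the metastore database. The following sketch only prints the SQL; metastore_grant_sql is a hypothetical helper, and the statement keeps the same GRANT ... IDENTIFIED BY form used above, which I assume is accepted by the MySQL version installed here.

```shell
#!/bin/bash
# Sketch only: metastore_grant_sql is a hypothetical helper that just prints SQL.
metastore_grant_sql() {
    db="$1"; user="$2"; host="$3"; pass="$4"
    cat <<EOF
CREATE DATABASE IF NOT EXISTS ${db};
GRANT ALL PRIVILEGES ON ${db}.* TO '${user}'@'${host}' IDENTIFIED BY '${pass}';
FLUSH PRIVILEGES;
EOF
}

# Usage:
#   metastore_grant_sql metastore hive '192.168.1.%' '123456' | mysql -uroot -p
```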

2. Extract and add environment variables

    [root@node2 ~]# tar -zxvf /data/tools/apache-hive-2.3.3-bin.tar.gz -C /data/bigdata/src/
    [root@node2 ~]# ln -s /data/bigdata/src/apache-hive-2.3.3-bin /data/bigdata/hive
    # Add the environment variables
    [root@node2 ~]# echo -e "\n# hive\nexport HIVE_HOME=/data/bigdata/hive\nexport PATH=\$HIVE_HOME/bin:\$PATH" >> /etc/profile.d/bigdata_path.sh
    [root@node2 ~]# cat /etc/profile.d/bigdata_path.sh
    # zookeeper
    export ZOOKEEPER_HOME=/data/bigdata/zookeeper
    export PATH=$ZOOKEEPER_HOME/bin:$PATH
    # hadoop
    export HADOOP_HOME=/data/bigdata/hadoop
    export PATH=$HADOOP_HOME/bin:$HADOOP_HOME/sbin:$PATH
    # hbase
    export HBASE_HOME=/data/bigdata/hbase
    export PATH=$HBASE_HOME/bin:$PATH
    # scala
    export scala_HOME=/data/bigdata/scala
    export PATH=$scala_HOME/bin:$PATH
    # spark
    export SPARK_HOME=/data/bigdata/spark
    export PATH=$SPARK_HOME/bin:$PATH
    # kafka
    export KAFKA_HOME=/data/bigdata/kafka
    export PATH=$KAFKA_HOME/bin:$PATH
    # hive
    export HIVE_HOME=/data/bigdata/hive
    export PATH=$HIVE_HOME/bin:$PATH
    # Apply the changes
    [root@node2 ~]# source /etc/profile
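Running the echo ... >> command a second time would append the same block again. A small sketch of an idempotent version follows; add_profile_block is a hypothetical helper that appends the block only when its marker comment is not yet in the file.

```shell
#!/bin/bash
# Sketch only: add_profile_block is a hypothetical helper.
# Appends "# <name>", "export <VAR>=<dir>" and the PATH line unless "# <name>" already exists.
add_profile_block() {
    file="$1"; name="$2"; var="$3"; dir="$4"
    if grep -q -x "# ${name}" "$file" 2>/dev/null; then
        echo "SKIP: ${name} already present in ${file}"
        return 0
    fi
    printf '\n# %s\nexport %s=%s\nexport PATH=$%s/bin:$PATH\n' \
        "$name" "$var" "$dir" "$var" >> "$file"
}

# Usage:
#   add_profile_block /etc/profile.d/bigdata_path.sh hive HIVE_HOME /data/bigdata/hive
```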

3. Create directories on HDFS

Create the directory /user/hive/warehouse on HDFS (Hadoop must be running first):

    hdfs dfs -mkdir /tmp
    hdfs dfs -mkdir /user
    hdfs dfs -mkdir /user/hive
    hdfs dfs -mkdir /user/hive/warehouse
    hadoop fs -chmod g+w /tmp
    hadoop fs -chmod g+w /user/hive/warehouse
    # Place the MySQL JDBC driver jar, mysql-connector-java-5.1.46.jar, in Hive's lib directory; download it if you do not already have it
    wget -P $HIVE_HOME/lib http://central.maven.org/maven2/mysql/mysql-connector-java/5.1.46/mysql-connector-java-5.1.46.jar
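The four mkdir calls can be collapsed with the -p option, which creates missing parent directories and does not fail when a directory already exists. A minimal sketch, assuming HDFS is running; prepare_hive_dirs and the HDFS override variable are hypothetical names.

```shell
#!/bin/bash
# Sketch only: prepare_hive_dirs is a hypothetical helper; it needs a running HDFS.
HDFS="${HDFS:-hdfs}"   # set HDFS=echo to print the commands instead of running them

prepare_hive_dirs() {
    # -p creates missing parents and tolerates directories that already exist.
    $HDFS dfs -mkdir -p /tmp /user/hive/warehouse || return 1
    # Give the group write access, as in the commands above.
    $HDFS dfs -chmod g+w /tmp /user/hive/warehouse
}
```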

4. Modify the hive-site.xml configuration file:

    [root@node2 ~]# cd /data/bigdata/hive/conf/
    [root@node2 conf]# cp hive-default.xml.template hive-site.xml

    [root@node2 conf]# vim hive-site.xml
    # Modify the following property values (search with / in vim; if the first match is not the right one, jump to the next)
    <property>
      <name>javax.jdo.option.ConnectionURL</name>
      <value>jdbc:mysql://192.168.1.102:3306/hive?createDatabaseIfNotExist=true&amp;useSSL=false</value>
      <!-- MySQL does not have SSL enabled -->
      <description>
        JDBC connect string for a JDBC metastore.
        In a URL, '?' marks the start of the parameters passed by the GET method, and multiple parameters are joined by an ampersand. Because the ampersand has a special meaning in XML, it is written in its escaped form in the value above.
      </description>
    </property>
    <property>
      <name>javax.jdo.option.ConnectionDriverName</name>
      <value>com.mysql.jdbc.Driver</value>
      <description>Driver class name for a JDBC metastore</description>
    </property>
    <property>
      <name>javax.jdo.option.ConnectionUserName</name>
      <value>hive</value>
      <description>Username to use against metastore database</description>
    </property>
    <property>
      <name>javax.jdo.option.ConnectionPassword</name>
      <value>123456</value>
      <description>password to use against metastore database</description>
    </property>
    <property>
      <name>hive.metastore.warehouse.dir</name>
      <value>/data/bigdata/hive/warehouse</value>
    </property>
    <property>
      <name>hive.metastore.local</name>
      <value>true</value>
    </property>
    <property>
      <name>hive.exec.local.scratchdir</name>
      <value>/data/bigdata/hive/tmp</value>
      <description>Local scratch space for Hive jobs</description>
    </property>
    <property>
      <name>hive.downloaded.resources.dir</name>
      <value>/data/bigdata/hive/tmp/resources</value>
      <description>Temporary local directory for added resources in the remote file system.</description>
    </property>
    <property>
      <name>hive.querylog.location</name>
      <value>/data/bigdata/hive/tmp</value>
      <description>Location of Hive run time structured log file</description>
    </property>
    <property>
      <name>hive.server2.logging.operation.log.location</name>
      <value>/data/bigdata/hive/tmp/operation_logs</value>
      <description>Top level directory where operation logs are stored if logging functionality is enabled</description>
    </property>

Note: hive-site.xml is an XML file, so the & that separates parameters in the JDBC URL must be written as &amp;amp; (that is, the five characters ampersand, a, m, p, semicolon) in that file.
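To avoid escaping the URL by hand, the ampersands can be converted with sed before the value is pasted into hive-site.xml. This is a small sketch; xml_escape_amp is a hypothetical helper, and it should only be applied to a raw URL, because running it on an already escaped value would escape it twice.

```shell
#!/bin/bash
# Sketch only: xml_escape_amp is a hypothetical helper.
# Replaces every "&" read from stdin with the XML entity for an ampersand.
xml_escape_amp() {
    sed 's/&/\&amp;/g'
}

# Usage:
#   echo 'jdbc:mysql://192.168.1.102:3306/hive?createDatabaseIfNotExist=true&useSSL=false' | xml_escape_amp
```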

    # Create the directories referenced in hive-site.xml
    [root@node2 conf]# mkdir -pv /data/bigdata/hive/{tmp/{operation_logs,resources},warehouse}

5. Initialize and run

    # Initialize the metastore schema with schematool:
    [root@node2 conf]# schematool -initSchema -dbType mysql
    SLF4J: Class path contains multiple SLF4J bindings.
    SLF4J: Found binding in [jar:file:/data/bigdata/src/apache-hive-2.3.3-bin/lib/log4j-slf4j-impl-2.6.2.jar!/org/slf4j/impl/StaticLoggerBinder.class]
    SLF4J: Found binding in [jar:file:/data/bigdata/src/hadoop-2.7.6/share/hadoop/common/lib/slf4j-log4j12-1.7.10.jar!/org/slf4j/impl/StaticLoggerBinder.class]
    SLF4J: See http://www.slf4j.org/codes.html#multiple_bindings for an explanation.
    SLF4J: Actual binding is of type [org.apache.logging.slf4j.Log4jLoggerFactory]
    Metastore connection URL: jdbc:mysql://127.0.0.1:3306/metastore?useSSL=false
    Metastore Connection Driver : com.mysql.jdbc.Driver
    Metastore connection User: hive
    Starting metastore schema initialization to 2.3.0
    Initialization script hive-schema-2.3.0.mysql.sql
    Initialization script completed
    schemaTool completed
    [root@node2 conf]#
    # Run hive (it appears as a RunJar process)
    [root@node2 conf]# hive
    hive> show databases;
    OK
    default
    Time taken: 1.881 seconds, Fetched: 1 row(s)
    hive> use default;
    OK
    Time taken: 0.081 seconds
    hive> create table kylin_test(test_count int);
    OK
    Time taken: 2.9 seconds
    hive> show tables;
    OK
    Time taken: 0.151 seconds, Fetched: 1 row(s)
    hive> quit;
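Running -initSchema against a database that already contains the metastore tables fails, so it can help to check first. The sketch below uses schematool's -info option, which prints the schema version stored in the metastore. It assumes that -info exits with a non-zero status when no schema is found; init_metastore_once and the SCHEMATOOL override are hypothetical names.

```shell
#!/bin/bash
# Sketch only: init_metastore_once is a hypothetical helper around Hive's schematool.
SCHEMATOOL="${SCHEMATOOL:-schematool}"

init_metastore_once() {
    # -info is assumed to fail when the metastore schema does not exist yet.
    if $SCHEMATOOL -dbType mysql -info >/dev/null 2>&1; then
        echo "metastore schema already initialized"
    else
        $SCHEMATOOL -dbType mysql -initSchema
    fi
}
```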

Processes started so far:

| Process | node1 | node2 | node3 | node4 | node5 |
|---|---|---|---|---|---|
| JDK | ✔ | ✔ | ✔ | ✔ | ✔ |
| QuorumPeerMain | ✔ | ✔ | ✔ | | |
| JournalNode | ✔ | ✔ | ✔ | | |
| NameNode | ✔ | ✔ | | | |
| DFSZKFailoverController | ✔ | ✔ | | | |
| DataNode | ✔ | ✔ | ✔ | ✔ | ✔ |
| NodeManager | ✔ | ✔ | ✔ | ✔ | ✔ |
| ResourceManager | ✔ | ✔ | | | |
| HMaster | ✔ | | | | |
| HRegionServer | ✔ | ✔ | ✔ | | |
| HistoryServer | ✔ | | | | |
| Master | ✔ | | | | |
| Worker | ✔ | ✔ | ✔ | ✔ | ✔ |
| Kafka | | | | ✔ | ✔ |
| RunJar (only when hive is started) | | ✔ | | | |

12, kylin

Official documents

1. Preparation before installation

Before installing Kylin, make sure that Hadoop 2.4+, HBase 0.98+ or 1.x, and Hive 0.13+ are installed and running.

Hive needs to start metastore and hiveserver2.
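A minimal sketch for starting both Hive services in the background is shown here. hive --service metastore and hive --service hiveserver2 are the standard Hive commands; the helper name, the HIVE_BIN override and the log directory are assumptions of mine.

```shell
#!/bin/bash
# Sketch only: the helper name and the log directory are assumptions.
HIVE_BIN="${HIVE_BIN:-hive}"
HIVE_LOG_DIR="${HIVE_LOG_DIR:-/data/bigdata/hive/logs}"   # hypothetical location

start_hive_services() {
    mkdir -p "$HIVE_LOG_DIR" || return 1
    for svc in metastore hiveserver2; do
        # Run each service in the background and keep its console output in a file.
        nohup "$HIVE_BIN" --service "$svc" > "$HIVE_LOG_DIR/$svc.out" 2>&1 &
        echo "started $svc (pid $!)"
    done
}

# Usage:
#   start_hive_services
```

Both services show up as RunJar processes in jps, like the hive command line client.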

Apache Kylin can also be deployed as a cluster, but a cluster deployment does not speed up the computation, because the computation is done by the MapReduce engine and does not depend on Kylin itself. A cluster mainly provides load balancing for queries. A single node is used this time.

2. Extract and create environment variables

    [root@node2 ~]# tar zxvf /data/tools/apache-kylin-2.3.1-hbase1x-bin.tar.gz -C /data/bigdata/src/
    [root@node2 ~]# ln -s /data/bigdata/src/apache-kylin-2.3.1-bin/ /data/bigdata/kylin
    # Add the environment variables
    [root@node2 ~]# echo -e "\n# kylin\nexport KYLIN_HOME=/data/bigdata/kylin\nexport PATH=\$KYLIN_HOME/bin:\$PATH" >> /etc/profile.d/bigdata_path.sh
    [root@node2 ~]# cat /etc/profile.d/bigdata_path.sh
    # zookeeper
    export ZOOKEEPER_HOME=/data/bigdata/zookeeper
    export PATH=$ZOOKEEPER_HOME/bin:$PATH
    # hadoop
    export HADOOP_HOME=/data/bigdata/hadoop
    export PATH=$HADOOP_HOME/bin:$HADOOP_HOME/sbin:$PATH
    # hbase
    export HBASE_HOME=/data/bigdata/hbase
    export PATH=$HBASE_HOME/bin:$PATH
    # scala
    export scala_HOME=/data/bigdata/scala
    export PATH=$scala_HOME/bin:$PATH
    # spark
    export SPARK_HOME=/data/bigdata/spark
    export PATH=$SPARK_HOME/bin:$PATH
    # kafka
    export KAFKA_HOME=/data/bigdata/kafka
    export PATH=$KAFKA_HOME/bin:$PATH
    # kylin
    export KYLIN_HOME=/data/bigdata/kylin
    export PATH=$KYLIN_HOME/bin:$PATH
    # Apply the changes
    [root@node2 ~]# source /etc/profile

3. Copy Hive's jars to Kylin

Copy all jar packages from the lib directory of the Hive installation to the lib directory of the Kylin installation.

    [root@node2 ~]# cp -a /data/bigdata/hive/lib/* /data/bigdata/kylin/lib/

4. Configure the Hive database for Kylin:

    [root@node2 ~]# cd /data/bigdata/kylin/conf
    [root@node2 conf]# vim kylin.properties
    # Kylin cluster setting: change the host name or IP and the port
    kylin.server.cluster-servers=node2:7070
    # Change the version and path of the jar package
    kylin.job.jar=$KYLIN_HOME/lib/kylin-job-2.3.1.jar
    # Change the version and path of the jar package
    kylin.coprocessor.local.jar=$KYLIN_HOME/lib/kylin-coprocessor-2.3.1.jar
    # List of web servers in use, this enables one web server instance to sync up with other servers
    kylin.rest.servers=node2:7070
    ## Configure the Hive database used by Kylin; here the Hive schema is set to the current user
    kylin.job.hive.database.for.intermediatetable=root

5. If HTTPS is not used, disable SSL

    [root@node2 ~]# cd /data/bigdata/kylin/tomcat/conf
    [root@node2 conf]# cp -a server.xml{,_$(date +%F)}
    [root@node2 conf]# vim server.xml
    # Line 85, before:
    maxThreads="150" SSLEnabled="true" scheme="https" secure="true"
    # Change to:
    maxThreads="150" SSLEnabled="false" scheme="https" secure="false"

If SSL is not disabled, the following error is reported:

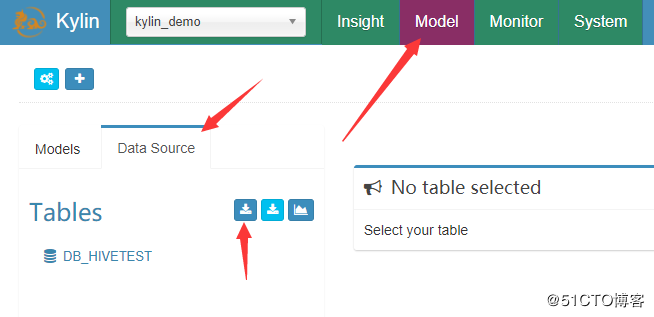

    SEVERE: Failed to load keystore type JKS with path conf/.keystore due to /data/bigdata/kylin/tomcat/conf/.keystore (No such file or directory)
    java.io.FileNotFoundException: /data/bigdata/kylin/tomcat/conf/.keystore (No such file or directory)
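The manual edit of server.xml in step 5 can also be scripted. The following sketch prints a copy of the file with SSLEnabled and secure switched to "false" on every line where they are set to "true"; disable_connector_ssl is a hypothetical helper, and the result should be reviewed before it replaces the real file.

```shell
#!/bin/bash
# Sketch only: disable_connector_ssl is a hypothetical helper.
# Prints the given file with SSLEnabled="true" and secure="true" changed to "false".
disable_connector_ssl() {
    sed -e 's/SSLEnabled="true"/SSLEnabled="false"/' \
        -e 's/secure="true"/secure="false"/' "$1"
}

# Usage (keeps a dated backup, as in step 5):
#   cd /data/bigdata/kylin/tomcat/conf
#   cp -a server.xml "server.xml_$(date +%F)"
#   disable_connector_ssl "server.xml_$(date +%F)" > server.xml
```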