Prometheus

Introduction to Prometheus

Prometheus is an open source monitoring, alarm, and time series database project developed by SoundCloud, which collects indicators that need statistics by pulling interfaces periodically

The basic principle of Prometheus is to periodically capture the status of monitored components through the HTTP protocol. The advantage of this is that any component can access the monitoring system by providing an HTTP interface without any SDK or other integration process.This is ideal for virtualized environments such as VM or Docker.

Prometheus features

- Multidimensional data model (time series data consists of metric names and a set of key/value s)

- Flexible Query Language on Multidimensional (PromQl)

- No dependency on distributed storage, single primary node works

- Collecting time series data by pull based on HTTP

- Time Series Data Pushing via Intermediate Gateway

- Target servers can be achieved by discovering services or by static configuration

- Multiple visualizations and dashboard support

deploy

Prerequisite: K8S cluster (kube-dns or coredns) deployed

principle

Monitor the k8s cluster using node-exporter, prometheus, grafana

- The node-exporter component collects metrics monitoring data on the node and pushes it to prometheus

- prometheus is responsible for storing this data

- grafana presents these data graphically to users through web pages

Environmental introduction

| host name | ip | Host Configuration | Remarks |

|---|---|---|---|

| master01 | 192.168.213.181 | 4U4G | control plane |

| node01 | 192.168.213.192 | 2U2G | node |

| node02 | 192.168.213.192 | 2U2G | node |

Deploying the node-exporter component

Deploying node-exporter components using daemonset

[root@master01 ~]# cat node-exporter.yaml apiVersion: extensions/v1beta1 kind: DaemonSet metadata: name: node-exporter namespace: kube-system labels: k8s-app: node-exporter spec: template: metadata: labels: k8s-app: node-exporter spec: containers: - image: prom/node-exporter name: node-exporter ports: - containerPort: 9100 protocol: TCP name: http --- apiVersion: v1 kind: Service metadata: labels: k8s-app: node-exporter name: node-exporter namespace: kube-system spec: ports: - name: http port: 9100 nodePort: 31672 protocol: TCP type: NodePort selector: k8s-app: node-exporter

Deploying prometheus components

rbac file

[root@master01 ~]# cat rbac-setup.yaml apiVersion: rbac.authorization.k8s.io/v1 kind: ClusterRole metadata: name: prometheus rules: - apiGroups: [""] resources: - nodes - nodes/proxy - services - endpoints - pods verbs: ["get", "list", "watch"] - apiGroups: - extensions resources: - ingresses verbs: ["get", "list", "watch"] - nonResourceURLs: ["/metrics"] verbs: ["get"] --- apiVersion: v1 kind: ServiceAccount metadata: name: prometheus namespace: kube-system --- apiVersion: rbac.authorization.k8s.io/v1 kind: ClusterRoleBinding metadata: name: prometheus roleRef: apiGroup: rbac.authorization.k8s.io kind: ClusterRole name: prometheus subjects: - kind: ServiceAccount name: prometheus namespace: kube-system

Manage configuration files for prometheus components as configmap

[root@master01 ~]# cat configmap.yaml apiVersion: v1 kind: ConfigMap metadata: name: prometheus-config namespace: kube-system data: prometheus.yml: | global: scrape_interval: 15s evaluation_interval: 15s scrape_configs: - job_name: 'kubernetes-apiservers' kubernetes_sd_configs: - role: endpoints scheme: https tls_config: ca_file: /var/run/secrets/kubernetes.io/serviceaccount/ca.crt bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token relabel_configs: - source_labels: [__meta_kubernetes_namespace, __meta_kubernetes_service_name, __meta_kubernetes_endpoint_port_name] action: keep regex: default;kubernetes;https - job_name: 'kubernetes-nodes' kubernetes_sd_configs: - role: node scheme: https tls_config: ca_file: /var/run/secrets/kubernetes.io/serviceaccount/ca.crt bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token relabel_configs: - action: labelmap regex: __meta_kubernetes_node_label_(.+) - target_label: __address__ replacement: kubernetes.default.svc:443 - source_labels: [__meta_kubernetes_node_name] regex: (.+) target_label: __metrics_path__ replacement: /api/v1/nodes/${1}/proxy/metrics - job_name: 'kubernetes-cadvisor' kubernetes_sd_configs: - role: node scheme: https tls_config: ca_file: /var/run/secrets/kubernetes.io/serviceaccount/ca.crt bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token relabel_configs: - action: labelmap regex: __meta_kubernetes_node_label_(.+) - target_label: __address__ replacement: kubernetes.default.svc:443 - source_labels: [__meta_kubernetes_node_name] regex: (.+) target_label: __metrics_path__ replacement: /api/v1/nodes/${1}/proxy/metrics/cadvisor - job_name: 'kubernetes-service-endpoints' kubernetes_sd_configs: - role: endpoints relabel_configs: - source_labels: [__meta_kubernetes_service_annotation_prometheus_io_scrape] action: keep regex: true - source_labels: [__meta_kubernetes_service_annotation_prometheus_io_scheme] action: replace target_label: __scheme__ regex: (https?) - source_labels: [__meta_kubernetes_service_annotation_prometheus_io_path] action: replace target_label: __metrics_path__ regex: (.+) - source_labels: [__address__, __meta_kubernetes_service_annotation_prometheus_io_port] action: replace target_label: __address__ regex: ([^:]+)(?::\d+)?;(\d+) replacement: $1:$2 - action: labelmap regex: __meta_kubernetes_service_label_(.+) - source_labels: [__meta_kubernetes_namespace] action: replace target_label: kubernetes_namespace - source_labels: [__meta_kubernetes_service_name] action: replace target_label: kubernetes_name - job_name: 'kubernetes-services' kubernetes_sd_configs: - role: service metrics_path: /probe params: module: [http_2xx] relabel_configs: - source_labels: [__meta_kubernetes_service_annotation_prometheus_io_probe] action: keep regex: true - source_labels: [__address__] target_label: __param_target - target_label: __address__ replacement: blackbox-exporter.example.com:9115 - source_labels: [__param_target] target_label: instance - action: labelmap regex: __meta_kubernetes_service_label_(.+) - source_labels: [__meta_kubernetes_namespace] target_label: kubernetes_namespace - source_labels: [__meta_kubernetes_service_name] target_label: kubernetes_name - job_name: 'kubernetes-ingresses' kubernetes_sd_configs: - role: ingress relabel_configs: - source_labels: [__meta_kubernetes_ingress_annotation_prometheus_io_probe] action: keep regex: true - source_labels: [__meta_kubernetes_ingress_scheme,__address__,__meta_kubernetes_ingress_path] regex: (.+);(.+);(.+) replacement: ${1}://${2}${3} target_label: __param_target - target_label: __address__ replacement: blackbox-exporter.example.com:9115 - source_labels: [__param_target] target_label: instance - action: labelmap regex: __meta_kubernetes_ingress_label_(.+) - source_labels: [__meta_kubernetes_namespace] target_label: kubernetes_namespace - source_labels: [__meta_kubernetes_ingress_name] target_label: kubernetes_name - job_name: 'kubernetes-pods' kubernetes_sd_configs: - role: pod relabel_configs: - source_labels: [__meta_kubernetes_pod_annotation_prometheus_io_scrape] action: keep regex: true - source_labels: [__meta_kubernetes_pod_annotation_prometheus_io_path] action: replace target_label: __metrics_path__ regex: (.+) - source_labels: [__address__, __meta_kubernetes_pod_annotation_prometheus_io_port] action: replace regex: ([^:]+)(?::\d+)?;(\d+) replacement: $1:$2 target_label: __address__ - action: labelmap regex: __meta_kubernetes_pod_label_(.+) - source_labels: [__meta_kubernetes_namespace] action: replace target_label: kubernetes_namespace - source_labels: [__meta_kubernetes_pod_name] action: replace target_label: kubernetes_pod_name

Prometheus deployment file

[root@master01 ~]# cat prometheus.yaml apiVersion: apps/v1beta2 kind: Deployment metadata: labels: name: prometheus-deployment name: prometheus namespace: kube-system spec: replicas: 1 selector: matchLabels: app: prometheus template: metadata: labels: app: prometheus spec: containers: - image: prom/prometheus:v2.0.0 name: prometheus command: - "/bin/prometheus" args: - "--config.file=/etc/prometheus/prometheus.yml" - "--storage.tsdb.path=/prometheus" - "--storage.tsdb.retention=24h" ports: - containerPort: 9090 protocol: TCP volumeMounts: - mountPath: "/prometheus" name: data - mountPath: "/etc/prometheus" name: config-volume resources: requests: cpu: 100m memory: 100Mi limits: cpu: 500m memory: 2500Mi serviceAccountName: prometheus volumes: - name: data emptyDir: {} - name: config-volume configMap: name: prometheus-config --- kind: Service apiVersion: v1 metadata: labels: app: prometheus name: prometheus namespace: kube-system spec: type: NodePort ports: - port: 9090 targetPort: 9090 nodePort: 30003 selector: app: prometheus

Create corresponding object from yaml file

kubectl create -f node-exporter.yaml kubectl create -f rbac-setup.yaml kubectl create -f configmap.yaml kubectl create -f promethues.yaml

View related pod s and service s

Result Verification

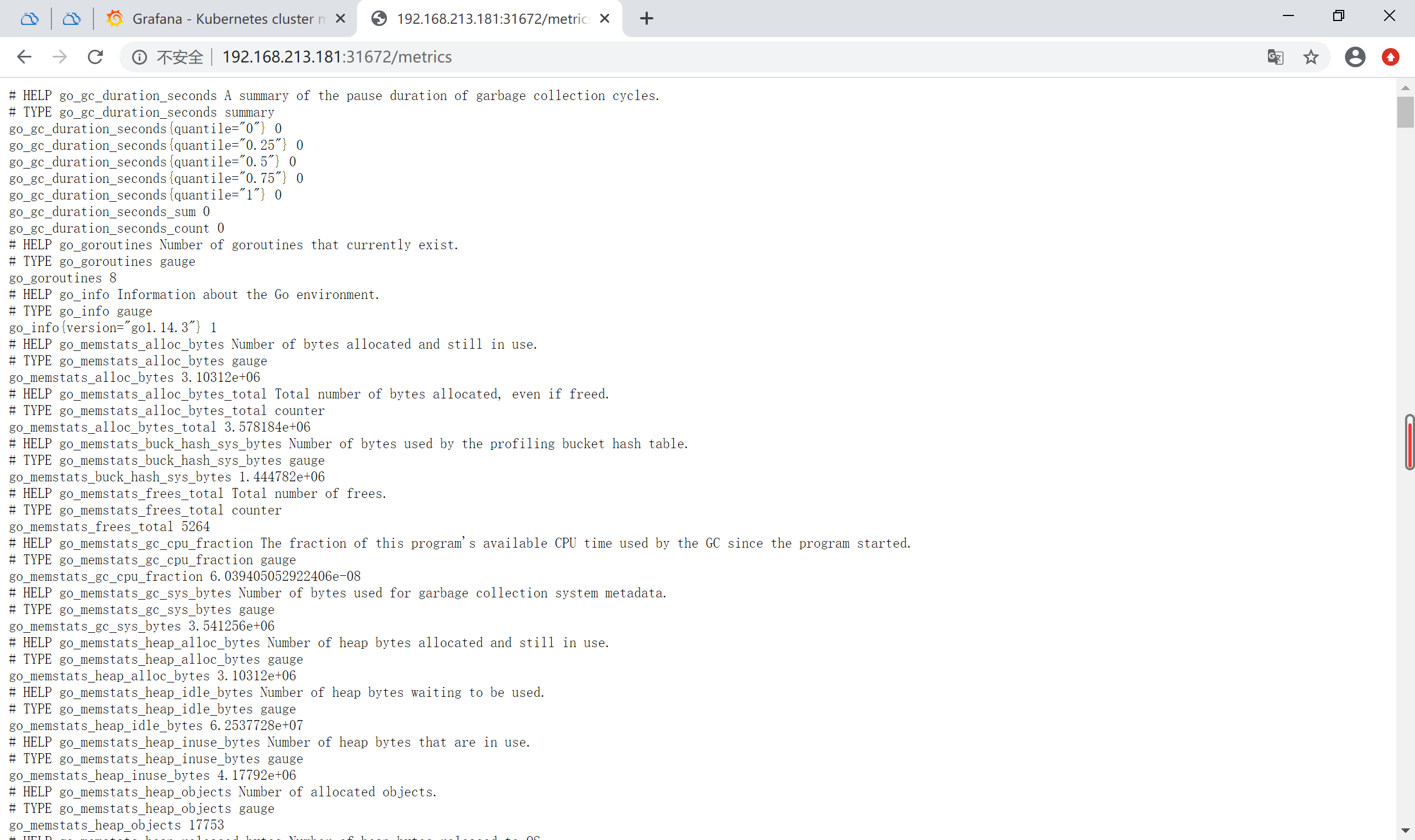

Node-exporter corresponds to a Noeport port of 31672, accessed byHttp://192.168.213.181: 31672/metrics can see the corresponding metrics

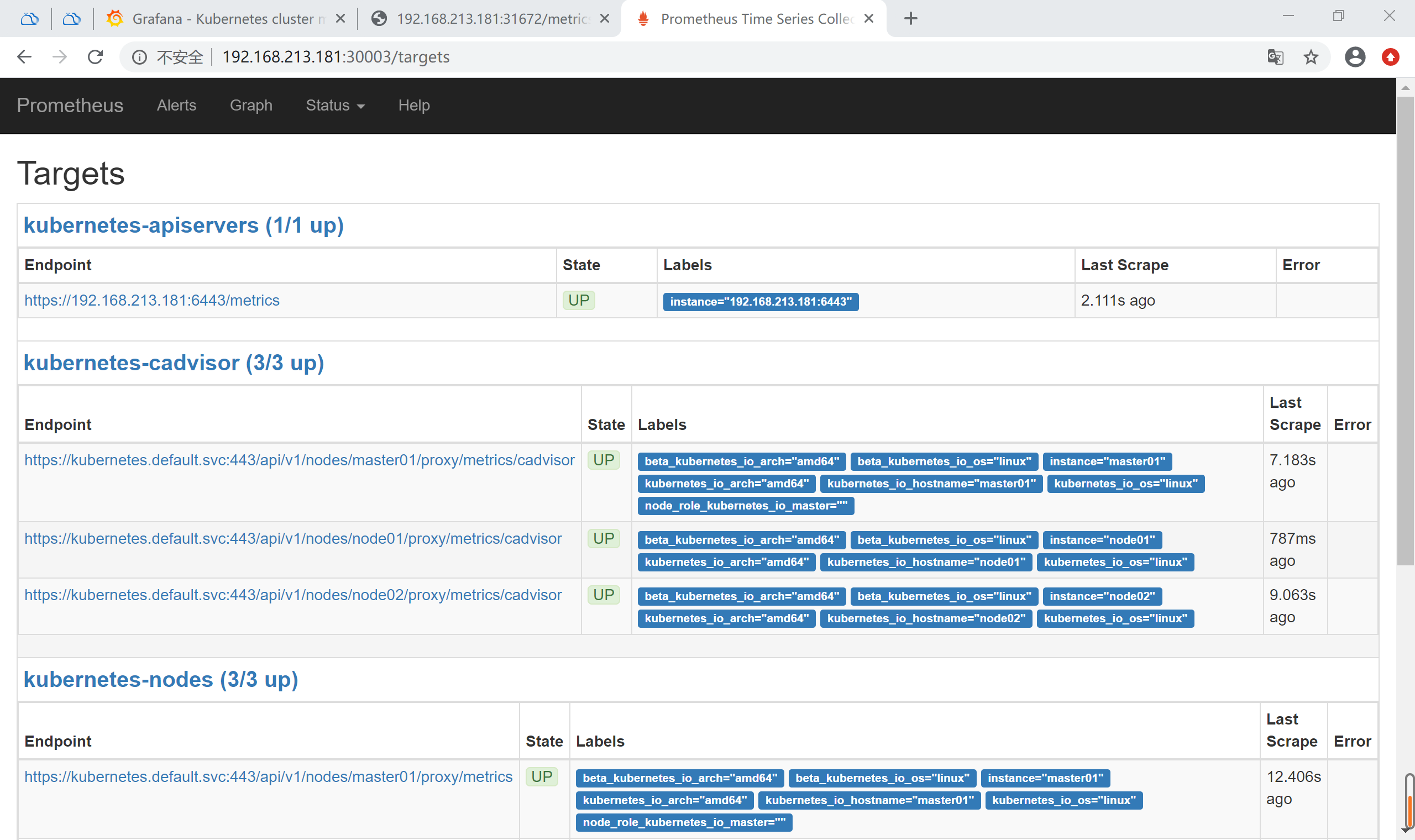

prometheus corresponds to a nodeport port of 30003, accessed byHttp://192.168.213.181: 30003/targets can see that prometheus has successfully connected to k8s apiserver The basic query provided on the WEB interface of prometheus can be used to query CPU usage for each POD in the K8S cluster using the following query criteria

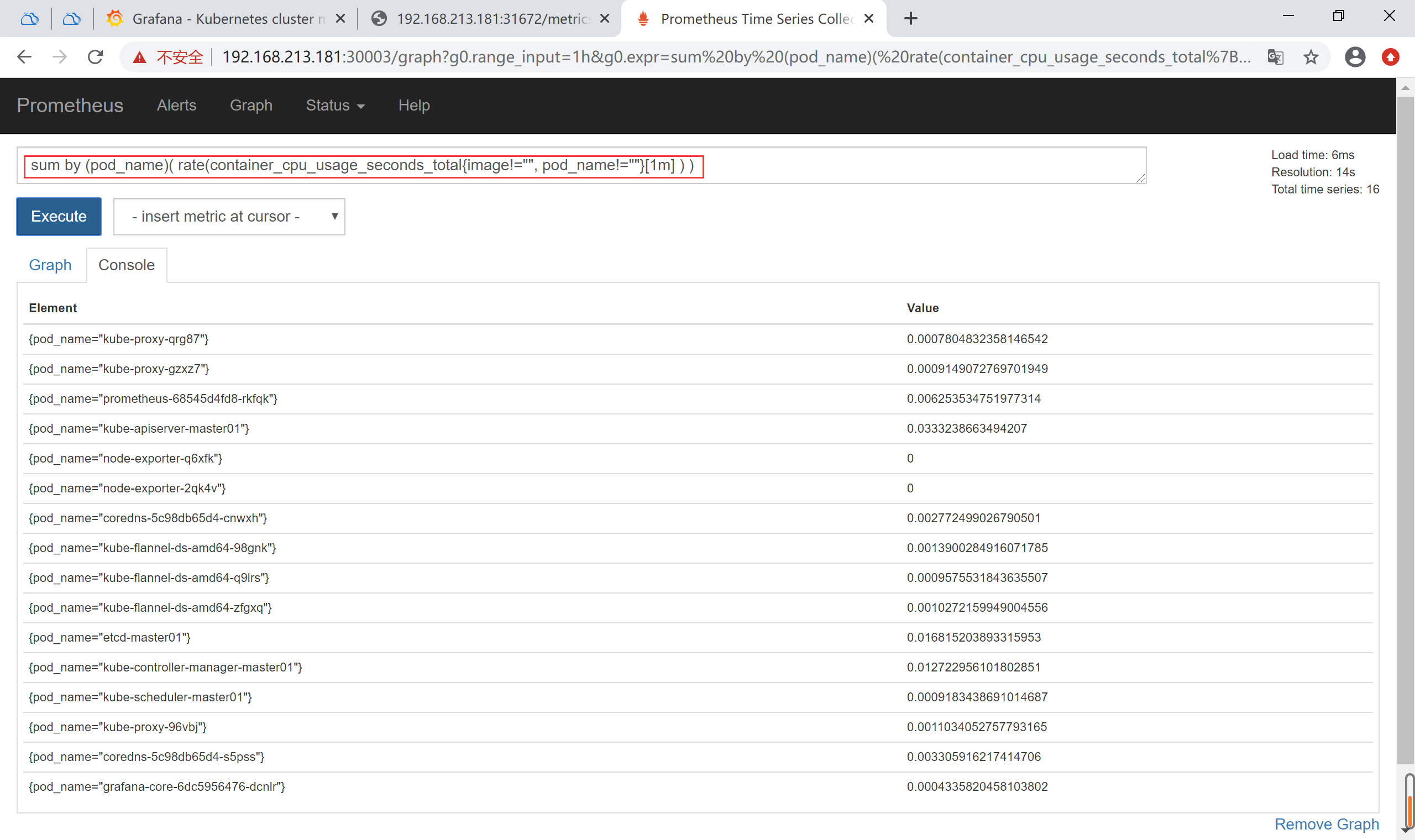

The basic query provided on the WEB interface of prometheus can be used to query CPU usage for each POD in the K8S cluster using the following query criteria

sum by (pod_name)( rate(container_cpu_usage_seconds_total{image!="", pod_name!=""}[1m] ) )

The above query has data indicating that it is normal for node-exporter to write data to prometheus

Deploying grafana components

Deploy grafana components for a more friendly WEBUI display of data

#grafana deployment configuration file [root@master01 ~]# cat grafana.yaml apiVersion: extensions/v1beta1 kind: Deployment metadata: name: grafana-core namespace: kube-system labels: app: grafana component: core spec: replicas: 1 template: metadata: labels: app: grafana component: core spec: containers: - image: grafana/grafana:5.0.0 name: grafana-core imagePullPolicy: IfNotPresent resources: limits: cpu: 100m memory: 100Mi requests: cpu: 100m memory: 100Mi env: - name: GF_AUTH_BASIC_ENABLED value: "true" - name: GF_AUTH_ANONYMOUS_ENABLED value: "false" readinessProbe: httpGet: path: /login port: 3000 volumeMounts: - name: grafana-persistent-storage mountPath: /var volumes: - name: grafana-persistent-storage emptyDir: {} --- apiVersion: v1 kind: Service metadata: name: grafana namespace: kube-system labels: app: grafana component: core spec: type: NodePort ports: - port: 3000 nodePort: 31000 selector: app: grafana [root@master01 ~]# kubectl create -f grafana.yaml

View grafana pod and service

[root@master01 ~]# kubectl get pod -n kube-system [root@master01 ~]# kubectl get svc -n kube-system

You can see that the grafana nodeport port is 3000, and you can access grafana using nodeip+nodeport Http://192.168.213.181: 31000 Configuration database source is prometheus, import panel (can be imported online by directly entering template number 315, or by downloading the corresponding json template file locally)

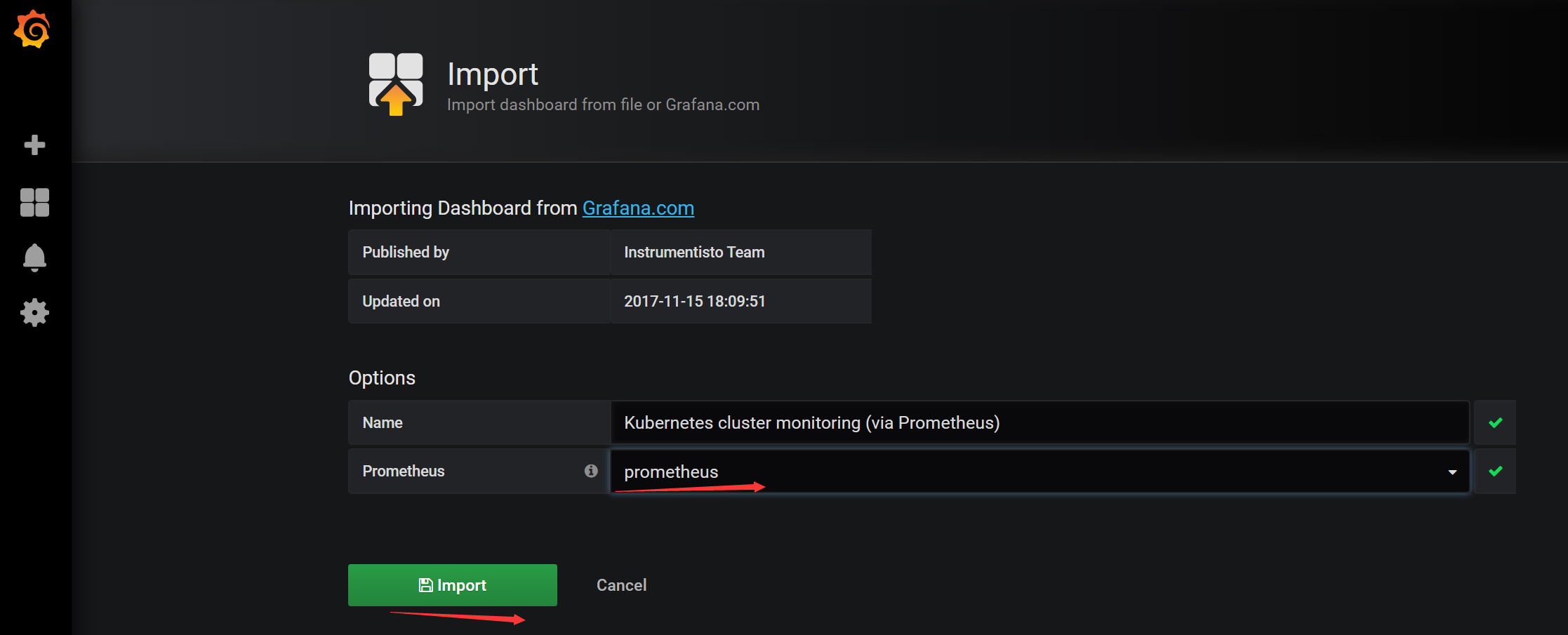

Configuration database source is prometheus, import panel (can be imported online by directly entering template number 315, or by downloading the corresponding json template file locally)

After loading the template, select the prometheus database instance

After loading the template, select the prometheus database instance

View the monitoring page