Background

My company is building a new product, and before any product work can start, the environment has to be set up. I have already written about most of the other components, so today's post covers installing Kafka.

Step 1: Download the installation package

Download the Kafka installation package. Mine is kafka_2.11-2.1.1. Note that newer releases differ from older ones: the command that starts the console consumer has changed, and its `--zookeeper` option has been replaced by `--bootstrap-server`. Check which version you are running.
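
As a rough guard against the flag change described above, a small helper can pick the connection flag from the Kafka version. This is only a sketch: the function name `consumer_connect_flag` is hypothetical, and the cutoff at major version 2 is my assumption about when `--zookeeper` was dropped from the console consumer. Run `kafka-console-consumer.sh --help` on your release to confirm.

```shell
# Hypothetical helper: print the flag the console consumer is ASSUMED to expect
# for a given Kafka version string such as "3.0.0" or "1.1.1".
consumer_connect_flag() {
  local version="$1"
  local major="${version%%.*}"   # text before the first dot, e.g. "3"
  if [ "$major" -ge 2 ]; then
    echo "--bootstrap-server"    # assumed for 2.x and later
  else
    echo "--zookeeper"           # assumed for 0.x and 1.x
  fi
}

consumer_connect_flag "3.0.0"    # prints --bootstrap-server
```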

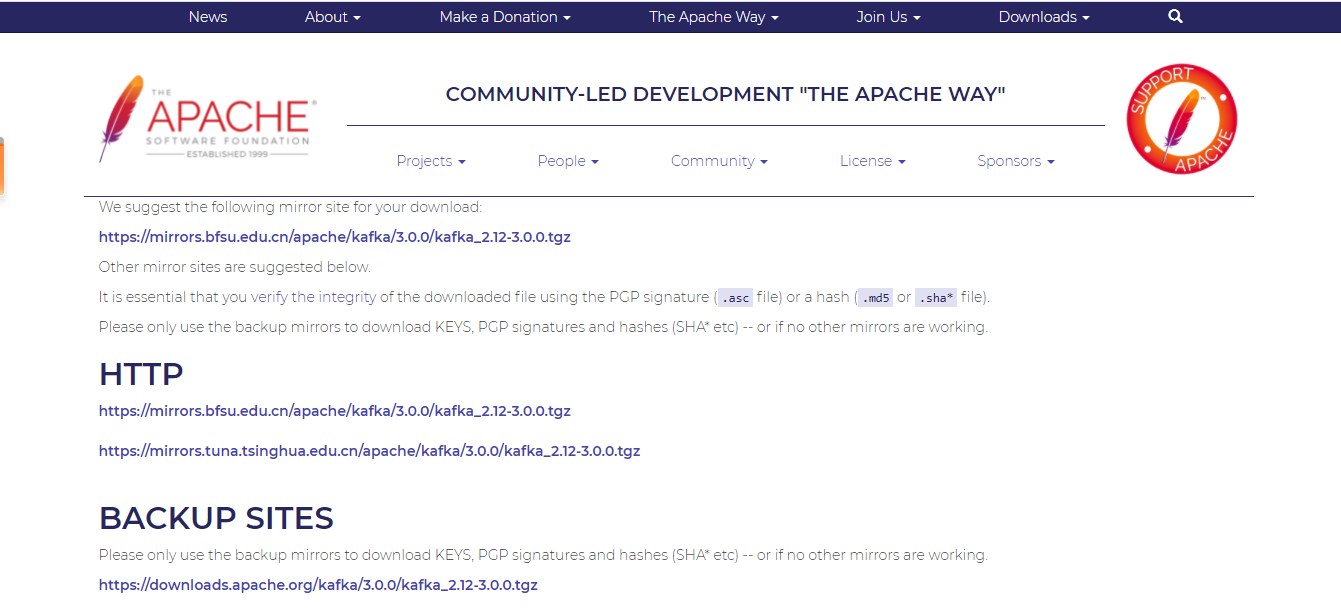

Installation package address: https://www.apache.org/dyn/closer.cgi?path=/kafka/3.0.0/kafka_2.12-3.0.0.tgz

On that page, pick a mirror to download from.

If you are not sure which one to choose, use one of the links below:

| Name | Download address |
|---|---|
| kafka_2.12-3.0.0.tgz | https://mirrors.bfsu.edu.cn/apache/kafka/3.0.0/kafka_2.12-3.0.0.tgz |
| kafka_2.13-3.0.0.tgz | https://dlcdn.apache.org/kafka/3.0.0/kafka_2.13-3.0.0.tgz |
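
The two archives in the table differ only in the Scala version they were built with: the file name follows the pattern `kafka_<scala version>-<kafka version>.tgz`. A minimal sketch of splitting a file name or URL into those two parts (the function name `parse_kafka_archive` is made up for illustration):

```shell
# Hypothetical helper: split "kafka_<scala>-<kafka>.tgz" into its two versions.
parse_kafka_archive() {
  local name="${1##*/}"        # drop any leading directory or URL path
  name="${name%.tgz}"          # drop the extension
  name="${name#kafka_}"        # drop the "kafka_" prefix
  local scala="${name%%-*}"    # text before the first dash
  local kafka="${name#*-}"     # text after the first dash
  echo "scala=${scala} kafka=${kafka}"
}

parse_kafka_archive "kafka_2.12-3.0.0.tgz"   # prints scala=2.12 kafka=3.0.0
```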

To make downloading easier, I also uploaded the package to my Baidu cloud drive. The share link is:

Link: https://pan.baidu.com/s/1GQmdqFtJbajoBm6FJ1igJw Extraction code: 35si

Then either download the package directly on the server or upload it there. I won't cover uploading. The download command is:

    # Download the package
    [root@localhost kanq]# wget https://mirrors.bfsu.edu.cn/apache/kafka/3.0.0/kafka_2.12-3.0.0.tgz --no-check-certificate

    # The output looks like this:
    --2021-10-12 03:06:16--  https://mirrors.bfsu.edu.cn/apache/kafka/3.0.0/kafka_2.12-3.0.0.tgz
    Resolving mirrors.bfsu.edu.cn (mirrors.bfsu.edu.cn)... 39.155.141.16, 2001:da8:20f:4435:4adf:37ff:fe55:2840
    Connecting to mirrors.bfsu.edu.cn (mirrors.bfsu.edu.cn)|39.155.141.16|:443... connected.
    WARNING: cannot verify mirrors.bfsu.edu.cn's certificate, issued by '/C=US/O=Let's Encrypt/CN=R3':
      Issued certificate has expired.
    HTTP request sent, awaiting response... 200 OK
    Length: 86486610 (82M) [application/octet-stream]
    Saving to: 'kafka_2.12-3.0.0.tgz'

    100%[==========================================================================================================================>] 86,486,610  27.0MB/s   in 3.2s

    2021-10-12 03:06:19 (26.0 MB/s) - 'kafka_2.12-3.0.0.tgz' saved [86486610/86486610]
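
Because `--no-check-certificate` skips certificate verification, it is worth confirming that the file arrived intact. The sketch below compares the file size against the `Length` that wget reported (86486610 bytes in the output above). `check_size` is a hypothetical helper name, and a size match is only a basic sanity check, not a replacement for comparing against the checksum Apache publishes for the release.

```shell
# Hypothetical helper: succeed only if FILE is exactly EXPECTED bytes long.
check_size() {
  local file="$1" expected="$2"
  local actual
  actual=$(wc -c < "$file") || return 1   # fails if the file is missing
  actual=$((actual))                      # normalise: some wc builds pad with spaces
  if [ "$actual" -eq "$expected" ]; then
    echo "OK: $file is $actual bytes"
  else
    echo "MISMATCH: $file is $actual bytes, expected $expected" >&2
    return 1
  fi
}

# Usage, once the download has finished:
#   check_size kafka_2.12-3.0.0.tgz 86486610
```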

Step 2: Installation

The installation is actually very simple: extract the package and start the services with a couple of commands.

Put the file on the server and extract it:

    [root@localhost kanq]# tar -zxvf kafka_2.12-3.0.0.tgz
    [root@localhost kanq]# ll
    drwxr-xr-x 7 root root       98 Sep  8 14:24 kafka_2.12-3.0.0
    -rw-r--r-- 1 root root 86486610 Sep 20 01:46 kafka_2.12-3.0.0.tgz

After extracting, enter the folder. Its contents look like this:

    [root@localhost kafka_2.12-3.0.0]# ll
    total 68
    drwxr-xr-x 3 root root  4096 Sep  8 14:24 bin
    drwxr-xr-x 3 root root  4096 Sep  8 14:24 config
    drwxr-xr-x 2 root root  8192 Oct 12 03:08 libs
    -rw-r--r-- 1 root root 14521 Sep  8 14:21 LICENSE
    drwxr-xr-x 2 root root  4096 Sep  8 14:24 licenses
    -rw-r--r-- 1 root root 28184 Sep  8 14:21 NOTICE
    drwxr-xr-x 2 root root    43 Sep  8 14:24 site-docs

Folder description:

| Folder | Description |
|---|---|
| bin | Startup scripts |
| config | Configuration files |
| libs | Library files |
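
Before starting anything, you can confirm that the extracted folder contains the three directories from the table. This is a sketch under the assumption that `bin`, `config` and `libs` are all you need to check; `check_kafka_layout` is a hypothetical name.

```shell
# Hypothetical helper: verify that KAFKA_HOME contains bin, config and libs.
check_kafka_layout() {
  local home="$1" dir missing=0
  for dir in bin config libs; do
    if [ ! -d "$home/$dir" ]; then
      echo "missing directory: $home/$dir" >&2
      missing=1
    fi
  done
  return "$missing"   # 0 when all three exist, 1 otherwise
}

# Usage:
#   check_kafka_layout ./kafka_2.12-3.0.0 && echo "layout looks fine"
```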

Now that we know the layout, let's start ZooKeeper and Kafka. Note: start ZooKeeper first, then Kafka. The commands are:

    # Start ZooKeeper
    [root@localhost kafka_2.12-3.0.0]# ./bin/zookeeper-server-start.sh ./config/zookeeper.properties

    # Start Kafka
    [root@localhost kafka_2.12-3.0.0]# ./bin/kafka-server-start.sh ./config/server.properties
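
The start order described above can also be scripted. The sketch below starts both services in the background with the scripts' `-daemon` option and waits for each port before moving on. It makes several assumptions: bash is available (the `/dev/tcp` check is a bash feature), ZooKeeper listens on 2181 (the `clientPort` in the stock `zookeeper.properties`), Kafka listens on 9092 as in the commands in this post, and the names `wait_for_port` and `start_stack` are hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helper: poll HOST:PORT once per second until it accepts a TCP
# connection or TIMEOUT seconds have passed.
wait_for_port() {
  local host="$1" port="$2" timeout="${3:-30}" waited=0
  while [ "$waited" -lt "$timeout" ]; do
    # bash opens a TCP connection when redirecting to /dev/tcp/host/port
    if (exec 3<>"/dev/tcp/${host}/${port}") 2>/dev/null; then
      return 0
    fi
    sleep 1
    waited=$((waited + 1))
  done
  return 1
}

# Hypothetical wrapper: ZooKeeper first, then Kafka, as in the post.
start_stack() {
  local kafka_home="$1"
  "$kafka_home/bin/zookeeper-server-start.sh" -daemon "$kafka_home/config/zookeeper.properties"
  wait_for_port localhost 2181 30 || { echo "ZooKeeper did not come up" >&2; return 1; }
  "$kafka_home/bin/kafka-server-start.sh" -daemon "$kafka_home/config/server.properties"
  wait_for_port localhost 9092 60 || { echo "Kafka did not come up" >&2; return 1; }
  echo "ZooKeeper and Kafka are listening"
}

# Usage:
#   start_stack /path/to/kafka_2.12-3.0.0
```

An open port only shows that the process is listening, so check the logs as well if something misbehaves later.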

If neither command reports an error, both services have started. Next, try the producer and consumer commands:

    # Producer: type a message at the ">" prompt
    [root@localhost kafka_2.12-3.0.0]# bin/kafka-console-producer.sh --broker-list localhost:9092 --topic iceter
    >hello ,I`m producer!

    # Open a new window and start the consumer; it displays the message sent above
    [root@localhost kafka_2.12-3.0.0]# ./bin/kafka-console-consumer.sh --bootstrap-server localhost:9092 --topic iceter --from-beginning
    hello ,I`m producer!
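
The same round trip can be run without typing into two windows. The sketch below pipes one message into the console producer and then reads one message back. It assumes a release where the producer accepts `--bootstrap-server` (the 3.0.0 package used here should), and it uses the consumer's `--max-messages` and `--timeout-ms` options so the command exits on its own. The function name `smoke_test` and the message text are made up for illustration.

```shell
# Hypothetical smoke test: send one message, then read one message back.
# Defaults match the topic and broker address used in this post.
smoke_test() {
  local kafka_home="${1:-.}" topic="${2:-iceter}" broker="${3:-localhost:9092}"
  local message="smoke-test-message"

  # The console producer reads messages from stdin, one per line.
  echo "$message" | "$kafka_home/bin/kafka-console-producer.sh" \
    --bootstrap-server "$broker" --topic "$topic" || return 1

  # Read from the start of the topic; stop after one message or 10 seconds.
  "$kafka_home/bin/kafka-console-consumer.sh" \
    --bootstrap-server "$broker" --topic "$topic" \
    --from-beginning --max-messages 1 --timeout-ms 10000
}

# Usage, from inside the Kafka folder with both services running:
#   smoke_test .
```

Because the consumer reads from the beginning, on a topic that already holds data it prints the oldest message rather than the one just sent. Pass a fresh topic name as the second argument if you want to see exactly the test message.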

I decided to leave the configuration files until last, because Kafka starts successfully without changing any of them (and once it was running, I was too lazy to write about them, hahaha).

Let's look at the configuration files. The config directory contains:

    [root@localhost config]# ll
    total 72
    -rw-r--r-- 1 root root  906 Sep  8 14:21 connect-console-sink.properties
    -rw-r--r-- 1 root root  909 Sep  8 14:21 connect-console-source.properties
    -rw-r--r-- 1 root root 5475 Sep  8 14:21 connect-distributed.properties
    -rw-r--r-- 1 root root  883 Sep  8 14:21 connect-file-sink.properties
    -rw-r--r-- 1 root root  881 Sep  8 14:21 connect-file-source.properties
    -rw-r--r-- 1 root root 2103 Sep  8 14:21 connect-log4j.properties
    -rw-r--r-- 1 root root 2540 Sep  8 14:21 connect-mirror-maker.properties
    -rw-r--r-- 1 root root 2262 Sep  8 14:21 connect-standalone.properties
    -rw-r--r-- 1 root root 1221 Sep  8 14:21 consumer.properties
    drwxr-xr-x 2 root root   98 Sep  8 14:21 kraft
    -rw-r--r-- 1 root root 4674 Sep  8 14:21 log4j.properties
    -rw-r--r-- 1 root root 1925 Sep  8 14:21 producer.properties
    -rw-r--r-- 1 root root 6849 Sep  8 14:21 server.properties
    -rw-r--r-- 1 root root 1032 Sep  8 14:21 tools-log4j.properties
    -rw-r--r-- 1 root root 1169 Sep  8 14:21 trogdor.conf
    -rw-r--r-- 1 root root 1205 Sep  8 14:21 zookeeper.properties

The files to pay attention to are:

zookeeper.properties (ZooKeeper configuration)

server.properties (Kafka broker configuration)

producer.properties (producer configuration)

consumer.properties (consumer configuration)

After looking through the configuration files, there is not much more to say for now. Just remember which file belongs to which component. When you need to change a setting later, find the corresponding file and edit it.
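
When you do go looking for a setting, a small helper saves scrolling through the comments in these files. The sketch below prints the value of one key from a `.properties` file. It assumes simple `key=value` lines with no spaces around the key, and `get_prop` is a hypothetical name.

```shell
# Hypothetical helper: print the value of KEY from a .properties FILE.
# Comment lines are skipped; if the key appears twice, the last value wins.
get_prop() {
  local file="$1" key="$2"
  grep -v '^[[:space:]]*#' "$file" \
    | awk -F= -v k="$key" '
        $1 == k { sub(/^[^=]*=/, ""); v = $0; found = 1 }
        END { if (found) print v; else exit 1 }'
}

# Usage, from inside the Kafka folder:
#   get_prop config/server.properties log.dirs
#   get_prop config/zookeeper.properties clientPort
```

The function returns a non-zero status when the key is not set, so it can be used in an `if` to fall back to a default.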

Gossip: I'll only accompany you on the journey of thousands of miles. Since then, I won't care about the wind, snow and sunshine.