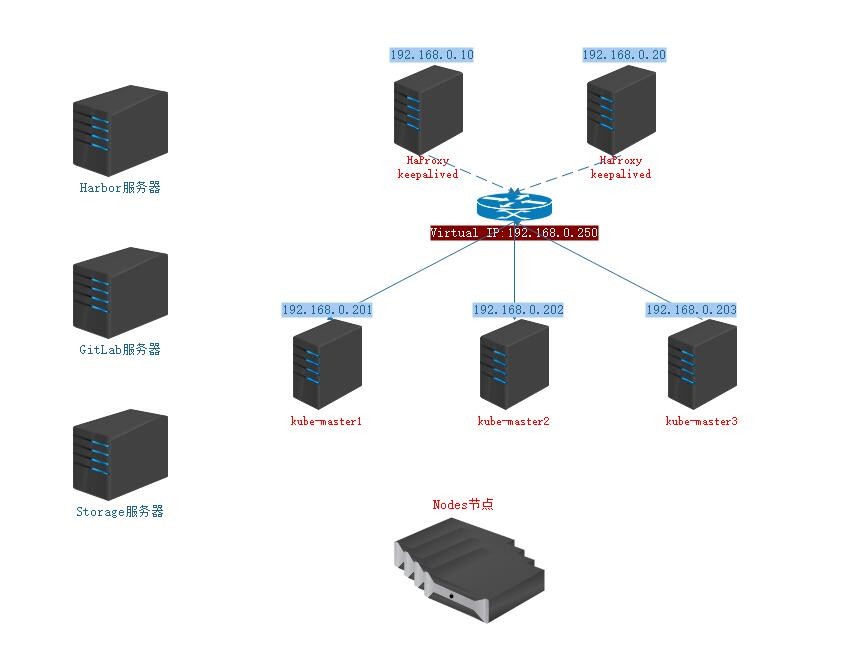

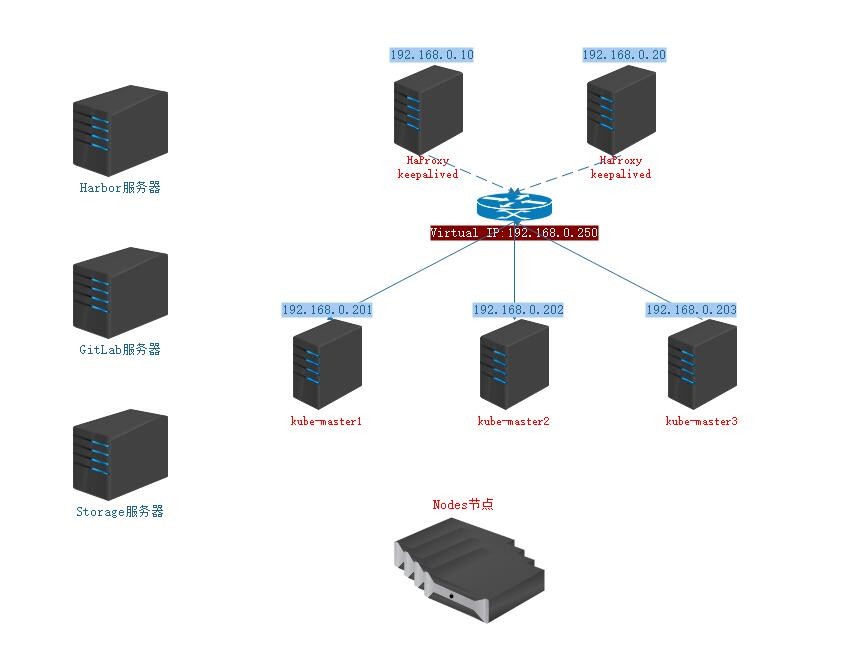

1. Architecture and environment design

1.1. Architecture design

- Deploy HAProxy as the access entry point (endpoint) for the Kubernetes API servers.
- Expose that endpoint on a virtual IP managed by Keepalived, deployed on multiple nodes for redundancy.
- Deploy a highly available Kubernetes cluster with kubeadm, using the Keepalived virtual IP as the control-plane endpoint.
- Use Prometheus for cluster monitoring, Grafana for dashboards, and Alertmanager for alerting.
- Build the CI/CD system with Jenkins + GitLab + Harbor.
- Use a dedicated domain name for communication inside the Kubernetes cluster, with a DNS service on the intranet for name resolution.

1.2. Environment design

| Host name | IP | Role |
|---|---|---|
| kube-master-01.sk8s.io | 192.168.0.201 | K8s master, HAProxy + Keepalived (virtual IP: 192.168.0.254) |
| kube-master-02.sk8s.io | 192.168.0.202 | K8s master, HAProxy + Keepalived (virtual IP: 192.168.0.254) |
| kube-master-03.sk8s.io | 192.168.0.203 | K8s master, DNS, storage, GitLab, Harbor |
| kube-node-01.sk8s.io | 192.168.0.204 | Node |
| kube-node-02.sk8s.io | 192.168.0.205 | Node |

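2. Operating system initialization

2.1. Disable SELinux

```shell
# Switch to permissive mode immediately (no reboot needed)
[root@localhost ~]# setenforce 0
# Disable SELinux permanently. On CentOS 7, /etc/sysconfig/selinux is a symlink to
# /etc/selinux/config, and a plain `sed -i` on the symlink would replace it with a
# regular file, so edit the real file only
[root@localhost ~]# sed -i 's#^SELINUX=.*#SELINUX=disabled#' /etc/selinux/config
```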
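To see exactly what the substitution changes before touching the real file, the same `sed` expression can be run on a scratch copy. A minimal sketch, assuming GNU sed; the sample content is an abridged imitation of the stock file, not the real one. It shows that `^SELINUX=.*` needs `=` right after `SELINUX`, so the `SELINUXTYPE=` line survives:

```shell
#!/bin/sh
# Dry run of the SELINUX= substitution on a scratch file (sample content is illustrative)
set -eu

scratch="$(mktemp)"
cat > "$scratch" <<'EOF'
# SELINUX= can take one of these three values:
SELINUX=enforcing
SELINUXTYPE=targeted
EOF

# Same expression as above: only the line starting with "SELINUX=" is rewritten
sed -i 's#^SELINUX=.*#SELINUX=disabled#' "$scratch"
cat "$scratch"
```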

2.2. Disable unneeded services

```shell
# Takes effect at the next boot (the reboot at the end of 2.4)
[root@localhost ~]# systemctl disable firewalld postfix auditd kdump NetworkManager
```

2.3. Upgrade the system kernel

```shell
[root@localhost ~]# rpm --import https://www.elrepo.org/RPM-GPG-KEY-elrepo.org
[root@localhost ~]# rpm -Uvh http://www.elrepo.org/elrepo-release-7.0-3.el7.elrepo.noarch.rpm
[root@localhost ~]# yum -y --disablerepo=\* --enablerepo=elrepo-kernel install kernel-lt.x86_64 kernel-lt-devel.x86_64 kernel-lt-headers.x86_64
[root@localhost ~]# yum -y remove kernel-tools-libs.x86_64 kernel-tools.x86_64
[root@localhost ~]# yum -y --disablerepo=\* --enablerepo=elrepo-kernel install kernel-lt-tools.x86_64
# GRUB_DEFAULT=0 boots the first menu entry; grub2-mkconfig lists the newest kernel first
[root@localhost ~]# cat <<EOF > /etc/default/grub
GRUB_TIMEOUT=5
GRUB_DISTRIBUTOR="$(sed 's, release .*$,,g' /etc/system-release)"
GRUB_DEFAULT=0
GRUB_DISABLE_SUBMENU=true
GRUB_CMDLINE_LINUX="crashkernel=auto console=ttyS0 console=tty0 panic=5"
GRUB_DISABLE_RECOVERY="true"
GRUB_TERMINAL="serial console"
GRUB_TERMINAL_OUTPUT="serial console"
GRUB_SERIAL_COMMAND="serial --speed=9600 --unit=0 --word=8 --parity=no --stop=1"
EOF
[root@localhost ~]# grub2-mkconfig -o /boot/grub2/grub.cfg
```
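Before rebooting, it is worth checking that entry 0 really is the new elrepo kernel. A minimal sketch: the `list_grub_entries` helper is hypothetical, and it assumes the flat menu produced by `GRUB_DISABLE_SUBMENU=true`, where the index printed matches what `GRUB_DEFAULT=<n>` selects:

```shell
#!/bin/sh
# Hypothetical helper: print "index : title" for each top-level menuentry in a grub.cfg
list_grub_entries() {
  cfg="${1:-/boot/grub2/grub.cfg}"
  # Titles are single-quoted, so split on the quote: $1 is "menuentry ", $2 is the title
  awk -F"'" '$1 == "menuentry " { print i++ " : " $2 }' "$cfg"
}
```

On the host, `list_grub_entries | head -n1` should name the `elrepo` kernel; if it does not, set `GRUB_DEFAULT` to the index that does and run `grub2-mkconfig` again.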

2.4. Unify network interface names

```shell
# net.ifnames=0 restores the traditional ethN names; ipv6.disable=1 also turns IPv6 off.
# This replaces the GRUB_CMDLINE_LINUX line written in 2.3 (adjust rd.lvm.lv to your own volume names)
[root@localhost ~]# grub_config='GRUB_CMDLINE_LINUX="crashkernel=auto ipv6.disable=1 net.ifnames=0 rd.lvm.lv=centos/root rd.lvm.lv=centos/swap rhgb quiet"'
[root@localhost ~]# sed -i "s#GRUB_CMDLINE_LINUX.*#${grub_config}#" /etc/default/grub
[root@localhost ~]# grub2-mkconfig -o /boot/grub2/grub.cfg
# Pin each name to a NIC: ATTR{address} is the MAC address of the card, NAME is the new interface name
[root@localhost ~]# cat /etc/udev/rules.d/70-persistent-net.rules
SUBSYSTEM=="net", ACTION=="add", DRIVERS=="?*", ATTR{address}=="ec:xx:yy:cc:b6:xx", NAME="eth0"
SUBSYSTEM=="net", ACTION=="add", DRIVERS=="?*", ATTR{address}=="ec:xx:yy:cc:b6:xx", NAME="eth1"
# Before rebooting, rename the interface configuration files in /etc/sysconfig/network-scripts/
# (e.g. to ifcfg-eth0) and update the NAME/DEVICE entries inside them to match
[root@localhost ~]# reboot
```
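The udev rules follow a fixed pattern, so they can be generated from "MAC NAME" pairs instead of edited by hand. A minimal sketch; the `gen_net_rules` helper name is hypothetical:

```shell
#!/bin/sh
# Hypothetical helper: read "MAC NAME" pairs on stdin and print one
# persistent-net udev rule per pair, in the format of 70-persistent-net.rules
gen_net_rules() {
  local mac name
  while read -r mac name; do
    [ -n "$mac" ] || continue                     # skip blank lines
    # sysfs reports MAC addresses in lowercase and the match is case-sensitive
    mac="$(printf '%s' "$mac" | tr 'A-F' 'a-f')"
    printf 'SUBSYSTEM=="net", ACTION=="add", DRIVERS=="?*", ATTR{address}=="%s", NAME="%s"\n' \
      "$mac" "$name"
  done
}
```

For example, `printf '%s\n' '<mac-of-nic-1> eth0' '<mac-of-nic-2> eth1' | gen_net_rules > /etc/udev/rules.d/70-persistent-net.rules`, with the real MAC addresses taken from `ip link`.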

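2.5. Other configurations

```shell
[root@localhost ~]# yum -y install vim net-tools lrzsz lbzip2 bzip2 ntpdate curl wget psmisc
[root@localhost ~]# timedatectl set-timezone Asia/Shanghai
[root@localhost ~]# echo "nameserver 223.5.5.5" > /etc/resolv.conf
[root@localhost ~]# echo "nameserver 114.114.114.114" >> /etc/resolv.conf
[root@localhost ~]# echo 'LANG="en_US.UTF-8"' > /etc/locale.conf
[root@localhost ~]# echo 'export LANG="en_US.UTF-8"' >> /etc/profile.d/custom.sh
[root@localhost ~]# cat >> /etc/security/limits.conf <<EOF
* soft nproc 65530
* hard nproc 65530
* soft nofile 65530
* hard nofile 65530
EOF
# The kubelet refuses to run with swap enabled (kubeadm's preflight check fails too),
# so turn swap off now and comment it out in /etc/fstab
[root@localhost ~]# swapoff -a
[root@localhost ~]# sed -i '/^[^#].*\sswap\s/s/^/#/' /etc/fstab
# The net.bridge.* keys only exist once br_netfilter is loaded; load it now and at boot
[root@localhost ~]# modprobe br_netfilter
[root@localhost ~]# echo br_netfilter > /etc/modules-load.d/br_netfilter.conf
[root@localhost ~]# cat > /etc/sysctl.d/k8s.conf << EOF
net.bridge.bridge-nf-call-iptables=1
net.bridge.bridge-nf-call-ip6tables=1
net.ipv4.ip_forward=1
# net.ipv4.tcp_tw_recycle was removed in kernel 4.12; drop this line on newer kernels
net.ipv4.tcp_tw_recycle=0
vm.swappiness=0
vm.overcommit_memory=1
vm.panic_on_oom=0
fs.inotify.max_user_instances=8192
fs.inotify.max_user_watches=1048576
fs.file-max=52706963
fs.nr_open=52706963
net.ipv4.tcp_sack = 0
EOF
[root@localhost ~]# sysctl -p /etc/sysctl.d/k8s.conf
```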
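`sysctl -p` stops reporting once the values are written, so after a reboot it helps to confirm they are still in effect. Each key maps to a file under `/proc/sys` with dots replaced by slashes (`net.ipv4.ip_forward` is `/proc/sys/net/ipv4/ip_forward`). A minimal sketch built on that mapping; the `verify_sysctl_file` helper is hypothetical, and its optional second argument (another root directory) exists only for dry runs:

```shell
#!/bin/sh
# Hypothetical helper: compare each "key = value" line of a sysctl.d file with the
# live value under /proc/sys (or under another directory, for a dry run).
# Prints OK / DIFF / MISSING per key and returns 1 if anything does not match.
verify_sysctl_file() {
  local file="$1" root="${2:-/proc/sys}" rc=0 pairs key want have path
  # Skip blank lines, comments (# or ;) and lines without "="; print "key value"
  pairs="$(awk '/^[ \t]*[#;]/ || /^[ \t]*$/ || index($0, "=") == 0 { next }
                { k = substr($0, 1, index($0, "=") - 1)
                  gsub(/[ \t]/, "", k)
                  print k, substr($0, index($0, "=") + 1) }' "$file")"
  while read -r key want; do
    [ -n "$key" ] || continue
    want="$(printf '%s' "$want" | tr -s ' \t' '  ')"        # collapse inner whitespace
    path="$root/$(printf '%s' "$key" | tr '.' '/')"         # net.ipv4.ip_forward -> net/ipv4/ip_forward
    if [ ! -r "$path" ]; then
      echo "MISSING $key"; rc=1; continue
    fi
    have="$(tr -s ' \t\n' '   ' < "$path")"; have="${have% }"  # e.g. "1\n" -> "1"
    if [ "$have" = "$want" ]; then
      echo "OK $key=$have"
    else
      echo "DIFF $key: want '$want', have '$have'"; rc=1
    fi
  done <<EOF
$pairs
EOF
  return "$rc"
}
```

On a node, `verify_sysctl_file /etc/sysctl.d/k8s.conf` should print only `OK` lines; a `MISSING net.bridge...` line usually means `br_netfilter` is not loaded.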

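2.6. Restrict SSH access with TCP wrappers (hosts.allow / hosts.deny)

```shell
# Allow sshd only from 192.168.0.* (the trailing dot makes it a prefix match); deny everyone else
[root@localhost ~]# echo "sshd:192.168.0." > /etc/hosts.allow
[root@localhost ~]# echo "sshd:ALL" > /etc/hosts.deny
```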

2.7. SSH configuration

```shell
# Create an administrator user and generate an SSH key pair. Download the private key
# (do not leave it on the server) and copy the public key to ~/.ssh/authorized_keys on the other servers
[root@localhost ~]# useradd huyuan
[root@localhost ~]# echo "sycx123" | passwd --stdin huyuan
[root@localhost ~]# su - huyuan
[huyuan@localhost ~]$ ssh-keygen -b 4096
[huyuan@localhost ~]$ mv ~/.ssh/id_rsa.pub ~/.ssh/authorized_keys
# Back to the root user
[huyuan@localhost ~]$ exit
# Disable reverse DNS lookups to speed up SSH logins
[root@localhost ~]# sed -i 's/^#UseDNS.*/UseDNS no/' /etc/ssh/sshd_config
# Disable password authentication
[root@localhost ~]# sed -i 's/^PasswordAuthentication.*/PasswordAuthentication no/' /etc/ssh/sshd_config
# Prohibit root login
[root@localhost ~]# sed -i 's/#PermitRootLogin.*/PermitRootLogin no/' /etc/ssh/sshd_config
# Only huyuan may log in; separate multiple users with spaces
[root@localhost ~]# echo "AllowUsers huyuan" >> /etc/ssh/sshd_config
# Check the syntax first, and keep the current session open until a key-based login as huyuan succeeds
[root@localhost ~]# sshd -t
[root@localhost ~]# systemctl restart sshd
```
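sshd_config(5) states that for each keyword the first obtained value is used, so a `sed` that rewrites a later line can silently lose to an earlier one. On a real host `sshd -T` prints the effective configuration; for a quick look at a file, a minimal sketch (the `effective_sshd_opt` helper is hypothetical, assumes the usual `Keyword value` form, and does not evaluate `Match` blocks or `Include` files):

```shell
#!/bin/sh
# Hypothetical helper: print the value sshd would take for KEYWORD from FILE,
# following the "first obtained value wins" rule. The scan stops at the first Match line.
effective_sshd_opt() {
  awk -v want="$2" '
    /^[ \t]*#/ || NF == 0 { next }              # skip comments and blank lines
    tolower($1) == "match" { exit }             # ignore everything from the first Match block on
    tolower($1) == tolower(want) {
      $1 = ""; sub(/^[ \t]+/, ""); print; exit  # print the arguments of the first hit
    }
  ' "$1"
}
```

For example, `effective_sshd_opt /etc/ssh/sshd_config PasswordAuthentication` should print `no` after the edits above.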

2.8. Set a unified root password

```shell
[root@localhost ~]# echo "xxxxx" | passwd --stdin root
```

2.9. Set the hostname

```shell
# Set each host's own name (kube-master-01.sk8s.io is shown); the /etc/hosts entries are the same on every host
[root@localhost ~]# hostnamectl set-hostname kube-master-01.sk8s.io
[root@localhost ~]# echo "192.168.0.201 kube-master-01.sk8s.io" >> /etc/hosts
[root@localhost ~]# echo "192.168.0.202 kube-master-02.sk8s.io" >> /etc/hosts
[root@localhost ~]# echo "192.168.0.203 kube-master-03.sk8s.io" >> /etc/hosts
[root@localhost ~]# echo "192.168.0.204 kube-node-01.sk8s.io" >> /etc/hosts
[root@localhost ~]# echo "192.168.0.205 kube-node-02.sk8s.io" >> /etc/hosts
```
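Appending with `>>` duplicates the entries whenever this step is re-run. A minimal idempotent variant, sketched with a hypothetical `add_host_entry` helper that appends a pair only if it is not already present:

```shell
#!/bin/sh
# Hypothetical helper: append "IP NAME" to a hosts file unless that exact pair
# is already present, so re-running the hostname step does not duplicate lines
add_host_entry() {
  local file="$1" ip="$2" name="$3"
  # Compare whole fields, so 192.168.0.20 does not match 192.168.0.201
  if ! awk -v ip="$ip" -v name="$name" \
      '$1 == ip && $2 == name { found = 1 } END { exit !found }' "$file" 2>/dev/null; then
    printf '%s %s\n' "$ip" "$name" >> "$file"
  fi
}
```

Calling it once per row of the table in 1.2 (for example `add_host_entry /etc/hosts 192.168.0.201 kube-master-01.sk8s.io`) gives the same result as the `echo` commands, and a second run adds nothing.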

3. Kubernetes cluster initialization

3.1. Install and configure Docker (all nodes)

```shell
[root@kube-master-01 ~]# yum install -y yum-utils device-mapper-persistent-data lvm2
[root@kube-master-01 ~]# yum-config-manager --add-repo http://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo
[root@kube-master-01 ~]# yum -y install docker-ce-18.09.6 docker-ce-cli-18.09.6
# "exec-opts" selects the systemd cgroup driver; "data-root" (which replaces the
# deprecated "graph" option) moves Docker's data directory to /opt/docker
[root@kube-master-01 ~]# mkdir -p /etc/docker
[root@kube-master-01 ~]# cat > /etc/docker/daemon.json << EOF
{
  "registry-mirrors": ["https://c7i79lkw.mirror.aliyuncs.com"],
  "insecure-registries": ["122.228.208.72:9000"],
  "exec-opts": ["native.cgroupdriver=systemd"],
  "log-driver": "json-file",
  "data-root": "/opt/docker",
  "log-opts": {
    "max-size": "100m"
  },
  "storage-driver": "overlay2"
}
EOF
[root@kube-master-01 ~]# systemctl enable docker
[root@kube-master-01 ~]# systemctl start docker
```
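The kubelet must run with the same cgroup driver as Docker, so it is worth confirming that `daemon.json` took effect. `docker info` reports it on a `Cgroup Driver:` line. A minimal sketch that extracts it (the helper name is hypothetical):

```shell
#!/bin/sh
# Hypothetical helper: read `docker info` output on stdin and print the cgroup driver
cgroup_driver_from_info() {
  awk -F': ' '/Cgroup Driver/ { print $2; exit }'
}
```

On each node, `docker info 2>/dev/null | cgroup_driver_from_info` should print `systemd`.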

3.2. Configure HAProxy as the API server proxy

```shell
# Install and configure on kube-master-01 and kube-master-02
[root@kube-master-01 ~]# yum -y install haproxy
[root@kube-master-01 ~]# cat > /etc/haproxy/haproxy.cfg << EOF
global
    log 127.0.0.1 local2 notice
    chroot /var/lib/haproxy
    stats socket /var/run/haproxy.sock mode 660 level admin
    stats timeout 30s
    user haproxy
    group haproxy
    daemon
    nbproc 1

defaults
    log global
    timeout connect 5000
    timeout client 10m
    timeout server 10m

# Statistics page on port 8000, path /status
listen admin_stats
    bind 0.0.0.0:8000
    mode http
    stats refresh 30s
    stats uri /status
    stats realm welcome login\ Haproxy
    stats auth admin:tuitui99
    stats hide-version
    stats admin if TRUE

# TCP pass-through to the three kube-apiservers; clients connect to VIP:8443
listen kube-apiserver
    bind 0.0.0.0:8443
    mode tcp
    option tcplog
    balance source
    server 192.168.0.201 192.168.0.201:6443 check inter 2000 fall 2 rise 2 weight 1
    server 192.168.0.202 192.168.0.202:6443 check inter 2000 fall 2 rise 2 weight 1
    server 192.168.0.203 192.168.0.203:6443 check inter 2000 fall 2 rise 2 weight 1
EOF
[root@kube-master-01 ~]# systemctl enable haproxy
[root@kube-master-01 ~]# systemctl start haproxy
```
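Each `server` line health-checks one kube-apiserver every 2000 ms, marks it down after 2 consecutive failures (`fall 2`) and up again after 2 successes (`rise 2`). When masters are added or removed, the backend lines can be generated rather than typed. A minimal sketch (the helper name and the `APISERVER_PORT` variable are hypothetical):

```shell
#!/bin/sh
# Hypothetical helper: emit one HAProxy "server" line per master IP, with the same
# health-check settings as the kube-apiserver section
gen_apiserver_backends() {
  local port="${APISERVER_PORT:-6443}" ip
  for ip in "$@"; do
    printf '    server %s %s:%s check inter 2000 fall 2 rise 2 weight 1\n' "$ip" "$ip" "$port"
  done
}
```

After editing the file, `haproxy -c -f /etc/haproxy/haproxy.cfg` checks the configuration before the service is restarted.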

3.3. Configure Keepalived for HAProxy master/backup failover

```shell
# Install and configure on kube-master-01 and kube-master-02
[root@kube-master-01 ~]# yum -y install keepalived
[root@kube-master-01 ~]# cp /etc/keepalived/keepalived.conf{,.bak}
[root@kube-master-01 ~]# cat > /etc/keepalived/keepalived.conf << EOF
global_defs {
    # Unique identifier of this node (use each node's own IP)
    router_id master-192.168.0.201
    # User that runs notify_master, notify_backup, notify_fault and other scripts
    script_user root
}

vrrp_script check-haproxy {
    # Check whether the haproxy process exists. Current keepalived versions run the
    # script without a shell, so redirections such as &>/dev/null must be left out
    script "/usr/bin/killall -0 haproxy"
    interval 5
    # When the check fails, lower this node's priority by 30
    weight -30
    user root
}

vrrp_instance k8s {
    # Use "state BACKUP" on kube-master-02
    state MASTER
    # Priority: 120 on the primary server, 100 on the backup server
    priority 120
    dont_track_primary
    interface eth0
    virtual_router_id 80
    advert_int 3
    track_script {
        check-haproxy
    }
    authentication {
        auth_type PASS
        auth_pass tuitui99
    }
    virtual_ipaddress {
        # Virtual IP
        192.168.0.254
    }
    # The notify script is taken from https://blog.51cto.com/hongchen99/2298896
    notify_master "/bin/python /etc/keepalived/notify_keepalived.py master"
    notify_backup "/bin/python /etc/keepalived/notify_keepalived.py backup"
    notify_fault "/bin/python /etc/keepalived/notify_keepalived.py fault"
}
EOF
# Save notify_keepalived.py (from the link above) first, then make it executable
[root@kube-master-01 ~]# chmod +x /etc/keepalived/notify_keepalived.py
[root@kube-master-01 ~]# systemctl enable keepalived
[root@kube-master-01 ~]# systemctl start keepalived
```
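Failover works because of the arithmetic between `priority` and the track script's `weight`. When HAProxy dies on kube-master-01, its effective priority drops from 120 to 120 - 30 = 90, below the backup's 100, so kube-master-02 takes over the virtual IP. A small sketch of that calculation for the two nodes configured here (function names are illustrative; the 120/100/-30 values come from the config):

```shell
#!/bin/sh
# Effective VRRP priority: a failing track script adds its (negative) weight
effective_priority() {
  local base="$1" weight="$2" check_ok="$3"
  if [ "$check_ok" = "yes" ]; then echo "$base"; else echo $(( base + weight )); fi
}

# Which node should hold the VIP, given whether haproxy is alive on each.
# Equal priorities are not handled here (real VRRP then compares IP addresses).
vip_owner() {
  local m b
  m="$(effective_priority 120 -30 "$1")"   # kube-master-01: MASTER, priority 120
  b="$(effective_priority 100 -30 "$2")"   # kube-master-02: BACKUP, priority 100
  if [ "$m" -gt "$b" ]; then echo kube-master-01; else echo kube-master-02; fi
}
```

The weight has to be larger than the priority gap (here 30 > 120 - 100); with `weight -10`, for example, a failed check on the master would leave it at 110 and the VIP would never move.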

3.4. Configure HAProxy and Keepalived logging

```shell
# HAProxy logs (facility local2, as set in haproxy.cfg)
[root@kube-master-01 ~]# echo "local2.* /var/log/haproxy.log" >> /etc/rsyslog.conf
# Keepalived logs: -D logs detailed messages, -d dumps the configuration, -S 0 logs to syslog facility local0
[root@kube-master-01 ~]# cp /etc/sysconfig/keepalived{,.bak}
[root@kube-master-01 ~]# echo 'KEEPALIVED_OPTIONS="-D -d -S 0"' > /etc/sysconfig/keepalived
[root@kube-master-01 ~]# echo "local0.* /var/log/keepalived.log" >> /etc/rsyslog.conf
# HAProxy sends its logs to rsyslog over UDP, so enable rsyslog's UDP input:
# uncomment these two lines in /etc/rsyslog.conf and confirm
[root@kube-master-01 ~]# grep -E '^\$(ModLoad imudp|UDPServerRun)' /etc/rsyslog.conf
$ModLoad imudp
$UDPServerRun 514
[root@kube-master-01 ~]# systemctl restart rsyslog
[root@kube-master-01 ~]# systemctl restart haproxy
[root@kube-master-01 ~]# systemctl restart keepalived
```
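The two rsyslog lines can also be uncommented non-interactively, which is handy on the second HAProxy node. A minimal sketch, assuming GNU sed and that the stock CentOS 7 file ships them as `#$ModLoad imudp` and `#$UDPServerRun 514`; the helper name is hypothetical:

```shell
#!/bin/sh
# Hypothetical helper: uncomment the legacy imudp directives in an rsyslog.conf.
# Safe to run twice: lines that are already active no longer start with "#".
enable_rsyslog_udp() {
  local conf="${1:-/etc/rsyslog.conf}"
  sed -i -e 's/^#[[:space:]]*\(\$ModLoad imudp\)/\1/' \
         -e 's/^#[[:space:]]*\(\$UDPServerRun 514\)/\1/' "$conf"
}
```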

3.5. Install kubelet, kubeadm and kubectl (all nodes)

```shell
[root@kube-master-01 ~]# cat > /etc/yum.repos.d/kubernetes.repo << EOF
[kubernetes]
name=Kubernetes
baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64/
enabled=1
gpgcheck=1
repo_gpgcheck=1
gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg
EOF
[root@kube-master-01 ~]# yum install -y kubelet-1.14.0 kubeadm-1.14.0 kubectl-1.14.0
[root@kube-master-01 ~]# systemctl enable kubelet
```

3.6. Initialize the Kubernetes cluster

```shell
# Load the IPVS kernel modules used by kube-proxy in ipvs mode
# (kube-proxy runs on every node, so repeat this on all nodes)
[root@kube-master-01 ~]# cat > /etc/sysconfig/modules/ipvs.modules << EOF
#!/bin/bash
modprobe -- ip_vs
modprobe -- ip_vs_rr
modprobe -- ip_vs_wrr
modprobe -- ip_vs_sh
# On kernels 4.19 and later this module is named nf_conntrack
modprobe -- nf_conntrack_ipv4
EOF
[root@kube-master-01 ~]# chmod +x /etc/sysconfig/modules/ipvs.modules
[root@kube-master-01 ~]# sh /etc/sysconfig/modules/ipvs.modules
[root@kube-master-01 ~]# lsmod | grep ip_vs
# Install ipvsadm to manage IPVS
[root@kube-master-01 ~]# yum -y install ipvsadm
```
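`lsmod | grep ip_vs` shows what is loaded but not what is missing. A minimal sketch of a check that names the missing modules (the `missing_modules` helper is hypothetical):

```shell
#!/bin/sh
# Hypothetical helper: read `lsmod` output on stdin and print each module named
# on the command line that is not loaded (prints nothing when all are present)
missing_modules() {
  local loaded m
  loaded="$(awk 'NR > 1 { print $1 }')"   # module names are in column 1; line 1 is the header
  for m in "$@"; do
    printf '%s\n' "$loaded" | grep -Fqx -- "$m" || echo "$m"
  done
}
```

For example, `lsmod | missing_modules ip_vs ip_vs_rr ip_vs_wrr ip_vs_sh nf_conntrack_ipv4`. If only `nf_conntrack_ipv4` is reported on a newer kernel, check for `nf_conntrack` instead.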

```shell
# Write the kubeadm configuration file
[root@kube-master-01 ~]# cat > kubeadm-init.yaml << EOF
apiVersion: kubeadm.k8s.io/v1beta1
kind: ClusterConfiguration
kubernetesVersion: v1.14.0
# 192.168.0.254 is the virtual IP and 8443 is the HAProxy listening port
controlPlaneEndpoint: "192.168.0.254:8443"
# Registry from which the control-plane images are pulled
imageRepository: registry.cn-hangzhou.aliyuncs.com/google_containers
networking:
  podSubnet: 10.244.0.0/16
apiServer:
  certSANs:
  - "kube-master-01.sk8s.io"
  - "kube-master-02.sk8s.io"
  - "kube-master-03.sk8s.io"
  - "192.168.0.201"
  - "192.168.0.202"
  - "192.168.0.203"
  - "192.168.0.254"
  - "127.0.0.1"
---
apiVersion: kubeproxy.config.k8s.io/v1alpha1
kind: KubeProxyConfiguration
mode: "ipvs"
EOF
# Initialize the cluster. --experimental-upload-certs uploads the control-plane certificates
# so the other masters can fetch them when joining (they expire after two hours).
# Keep the kubeadm join commands printed at the end; they are used in 3.8
[root@kube-master-01 ~]# kubeadm init --config=kubeadm-init.yaml --experimental-upload-certs
# Create the cluster administration user (named kubelet here)
[root@kube-master-01 ~]# groupadd -g 5000 kubelet
[root@kube-master-01 ~]# useradd -c "kubernetes-admin-user" -G docker -u 5000 -g 5000 kubelet
[root@kube-master-01 ~]# echo "kubelet" | passwd --stdin kubelet
# Copy the cluster admin kubeconfig to the administration user
[root@kube-master-01 ~]# mkdir -p /home/kubelet/.kube
[root@kube-master-01 ~]# cp -i /etc/kubernetes/admin.conf /home/kubelet/.kube/config
[root@kube-master-01 ~]# chown -R kubelet:kubelet /home/kubelet/.kube
```

3.7. Update CoreDNS

```shell
# Replace the CoreDNS Deployment created by kubeadm: 3 replicas spread across hosts, CoreDNS 1.5.0
[root@kube-master-01 ~]# su - kubelet
[kubelet@kube-master-01 ~]$ cat > coredns.yaml << EOF
apiVersion: apps/v1
kind: Deployment
metadata:
  labels:
    k8s-app: kube-dns
  name: coredns
  namespace: kube-system
spec:
  replicas: 3
  selector:
    matchLabels:
      k8s-app: kube-dns
  strategy:
    rollingUpdate:
      maxSurge: 25%
      maxUnavailable: 1
    type: RollingUpdate
  template:
    metadata:
      labels:
        k8s-app: kube-dns
    spec:
      affinity:
        podAntiAffinity:
          preferredDuringSchedulingIgnoredDuringExecution:
          - weight: 100
            podAffinityTerm:
              labelSelector:
                matchExpressions:
                - key: k8s-app
                  operator: In
                  values:
                  - kube-dns
              topologyKey: kubernetes.io/hostname
      containers:
      - args:
        - -conf
        - /etc/coredns/Corefile
        image: coredns/coredns:1.5.0
        imagePullPolicy: IfNotPresent
        livenessProbe:
          failureThreshold: 5
          httpGet:
            path: /health
            port: 8080
            scheme: HTTP
          initialDelaySeconds: 60
          periodSeconds: 10
          successThreshold: 1
          timeoutSeconds: 5
        name: coredns
        ports:
        - containerPort: 53
          name: dns
          protocol: UDP
        - containerPort: 53
          name: dns-tcp
          protocol: TCP
        - containerPort: 9153
          name: metrics
          protocol: TCP
        resources:
          limits:
            memory: 170Mi
          requests:
            cpu: 100m
            memory: 70Mi
        securityContext:
          allowPrivilegeEscalation: false
          capabilities:
            add:
            - NET_BIND_SERVICE
            drop:
            - all
          readOnlyRootFilesystem: true
        terminationMessagePath: /dev/termination-log
        terminationMessagePolicy: File
        volumeMounts:
        - mountPath: /etc/coredns
          name: config-volume
          readOnly: true
      dnsPolicy: Default
      restartPolicy: Always
      schedulerName: default-scheduler
      securityContext: {}
      serviceAccount: coredns
      serviceAccountName: coredns
      terminationGracePeriodSeconds: 30
      tolerations:
      - key: CriticalAddonsOnly
        operator: Exists
      - effect: NoSchedule
        key: node-role.kubernetes.io/master
      - effect: NoSchedule
        key: node.kubernetes.io/not-ready
      volumes:
      - configMap:
          defaultMode: 420
          items:
          - key: Corefile
            path: Corefile
          name: coredns
        name: config-volume
EOF
[kubelet@kube-master-01 ~]$ kubectl apply -f coredns.yaml
```

3.8. Join the other nodes to the cluster

```shell
# Join the other masters: run as root on kube-master-02 and kube-master-03, using the
# token, CA cert hash and certificate key printed by your own `kubeadm init`
[root@kube-master-02 ~]# kubeadm join 192.168.0.254:8443 --token h4n7uy.5qibssxu27vveko5 \
    --discovery-token-ca-cert-hash sha256:a27738a4457d57ee611dd1c0281aeaabd32bc834797fe307980b95755b052e41 \
    --experimental-control-plane --certificate-key eb37e5810fe300a42c5b610117ad57acf682a92da928cf94435a135aa338bc12
# Join the worker nodes: run as root on kube-node-01 and kube-node-02
# (the CA cert hash is the same for masters and workers)
[root@kube-node-01 ~]# kubeadm join 192.168.0.254:8443 --token h4n7uy.5qibssxu27vveko5 \
    --discovery-token-ca-cert-hash sha256:a27738a4457d57ee611dd1c0281aeaabd32bc834797fe307980b95755b052e41
```
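If the `kubeadm init` output was lost, the values for `kubeadm join` can be recovered on kube-master-01. The CA cert hash is the SHA-256 of the cluster CA's public key in DER form. The pipeline below is adapted from the kubeadm documentation and assumes the default RSA CA key. Bootstrap tokens have the form `[a-z0-9]{6}.[a-z0-9]{16}`. A minimal sketch; both helper names are hypothetical:

```shell
#!/bin/sh
# Hypothetical helper: recompute --discovery-token-ca-cert-hash (without the "sha256:" prefix)
ca_cert_hash() {
  local ca="${1:-/etc/kubernetes/pki/ca.crt}"
  openssl x509 -pubkey -noout -in "$ca" \
    | openssl rsa -pubin -outform der 2>/dev/null \
    | openssl dgst -sha256 -hex \
    | sed 's/^.* //'
}

# Hypothetical helper: succeed if $1 looks like a kubeadm bootstrap token
is_valid_token() {
  printf '%s\n' "$1" | LC_ALL=C grep -Eq '^[a-z0-9]{6}\.[a-z0-9]{16}$'
}
```

On kube-master-01, `echo "sha256:$(ca_cert_hash)"` should print the value passed to `--discovery-token-ca-cert-hash`. Bootstrap tokens expire after 24 hours by default; `kubeadm token create --print-join-command` prints a fresh worker join command.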