StreamGraph

A StreamGraph is a DAG (stored as an adjacency list) that holds the topology of the whole job. It is composed of StreamNode and StreamEdge objects.

Call StreamExecutionEnvironment.getStreamGraph() to generate a StreamGraph. By default, this call clears the transformations saved in the StreamExecutionEnvironment, so StreamExecutionEnvironment.execute() cannot be called afterwards. If the transformations need to be reused, use the overloaded method StreamExecutionEnvironment.getStreamGraph(String jobName, boolean clearTransformations) instead. Its source code is as follows:

    public StreamGraph getStreamGraph(String jobName, boolean clearTransformations) {
        StreamGraph streamGraph = getStreamGraphGenerator().setJobName(jobName).generate();
        if (clearTransformations) {
            this.transformations.clear();
        }
        return streamGraph;
    }

This code creates a StreamGraphGenerator (all transformations are handed to it when it is created) and calls its generate method to produce the StreamGraph.
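The effect of the clearTransformations flag can be illustrated with a small stand-alone model. The sketch below does not use Flink: MiniEnvironment and its methods are hypothetical stand-ins for StreamExecutionEnvironment, and the "graph" is simply a copy of the saved transformation names.

```java
import java.util.ArrayList;
import java.util.List;

final class ClearTransformationsSketch {

    /** Hypothetical stand-in for StreamExecutionEnvironment (not the Flink class). */
    static final class MiniEnvironment {
        private final List<String> transformations = new ArrayList<>();

        void addOperator(String name) {
            transformations.add(name);
        }

        /** The returned list stands in for the StreamGraph built from the saved transformations. */
        List<String> getStreamGraph(boolean clearTransformations) {
            List<String> graph = new ArrayList<>(transformations);
            if (clearTransformations) {
                transformations.clear();
            }
            return graph;
        }

        int savedTransformations() {
            return transformations.size();
        }
    }

    public static void main(String[] args) {
        MiniEnvironment env = new MiniEnvironment();
        env.addOperator("source");
        env.addOperator("sink");

        env.getStreamGraph(false); // transformations are kept for a later call
        System.out.println("saved after getStreamGraph(false): " + env.savedTransformations());

        env.getStreamGraph(true); // transformations are cleared
        System.out.println("saved after getStreamGraph(true): " + env.savedTransformations());
    }
}
```

In this model, a second graph can only be generated from the same transformations if the earlier call passed false.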

StreamGraphGenerator

generate

    public StreamGraph generate() {
        streamGraph = new StreamGraph(executionConfig, checkpointConfig, savepointRestoreSettings);
        shouldExecuteInBatchMode = shouldExecuteInBatchMode(runtimeExecutionMode);
        configureStreamGraph(streamGraph);

        alreadyTransformed = new HashMap<>();

        for (Transformation<?> transformation : transformations) {
            transform(transformation);
        }

        final StreamGraph builtStreamGraph = streamGraph;

        alreadyTransformed.clear();
        alreadyTransformed = null;
        streamGraph = null;

        return builtStreamGraph;
    }

The core of this method does four things:

- Initialize StreamGraph;

- Create alreadyTransformed to prevent duplicate creation of StreamNode for the same transformation;

- Transform each transformation to create the corresponding StreamNode and StreamEdge;

- Finally, clean up the temporary state.

The alreadyTransformed map created in step 2 not only prevents StreamNodes from being created twice, but also records the mapping between a Transformation and the ids of the nodes it was transformed into, so that the corresponding StreamNodes can be looked up directly from a Transformation. During the conversion, some transformations are not converted to a StreamNode but to a virtual node. These virtual nodes are assigned newly generated ids, so they have to be looked up through the mapping recorded in alreadyTransformed. Transformation has a static member idCounter, which is used to assign a unique id to each Transformation. Calling Transformation.getNewNodeId() returns a new, unique Transformation id. The getNewNodeId code is as follows:

    public static int getNewNodeId() {
        idCounter++;
        return idCounter;
    }

While transforming in step 3, the StreamGraphGenerator recursively transforms the upstream inputs of each Transformation, which ensures that every Transformation is transformed. (In fact, the transformations list in the StreamGraphGenerator does not contain every Transformation. Only some transformations are added to the StreamExecutionEnvironment when the DataStream API is used, and these are handed to the StreamGraphGenerator when it is created.)
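The interplay of the recursive upstream transform and the alreadyTransformed map can be sketched without Flink. In the model below, MiniTransformation and RecursiveTransformSketch are hypothetical names, a "node" is reduced to the transformation id, and only the sink is registered. The upstream transformations are still reached through recursion, and the shared source is created only once.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.Collections;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

final class RecursiveTransformSketch {

    /** Hypothetical stand-in for Transformation: an id plus its upstream inputs. */
    static final class MiniTransformation {
        final int id;
        final List<MiniTransformation> inputs;

        MiniTransformation(int id, MiniTransformation... inputs) {
            this.id = id;
            this.inputs = Arrays.asList(inputs);
        }
    }

    private final Map<MiniTransformation, List<Integer>> alreadyTransformed = new HashMap<>();

    /** Order in which nodes were created; stands in for additions to the StreamGraph. */
    final List<Integer> createdNodes = new ArrayList<>();

    /** Transforms every registered transformation; upstream inputs are reached recursively. */
    void generate(List<MiniTransformation> transformations) {
        for (MiniTransformation transformation : transformations) {
            transform(transformation);
        }
    }

    private List<Integer> transform(MiniTransformation transformation) {
        List<Integer> known = alreadyTransformed.get(transformation);
        if (known != null) {
            return known; // already transformed, do not create a second node
        }
        for (MiniTransformation input : transformation.inputs) {
            transform(input); // upstream first
        }
        createdNodes.add(transformation.id);
        List<Integer> ids = Collections.singletonList(transformation.id);
        alreadyTransformed.put(transformation, ids);
        return ids;
    }

    public static void main(String[] args) {
        MiniTransformation source = new MiniTransformation(1);
        MiniTransformation left = new MiniTransformation(2, source);
        MiniTransformation right = new MiniTransformation(3, source);
        MiniTransformation sink = new MiniTransformation(4, left, right);

        RecursiveTransformSketch generator = new RecursiveTransformSketch();
        generator.generate(Collections.singletonList(sink)); // only the sink is registered
        System.out.println(generator.createdNodes);
    }
}
```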

Virtual node

The structure of the virtual node is a Tuple3:

Tuple3<Integer, StreamPartitioner<?>, ShuffleMode>

Three parts of information are recorded:

- Upstream node id;

- Partitioner;

- Shuffle mode, i.e. the data exchange mode (PIPELINED, BATCH, or UNDEFINED).

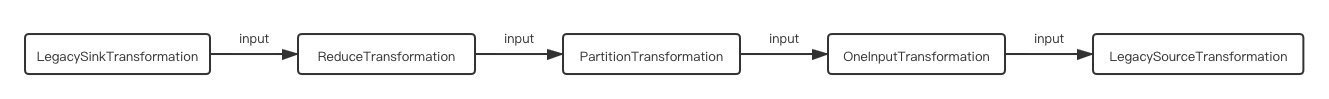

Take the PartitionTransformation in the figure above as an example. It only records partitioning information and contains no actual operation, so it is transformed into a virtual node.

Assume the DataStream program is the first thing executed after the process starts, so transformation ids are assigned starting from 1 (in the figure above, ids increase from 1 going from right to left). The id of the PartitionTransformation is then 3. By the time it is converted to a virtual node, idCounter is 5 (there are five transformations in the figure, so idCounter has already been incremented to 5 while the DataStream was built). Calling Transformation.getNewNodeId() again therefore returns 6, which is assigned to the virtual node created for the PartitionTransformation, and the mapping 3 -> 6 is recorded in alreadyTransformed.

The PartitionTransformation is generated by keyBy. Its upstream node has id 2 and its partitioner is KeyGroupStreamPartitioner. Since no shuffleMode was specified manually, it defaults to UNDEFINED. The virtual node that is finally generated is therefore (2, KeyGroupStreamPartitioner, UNDEFINED).
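The id bookkeeping in this example can be replayed with a small model. The sketch below is not Flink code: VirtualNodeIdSketch and VirtualPartitionNode are hypothetical names, the counter is an instance field rather than a static one, the partitioner and shuffle mode are plain strings, and alreadyTransformed is simplified to an id-to-id map.

```java
import java.util.HashMap;
import java.util.Map;

final class VirtualNodeIdSketch {

    /** Simplified model of a virtual partition node (the text describes it as a Tuple3). */
    static final class VirtualPartitionNode {
        final int upstreamId;
        final String partitioner;
        final String shuffleMode;

        VirtualPartitionNode(int upstreamId, String partitioner, String shuffleMode) {
            this.upstreamId = upstreamId;
            this.partitioner = partitioner;
            this.shuffleMode = shuffleMode;
        }

        @Override
        public String toString() {
            return "(" + upstreamId + ", " + partitioner + ", " + shuffleMode + ")";
        }
    }

    private int idCounter = 0; // assumption: instance field here, static in the text above
    final Map<Integer, Integer> alreadyTransformed = new HashMap<>();
    final Map<Integer, VirtualPartitionNode> virtualPartitionNodes = new HashMap<>();

    int getNewNodeId() {
        idCounter++;
        return idCounter;
    }

    /** Registers a virtual node for a partition transformation and returns its new id. */
    int addVirtualPartitionNode(int transformationId, int upstreamId, String partitioner) {
        int virtualId = getNewNodeId();
        virtualPartitionNodes.put(
                virtualId, new VirtualPartitionNode(upstreamId, partitioner, "UNDEFINED"));
        alreadyTransformed.put(transformationId, virtualId);
        return virtualId;
    }

    public static void main(String[] args) {
        VirtualNodeIdSketch sketch = new VirtualNodeIdSketch();
        for (int i = 0; i < 5; i++) {
            sketch.getNewNodeId(); // the five transformations receive ids 1..5
        }
        int virtualId = sketch.addVirtualPartitionNode(3, 2, "KeyGroupStreamPartitioner");
        System.out.println("3 -> " + virtualId + " " + sketch.virtualPartitionNodes.get(virtualId));
    }
}
```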

transform

StreamGraphGenerator holds a map, translatorMap, which stores the mapping from each Transformation type to its TransformationTranslator. After obtaining the TransformationTranslator for a transformation, translateForStreaming or translateForBatch is executed depending on whether the job runs in streaming or batch mode. This walkthrough assumes streaming mode, so we look directly at the source code of translateForStreaming.

    public final Collection<Integer> translateForStreaming(
            final T transformation, final Context context) {
        checkNotNull(transformation);
        checkNotNull(context);

        final Collection<Integer> transformedIds =
                translateForStreamingInternal(transformation, context);
        configure(transformation, context);

        return transformedIds;
    }

translateForStreaming follows the template method design pattern. It is defined in SimpleTransformationTranslator as a final method, and each TransformationTranslator implements translateForStreamingInternal according to its own needs. In most cases the logic ends up in the translateInternal method.
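The template method structure can be shown in isolation. The following sketch is a simplified model, not the Flink classes: BaseTranslator and SinkTranslator are hypothetical names, the Context parameter is omitted, and a transformation is modelled as just its id. The final method fixes the order of the steps, while subclasses supply only the internal translation step.

```java
import java.util.ArrayList;
import java.util.Collection;
import java.util.Collections;
import java.util.List;
import java.util.Objects;

final class TemplateMethodSketch {

    /** Hypothetical base class mirroring the shape of the final method shown above. */
    abstract static class BaseTranslator<T> {
        /** Records the order of the steps so the skeleton can be observed. */
        final List<String> calls = new ArrayList<>();

        /** Template method: the skeleton is fixed and cannot be overridden. */
        public final Collection<Integer> translateForStreaming(T transformation) {
            Objects.requireNonNull(transformation);
            Collection<Integer> transformedIds = translateForStreamingInternal(transformation);
            configure(transformation);
            return transformedIds;
        }

        /** The variable step that each concrete translator supplies. */
        protected abstract Collection<Integer> translateForStreamingInternal(T transformation);

        private void configure(T transformation) {
            calls.add("configure");
        }
    }

    /** Hypothetical concrete translator; a transformation is modelled as just its id. */
    static final class SinkTranslator extends BaseTranslator<Integer> {
        @Override
        protected Collection<Integer> translateForStreamingInternal(Integer transformationId) {
            calls.add("translateInternal");
            return Collections.singletonList(transformationId);
        }
    }

    public static void main(String[] args) {
        SinkTranslator translator = new SinkTranslator();
        System.out.println(translator.translateForStreaming(5));
        System.out.println(translator.calls);
    }
}
```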

translateInternal

The implementation differs between TransformationTranslators, but each one basically does one or a combination of the following:

- Build a StreamNode for the Transformation and add it to the StreamGraph (addOperator);

- Establish an edge between the StreamNode and the corresponding upstream StreamNode and add it to the StreamGraph;

- Build a virtual node for the Transformation and add it to the StreamGraph.

Taking the figure above as an example, LegacySinkTransformation first looks up its translator, LegacySinkTransformationTranslator, and calls its translateForStreamingInternal implementation (which ends up in translateInternal). The core logic in translateInternal consists of two steps:

- Call StreamGraph.addSink() to add StreamNode;

- Call StreamGraph.addEdge() to add an edge.

StreamGraph.addSink()

addSink essentially calls addOperator and additionally records a reference to the StreamNode in the sinks member of the StreamGraph. Similarly, addSource calls addOperator and additionally records a reference to the StreamNode in sources.
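The relationship between these three methods can be sketched as follows. MiniStreamGraph is a hypothetical miniature, not the Flink class: a node is reduced to an operator name, the method signatures are simplified, and the node ids in sources and sinks stand in for the references.

```java
import java.util.HashMap;
import java.util.HashSet;
import java.util.Map;
import java.util.Set;

final class AddSinkSketch {

    /** Hypothetical miniature of StreamGraph; a node is reduced to its operator name. */
    static final class MiniStreamGraph {
        final Map<Integer, String> streamNodes = new HashMap<>();
        final Set<Integer> sources = new HashSet<>();
        final Set<Integer> sinks = new HashSet<>();

        /** Registers the node itself. */
        void addOperator(int vertexId, String operatorName) {
            streamNodes.put(vertexId, operatorName);
        }

        /** addOperator plus bookkeeping in sources. */
        void addSource(int vertexId, String operatorName) {
            addOperator(vertexId, operatorName);
            sources.add(vertexId);
        }

        /** addOperator plus bookkeeping in sinks. */
        void addSink(int vertexId, String operatorName) {
            addOperator(vertexId, operatorName);
            sinks.add(vertexId);
        }
    }

    public static void main(String[] args) {
        MiniStreamGraph graph = new MiniStreamGraph();
        graph.addSource(1, "source");
        graph.addOperator(4, "reduce");
        graph.addSink(5, "sink");
        System.out.println(graph.streamNodes.keySet() + " sources=" + graph.sources + " sinks=" + graph.sinks);
    }
}
```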

StreamGraph.addEdge()

Adding an edge to the StreamGraph mainly establishes the connection between the current node and each of its upstream nodes. Therefore, all input ids are fetched first and addEdge is called once per upstream node. The code is as follows:

    for (Integer inputId : context.getStreamNodeIds(input)) {
        streamGraph.addEdge(inputId, transformationId, 0);
    }

context.getStreamNodeIds(input) looks up the ids of the upstream nodes in StreamGraphGenerator.alreadyTransformed. As mentioned above, some transformations become virtual nodes during the transformation and are assigned new ids. Using the upstream id stored in the transformation directly may therefore be invalid, so a lookup through the mapping is required.

After obtaining the upstream node id, streamGraph.addEdge(inputId, transformationId, 0) is called to add the edge. This method eventually reaches streamGraph.addEdgeInternal, which handles the case where the upstream node is a virtual node: it finds the real upstream node X recorded in the virtual node and then connects X to the current StreamNode, as follows:

    private void addEdgeInternal(
            Integer upStreamVertexID,
            Integer downStreamVertexID,
            int typeNumber,
            StreamPartitioner<?> partitioner,
            List<String> outputNames,
            OutputTag outputTag,
            ShuffleMode shuffleMode) {

        if (virtualSideOutputNodes.containsKey(upStreamVertexID)) {
            int virtualId = upStreamVertexID;
            // Find the upstream node of the virtual node record
            upStreamVertexID = virtualSideOutputNodes.get(virtualId).f0;
            if (outputTag == null) {
                outputTag = virtualSideOutputNodes.get(virtualId).f1;
            }
            addEdgeInternal(
                    upStreamVertexID,
                    downStreamVertexID,
                    typeNumber,
                    partitioner,
                    null,
                    outputTag,
                    shuffleMode);
        } else if (virtualPartitionNodes.containsKey(upStreamVertexID)) {
            int virtualId = upStreamVertexID;
            // Find the upstream node of the virtual node record
            upStreamVertexID = virtualPartitionNodes.get(virtualId).f0;
            if (partitioner == null) {
                partitioner = virtualPartitionNodes.get(virtualId).f1;
            }
            shuffleMode = virtualPartitionNodes.get(virtualId).f2;
            addEdgeInternal(
                    upStreamVertexID,
                    downStreamVertexID,
                    typeNumber,
                    partitioner,
                    outputNames,
                    outputTag,
                    shuffleMode);
        } else {
            StreamNode upstreamNode = getStreamNode(upStreamVertexID);
            StreamNode downstreamNode = getStreamNode(downStreamVertexID);

            // Set partitioner and shuffleMode

            StreamEdge edge =
                    new StreamEdge(
                            upstreamNode,
                            downstreamNode,
                            typeNumber,
                            partitioner,
                            outputTag,
                            shuffleMode);

            getStreamNode(edge.getSourceId()).addOutEdge(edge);
            getStreamNode(edge.getTargetId()).addInEdge(edge);
        }
    }

Take ReduceTransformation as an example (its own id is 4). The input transformation id recorded by ReduceTransformation is 3. First, alreadyTransformed maps this id to node id 6. Then the real upstream node 2 is found from the virtual node (2, KeyGroupStreamPartitioner, UNDEFINED), and a StreamEdge 2 -> 4 is created. The partitioner and shuffleMode are recorded in the StreamEdge when it is created, and the edge is added to the outEdges of the source vertex and the inEdges of the target vertex.
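The ReduceTransformation walkthrough can be replayed with a reduced model of the edge resolution. The sketch below is an illustration only: AddEdgeSketch, VirtualPartitionNode and Edge are hypothetical names, side-output virtual nodes are left out, and the partitioner and shuffle mode are plain strings. It covers only the step where a virtual upstream id is replaced by the real upstream node before the edge is created.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

final class AddEdgeSketch {

    /** Simplified virtual partition node: upstream id, partitioner name, shuffle mode. */
    static final class VirtualPartitionNode {
        final int upstreamId;
        final String partitioner;
        final String shuffleMode;

        VirtualPartitionNode(int upstreamId, String partitioner, String shuffleMode) {
            this.upstreamId = upstreamId;
            this.partitioner = partitioner;
            this.shuffleMode = shuffleMode;
        }
    }

    /** Simplified stand-in for StreamEdge. */
    static final class Edge {
        final int sourceId;
        final int targetId;
        final String partitioner;
        final String shuffleMode;

        Edge(int sourceId, int targetId, String partitioner, String shuffleMode) {
            this.sourceId = sourceId;
            this.targetId = targetId;
            this.partitioner = partitioner;
            this.shuffleMode = shuffleMode;
        }
    }

    final Map<Integer, VirtualPartitionNode> virtualPartitionNodes = new HashMap<>();
    final List<Edge> edges = new ArrayList<>();

    void addEdge(int upstreamId, int downstreamId) {
        addEdgeInternal(upstreamId, downstreamId, null, null);
    }

    private void addEdgeInternal(
            int upstreamId, int downstreamId, String partitioner, String shuffleMode) {
        VirtualPartitionNode virtualNode = virtualPartitionNodes.get(upstreamId);
        if (virtualNode != null) {
            // Virtual upstream: continue with the real upstream node it records.
            if (partitioner == null) {
                partitioner = virtualNode.partitioner;
            }
            addEdgeInternal(virtualNode.upstreamId, downstreamId, partitioner, virtualNode.shuffleMode);
        } else {
            edges.add(new Edge(upstreamId, downstreamId, partitioner, shuffleMode));
        }
    }

    public static void main(String[] args) {
        Map<Integer, Integer> alreadyTransformed = new HashMap<>();
        alreadyTransformed.put(3, 6); // PartitionTransformation 3 became virtual node 6

        AddEdgeSketch graph = new AddEdgeSketch();
        graph.virtualPartitionNodes.put(
                6, new VirtualPartitionNode(2, "KeyGroupStreamPartitioner", "UNDEFINED"));

        int inputId = alreadyTransformed.get(3); // input of the reduce, mapped to 6
        graph.addEdge(inputId, 4);               // the reduce itself has id 4

        Edge edge = graph.edges.get(0);
        System.out.println(edge.sourceId + " -> " + edge.targetId + " via " + edge.partitioner);
    }
}
```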