1: RBD introduction

A block is a sequence of bytes (for example, a 512-byte block of data). Block-based storage interfaces are the most common way to store data on rotating media such as hard disks, CDs, floppy disks, and even traditional 9-track tape. Because the block device interface is ubiquitous, a virtual block device is an ideal way to interact with a mass data storage system such as Ceph.

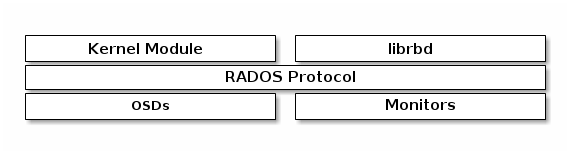

Ceph block devices are thin-provisioned and resizable, and they stripe their data across multiple OSDs in the Ceph cluster. They build on RADOS capabilities such as snapshots, replication, and consistency. Ceph's RADOS Block Device (RBD) talks to the OSDs through either the kernel module or the librbd library.
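To make the striping concrete, here is a minimal sketch of how a byte offset in an image maps to a backing object. It assumes the default layout (4 MiB objects, no custom striping); `object_index` and `object_offset` are hypothetical helper names, not Ceph commands.

```shell
#!/bin/bash
# Map a byte offset in an RBD image to the object that holds it.
# Assumption: default layout, i.e. 4 MiB objects and no custom striping
# (stripe unit equal to the object size, stripe count 1).
object_size=$(( 4 * 1024 * 1024 ))

object_index()  { echo $(( $1 / object_size )); }   # which object
object_offset() { echo $(( $1 % object_size )); }   # position inside it

offset=$(( 10 * 1024 * 1024 + 100 ))   # byte at 10 MiB + 100
echo "object $(object_index "$offset"), offset $(object_offset "$offset")"
# prints: object 2, offset 2097252
```

Each object is placed by CRUSH independently, so the objects of a large image end up spread across many OSDs.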


Ceph block devices deliver high performance and scale out with the cluster. They can be consumed by the kernel client, by virtualization stacks such as QEMU/KVM, and by cloud computing platforms such as OpenStack and CloudStack. The same cluster can run the Ceph RADOS Gateway, the Ceph file system, and Ceph block devices at the same time.

2: Create and use block devices

Create a pool named block with 6 placement groups

[root@ceph-node1 ~]# ceph osd pool create block 6

pool 'block' created

Create a user for the client and copy its keyring file to the client with scp

[root@ceph-node1 ~]# ceph auth get-or-create client.rbd mon 'allow r' osd 'allow class-read object_prefix rbd_children, allow rwx pool=block' | tee ./ceph.client.rbd.keyring

[client.rbd]

key = AQA04PpdtJpbGxAAd+lCJFQnDfRlWL5cFUShoQ==

[root@ceph-node1 ~]# scp ceph.client.rbd.keyring root@ceph-client:/etc/ceph
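Before going on, it can help to confirm that the copied keyring really contains the client.rbd entry. The following is a minimal sketch that reads the key for one entity out of a keyring file; `keyring_key` is a hypothetical helper, not part of the Ceph CLI.

```shell
#!/bin/bash
# Print the secret key stored for one entity in a Ceph keyring file.
# keyring_key is a hypothetical helper, not part of the Ceph CLI.
keyring_key() {
    local file=$1 entity=$2
    awk -v section="[$entity]" '
        # A line starting with "[" opens a new section; remember if it is ours.
        /^\[/ { in_section = ($0 == section) }
        # Inside our section, print the value of the "key = ..." line.
        in_section && $1 == "key" && $2 == "=" { print $3; exit }
    ' "$file"
}

# Example, using the path from the scp step:
#   keyring_key /etc/ceph/ceph.client.rbd.keyring client.rbd
```

An empty result means the entity is missing from the file, in which case the rbd commands run with `--name client.rbd` would not be able to authenticate.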

On the client, create a 2 GB block device image

[root@ceph-client /]# rbd create block/rbd0 --size 2048 --name client.rbd
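The `--size` value is read as megabytes when no unit is given. As a rough sketch of what 2048 means in other units, the arithmetic below converts it to bytes, 512-byte sectors, and the maximum number of backing objects. The 4 MiB object size is an assumption (the usual default); the helper names are hypothetical.

```shell
#!/bin/bash
# Size arithmetic for an RBD image created with --size 2048.
# Assumption: 4 MiB objects; 'rbd info' reports the actual object size.
size_mib=2048
object_mib=4

image_bytes()   { echo $(( $1 * 1024 * 1024 )); }
image_sectors() { echo $(( $1 * 1024 * 1024 / 512 )); }
image_objects() { echo $(( ($1 + $2 - 1) / $2 )); }   # rounded up

echo "bytes:   $(image_bytes "$size_mib")"                    # 2147483648
echo "sectors: $(image_sectors "$size_mib")"                  # 4194304
echo "objects: $(image_objects "$size_mib" "$object_mib")"    # 512
```

Because the image is thin-provisioned, the object count is only an upper bound: objects are created in the pool as data is written, not when the image is created.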

Map this block device to the client

[root@ceph-client /]# rbd map --image block/rbd0 --name client.rbd

/dev/rbd0

[root@ceph-client /]# rbd showmapped --name client.rbd

id pool image snap device

0 block rbd0 - /dev/rbd0

Note: the map command may fail with the following error

[root@ceph-client /]# rbd map --image block/rbd0 --name client.rbd

rbd: sysfs write failed

In some cases useful info is found in syslog - try "dmesg | tail".

rbd: map failed: (2) No such file or directory

There are three possible fixes; see my blog post "rbd: sysfs write failed solution".
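One commonly reported cause of `sysfs write failed` (not necessarily one of the three fixes referenced above) is an image carrying features that the client kernel's rbd module does not support. The mount script later in this post disables object-map, fast-diff, and deep-flatten, which suggests that applied here. The sketch below lists the features that fall outside a supported set; `unsupported_features` is a hypothetical helper, and its default supported set is an assumption that depends on the kernel version.

```shell
#!/bin/bash
# List the image features that are NOT in a kernel-supported set, i.e. the
# candidates for 'rbd feature disable'. unsupported_features is a hypothetical
# helper; the default supported set is an assumption, adjust it for your kernel.
unsupported_features() {
    local enabled=$1 supported=${2:-"layering exclusive-lock"}
    local feature out=()
    # 'rbd info' prints features comma-separated; accept commas or spaces.
    for feature in ${enabled//,/ }; do
        case " $supported " in
            *" $feature "*) ;;            # supported, nothing to do
            *) out+=("$feature") ;;       # not supported, report it
        esac
    done
    echo "${out[*]}"
}

# Possible use on the client (not run here):
#   features=$(rbd info block/rbd0 --name client.rbd | awk -F': ' '/^[ \t]*features:/ {print $2}')
#   rbd feature disable block/rbd0 $(unsupported_features "$features") --name client.rbd
```

Running `dmesg | tail`, as the error message suggests, is still the most direct way to see what the kernel actually rejected.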

Create a file system and mount the block device (the mount point /ceph-rbd0 must already exist)

[root@ceph-client /]# fdisk -l /dev/rbd0

Disk /dev/rbd0: 2147 MB, 2147483648 bytes, 4194304 sectors

Units = sectors of 1 * 512 = 512 bytes

Sector size (logical/physical): 512 bytes / 512 bytes

I/O size (minimum/optimal): 4194304 bytes / 4194304 bytes

[root@ceph-client /]# mkfs.xfs /dev/rbd0

meta-data=/dev/rbd0 isize=512 agcount=8, agsize=65536 blks

= sectsz=512 attr=2, projid32bit=1

= crc=1 finobt=0, sparse=0

data = bsize=4096 blocks=524288, imaxpct=25

= sunit=1024 swidth=1024 blks

naming =version 2 bsize=4096 ascii-ci=0 ftype=1

log =internal log bsize=4096 blocks=2560, version=2

= sectsz=512 sunit=8 blks, lazy-count=1

realtime =none extsz=4096 blocks=0, rtextents=0

[root@ceph-client /]# mount /dev/rbd0 /ceph-rbd0

[root@ceph-client /]# df -Th /ceph-rbd0

Filesystem Type Size Used Avail Use% Mounted on

/dev/rbd0 xfs 2.0G 33M 2.0G 2% /ceph-rb

Test writing data

[root@ceph-client /]# dd if=/dev/zero of=/ceph-rbd0/file count=100 bs=1M

100+0 records in

100+0 records out

104857600 bytes (105 MB) copied, 0.0674301 s, 1.6 GB/s

[root@ceph-client /]# ls -lh /ceph-rbd0/file

-rw-r--r-- 1 root root 100M Dec 19 10:50 /ceph-rbd0/file
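A rate of 1.6 GB/s for 100 MB most likely reflects the client's page cache rather than the cluster, because dd returns before the data has been flushed. A minimal sketch of a write test that includes the flush follows; `write_test` is a hypothetical helper and `conv=fsync` is a GNU dd option.

```shell
#!/bin/bash
# Write test that includes the time needed to flush data to the device.
# write_test is a hypothetical helper; conv=fsync is a GNU dd option.
write_test() {
    local target=$1 mib=${2:-100}
    # conv=fsync makes dd call fsync() on the output file before it exits,
    # so the reported rate covers the flush and not only the page cache.
    dd if=/dev/zero of="$target" bs=1M count="$mib" conv=fsync
}

# Example: write_test /ceph-rbd0/file 100
```

The number reported this way should be closer to what the RBD image can sustain, although a single 100 MB sequential write is still only a rough indication.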

Create a systemd service

[root@ceph-client /]# cat /usr/local/bin/rbd-mount

#!/bin/bash

# Pool name where block device image is stored

export poolname=block

# Disk image name

export rbdimage0=rbd0

# Mounted Directory

export mountpoint0=/ceph-rbd0

# The action (m = mount, u = unmount) is passed from the systemd service as an argument

# Are we mounting or unmounting

if [ "$1" == "m" ]; then

modprobe rbd

# The pool must be named explicitly; otherwise rbd looks in the default pool

rbd feature disable $poolname/$rbdimage0 object-map fast-diff deep-flatten --id rbd --keyring /etc/ceph/ceph.client.rbd.keyring

rbd map $poolname/$rbdimage0 --id rbd --keyring /etc/ceph/ceph.client.rbd.keyring

mkdir -p $mountpoint0

mount /dev/rbd/$poolname/$rbdimage0 $mountpoint0

fi

if [ "$1" == "u" ]; then

umount $mountpoint0

rbd unmap /dev/rbd/$poolname/$rbdimage0

fi

[root@ceph-client ~]# cat /etc/systemd/system/rbd-mount.service

[Unit]

Description=RADOS block device mapping for rbd0 in pool block

Conflicts=shutdown.target

Wants=network-online.target

After=NetworkManager-wait-online.service

[Service]

Type=oneshot

RemainAfterExit=yes

ExecStart=/usr/local/bin/rbd-mount m

ExecStop=/usr/local/bin/rbd-mount u

[Install]

WantedBy=multi-user.target

Mount automatically at boot

[root@ceph-client ~]# systemctl daemon-reload

[root@ceph-client ~]# systemctl enable rbd-mount.service
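`systemctl enable` only registers the unit for the next boot; it does not run it now. To try the service without rebooting, it can be started by hand and the result checked. The sketch below checks whether a directory is currently mounted from an rbd device; `check_rbd_mount` is a hypothetical helper that reads /proc/mounts by default.

```shell
#!/bin/bash
# check_rbd_mount is a hypothetical helper: it succeeds only if the given
# directory is currently a mount point backed by an rbd device.
# The second argument (mount table to read) defaults to /proc/mounts.
check_rbd_mount() {
    local dir=$1 mounts=${2:-/proc/mounts}
    awk -v dir="$dir" '
        $2 == dir && $1 ~ /^\/dev\/rbd/ { found = 1 }
        END { exit !found }
    ' "$mounts"
}

# Possible use on the client (not run here):
#   systemctl start rbd-mount.service
#   check_rbd_mount /ceph-rbd0 && echo "mounted"
```

If the check fails after a start, `systemctl status rbd-mount.service` shows the output of the mount script.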