Linux device driver learning notes - Advanced Character driver operations - Note.4 [blocking I/O]

- LINUX DEVICE DRIVERS,3RD EDITION

- Many of the code excerpts are taken from the Linux kernel source code

6.2 blocking I/O

When a request cannot be satisfied immediately, the driver should (by default) block the process, putting it to sleep until the request can proceed. This section shows how to put a process to sleep and wake it up again later.

6.2.1 introduction to sleep

When a process is put to sleep, it is marked as being in a special state and removed from the scheduler's run queue. Until something comes along to change that state, the process will not be scheduled on any CPU and therefore will not run. A sleeping process has been shunted off to the side of the system, waiting for some future event.

A few rules for coding sleeps in a safe manner:

(1) Never sleep when you are running in an atomic context. Chapter 5 introduced atomic operations; an atomic context is simply a state in which multiple steps must be performed without any sort of concurrent access.

(2) Your driver cannot sleep while holding a spinlock, seqlock, or RCU lock. You also cannot sleep if you have disabled interrupts.

(3) It is legal to sleep while holding a semaphore, but you should look very carefully at any code that does so. If code sleeps while holding a semaphore, any other thread waiting for that semaphore also sleeps. Any sleep that happens while holding a semaphore should therefore be short, and you should convince yourself that, by holding the semaphore, you are not blocking the process that will eventually wake you up.

(4) When you wake up, you never know how long your process has been off the CPU or what has changed in the meantime. You also usually do not know whether another process was sleeping on the same event; that process may wake before you and grab whatever resource you were waiting for. As a result, you can make no assumptions about the state of the system after you wake up, and you must check that the condition you were waiting for is actually true.

(5) Your process cannot sleep unless it is assured that somebody else, somewhere, will wake it up. The code doing the waking must also be able to find your process in order to do its job. Making sure that a wake-up happens means knowing, for each sleep, which events will bring it to an end; making it possible for your sleeping process to be found is the job of a data structure called a wait queue.

A wait queue is just what it sounds like: a list of processes, all waiting for a specific event.

In Linux, a wait queue is managed through a "wait queue head", a structure of type wait_queue_head_t, which is defined in <linux/wait.h>.

A wait queue head can be defined and initialized statically with:

    DECLARE_WAIT_QUEUE_HEAD(name);

Or dynamically, as follows:

    wait_queue_head_t my_queue;
    init_waitqueue_head(&my_queue);

We will return to the structure of wait queues shortly, but we already know enough to take a first look at sleeping and waking up.

6.2.2 simple sleep

(1) Sleep:

Any process that sleeps must check, when it wakes up again, that the condition it was waiting for is really true. The simplest way of sleeping in the Linux kernel is a macro called wait_event (with a few variants); it combines handling the details of sleeping with a check on the condition the process is waiting for. The forms of wait_event are:

    wait_event(queue, condition)
    wait_event_interruptible(queue, condition)
    wait_event_timeout(queue, condition, timeout)
    wait_event_interruptible_timeout(queue, condition, timeout)

In all of these forms, queue is the wait queue head to use. Notice that it is passed "by value". The condition is an arbitrary Boolean expression that the macro evaluates before and after sleeping; the process continues to sleep until the condition evaluates to true. Note that the condition may be evaluated an arbitrary number of times, so it should not have any side effects.

If you use wait_event, your process is put into an uninterruptible sleep. The preferred alternative is wait_event_interruptible, which can be interrupted by signals. This version returns an integer value that you should check; a nonzero value means your sleep was interrupted by some sort of signal, and your driver should probably return -ERESTARTSYS.

The final versions (wait_event_timeout and wait_event_interruptible_timeout) wait for a limited time; after that time period (expressed in jiffies) expires, the macros return a value of 0 regardless of how the condition evaluates.

(2) Wake up:

The other half of the picture is waking up. Some other thread of execution (a different process, or perhaps an interrupt handler) has to perform the wake-up for you, since your process is asleep.

The basic function that wakes up sleeping processes is called wake_up. It comes in several forms (but we look at only two of them for now):

    void wake_up(wait_queue_head_t *queue);
    void wake_up_interruptible(wait_queue_head_t *queue);

wake_up wakes up all processes waiting on the given queue (the full picture is a little more complicated, as the later section on wake-up details shows). The other form (wake_up_interruptible) restricts itself to processes performing an interruptible sleep.

In practice the two are usually indistinguishable (if you are using interruptible sleeps); the convention is to use wake_up if you are using wait_event, and wake_up_interruptible if you are using wait_event_interruptible.

//Example:

Any process that attempts to read from this device is put to sleep. Whenever a process writes to the device, all sleeping processes are awakened.

This behavior is implemented with the following read and write methods:

    static DECLARE_WAIT_QUEUE_HEAD(wq);
    static int flag = 0;

    ssize_t sleepy_read (struct file *filp, char __user *buf, size_t count, loff_t *pos)
    {
        printk(KERN_DEBUG "process %i (%s) going to sleep\n",
               current->pid, current->comm);
        wait_event_interruptible(wq, flag != 0);
        flag = 0;
        printk(KERN_DEBUG "awoken %i (%s)\n", current->pid, current->comm);
        return 0; /* EOF */
    }

    ssize_t sleepy_write (struct file *filp, const char __user *buf, size_t count, loff_t *pos)
    {
        printk(KERN_DEBUG "process %i (%s) awakening the readers...\n",
               current->pid, current->comm);
        flag = 1;
        wake_up_interruptible(&wq);
        return count; /* succeed, to avoid retrial */
    }

Note the use of the flag variable in this example. Since wait_event_interruptible checks for a condition that must become true, we use flag to create that condition. Consider what happens if two processes are waiting when sleepy_write is called. Since sleepy_read resets flag to 0 once it wakes up, you might think that the second process to wake up would immediately go back to sleep. On a single-processor system, that is almost always what happens. But it is important to understand why you cannot count on that behavior. The wake_up_interruptible call wakes up both sleeping processes, and it is entirely possible that they both note that flag is nonzero before either has the opportunity to reset it. For this trivial module, this race condition is unimportant. In a real driver, this kind of race can create rare crashes that are difficult to diagnose. If correct operation required that exactly one process see the nonzero value, it would have to be tested in an atomic manner.
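The idea of testing the flag atomically can be sketched in user space with C11 atomics. This is only an illustrative analogue, not the sleepy module's code: data_ready, publish_flag, and claim_flag are hypothetical names, and inside the kernel one would use the kernel's own atomic primitives rather than <stdatomic.h>.

```c
#include <stdatomic.h>
#include <stdbool.h>

/* Hypothetical stand-in for the module's "flag" variable. */
static atomic_int data_ready;

/* Writer side: publish the event (the wake-up call would follow this). */
void publish_flag(void)
{
    atomic_store(&data_ready, 1);
}

/*
 * Reader side: read the old value and reset it to 0 in a single atomic step.
 * Only the caller that swaps out a 1 "wins"; any other awakened reader
 * gets false and should go back to sleep.
 */
bool claim_flag(void)
{
    return atomic_exchange(&data_ready, 0) == 1;
}
```

Because the read and the reset happen as one operation, two readers awakened together can never both observe the nonzero value.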

6.2.3 blocking and non blocking operation

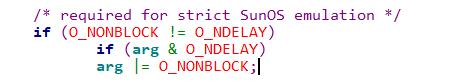

Explicitly nonblocking I/O is indicated by the O_NONBLOCK flag in filp->f_flags. The flag is defined in <linux/fcntl.h>, which is automatically included by <linux/fs.h>.

The flag gets its name from "open-nonblock", because it can be specified at open time (and originally could be specified only there).

If you browse the source code, you will find some references to an O_NDELAY flag; this is an alternate name for O_NONBLOCK, accepted for compatibility with System V code.

The flag is cleared by default, because the normal behavior of a process waiting for data is just to sleep.

In the case of a blocking operation, which is the default, the following behavior should be implemented in order to adhere to the standard semantics:

(1) If a process calls read but no data is (yet) available, the process must block. The process is awakened as soon as some data arrives, and that data is returned to the caller, even if there is less than the amount requested in the count argument to the method.

(2) If a process calls write and there is no space in the buffer, the process must block, and it must be on a different wait queue from the one used for reading. When some data has been written to the hardware device and space becomes free in the output buffer, the process is awakened and the write call succeeds, although the data may be only partially written if there is not room in the buffer for the count bytes that were requested.

Both of these statements assume that there are both input and output buffers; in practice, almost every device driver has them. The input buffer is required to avoid losing data that arrives when nobody is reading. In contrast, data cannot be lost on write, because if the system call does not accept data bytes, they remain in the user-space buffer. Even so, the output buffer is almost always useful for squeezing more performance out of the hardware.

The performance gain of implementing an output buffer in the driver results from the reduced number of context switches and user-level/kernel-level transitions. Without an output buffer (assuming a slow device), only one or a few characters are accepted by each system call, and while one process sleeps in write, another process runs (that is one context switch). When the first process is awakened, it resumes (another context switch), write returns (kernel/user transition), and the process reiterates the system call to write more data (user/kernel transition); the call blocks and the loop continues. Adding an output buffer allows the driver to accept larger chunks of data with each write call, with a corresponding increase in performance.

If the buffer is big enough, the write call succeeds on the first attempt (the buffered data is pushed out to the device later) without control needing to go back to user space for a second or third write call. Choosing a suitable size for the output buffer is clearly device-specific.

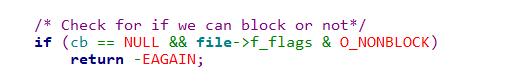

If O_NONBLOCK is specified, read and write behave differently. In this case, the calls simply return -EAGAIN ("try it again") if a process calls read when no data is available, or calls write when there is no space in the buffer.

As you might expect, nonblocking operations return immediately, allowing the application to poll for data. Applications must be careful when using the stdio functions on nonblocking files, because they can easily mistake a nonblocking return for EOF. They must always check errno.
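The difference between "no data yet" and end-of-file can be observed from user space, with an ordinary pipe standing in for a device. The sketch below is only an illustration; set_nonblocking and read_once are hypothetical helper names, not part of any driver or library.

```c
#include <errno.h>
#include <fcntl.h>
#include <stddef.h>
#include <unistd.h>

/* Add O_NONBLOCK to fd while preserving its other status flags. */
int set_nonblocking(int fd)
{
    int flags = fcntl(fd, F_GETFL);

    if (flags < 0)
        return -1;
    return fcntl(fd, F_SETFL, flags | O_NONBLOCK) < 0 ? -1 : 0;
}

/*
 * Perform a single read and report the outcome the way a driver would:
 * a positive byte count, 0 for a genuine EOF, or a negative errno value
 * (-EAGAIN meaning "nothing available right now, try again").
 */
long read_once(int fd, char *buf, size_t len)
{
    ssize_t n = read(fd, buf, len);

    if (n < 0)
        return -errno;
    return (long)n;
}
```

On an empty pipe whose read end is nonblocking, read_once returns -EAGAIN while a writer still exists; it returns 0 only after the write end has been closed and the data drained, which is the real EOF.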

Naturally, O_NONBLOCK is meaningful in the open method also. This happens when the call can actually block for a long time; for example, when opening (for read access) a FIFO that has no writers (yet), or accessing a disk file with a pending lock. Usually, opening a device either succeeds or fails, without the need to wait for external events. Sometimes, however, opening the device requires a long initialization, and you may choose to support O_NONBLOCK in your open method by returning -EAGAIN immediately if the flag is set, after starting the device initialization process.

The driver may also implement a blocking open to support access policies in a way similar to file locks. We will see one such implementation in "blocking open as an alternative to EBUSY" later in this chapter. Some drivers may also implement special semantics for O_NONBLOCK; for example, an open of a tape device usually blocks until a tape has been inserted. If the tape drive is opened with O_NONBLOCK, the open succeeds immediately, regardless of whether the media is present.

Only the read, write, and open file operations are affected by the nonblocking flag.

6.2.4 an example of blocking I/O

Within a driver, a process blocked in a read call is awakened when data arrives; usually the hardware issues an interrupt to signal such an event, and the driver awakens waiting processes as part of handling the interrupt. The scullpipe driver works differently, so that it can be run without requiring any particular hardware or an interrupt handler.

We chose to use another process to generate the data and wake the reading process; similarly, reading processes are used to wake writer processes that are waiting for buffer space to become available.

The device driver uses a device structure that contains two wait queues and a buffer. The size of the buffer is configurable in the usual ways (at compile time, load time, or run time).

    struct scull_pipe
    {
        wait_queue_head_t inq, outq;       /* read and write queues */
        char *buffer, *end;                /* begin of buf, end of buf */
        int buffersize;                    /* used in pointer arithmetic */
        char *rp, *wp;                     /* where to read, where to write */
        int nreaders, nwriters;            /* number of openings for r/w */
        struct fasync_struct *async_queue; /* asynchronous readers */
        struct semaphore sem;              /* mutual exclusion semaphore */
        struct cdev cdev;                  /* Char device structure */
    };

The read implementation manages both blocking and non blocking inputs, which looks like this:

    static ssize_t scull_p_read (struct file *filp, char __user *buf, size_t count, loff_t *f_pos)
    {
        struct scull_pipe *dev = filp->private_data;

        if (down_interruptible(&dev->sem))
            return -ERESTARTSYS;

        while (dev->rp == dev->wp) { /* nothing to read */
            up(&dev->sem); /* release the lock */
            if (filp->f_flags & O_NONBLOCK)
                return -EAGAIN;
            PDEBUG("\"%s\" reading: going to sleep\n", current->comm);
            if (wait_event_interruptible(dev->inq, (dev->rp != dev->wp)))
                return -ERESTARTSYS; /* signal: tell the fs layer to handle it */
            /* otherwise loop, but first reacquire the lock */
            if (down_interruptible(&dev->sem))
                return -ERESTARTSYS;
        }

        /* ok, data is there, return something */
        if (dev->wp > dev->rp)
            count = min(count, (size_t)(dev->wp - dev->rp));
        else /* the write pointer has wrapped, return data up to dev->end */
            count = min(count, (size_t)(dev->end - dev->rp));
        if (copy_to_user(buf, dev->rp, count)) {
            up (&dev->sem);
            return -EFAULT;
        }
        dev->rp += count;
        if (dev->rp == dev->end)
            dev->rp = dev->buffer; /* wrapped */
        up (&dev->sem);

        /* finally, awake any writers and return */
        wake_up_interruptible(&dev->outq);
        PDEBUG("\"%s\" did read %li bytes\n",current->comm, (long)count);
        return count;
    }

Let us look carefully at how scull_p_read handles the wait for data. The while loop tests the buffer with the device semaphore held. If there is data there, we know we can return it to the user immediately without sleeping, so the entire body of the loop is skipped. If, instead, the buffer is empty, we must sleep. Before we can do that, however, we must drop the device semaphore (this is the key point): if we were to sleep holding it, no writer would ever have the opportunity to wake us up. Once the semaphore has been dropped, we make a quick check to see if the user has requested nonblocking I/O, and return if so. Otherwise, it is time to call wait_event_interruptible.

Once we get past that call, something has woken us up, but we do not know what. One possibility is that the process received a signal. The if statement that contains the wait_event_interruptible call checks for this case. This statement ensures the proper and expected reaction to signals, which could have been responsible for waking up the process (since we were in an interruptible sleep). If a signal has arrived and it has not been blocked by the process, the proper behavior is to let upper layers of the kernel handle the event. To this end, the driver returns -ERESTARTSYS to the caller; this value is used internally by the virtual filesystem (VFS) layer, which either restarts the system call or returns -EINTR to user space. We use the same type of check to deal with signal handling for every read and write implementation.

However, even in the absence of a signal, we do not yet know for sure that there is data there for the taking. Somebody else could have been waiting for data as well, and they might win the race and get the data first. So we must acquire the device semaphore again; only then can we test the read buffer again (in the while loop) and truly know that we can return the data in the buffer to the user. The end result of all this code is that, when we exit from the while loop, we know that the semaphore is held and the buffer contains data that we can use.

Just for completeness, let us note that scull_p_read can sleep in another spot after we take the device semaphore: the call to copy_to_user. If scull sleeps while copying data between kernel and user space, it sleeps with the device semaphore held. Holding the semaphore in this case is justifiable, since it does not deadlock the system (we know that the kernel will perform the copy to user space and wake us up without trying to lock the same semaphore in the process), and since it is important that the device memory array not change while the driver sleeps.

6.2.5 advanced sleep

6.2.5.1 how does a process sleep

If we look inside <linux/wait.h>, we see that the data structure behind the wait_queue_head_t type is quite simple; it consists of a spinlock and a linked list. What goes onto that list is a wait queue entry, which is declared with the type wait_queue_t. This structure contains information about the sleeping process and exactly how it would like to be woken up.

The first step in putting a process to sleep is usually the allocation and initialization of a wait_queue_t structure, followed by its addition to the proper wait queue. When everything is in place, whoever is charged with doing the wake-up will be able to find the right processes. The next step is to set the state of the process to mark it as being asleep. Several task states are defined in <linux/sched.h>.

TASK_RUNNING means that the process is able to run, although it is not necessarily executing on the processor at any specific moment. Two states indicate that a process is asleep: TASK_INTERRUPTIBLE and TASK_UNINTERRUPTIBLE; they correspond to the two types of sleep. The other states are not normally of concern to driver writers.

    /*
     * Task state bitmask. NOTE! These bits are also
     * encoded in fs/proc/array.c: get_task_state().
     *
     * We have two separate sets of flags: task->state
     * is about runnability, while task->exit_state are
     * about the task exiting. Confusing, but this way
     * modifying one set can't modify the other one by
     * mistake.
     */
    #define TASK_RUNNING            0
    #define TASK_INTERRUPTIBLE      1
    #define TASK_UNINTERRUPTIBLE    2
    #define __TASK_STOPPED          4
    #define __TASK_TRACED           8
    /* in tsk->exit_state */
    #define EXIT_ZOMBIE             16
    #define EXIT_DEAD               32
    /* in tsk->state again */
    #define TASK_DEAD               64
    #define TASK_WAKEKILL           128
    #define TASK_WAKING             256
    #define TASK_PARKED             512
    #define TASK_STATE_MAX          1024

    #define TASK_STATE_TO_CHAR_STR "RSDTtZXxKWP"

    extern char ___assert_task_state[1 - 2*!!(
            sizeof(TASK_STATE_TO_CHAR_STR)-1 != ilog2(TASK_STATE_MAX)+1)];

    /* Convenience macros for the sake of set_task_state */
    #define TASK_KILLABLE           (TASK_WAKEKILL | TASK_UNINTERRUPTIBLE)
    #define TASK_STOPPED            (TASK_WAKEKILL | __TASK_STOPPED)
    #define TASK_TRACED             (TASK_WAKEKILL | __TASK_TRACED)

    /* Convenience macros for the sake of wake_up */
    #define TASK_NORMAL             (TASK_INTERRUPTIBLE | TASK_UNINTERRUPTIBLE)
    #define TASK_ALL                (TASK_NORMAL | __TASK_STOPPED | __TASK_TRACED)

    /* get_task_state() */
    #define TASK_REPORT             (TASK_RUNNING | TASK_INTERRUPTIBLE | \
                                     TASK_UNINTERRUPTIBLE | __TASK_STOPPED | \
                                     __TASK_TRACED | EXIT_ZOMBIE | EXIT_DEAD)
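Because the states are bit values, the convenience masks in the listing can be worked out by hand. The user-space sketch below copies a few values from the listing and assumes, as the "for the sake of wake_up" comment suggests, that a wake-up carries a state mask and only affects tasks whose state intersects it. wake_mode_matches is a hypothetical helper written for this note, not a kernel function.

```c
/* Values copied from the task state listing. */
#define TASK_RUNNING            0
#define TASK_INTERRUPTIBLE      1
#define TASK_UNINTERRUPTIBLE    2
#define TASK_WAKEKILL           128

#define TASK_KILLABLE           (TASK_WAKEKILL | TASK_UNINTERRUPTIBLE)      /* 130 */
#define TASK_NORMAL             (TASK_INTERRUPTIBLE | TASK_UNINTERRUPTIBLE) /* 3 */

/*
 * Hypothetical helper: returns 1 if a wake-up issued with wake_mode
 * would apply to a task currently in task_state, 0 otherwise.
 */
int wake_mode_matches(unsigned int task_state, unsigned int wake_mode)
{
    return (task_state & wake_mode) != 0;
}
```

Under that assumption, a mask of TASK_INTERRUPTIBLE skips a task in TASK_UNINTERRUPTIBLE, while TASK_NORMAL matches both kinds of sleeper and never matches a task that is already TASK_RUNNING.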

In the 2.6 kernel, driver code usually does not need to manipulate the process state directly. However, should you need to do so, the call to use is:

    void set_current_state(int new_state);

In older code, you often see something like this instead:

    current->state = TASK_INTERRUPTIBLE;

But changing current directly in this manner is discouraged; such code breaks easily when data structures change.

The above code does show, however, that changing the current state of a process does not, by itself, put it to sleep. By changing the current state, you have changed the way the scheduler treats the process, but you have not yet yielded the processor. Giving up the processor is the final step, but there is one thing to do first: you must check the condition you are sleeping for. Failure to do this check invites a race condition: what happens if the condition came true while you were engaged in the steps above, and some other thread has just tried to wake you up? You could miss the wake-up altogether and sleep longer than you had intended.

Consequently, down inside code that sleeps, you typically see something such as:

    if (!condition)
        schedule();

By checking our condition after setting the process state, we are covered against all possible sequences of events. If the condition we are waiting for came about before we set the process state, we notice in this check and do not actually sleep. If the wake-up happens afterwards, the process is made runnable whether or not we have actually gone to sleep yet.

The call to schedule is the way to invoke the scheduler and yield the CPU. Whenever you call this function, you are telling the kernel to consider which process should be running and to switch control to that process if necessary. So you never know how long it will be before schedule returns to your code.

After the if test and the possible call to (and return from) schedule, there is some cleanup to be done. Since the code no longer intends to sleep, it must ensure that the task state is reset to TASK_RUNNING. If the code just returned from schedule, this step is unnecessary; that function does not return until the process is in a runnable state. But if the call to schedule was skipped because it was no longer necessary to sleep, the process state will be incorrect. It is also necessary to remove the process from the wait queue, or it may be awakened more than once.
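Why the order "set the state, then test the condition" matters can be shown with a small, single-threaded model. This is a deliberately simplified sketch, not kernel code: every name in it (sim_task, sim_wake, sim_schedule_blocks, and the two sleep functions) is hypothetical, and the "race" is replayed by calling the waker at a fixed point in the sequence.

```c
#include <stdbool.h>

enum sim_state { SIM_RUNNING, SIM_SLEEPING };

struct sim_task {
    enum sim_state state;   /* what the scheduler would look at */
    bool condition;         /* the event being waited for */
};

/* Waker: make the condition true, then mark the task runnable. */
void sim_wake(struct sim_task *t)
{
    t->condition = true;
    t->state = SIM_RUNNING;
}

/* Model of schedule(): a task calling it while marked sleeping is stuck. */
bool sim_schedule_blocks(const struct sim_task *t)
{
    return t->state == SIM_SLEEPING;
}

/* Wrong order: test first, set the state afterwards. True means stuck. */
bool sleep_check_then_set(void)
{
    struct sim_task t = { SIM_RUNNING, false };
    bool must_sleep = !t.condition;   /* condition is still false here */

    sim_wake(&t);                     /* wake-up slips into the window */
    t.state = SIM_SLEEPING;           /* overwrites the waker's work */
    return must_sleep && sim_schedule_blocks(&t);
}

/* Right order: set the state first, then test. True means stuck. */
bool sleep_set_then_check(bool wake_before_check)
{
    struct sim_task t = { SIM_RUNNING, false };
    bool must_sleep, stuck;

    t.state = SIM_SLEEPING;           /* plays the role of set_current_state() */
    if (wake_before_check)
        sim_wake(&t);
    must_sleep = !t.condition;
    if (!wake_before_check)
        sim_wake(&t);                 /* between the test and schedule() */
    stuck = must_sleep && sim_schedule_blocks(&t);
    t.state = SIM_RUNNING;            /* cleanup after the sleep attempt */
    return stuck;
}
```

In the model, sleep_check_then_set loses the wake-up and ends up stuck, while sleep_set_then_check survives the wake-up arriving either before the test or between the test and the call to schedule.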

6.2.5.2 manual sleep

In previous versions of the Linux kernel, nontrivial sleeps required the programmer to handle all of the above steps manually. It was a tedious process involving a fair amount of error-prone boilerplate code.

Programmers can still code a manual sleep in that manner if they want to; <linux/sched.h> contains all the requisite definitions, and the kernel source abounds with examples. There is an easier way, however.

The first step is the creation and initialization of a wait queue entry. That is usually done with this macro:

    DEFINE_WAIT(my_wait);

Here my_wait is the name of the wait queue entry variable. You can also do things in two steps:

    wait_queue_t my_wait;
    init_wait(&my_wait);

But it is usually easier to put a DEFINE_WAIT line at the top of the loop that implements your sleep. The next step is to add your wait queue entry to the queue and set the process state. Both of those tasks are handled by this function:

    void prepare_to_wait(wait_queue_head_t *queue, wait_queue_t *wait, int state);

Here, queue and wait are the wait queue head and the process entry, respectively. state is the new state for the process; it should be either TASK_INTERRUPTIBLE (for interruptible sleeps, which is usually what you want) or TASK_UNINTERRUPTIBLE (for uninterruptible sleeps). After calling prepare_to_wait, the process can call schedule, once it has checked to be sure it still needs to wait. Once schedule returns, it is cleanup time. That task, too, is handled by a special function:

    void finish_wait(wait_queue_head_t *queue, wait_queue_t *wait);

Thereafter, your code can test its state and see if it needs to wait again. An example is long overdue. Previously we looked at the read method for scullpipe, which uses wait_event. The write method in the same driver does its waiting with prepare_to_wait and finish_wait instead. Normally you would not mix methods within a single driver in this way, but we did so in order to be able to show both ways of handling sleeps.

Look at the write method itself:

    /* How much space is free? */
    static int spacefree(struct scull_pipe *dev)
    {
        if (dev->rp == dev->wp)
            return dev->buffersize - 1;
        return ((dev->rp + dev->buffersize - dev->wp) % dev->buffersize) - 1;
    }

    static ssize_t scull_p_write(struct file *filp, const char __user *buf, size_t count, loff_t *f_pos)
    {
        struct scull_pipe *dev = filp->private_data;
        int result;

        if (down_interruptible(&dev->sem))
            return -ERESTARTSYS;

        /* Make sure there's space to write */
        result = scull_getwritespace(dev, filp);
        if (result)
            return result; /* scull_getwritespace called up(&dev->sem) */

        /* ok, space is there, accept something */
        count = min(count, (size_t)spacefree(dev));
        if (dev->wp >= dev->rp)
            count = min(count, (size_t)(dev->end - dev->wp)); /* to end-of-buf */
        else /* the write pointer has wrapped, fill up to rp-1 */
            count = min(count, (size_t)(dev->rp - dev->wp - 1));
        PDEBUG("Going to accept %li bytes to %p from %p\n", (long)count, dev->wp, buf);
        if (copy_from_user(dev->wp, buf, count)) {
            up (&dev->sem);
            return -EFAULT;
        }
        dev->wp += count;
        if (dev->wp == dev->end)
            dev->wp = dev->buffer; /* wrapped */
        up(&dev->sem);

        /* finally, awake any reader */
        wake_up_interruptible(&dev->inq); /* blocked in read() and select() */

        /* and signal asynchronous readers, explained late in chapter 5 */
        if (dev->async_queue)
            kill_fasync(&dev->async_queue, SIGIO, POLL_IN);
        PDEBUG("\"%s\" did write %li bytes\n",current->comm, (long)count);
        return count;
    }

This code looks similar to the read method, except that we have pushed the code that sleeps into a separate function called scull_getwritespace. Its job is to ensure that there is space in the buffer for new data, sleeping if need be until that space comes available. Once the space is there, scull_p_write can simply copy the user's data there, adjust the pointers, and wake up any processes that may have been waiting to read data.
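The pointer arithmetic in spacefree and in the two count = min(...) steps is easier to follow with concrete numbers. The sketch below is a user-space model that uses offsets instead of the driver's char pointers; ring_model and the ring_* functions are hypothetical names introduced for illustration only.

```c
#include <stddef.h>

/* Offsets into a buffer of `size` bytes; rp == wp means "empty". */
struct ring_model {
    size_t size;
    size_t rp;   /* next offset to read from */
    size_t wp;   /* next offset to write to */
};

static size_t min_sz(size_t a, size_t b)
{
    return a < b ? a : b;
}

/* Same formula as spacefree(): one slot stays unused so full != empty. */
size_t ring_spacefree(const struct ring_model *r)
{
    if (r->rp == r->wp)
        return r->size - 1;
    return ((r->rp + r->size - r->wp) % r->size) - 1;
}

/* Bytes a single write call would accept (never crosses the buffer end). */
size_t ring_write_chunk(const struct ring_model *r, size_t count)
{
    count = min_sz(count, ring_spacefree(r));
    if (r->wp >= r->rp)
        return min_sz(count, r->size - r->wp);   /* up to end of buffer */
    return min_sz(count, r->rp - r->wp - 1);     /* wrapped: up to rp-1 */
}

/* Bytes a single read call would return; the caller ensures rp != wp. */
size_t ring_read_chunk(const struct ring_model *r, size_t count)
{
    if (r->wp > r->rp)
        return min_sz(count, r->wp - r->rp);
    return min_sz(count, r->size - r->rp);       /* data up to buffer end */
}
```

With an 8-byte buffer, an empty ring reports 7 free bytes; with rp at 3 and wp at 7 there are 3 free bytes, but a single write accepts only 1 because it stops at the end of the buffer, which is why a write call may return less than was requested.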

The code to handle the actual sleep is:

    /* Wait for space for writing; caller must hold device semaphore. On
     * error the semaphore will be released before returning. */
    static int scull_getwritespace(struct scull_pipe *dev, struct file *filp)
    {
        while (spacefree(dev) == 0) { /* full */
            DEFINE_WAIT(wait);

            up(&dev->sem);
            if (filp->f_flags & O_NONBLOCK)
                return -EAGAIN;
            PDEBUG("\"%s\" writing: going to sleep\n", current->comm);
            prepare_to_wait(&dev->outq, &wait, TASK_INTERRUPTIBLE);
            if (spacefree(dev) == 0)
                schedule();
            finish_wait(&dev->outq, &wait);
            if (signal_pending(current))
                return -ERESTARTSYS; /* signal: tell the fs layer to handle it */
            if (down_interruptible(&dev->sem))
                return -ERESTARTSYS;
        }
        return 0;
    }

Note once again the containing while loop. If space is available without sleeping, this function simply returns. Otherwise, it must drop the device semaphore and wait.

The code uses DEFINE_WAIT to set up a wait queue entry and prepare_to_wait to get ready for the actual sleep. Then comes the obligatory check on the buffer; we must handle the case in which space becomes available in the buffer after we have entered the while loop (and dropped the semaphore) but before we put ourselves onto the wait queue. Without that check, if the reader processes were able to completely empty the buffer in that time, we could miss the only wake-up we would ever get and sleep forever. Having satisfied ourselves that we must sleep, we call schedule.

- It is worth looking again at this case: what happens if the wake-up occurs between the test in the if statement and the call to schedule?

In that case, all is well. The wake-up resets the process state to TASK_RUNNING and schedule returns, although not necessarily right away. As long as the test happens after the process has put itself on the wait queue and changed its state, things will work. To finish up, we call finish_wait. The call to signal_pending tells us whether we were awakened by a signal; if so, we need to return to the user and let them try again later. Otherwise, we reacquire the semaphore and test again for free space as usual.

6.2.5.3 exclusive waits

We have seen that when a process calls wake_up on a wait queue, all processes waiting on that queue are made runnable. In many cases, that is the correct behavior. In others, however, it is possible to know ahead of time that only one of the processes being awakened will succeed in obtaining the desired resource, and the rest will simply have to sleep again. Each one of those processes, however, has to obtain the processor, contend for the resource (and any governing locks), and explicitly go back to sleep.

If the number of processes in the wait queue is large, this "thundering herd" behavior can seriously degrade the performance of the system. In response, kernel developers added an "exclusive wait" option to the kernel. An exclusive wait acts very much like a normal sleep, with two important differences:

(1) When a wait queue entry has the WQ_FLAG_EXCLUSIVE flag set, it is added to the end of the wait queue. Entries without that flag are, instead, added to the beginning.

(2) When wake_up is called on a wait queue, it stops after waking the first process that has the WQ_FLAG_EXCLUSIVE flag set.

The end result is that processes performing exclusive waits are awakened one at a time, in an orderly manner, and do not create thundering herds. The kernel still wakes up all nonexclusive waiters every time, however.

Employing exclusive waits within a driver is worth considering if two conditions are met: you expect significant contention for a resource, and waking a single process is sufficient to completely consume the resource when it becomes available.

Exclusive waits work well for the Apache web server, for example; when a new connection comes in, exactly one of the (often many) Apache processes on the system should wake up to deal with it.

We did not use exclusive waits in the scullpipe driver, however; it is rare to see readers contending for data (or writers for buffer space), and we cannot know that one reader, once awakened, will consume all of the available data.

Putting a process into an exclusive wait is a simple matter of calling prepare_to_wait_exclusive:

    void prepare_to_wait_exclusive(wait_queue_head_t *queue, wait_queue_t *wait, int state);

This call, when used in place of prepare_to_wait, sets the "exclusive" flag in the wait queue entry and adds the process to the end of the wait queue.

Note that, in the kernel version the book covers, there is no way to perform exclusive waits with wait_event and its variants.

6.2.5.4 wake up details

The view of the wake-up process presented so far is simpler than what really happens inside the kernel. The actual behavior that results when a process is awakened is controlled by a function stored in the wait queue entry.

The default wake-up function sets the process into a runnable state and possibly performs a context switch to that process if it has a higher priority. Device drivers should never need to supply a different wake-up function; should yours prove to be the exception, see <linux/wait.h> for information on how to do it. We have not yet seen all the variations of wake_up. Most driver writers never need the others, but, for completeness, here is the full set:

    wake_up(wait_queue_head_t *queue);
    wake_up_interruptible(wait_queue_head_t *queue);

wake_up awakens every process on the queue that is not in an exclusive wait, and exactly one exclusive waiter, if it exists. wake_up_interruptible does the same, except that it skips over processes in an uninterruptible sleep. These functions can, before returning, cause one or more of the awakened processes to be scheduled (although this does not happen if they are called from an atomic context).

    wake_up_nr(wait_queue_head_t *queue, int nr);
    wake_up_interruptible_nr(wait_queue_head_t *queue, int nr);

These functions perform similarly to wake_up, except that they can awaken up to nr exclusive waiters instead of just one.

Note that passing 0 is interpreted as asking for all of the exclusive waiters to be awakened, rather than none of them.
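The interaction between queue order, the exclusive flag, and the nr argument can be modelled in a few lines of user-space C. This is a simplified sketch of the behavior described in these notes, not the kernel's implementation: sim_waiter, SIM_FLAG_EXCLUSIVE, and simq_wake are hypothetical names, woken entries are not removed from the queue, and the interruptible/uninterruptible filter is left out.

```c
#include <stddef.h>

#define SIM_FLAG_EXCLUSIVE 0x01   /* stands in for WQ_FLAG_EXCLUSIVE */

struct sim_waiter {
    unsigned int flags;
    int awake;        /* set to 1 when the walk wakes this entry */
};

/*
 * Walk the queue from head to tail, waking entries as we go.
 * Nonexclusive entries (kept at the head) are always woken; the walk
 * stops once nr_exclusive exclusive entries have been woken.
 * With nr_exclusive == 0 the counter never reaches zero after the
 * decrement, so the stop condition is never met and all entries wake.
 * Returns the number of entries woken.
 */
size_t simq_wake(struct sim_waiter *queue, size_t len, int nr_exclusive)
{
    size_t i, woken = 0;

    for (i = 0; i < len; i++) {
        queue[i].awake = 1;
        woken++;
        if ((queue[i].flags & SIM_FLAG_EXCLUSIVE) && --nr_exclusive == 0)
            break;
    }
    return woken;
}
```

For a queue holding two nonexclusive waiters followed by three exclusive ones, a count of 1 (the wake_up case) wakes three entries, a count of 2 wakes four, and a count of 0 wakes all five.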

    wake_up_all(wait_queue_head_t *queue);
    wake_up_interruptible_all(wait_queue_head_t *queue);

This form of wake_up awakens all processes, whether they are performing an exclusive wait or not (though the interruptible form still skips processes doing uninterruptible waits).

    wake_up_interruptible_sync(wait_queue_head_t *queue);

Normally, a process that is awakened may preempt the current process and be scheduled into the processor before wake_up returns. In other words, a call to wake_up may not be atomic.

If the process calling wake_up is running in an atomic context (it holds a spinlock, for example, or is an interrupt handler), this rescheduling does not happen.

Normally, that protection is adequate. If, however, you need to explicitly ask not to be scheduled out of the processor at this time, you can use the "sync" variant of wake_up_interruptible.

This function is most often used when the caller is about to reschedule anyway, and it is more efficient to simply finish what little work remains first.

If all of the above is not entirely clear on a first reading, don't worry. Very few drivers ever need to call anything except wake_up_interruptible.

6.2.5.5 ancient history: sleep_on

If you spend any time digging through the kernel source, you will likely encounter two functions that we have neglected to discuss so far:

    void sleep_on(wait_queue_head_t *queue);
    void interruptible_sleep_on(wait_queue_head_t *queue);

These functions unconditionally put the current process to sleep on the given queue. They are strongly deprecated, however, and you should never use them.

The problem is obvious if you think about it: sleep_on offers no way to protect against race conditions. There is always a window between when your code decides it must sleep and when sleep_on actually effects that sleep.

A wake-up that arrives during that window is missed. For this reason, code that calls sleep_on is never entirely safe.

At the time the book was written, the plan was to remove sleep_on and its variants (there are a couple of timeout forms we have not shown) from the kernel in the not-too-distant future.

6.2.6 test the scullpipe driver

We have seen how the scullpipe driver implements blocking I/O. If you wish to try it out, the source to this driver can be found with the rest of the book's examples.

Blocking I/O in action can be seen by opening two windows. The first can run a command such as cat /dev/scullpipe.

If you then, in another window, copy a file to /dev/scullpipe, you should see that file's contents appear in the first window.

Testing nonblocking activity is trickier, because the conventional programs available to a shell do not perform nonblocking operations. The misc-progs source directory contains the following simple program, called nbtest. All it does is copy its input to its output, using nonblocking I/O and delaying between retries. The delay time is passed on the command line and is one second by default.

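    #include <stdio.h>
    #include <unistd.h>
    #include <fcntl.h>
    #include <stdlib.h>
    #include <errno.h>

    char buffer[4096];

    int main(int argc, char **argv)
    {
        int delay = 1, n, m = 0;

        if (argc > 1)
            delay = atoi(argv[1]);
        /* switch both standard streams to nonblocking mode */
        fcntl(0, F_SETFL, fcntl(0,F_GETFL) | O_NONBLOCK); /* stdin */
        fcntl(1, F_SETFL, fcntl(1,F_GETFL) | O_NONBLOCK); /* stdout */

        while (1) {
            n = read(0, buffer, 4096);
            if (n >= 0)
                m = write(1, buffer, n);
            /* EAGAIN only means "try again later"; anything else is fatal */
            if ((n < 0 || m < 0) && (errno != EAGAIN))
                break;
            sleep(delay);
        }
        perror(n < 0 ? "stdin" : "stdout");
        exit(1);
    }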

If you run this program under a process tracking tool, such as strace, you can see the success or failure of each operation, depending on whether data is available when the operation is performed