If you want to use selenium to realize the functions of B station automatic login and click like, you can check how to solve the sliding unlocking. However, it's about the content of the crawler, and then you start to learn about the crawler. Before long, you want to make the website that records your life, so your friends recommend the layui framework. After a night, you think it's a framework for back-end programmers to get started. Vue feels too difficult, and starts to make Bo Otstrap, didn't come up with a reason. Because of idle mood, I began to learn reptiles again. Every time I write an article, I always read a paragraph in pieces. Someone in the comment area said that I was hypocritical, which is true.

http request returns response object property

Coding problems

import requests

r=requests.get('http://www.baidu.com/')

r.encoding='gbk' or r.encoding=r.apparent_encoding

#The content of the page returned by Baidu is ISO-8859-1 encoded. If it is not set to gbk, it will be garbled

print(response.text)

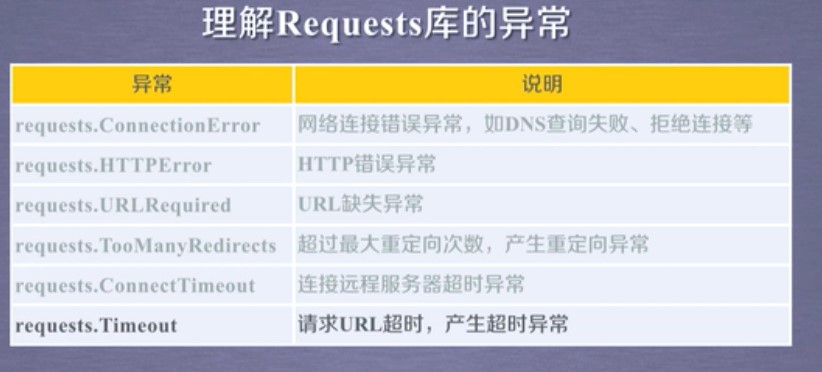

Library Exception Handling of requests

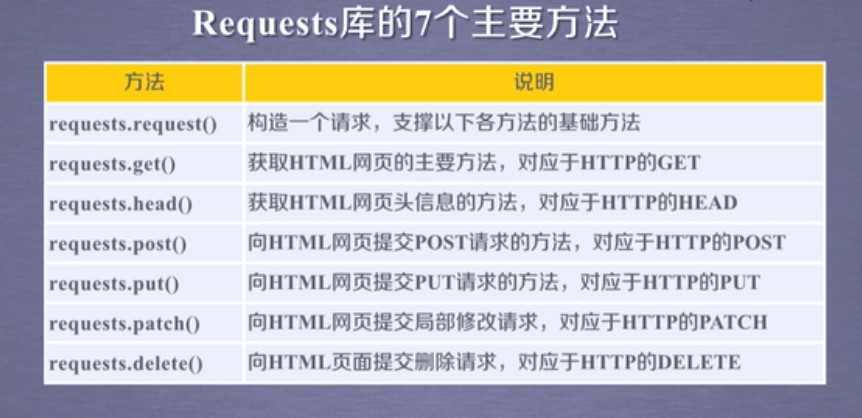

Main methods of requests Library

1 import requests

2 r = requests.get('https://www.cnblogs.com/')

3 r = requests.head('http://httpbin.org/get')

4 r = requests.post('http://httpbin.org/post',key='value')

5 r = requests.put('http://httpbin.org/put',key='value')

6 r = requests.patch('http://httpbin.org/patch',key='value')

7 r = requests.options('http://httpbin.org/get')

8 r = requests.delete('http://httpbin.org/delete')

General framework for crawling web pages

1 import requests

2 def get_Html(url):

3 try:

4 r = requests.get(url,timeout=30)

5 r.raise_for_status()

6 r.encoding=r.apparent_encoding

7 return r.text

8 except:

9 return "raise an exception"

10

11 if __name__=="__main__":

12 url = "http://www.baidu.com"

13 print(get_Html(url))

A few small cases

Case 1 Jingdong commodity crawling import requests url = 'https://item.jd.com/100010131982.html' try: r = requests.get(url) r.raise_for_status() r.encoding=r.apparent_encoding print(r.text[:1000]) #1000 Represents the first 1000 characters of interception except: print("Crawl failure") //Case 2 Amazon import requests url = 'https://www.amazon.cn/dp/B08531C6PV/ref=s9_acsd_hps_bw_c2_x_1_i?pf_rd_m=A1U5RCOVU0NYF2&pf_rd_s=merchandised-search-top-3&pf_rd_r=XXHRT6R61ZYZA5FGMPKJ&pf_rd_t=101&pf_rd_p=b2e55b79-7940-4444-967a-6dbe6d7cb574&pf_rd_i=1935403071' try: kv = {'user-agent':'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/79.0.3945.117 Safari/537.36'} #Simulation browser r= requests.get(url,headers = kv) r.raise_for_status() r.encoding=r.apparent_encoding print(r.text[1000:2000]) except: print("Crawl failure") //Case 300 degrees import requests kv = {'wd':'python'} r = requests.get('http://www.baidu.com/s',params=kv) print(r.requests.url) print(len(r.text))

When I learn something new, I will update it. I remember when I first learned it, I started to post it. Now I look at the foundation honestly

To be continued!